Chapter 10 Bayesian Models of Cognition

📍 Where we are in the Bayesian modeling workflow: Chs. 1–8 used the Bayesian statistical framework as a tool to model cognitive processes in the matching-pennies task. This chapter introduces a distinct scientific claim: the Bayesian cognition hypothesis — the proposal that the mind itself operates according to Bayes’ theorem. We use our Bayesian statistical tools to test whether this hypothesis fits human behaviour in a social learning task, applying the full validation battery from Chs. 5–7. The chapter also introduces Leave-Future-Out cross-validation for sequential models, a technique flagged in Ch. 7 but not developed there.

10.1 A Terminological Clarification

Before proceeding, one potential source of confusion deserves explicit attention. This entire course has used Bayesian statistics as its inferential framework: we represent uncertainty as probability distributions, write likelihoods, specify priors, and obtain posteriors via Stan. That is our tool.

This chapter introduces a distinct scientific claim: the Bayesian cognition hypothesis. This is the proposal that the mind itself operates according to principles analogous to Bayes’ theorem — that people represent beliefs as probability distributions, update them optimally when new evidence arrives, and combine information sources in proportion to their reliability. Whether this hypothesis is empirically correct is an open and contested question. We use our Bayesian statistical tools to test it.

Keeping these two senses of “Bayesian” separate is essential for clean scientific thinking.

10.2 Introduction

The human mind constantly receives input from multiple sources: direct sensory evidence, social information from others, and prior knowledge built from experience. A fundamental question in cognitive science is how these disparate pieces of information are combined to produce coherent beliefs about the world.

The Bayesian framework offers a powerful approach to modelling this process. Under this framework, the mind is conceptualised as a probabilistic machine that continuously updates its beliefs based on new evidence. This contrasts with rule-based or purely associative models by emphasising:

- Representations of uncertainty: Beliefs are represented as probability distributions, not single values.

- Optimal integration: Information is combined according to its reliability.

- Prior knowledge: New evidence is interpreted in light of existing beliefs.

Formally, Bayes’ theorem states:

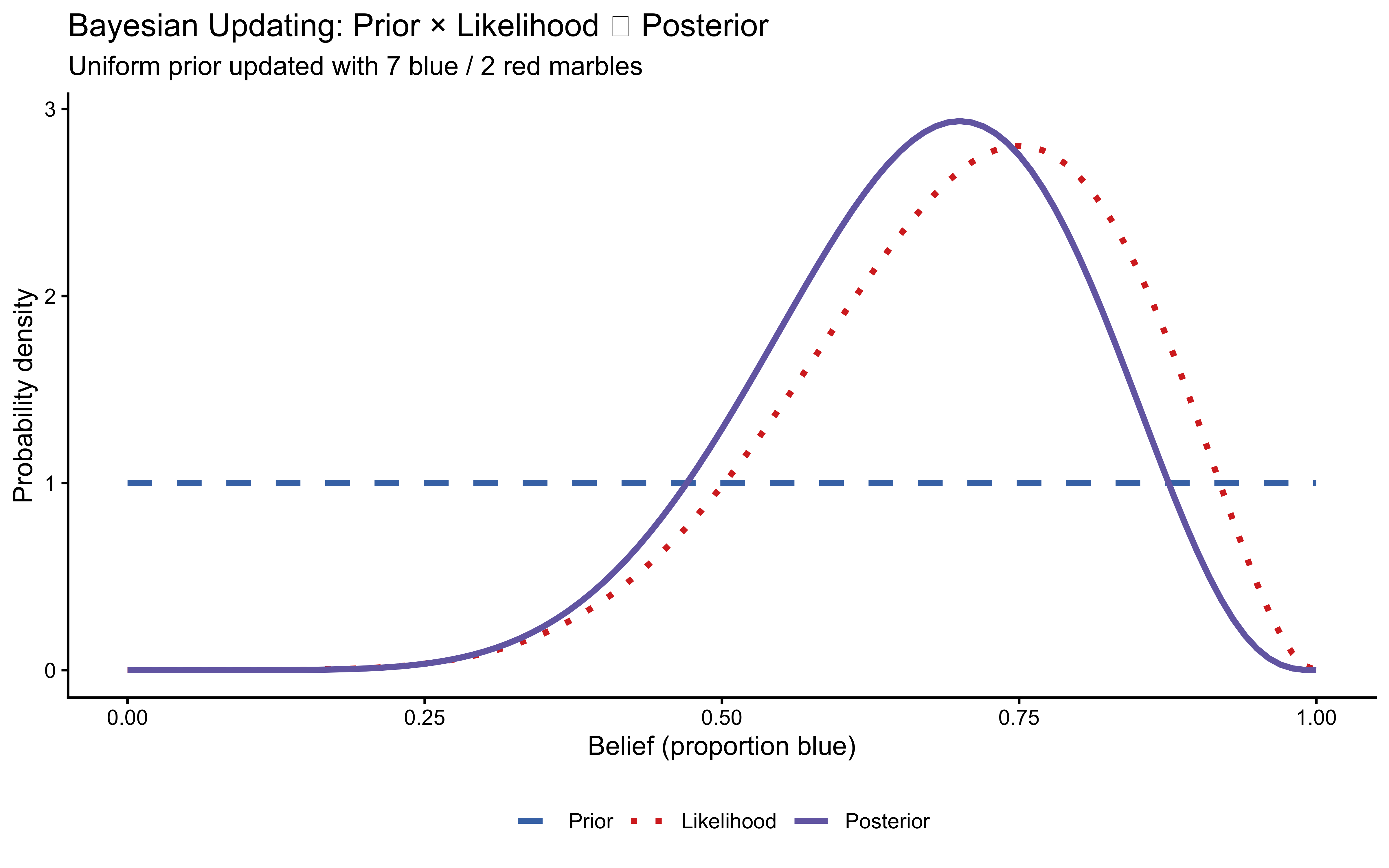

\[P(\text{belief} \mid \text{evidence}) \propto P(\text{evidence} \mid \text{belief}) \times P(\text{belief})\]

where \(P(\text{belief} \mid \text{evidence})\) is the posterior, \(P(\text{evidence} \mid \text{belief})\) is the likelihood, and \(P(\text{belief})\) is the prior. The \(\propto\) symbol denotes proportionality — the product on the right must be normalised by dividing by \(P(\text{evidence})\) to recover a proper probability. In practice, this normalisation constant is handled automatically when we work with conjugate families such as the Beta distribution.

Note: In a more traditional formulation, Bayes’ theorem is written: \[P(\text{belief} \mid \text{evidence}) = \frac{P(\text{evidence} \mid \text{belief}) \times P(\text{belief})}{P(\text{evidence})}\] The normalising denominator \(P(\text{evidence})\) ensures the posterior integrates to one. Because this constant does not depend on the belief parameter, it cancels in most computations — hence the proportionality shorthand.

In cognitive terms, this means people integrate new information with existing knowledge, giving more weight to reliable information sources and less weight to unreliable ones.

10.3 Learning Objectives

After completing this chapter, you will be able to:

- Understand the basic principles of Bayesian information integration and distinguish the Bayesian cognition hypothesis from the Bayesian statistics toolkit.

- Implement three cognitive models — a Simple Bayesian Agent (SBA), a Proportional Bayesian Agent (PBA), and a Weighted Bayesian Agent (WBA) — in raw Stan, and understand the distinct cognitive claim encoded by each.

- Execute the full Bayesian workflow (prior predictive checks → SBC → fitting → diagnostics → LOO-PIT → sensitivity analysis → model comparison) on cognitive models.

- Identify when standard LOO-CV is invalid and know the leave-future-out remedy for sequential data.

- Extend both models to multilevel structures that capture individual differences.

10.4 Chapter Roadmap

This chapter follows the same three-phase workflow established in Chapters 5–7:

- Phase 1 — Generative Plausibility: Prior predictive checks confirm that our priors generate cognitively plausible behaviour before any data are observed.

- Phase 2 — Model Criticism and Robustness: Posterior predictive checks, LOO-PIT, and sensitivity analysis assess how well the fitted model captures the data and how sensitive conclusions are to prior choice.

- Phase 3 — Computational Validation: Parameter recovery and Simulation-Based Calibration (SBC) certify that the Stan sampler is unbiased and can recover the true parameters from data generated by our models.

Note: Phase 3 (SBC) is presented before Phase 2 (empirical diagnostics) in this chapter, mirroring the logical dependency: we validate the computational engine on simulated data before trusting inferences on real or agent-generated data.

Critical precondition (from Chapter 7): Model comparison is only scientifically meaningful if all candidate models have individually passed their quality checks. We apply this discipline here.

10.5 The Bayesian Framework for Cognition

Bayesian models of cognition explore the idea that the mind operates according to principles similar to Bayes’ theorem, combining different sources of evidence to form updated beliefs. Most commonly this is framed in terms of prior beliefs being updated with new evidence to form a posterior.

10.5.1 Visualising Bayesian Updating

x <- seq(0, 1, by = 0.01)

prior <- dbeta(x, 1, 1)

likelihood <- dbeta(x, 7, 3)

posterior <- dbeta(x, 8, 4)

# 1. Define the length explicitly outside the tibble

n_x <- length(x)

plot_data <- tibble(

x = rep(x, 3),

density = c(prior, likelihood, posterior),

distribution = factor(

# 2. Use the explicit length variable here

rep(c("Prior", "Likelihood", "Posterior"), each = n_x),

levels = c("Prior", "Likelihood", "Posterior")

)

)

ggplot(plot_data, aes(x = x, y = density,

colour = distribution, linetype = distribution)) +

geom_line(linewidth = 1.2) +

scale_colour_manual(

values = c("Prior" = "#4575b4", "Likelihood" = "#d73027",

"Posterior" = "#756bb1")

) +

scale_linetype_manual(

values = c("Prior" = "dashed", "Likelihood" = "dotted",

"Posterior" = "solid")

) +

labs(

title = "Bayesian Updating: Prior × Likelihood ∝ Posterior",

subtitle = "Uniform prior updated with 7 blue / 2 red marbles",

x = "Belief (proportion blue)",

y = "Probability density",

colour = NULL, linetype = NULL

) +

theme(legend.position = "bottom")

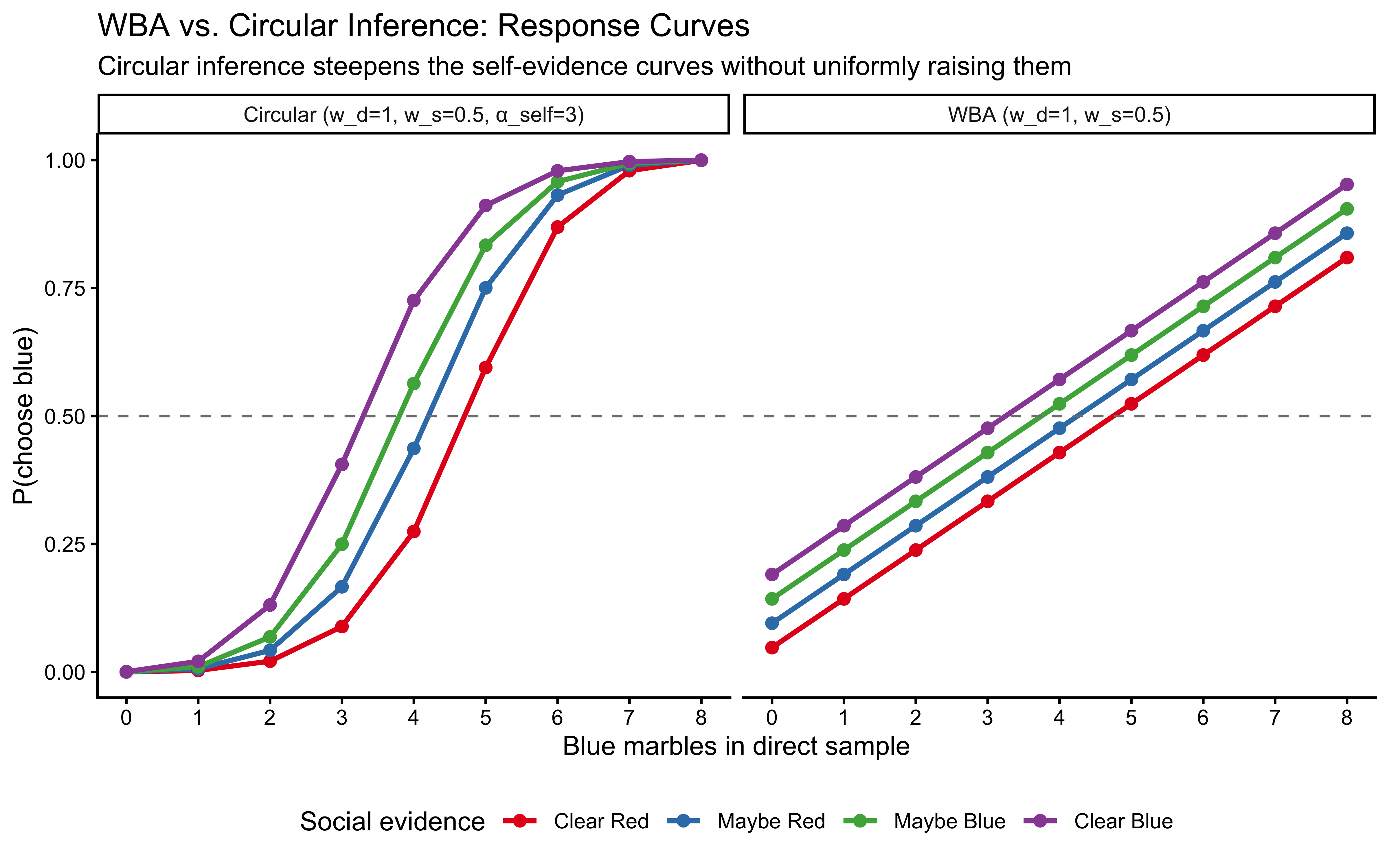

The posterior is narrower than either source alone — combining information increases certainty. Its peak lies between prior and likelihood, but closer to the likelihood because the evidence was relatively strong. The bottom line: Bayesian integration is not averaging; it is precision-weighted combination.

10.5.2 Bayesian Models in Cognitive Science

Bayesian cognitive models have been successfully applied to:

- Perception: combining multi-sensory cues (visual, auditory, tactile) into a unified percept.

- Learning: updating beliefs from observation and instruction.

- Decision-making: weighing different evidence sources when making choices.

- Social cognition: integrating others’ opinions with one’s own knowledge.

- Psychopathology: understanding schizophrenia and autism in terms of atypical Bayesian inference — hyper-precise priors, reduced likelihood weighting, or altered social learning.

10.7 The Three Models

This chapter develops and compares three competing models of evidence integration, ordered by increasing flexibility.

10.7.1 Model 1: Simple Bayesian Agent (SBA)

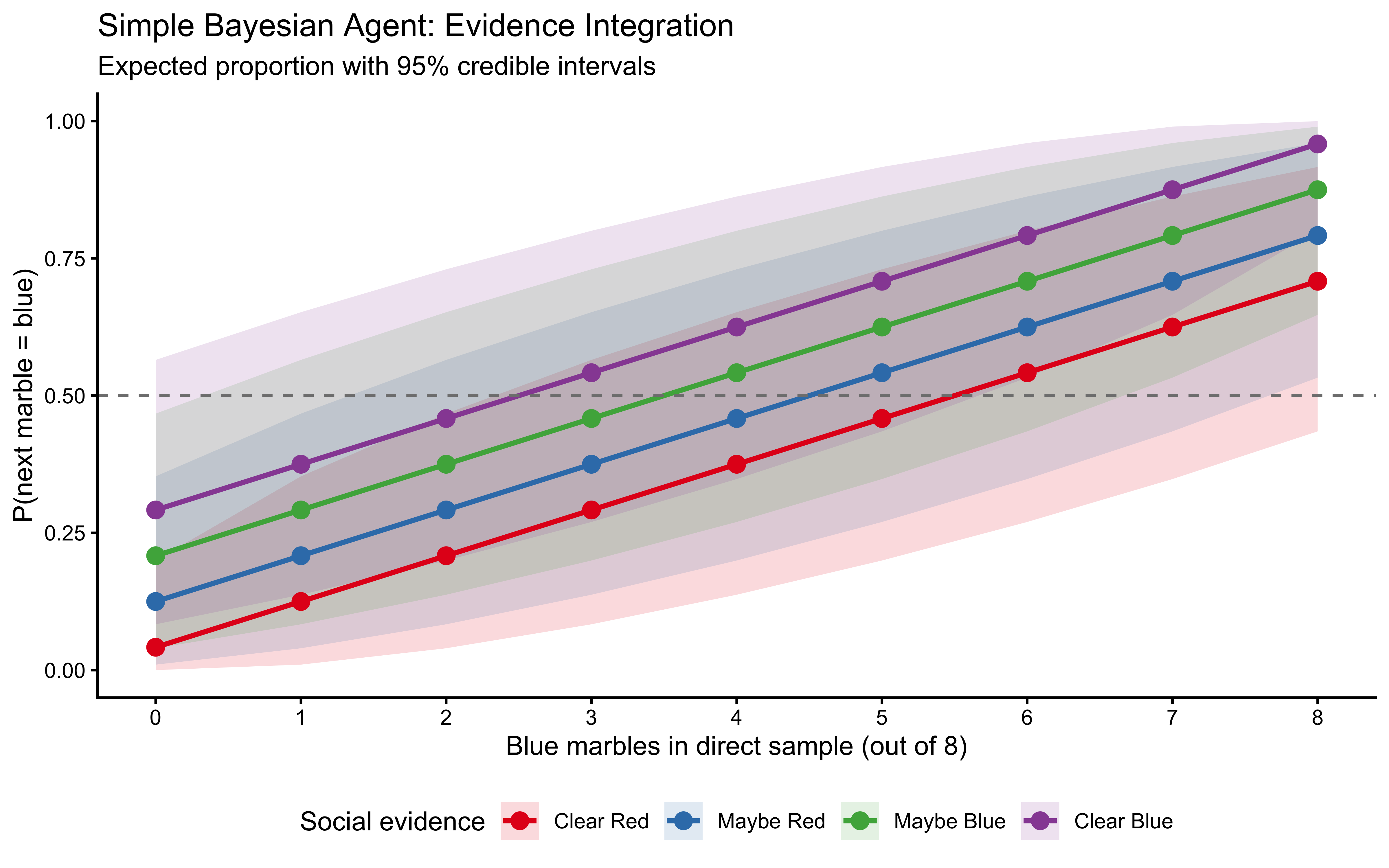

The SBA assigns unit weight per pseudo-marble to both evidence sources — meaning each observed (or encoded) marble contributes exactly one pseudocount regardless of its origin. Because direct and social evidence have different encoded sample sizes (\(n_1 = 8\) vs \(n_2 = 3\)), the SBA is not symmetric in its maximum influence: direct evidence can shift the posterior up to 2.67× more than social evidence. Its only structural choice is the Jeffreys prior pseudo-count \(\alpha_0 = \beta_0 = 0.5\). For trial \(i\):

\[y_i \sim \text{BetaBinomial}(1,\ \alpha_i,\ \beta_i)\] \[\alpha_i = 0.5 + k_{1,i} + k_{2,i}\] \[\beta_i = 0.5 + (n_{1,i} - k_{1,i}) + (n_{2,i} - k_{2,i})\]

The SBA has no free parameters — it is a fully specified prediction machine.

10.7.2 Model 2: Proportional Bayesian Agent (PBA)

The PBA encodes the hypothesis that trust is a zero-sum attentional budget: whatever weight is allocated to direct evidence is subtracted from social evidence. A single free parameter \(p \in [0, 1]\) governs this allocation:

\[y_i \sim \text{BetaBinomial}(1,\ \alpha_i,\ \beta_i)\] \[\alpha_i = 0.5 + p \cdot k_{1,i} + (1-p) \cdot k_{2,i}\] \[\beta_i = 0.5 + p \cdot (n_{1,i} - k_{1,i}) + (1-p) \cdot (n_{2,i} - k_{2,i})\]

where \(p \to 1\) means social evidence is ignored; \(p \to 0\) means direct evidence is ignored. The PBA has one free parameter: \(p\). Note that \(p = 0.5\) does not produce equal influence from both sources, because the two pools have different sizes: at \(p = 0.5\), direct evidence contributes up to \(0.5 \times 8 = 4\) pseudocounts while social evidence contributes at most \(0.5 \times 3 = 1.5\). True equal maximum contribution from both sources would require \(p \cdot 8 = (1-p) \cdot 3\), yielding \(p \approx 0.27\). While \(p = 0.5\) does recover behaviour structurally close to the SBA (preserving proportional weighting within each source), it should not be read as “balanced” integration.

Cognitive interpretation of the PBA: The PBA treats integration as a competition between two evidence sources. The total evidence budget is fixed at 1 — an agent cannot simultaneously overweight both sources. This contrasts with the WBA below, where the two sources are evaluated independently.

10.7.3 Model 3: Weighted Bayesian Agent (WBA)

The WBA relaxes the zero-sum constraint. The two weight parameters \(w_d > 0\) and \(w_s > 0\) scale direct and social evidence independently:

\[y_i \sim \text{BetaBinomial}(1,\ \alpha_i,\ \beta_i)\] \[\alpha_i = 0.5 + w_d \cdot k_{1,i} + w_s \cdot k_{2,i}\] \[\beta_i = 0.5 + w_d \cdot (n_{1,i} - k_{1,i}) + w_s \cdot (n_{2,i} - k_{2,i})\]

A weight of 1.0 means the agent treats each pseudo-marble at face value; less than 1.0 means downweighting (scepticism); greater than 1.0 means overweighting. Negative weights are not assigned a prior — they would imply anti-correlated updating, which has no cognitive interpretation in this task. One interpretive caution: because social evidence is encoded as total2 = 3 pseudo-marbles when the actual sample was 8, a participant who correctly intuits that the other person saw 8 marbles might rationally apply \(w_s \approx 8/3 \approx 2.67\) simply to compensate for the encoding approximation, not because they genuinely over-trust the social source. Recovered \(w_s\) values should therefore be read as trust relative to the encoding, not as an absolute measure of social reliance.

The WBA has two free parameters: \(w_d\) and \(w_s\).

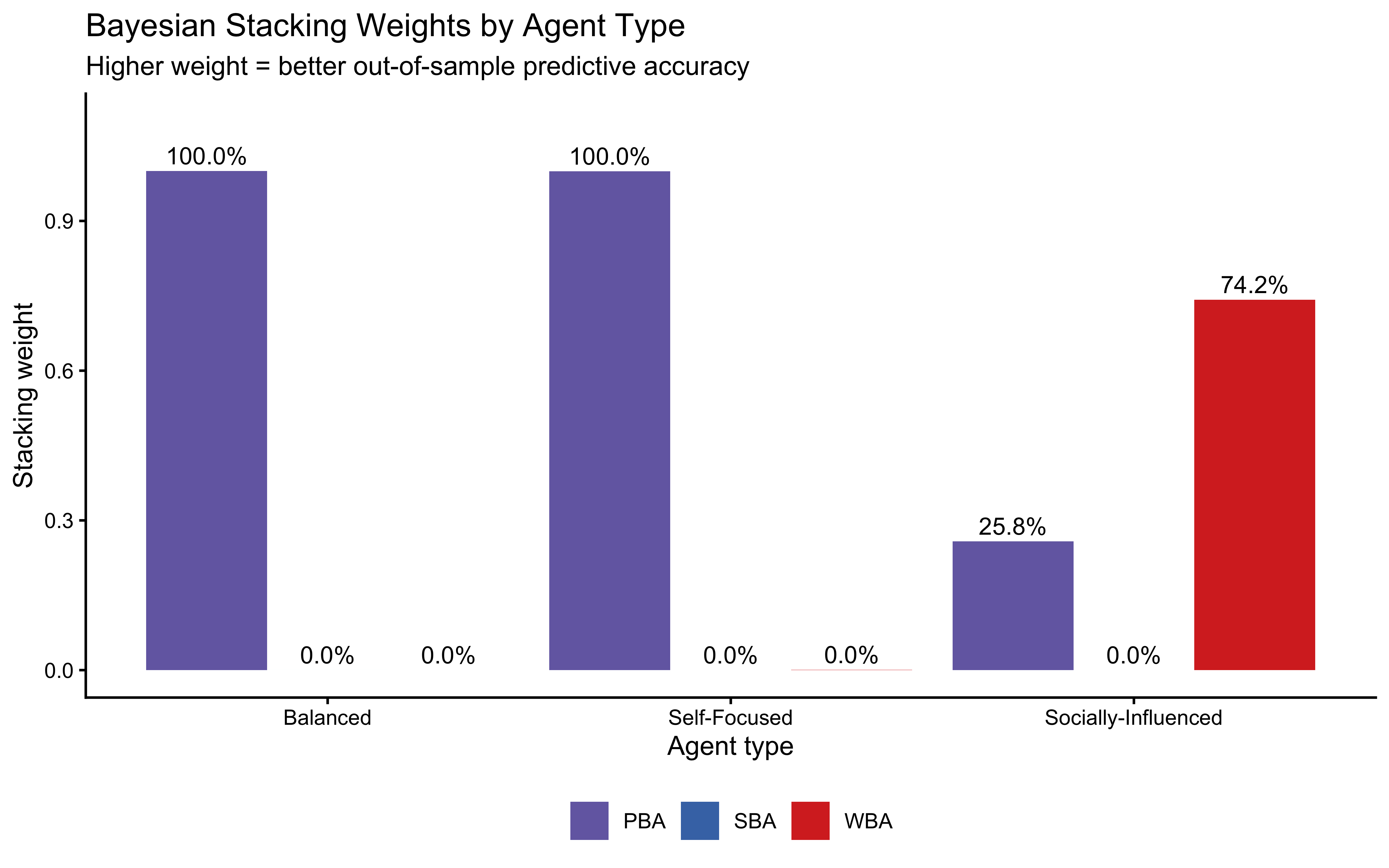

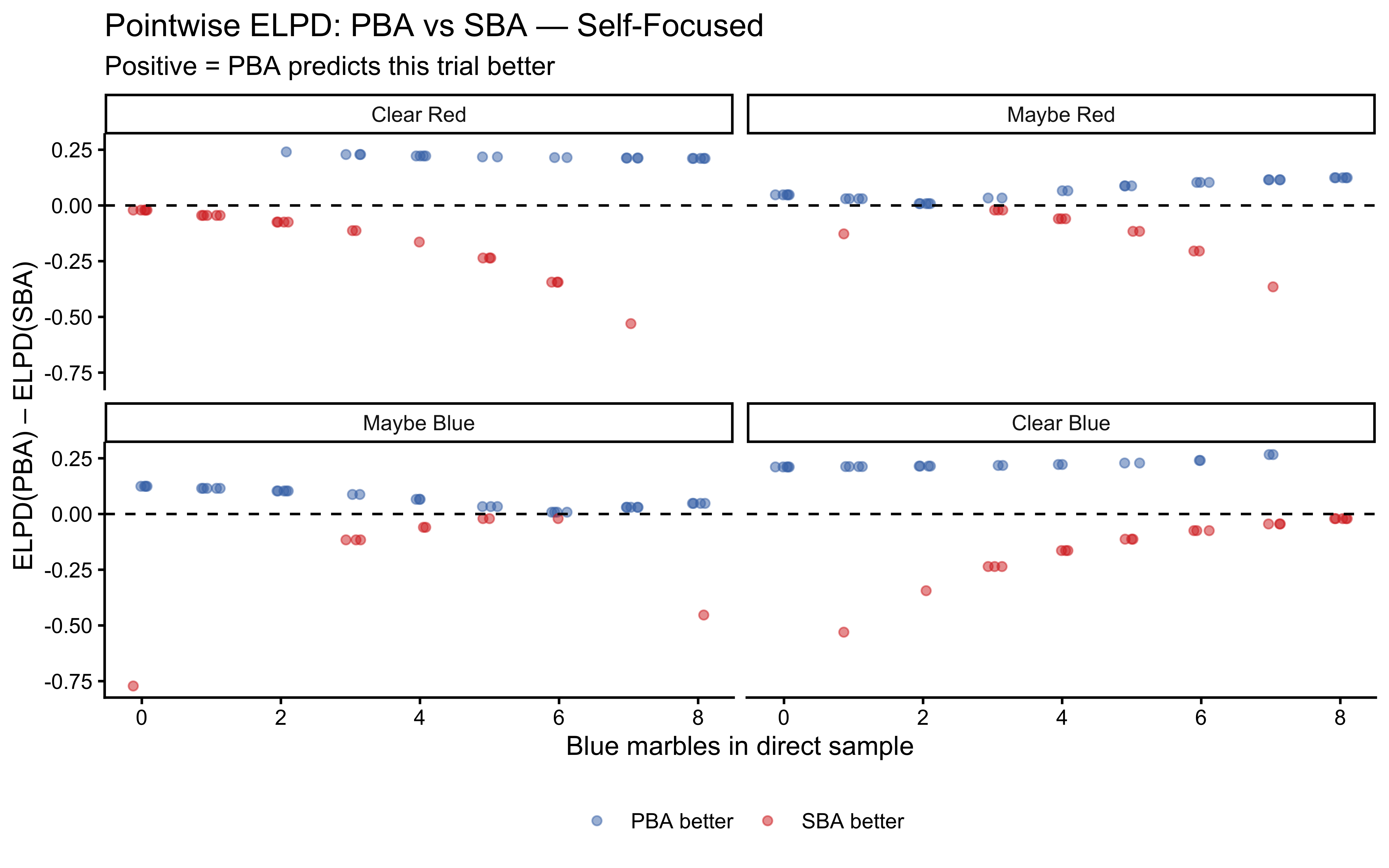

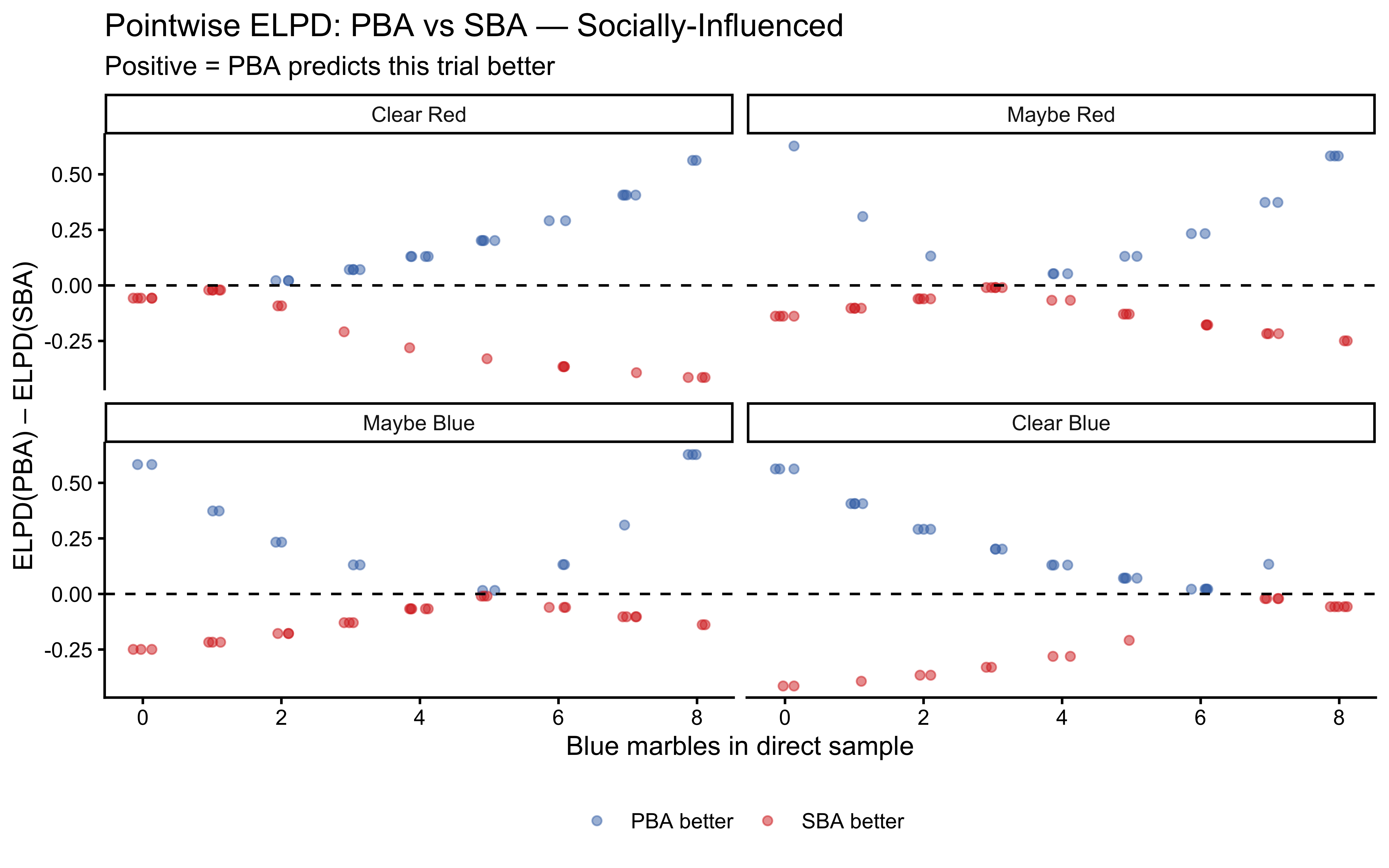

Comparing the three models: The SBA fixes both weights at 1 (0 free parameters). The PBA allows differential allocation but not differential scaling (1 free parameter). The WBA allows both allocation and scaling to vary independently (2 free parameters). LOO model comparison will arbitrate which level of flexibility the data support. Each model encodes a distinct cognitive hypothesis, not merely a different number of parameters.

Identifiability note — WBA: \(w_d\) and \(w_s\) are jointly identified by the differential pattern of choices across trials where direct and social evidence conflict. With the full grid of 9 × 4 evidence combinations (repeated), both parameters are well-separated in the likelihood. We verify this computationally in the SBC section.

Identifiability note — PBA: \(p\) is identified whenever direct and social evidence point in conflicting directions. With 9 × 4 evidence combinations, the PBA is well identified. We verify this in SBC below.

Extension: estimating the effective sample size of social evidence. Rather than fixing

total2 = 3, one can treat the effective social sample size \(n_s > 0\) as a free parameter and let the data determine how many marble-equivalents the social signal is worth. Keeping \(k_{2,i}\) as the ordinal level (0–3) and floating \(n_s\) as the denominator, the WBA becomes: \[\alpha_i = 0.5 + w_d \cdot k_{1,i} + w_s \cdot k_{2,i}\] \[\beta_i = 0.5 + w_d \cdot (8 - k_{1,i}) + w_s \cdot (n_s - k_{2,i})\] with, for example, \(n_s \sim \text{Half-Normal}(0, 8)\), reflecting that the other person’s true sample was at most 8 marbles. In Stan,n_sis declared asreal<lower=3>(lower-bounded to prevent \(n_s < k_{2,i} = 3\)) and its log-prior added totarget. One practical caution: \(n_s\) and \(w_s\) are partially collinear — only the products \(w_s \cdot k_{2,i}\) and \(w_s \cdot (n_s - k_{2,i})\) appear in the likelihood. A strong prior on \(n_s\), or fixing it and freeing only \(w_s\), is advisable unless the evidence grid is sufficiently dense to separate them via SBC.

10.8 R Implementations

Before touching Stan, we implement the models in R to build intuition and generate simulated agent data.

10.8.1 The Beta-Binomial Integration Function

# Compute the posterior Beta parameters and summary statistics

# for a single trial of evidence integration.

#

# alpha_prior = beta_prior = 0.5: Jeffreys prior (parameterisation-invariant).

# Returns: list with alpha_post, beta_post, expected_rate,

# ci_lower, ci_upper, choice (based on expected_rate > 0.5)

betaBinomialModel <- function(alpha_prior = 0.5, beta_prior = 0.5,

blue1, total1, blue2, total2,

w_direct = 1, w_social = 1) {

alpha_post <- alpha_prior + w_direct * blue1 + w_social * blue2

beta_post <- beta_prior + w_direct * (total1 - blue1) +

w_social * (total2 - blue2)

expected_rate <- alpha_post / (alpha_post + beta_post)

ci_lower <- qbeta(0.025, alpha_post, beta_post)

ci_upper <- qbeta(0.975, alpha_post, beta_post)

list(

alpha_post = alpha_post,

beta_post = beta_post,

expected_rate = expected_rate,

ci_lower = ci_lower,

ci_upper = ci_upper,

choice = ifelse(expected_rate > 0.5, "Blue", "Red")

)

}10.8.2 Simulating the Evidence Space

total1 <- 8 # direct evidence sample size (constant)

total2 <- 3 # social evidence total signals (constant)

scenarios <- expand_grid(

blue1 = seq(0, 8, 1),

blue2 = seq(0, 3, 1)

) |>

mutate(red1 = total1 - blue1, red2 = total2 - blue2)

# SBA predictions (w_direct = w_social = 1, Jeffreys prior)

sim_data <- scenarios |>

pmap_dfr(function(blue1, red1, blue2, red2) {

result <- betaBinomialModel(0.5, 0.5, blue1, total1, blue2, total2)

tibble(

blue1 = blue1,

blue2 = blue2,

expected_rate = result$expected_rate,

ci_lower = result$ci_lower,

ci_upper = result$ci_upper,

choice = result$choice

)

}) |>

mutate(

social_evidence = factor(

blue2, levels = 0:3,

labels = c("Clear Red", "Maybe Red", "Maybe Blue", "Clear Blue")

)

)10.8.3 Visualising Bayesian Integration

ggplot(sim_data,

aes(x = blue1, y = expected_rate,

colour = social_evidence, group = social_evidence)) +

geom_ribbon(aes(ymin = ci_lower, ymax = ci_upper,

fill = social_evidence), alpha = 0.15, colour = NA) +

geom_line(linewidth = 1) +

geom_point(size = 3) +

geom_hline(yintercept = 0.5, linetype = "dashed", colour = "grey50") +

scale_x_continuous(breaks = 0:8) +

scale_colour_brewer(palette = "Set1") +

scale_fill_brewer(palette = "Set1") +

coord_cartesian(ylim = c(0, 1)) +

labs(

title = "Simple Bayesian Agent: Evidence Integration",

subtitle = "Expected proportion with 95% credible intervals",

x = "Blue marbles in direct sample (out of 8)",

y = "P(next marble = blue)",

colour = "Social evidence",

fill = "Social evidence"

) +

theme(legend.position = "bottom")

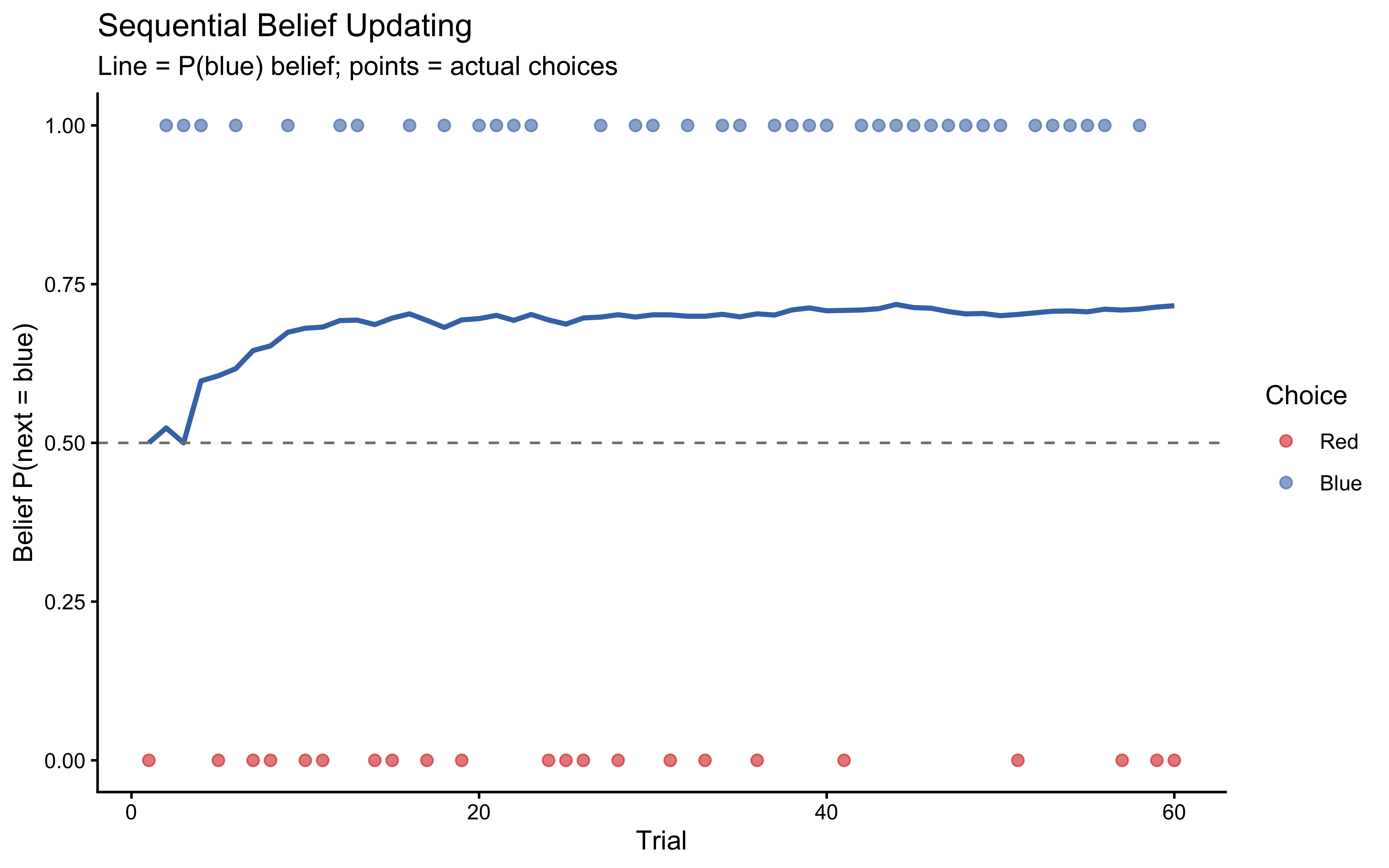

The influence of social evidence is greatest when direct evidence is ambiguous (near 4 blue marbles) and weakest at the extremes. This is the signature Bayesian property: stronger evidence dominates weaker evidence.

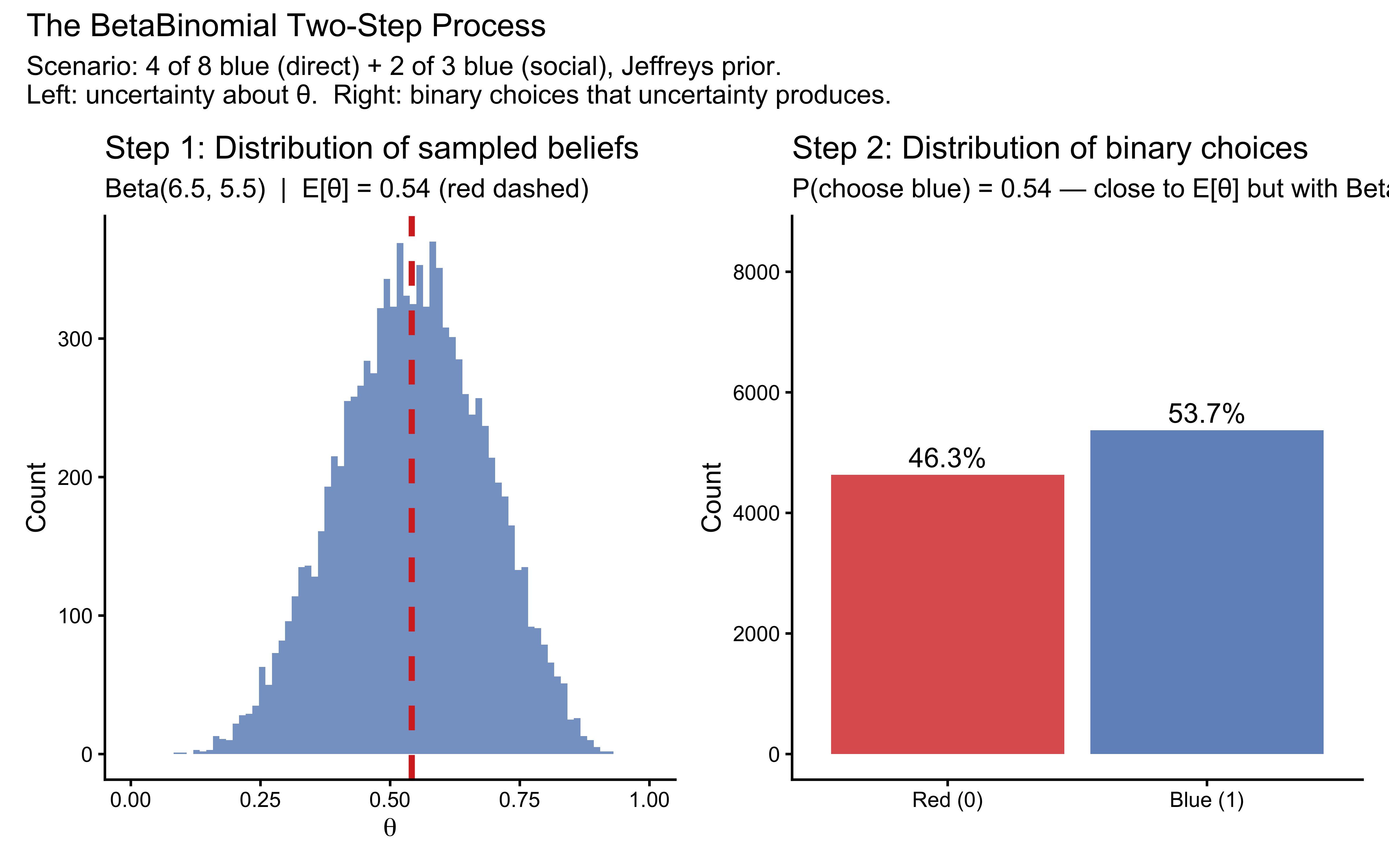

10.8.4 How the BetaBinomial Generates a Distribution of Choices

The plot above shows expected rates — the posterior mean of \(\theta\) for each evidence combination. This is useful for intuition but obscures something important about how the model actually generates behaviour.

The BetaBinomial likelihood is not a deterministic threshold rule. It describes a two-step stochastic process:

- Draw a belief: Sample the true proportion \(\theta \sim \text{Beta}(\alpha, \beta)\), representing genuine uncertainty about the jar’s composition.

- Draw a choice: Given that \(\theta\), sample a binary choice \(y \sim \text{Bernoulli}(\theta)\).

The consequence is that even when the posterior mean is 0.70 in favour of blue, the agent will not choose blue exactly 70% of the time in a perfectly mechanical way. There is variability from two sources simultaneously: uncertainty about what the jar proportion actually is (step 1), and the irreducible randomness of acting on that belief with a single guess (step 2). This variability is not noise added on top of the model — it is the model. The Beta distribution encodes what a rational agent should believe; the Bernoulli draw captures the randomness of a single decision.

This two-stage structure has a measurable implication: the BetaBinomial has greater variance than a Binomial with the same mean. This excess variance is called overdispersion, and it arises precisely because \(\theta\) must itself be sampled from the Beta rather than being known.

# Illustrate the two-step BetaBinomial sampling for a single scenario.

# Scenario: 4 blue of 8 direct + 2 blue of 3 social (Jeffreys prior)

alpha_demo <- 0.5 + 4 + 2 # = 6.5

beta_demo <- 0.5 + 4 + 1 # = 5.5

n_sims <- 10000

set.seed(42)

# Step 1: sample theta from the posterior Beta

theta_draws <- rbeta(n_sims, alpha_demo, beta_demo)

# Step 2: sample choice from Bernoulli(theta)

choice_draws <- rbinom(n_sims, 1, theta_draws)

expected_rate_demo <- alpha_demo / (alpha_demo + beta_demo)

p_theta <- tibble(theta = theta_draws) |>

ggplot(aes(x = theta)) +

geom_histogram(bins = 80, fill = "#4575b4", alpha = 0.7) +

geom_vline(xintercept = expected_rate_demo,

colour = "#d73027", linewidth = 1.2, linetype = "dashed") +

scale_x_continuous(limits = c(0, 1)) +

labs(

title = "Step 1: Distribution of sampled beliefs",

subtitle = sprintf(

"Beta(%.1f, %.1f) | E[θ] = %.2f (red dashed)",

alpha_demo, beta_demo, expected_rate_demo

),

x = expression(theta),

y = "Count"

)

p_choice <- tibble(

choice = factor(choice_draws, levels = c(0, 1),

labels = c("Red (0)", "Blue (1)"))

) |>

ggplot(aes(x = choice, fill = choice)) +

geom_bar(alpha = 0.8) +

geom_text(

stat = "count",

aes(label = scales::percent(after_stat(count / sum(count)), accuracy = 0.1)),

vjust = -0.4, size = 4

) +

scale_fill_manual(

values = c("Red (0)" = "#d73027", "Blue (1)" = "#4575b4")

) +

coord_cartesian(ylim = c(0, n_sims * 0.85)) +

labs(

title = "Step 2: Distribution of binary choices",

subtitle = sprintf(

"P(choose blue) = %.2f — close to E[θ] but with BetaBinomial overdispersion",

mean(choice_draws)

),

x = NULL, y = "Count", fill = NULL

) +

theme(legend.position = "none")

p_theta + p_choice +

plot_annotation(

title = "The BetaBinomial Two-Step Process",

subtitle = paste(

"Scenario: 4 of 8 blue (direct) + 2 of 3 blue (social), Jeffreys prior.",

"\nLeft: uncertainty about θ. Right: binary choices that uncertainty produces."

)

)

The left panel shows that even in a relatively informative scenario (11 total observations), there is genuine uncertainty about \(\theta\). The right panel shows that this uncertainty propagates into choices: the agent does not produce a deterministic 68% blue rate but a distribution of outcomes whose spread exceeds what a Binomial with \(p = 0.68\) would produce.

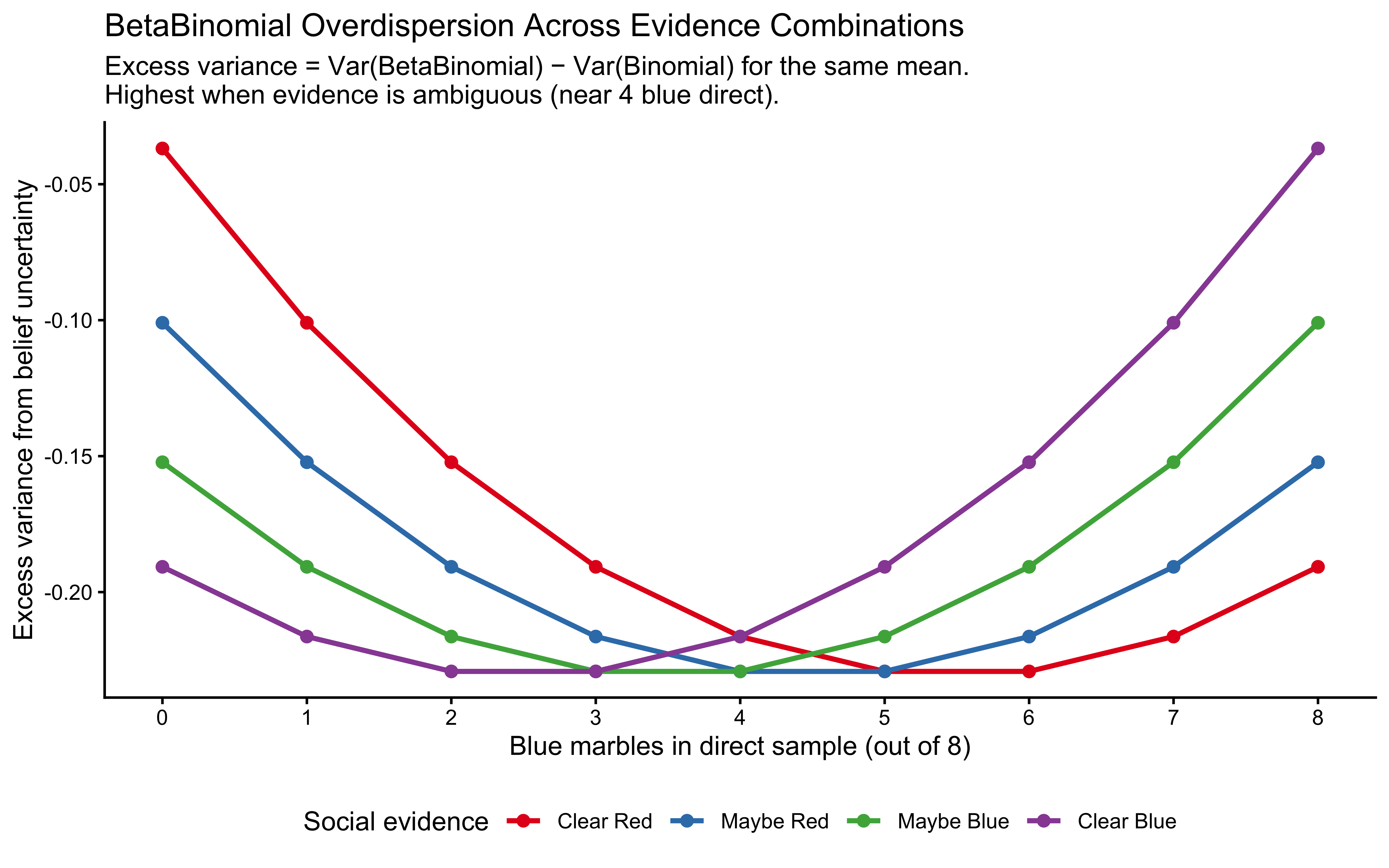

# Quantify overdispersion across the evidence grid.

# BetaBinomial variance vs Binomial variance for the same expected rate.

# Excess = fingerprint of belief uncertainty.

overdispersion_data <- expand_grid(

blue1 = 0:8,

blue2 = 0:3

) |>

mutate(

alpha_p = 0.5 + blue1 + blue2,

beta_p = 0.5 + (8 - blue1) + (3 - blue2),

theta_hat = alpha_p / (alpha_p + beta_p),

# BetaBinomial variance for a single Bernoulli draw (n=1)

var_bb = (alpha_p * beta_p) /

((alpha_p + beta_p)^2 * (alpha_p + beta_p + 1)),

# Binomial variance (Bernoulli)

var_binom = theta_hat * (1 - theta_hat),

excess = var_bb - var_binom,

social_evidence = factor(

blue2, levels = 0:3,

labels = c("Clear Red", "Maybe Red", "Maybe Blue", "Clear Blue")

)

)

ggplot(overdispersion_data,

aes(x = blue1, y = excess,

colour = social_evidence, group = social_evidence)) +

geom_line(linewidth = 1) +

geom_point(size = 2) +

scale_colour_brewer(palette = "Set1") +

scale_x_continuous(breaks = 0:8) +

labs(

title = "BetaBinomial Overdispersion Across Evidence Combinations",

subtitle = paste(

"Excess variance = Var(BetaBinomial) − Var(Binomial) for the same mean.",

"\nHighest when evidence is ambiguous (near 4 blue direct)."

),

x = "Blue marbles in direct sample (out of 8)",

y = "Excess variance from belief uncertainty",

colour = "Social evidence"

) +

theme(legend.position = "bottom")

Overdispersion is greatest when evidence is ambiguous — near 4 blue marbles direct, where the Beta posterior is widest and \(\theta\) is least constrained. This has a concrete implication for model checking: if real participants produce choices that are more variable than a Binomial with \(p = \hat{\theta}\) would predict, that is not a misspecification — it is exactly what the BetaBinomial predicts. If choices are less variable (more deterministic than the model allows), the LOO-PIT will show a hump-shaped histogram, signalling that a threshold decision rule rather than probabilistic sampling better describes the data.

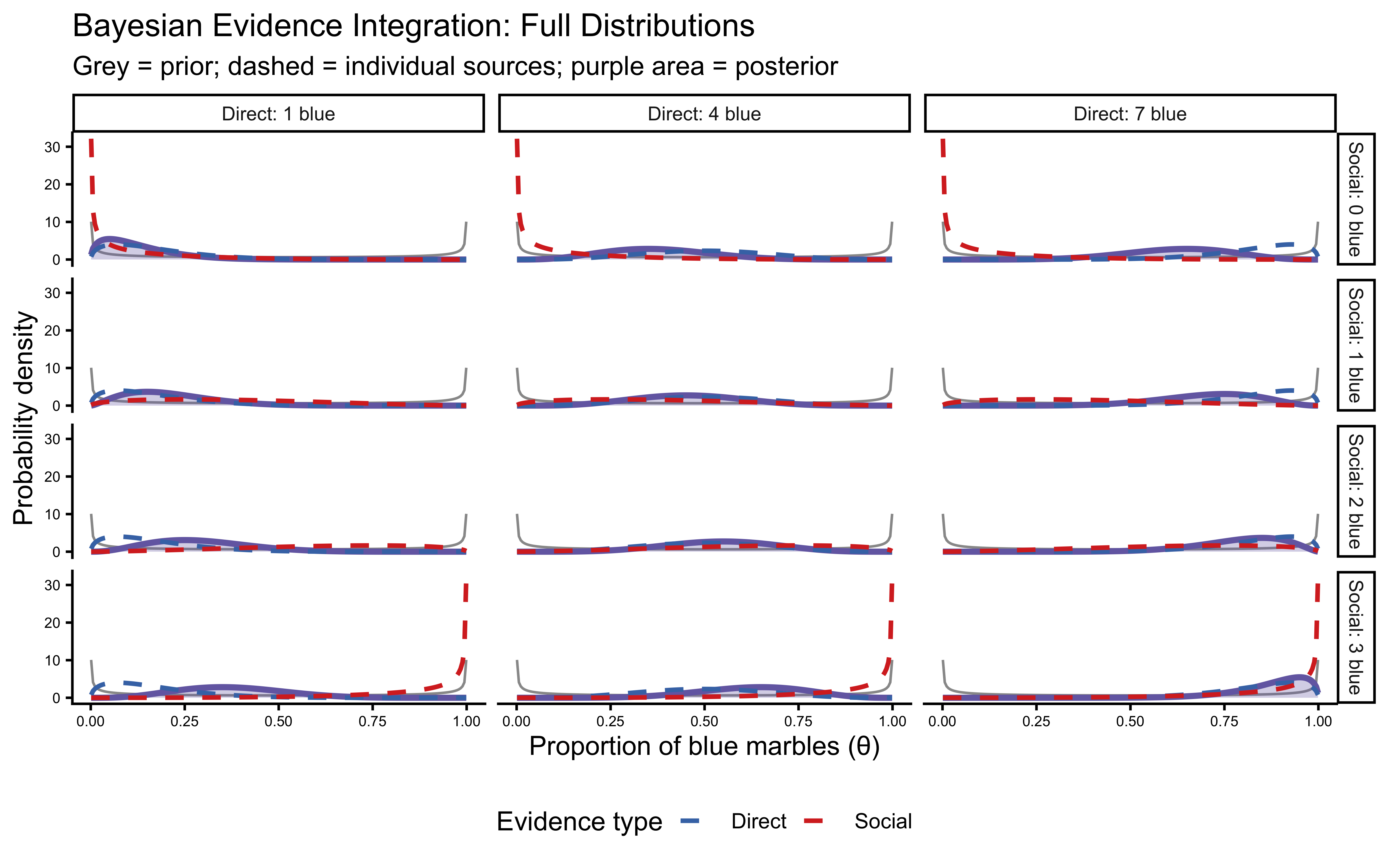

10.8.5 Examining Belief Distributions for Selected Scenarios

# Jeffreys prior: alpha0 = beta0 = 0.5

simpleBayesianDistributions <- function(blue1, red1, blue2, red2) {

theta <- seq(0.001, 0.999, length.out = 200)

tibble(

theta = theta,

prior = dbeta(theta, 0.5, 0.5),

direct = dbeta(theta, 0.5 + blue1, 0.5 + red1),

social = dbeta(theta, 0.5 + blue2, 0.5 + red2),

posterior = dbeta(theta, 0.5 + blue1 + blue2, 0.5 + red1 + red2)

)

}

selected_scenarios <- expand_grid(

blue1 = c(1, 4, 7),

blue2 = c(0, 1, 2, 3)

)

distribution_data <- selected_scenarios |>

pmap_dfr(function(blue1, blue2) {

simpleBayesianDistributions(

blue1, total1 - blue1, blue2, total2 - blue2

) |>

mutate(

blue1 = blue1,

blue2 = blue2,

social_evidence = factor(

blue2, levels = 0:3,

labels = c("Clear Red", "Maybe Red", "Maybe Blue", "Clear Blue")

)

)

})

ggplot(distribution_data) +

geom_line(aes(x = theta, y = prior),

colour = "grey60", linetype = "solid", linewidth = 0.5) +

geom_area(aes(x = theta, y = posterior),

fill = "#756bb1", alpha = 0.3) +

geom_line(aes(x = theta, y = posterior),

colour = "#756bb1", linewidth = 1.2) +

geom_line(aes(x = theta, y = direct, colour = "Direct"),

linewidth = 0.9, linetype = "dashed") +

geom_line(aes(x = theta, y = social, colour = "Social"),

linewidth = 0.9, linetype = "dashed") +

facet_grid(

blue2 ~ blue1,

labeller = labeller(

blue1 = function(x) paste("Direct:", x, "blue"),

blue2 = function(x) paste("Social:", x, "blue")

)

) +

scale_colour_manual(values = c("Direct" = "#4575b4", "Social" = "#d73027")) +

labs(

title = "Bayesian Evidence Integration: Full Distributions",

subtitle = "Grey = prior; dashed = individual sources; purple area = posterior",

x = "Proportion of blue marbles (θ)",

y = "Probability density",

colour = "Evidence type"

) +

theme(

legend.position = "bottom",

strip.text = element_text(size = 8),

axis.text = element_text(size = 6)

)

10.8.6 The Weighted Bayesian Agent: Mathematical Extension

The WBA extends the SBA by introducing weight parameters \(w_d\) and \(w_s\), keeping the same Jeffreys prior pseudo-count \(\alpha_0 = \beta_0 = 0.5\):

\[\alpha_\text{post} = 0.5 + w_d \cdot k_1 + w_s \cdot k_2\] \[\beta_\text{post} = 0.5 + w_d \cdot (n_1 - k_1) + w_s \cdot (n_2 - k_2)\]

Cognitively, \(w_d > 1\) means the agent overweights direct evidence (treats observations as more diagnostic than they are); \(w_s < 1\) means social information is discounted. A weight of 0 means the source is ignored entirely.

This is not simple averaging. Because the weights multiply the count of evidence before adding it to the Beta parameters, they modulate the effective sample size of each source. The natural Beta-distribution normalisation still handles uncertainty correctly.

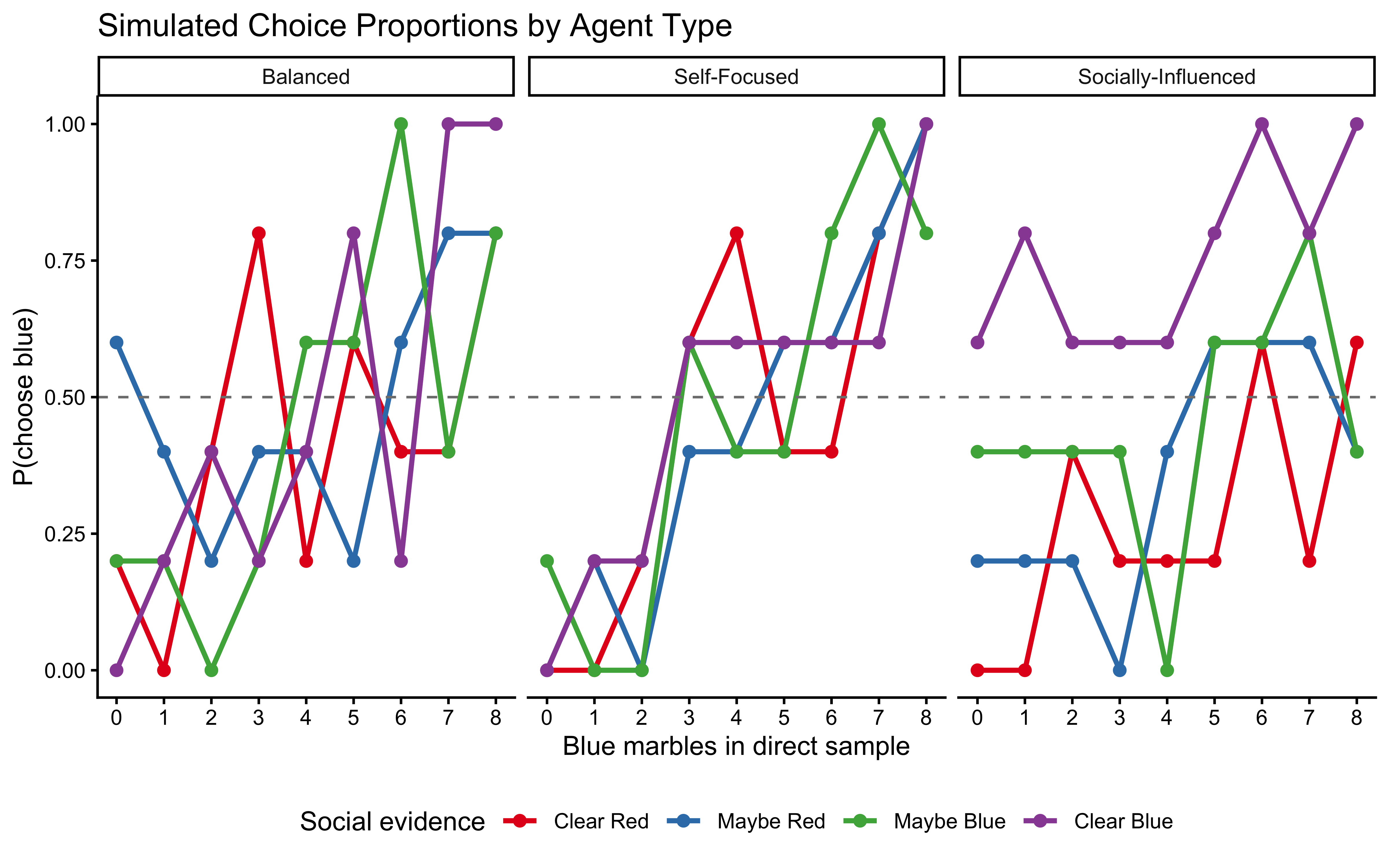

agents <- tibble(

agent_type = c("Balanced", "Self-Focused", "Socially-Influenced"),

weight_direct = c(1.0, 1.5, 0.7),

weight_social = c(1.0, 0.5, 2.0)

)

evidence_combinations <- expand_grid(

blue1 = 0:8,

blue2 = 0:3,

total1 = 8,

total2 = 3

)

generate_agent_decisions <- function(weight_direct, weight_social,

evidence_df, n_samples = 5) {

evidence_df |>

slice(rep(seq_len(n()), each = n_samples)) |>

group_by(blue1, blue2, total1, total2) |>

mutate(sample_id = seq_len(n())) |>

ungroup() |>

pmap_dfr(function(blue1, blue2, total1, total2, sample_id) {

result <- betaBinomialModel(

0.5, 0.5, blue1, total1, blue2, total2,

w_direct = weight_direct, w_social = weight_social

)

# Choice is sampled from the posterior predictive (BetaBinomial)

alpha_p <- result$alpha_post

beta_p <- result$beta_post

p <- rbeta(1, alpha_p, beta_p)

tibble(

sample_id = sample_id,

blue1 = blue1,

blue2 = blue2,

total1 = total1,

total2 = total2,

expected_rate = result$expected_rate,

choice = rbinom(1, 1, p),

choice_label = ifelse(choice == 1, "Blue", "Red")

)

})

}

simulation_results <- agents |>

pmap_dfr(function(agent_type, weight_direct, weight_social) {

generate_agent_decisions(weight_direct, weight_social,

evidence_combinations, n_samples = 5) |>

mutate(agent_type = agent_type)

}) |>

mutate(

social_evidence = factor(

blue2, levels = 0:3,

labels = c("Clear Red", "Maybe Red", "Maybe Blue", "Clear Blue")

),

agent_type = factor(

agent_type,

levels = c("Balanced", "Self-Focused", "Socially-Influenced")

)

)

# Quick visualisation

ggplot(

simulation_results |>

group_by(agent_type, blue1, social_evidence) |>

summarise(p_blue = mean(choice), .groups = "drop"),

aes(x = blue1, y = p_blue, colour = social_evidence, group = social_evidence)

) +

geom_line(linewidth = 1) +

geom_point(size = 2) +

geom_hline(yintercept = 0.5, linetype = "dashed", colour = "grey50") +

facet_wrap(~ agent_type) +

scale_colour_brewer(palette = "Set1") +

scale_x_continuous(breaks = 0:8) +

coord_cartesian(ylim = c(0, 1)) +

labs(

title = "Simulated Choice Proportions by Agent Type",

x = "Blue marbles in direct sample",

y = "P(choose blue)",

colour = "Social evidence"

) +

theme(legend.position = "bottom")

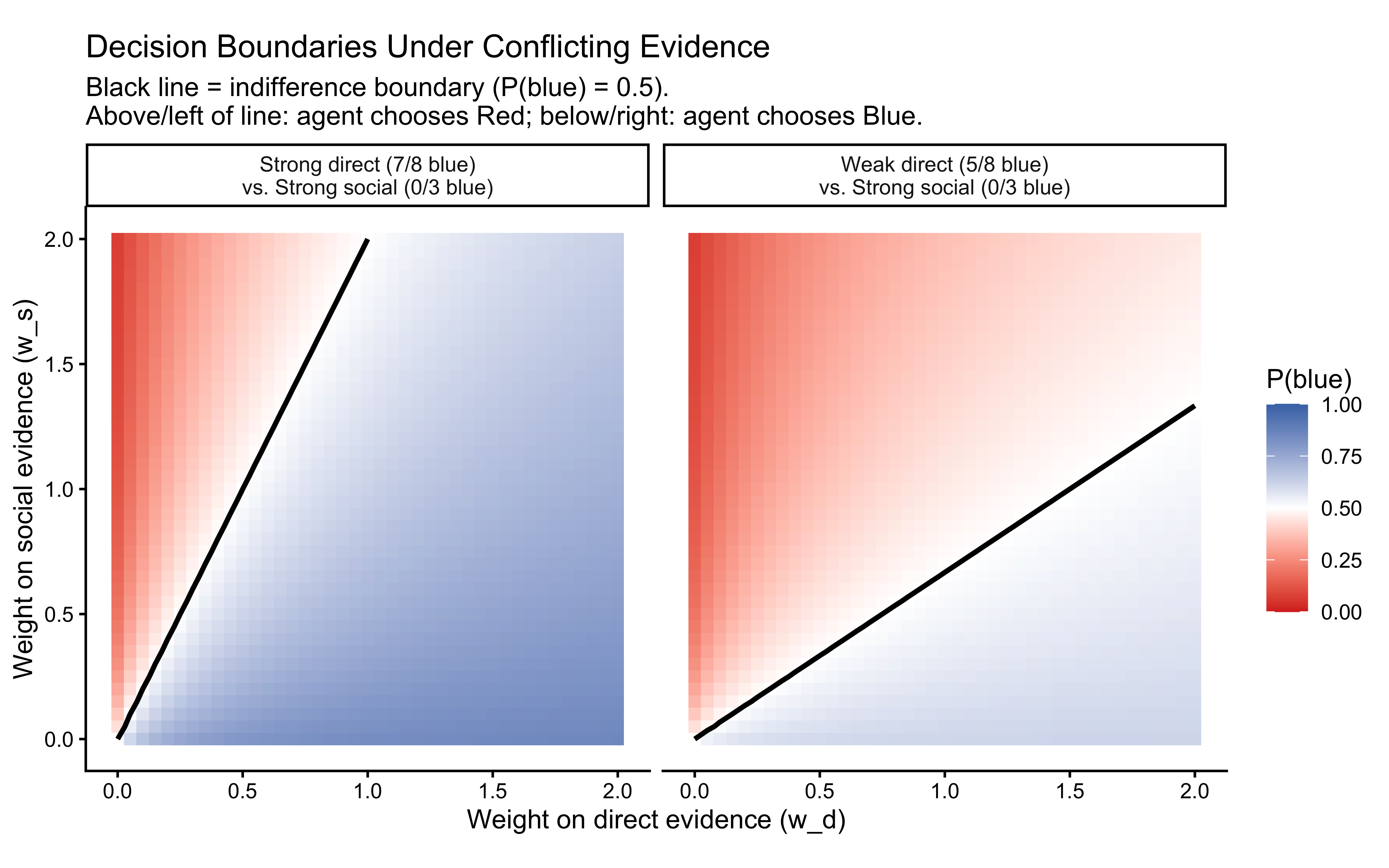

10.8.7 How the WBA Resolves Conflicting Evidence

A key cognitive question is: how does the balance of weights determine an agent’s choice when direct and social evidence point in opposite directions? We can answer this visually by sweeping \(w_d\) and \(w_s\) across a grid and plotting the decision boundary — the contour at which the agent is indifferent between blue and red.

# Two conflict scenarios from the original chapter

scenario_strong <- list(blue1 = 7L, total1 = 8L, blue2 = 0L, total2 = 3L)

scenario_weak <- list(blue1 = 5L, total1 = 8L, blue2 = 0L, total2 = 3L)

evaluate_conflict <- function(scenario) {

expand_grid(

weight_direct = seq(0, 2, by = 0.05),

weight_social = seq(0, 2, by = 0.05)

) |>

mutate(

alpha_post = 0.5 + weight_direct * scenario$blue1 +

weight_social * scenario$blue2,

beta_post = 0.5 + weight_direct * (scenario$total1 - scenario$blue1) +

weight_social * (scenario$total2 - scenario$blue2),

expected_rate = alpha_post / (alpha_post + beta_post)

)

}

conflict_strong <- evaluate_conflict(scenario_strong) |> mutate(scenario = "Strong direct (7/8 blue)\nvs. Strong social (0/3 blue)")

conflict_weak <- evaluate_conflict(scenario_weak) |> mutate(scenario = "Weak direct (5/8 blue)\nvs. Strong social (0/3 blue)")

conflict_all <- bind_rows(conflict_strong, conflict_weak)

ggplot(conflict_all,

aes(x = weight_direct, y = weight_social, fill = expected_rate)) +

geom_tile() +

geom_contour(aes(z = expected_rate), breaks = 0.5,

colour = "black", linewidth = 1) +

scale_fill_gradient2(

low = "#d73027", mid = "white", high = "#4575b4",

midpoint = 0.5, limits = c(0, 1)

) +

facet_wrap(~ scenario) +

coord_fixed() +

labs(

title = "Decision Boundaries Under Conflicting Evidence",

subtitle = "Black line = indifference boundary (P(blue) = 0.5).\nAbove/left of line: agent chooses Red; below/right: agent chooses Blue.",

x = "Weight on direct evidence (w_d)",

y = "Weight on social evidence (w_s)",

fill = "P(blue)"

) +

theme(legend.position = "right")

Reading the plot: Each cell’s colour shows the WBA’s predicted probability of choosing blue for that weight combination. The black contour line marks the decision boundary — the set of \((w_d, w_s)\) pairs at which the two sources of evidence exactly cancel. Above and to the left of this line, social evidence wins (agent chooses red); below and to the right, direct evidence wins (agent chooses blue).

Two insights stand out:

Evidence strength shifts the boundary. In the strong-direct scenario (7/8 blue), the boundary is pushed far toward high \(w_s\) — it takes a very large social weight to overcome the direct evidence for blue. In the weak-direct scenario (5/8), the boundary sits closer to the diagonal, meaning even moderate social weights can reverse the decision.

The SBA is a single point. The Simple Bayesian Agent always sits at \((w_d, w_s) = (1, 1)\). Wherever that point falls relative to the boundary determines its prediction. For the strong-direct scenario, \((1,1)\) is comfortably below the boundary (chooses blue). For the weak-direct scenario, \((1,1)\) is again below but closer to the boundary. The SBA cannot adjust — the WBA can.

10.9 Stan Implementation

10.9.1 Model 1: Simple Bayesian Agent

SimpleAgent_stan <- "

// Simple Bayesian Agent — Jeffreys prior (alpha0 = beta0 = 0.5).

// No free parameters: the SBA is a deterministic prediction machine.

data {

int<lower=1> N;

array[N] int<lower=0, upper=1> choice;

array[N] int<lower=0> blue1;

array[N] int<lower=0> total1;

array[N] int<lower=0> blue2;

array[N] int<lower=0> total2;

}

transformed data {

// Jeffreys prior for a binomial proportion: Beta(0.5, 0.5).

// Parameterisation-invariant; concentrates slightly more mass near 0 and 1

// than the uniform Beta(1, 1). With 8+ observations per trial the

// difference is negligible, but the choice is principled.

real alpha0 = 0.5;

real beta0 = 0.5;

}

parameters {

// No free parameters.

}

model {

for (i in 1:N) {

real alpha_post = alpha0 + blue1[i] + blue2[i];

real beta_post = beta0

+ (total1[i] - blue1[i])

+ (total2[i] - blue2[i]);

target += beta_binomial_lpmf(choice[i] | 1, alpha_post, beta_post);

}

}

generated quantities {

vector[N] log_lik;

array[N] int prior_pred;

array[N] int posterior_pred;

for (i in 1:N) {

real alpha_post = alpha0 + blue1[i] + blue2[i];

real beta_post = beta0

+ (total1[i] - blue1[i])

+ (total2[i] - blue2[i]);

log_lik[i] = beta_binomial_lpmf(choice[i] | 1, alpha_post, beta_post);

posterior_pred[i] = beta_binomial_rng(1, alpha_post, beta_post);

// Prior predictive: Jeffreys pseudo-counts only, no trial evidence

prior_pred[i] = beta_binomial_rng(1, alpha0, beta0);

}

}

"

write_stan_file(SimpleAgent_stan,

dir = "stan/",

basename = "ch9_simple_agent.stan")## [1] "/Users/au209589/Dropbox/Teaching/AdvancedCognitiveModeling23_book/stan/ch9_simple_agent.stan"10.9.2 Model 2: Proportional Bayesian Agent

ProportionalAgent_stan <- "

// Proportional Bayesian Agent (PBA).

// p in [0,1] allocates the unit evidence budget between direct and social.

// p = 0.5 approximates the SBA; p -> 1 ignores social; p -> 0 ignores direct.

// Jeffreys prior pseudo-counts (alpha0 = beta0 = 0.5) consistent with SBA.

data {

int<lower=1> N;

array[N] int<lower=0, upper=1> choice;

array[N] int<lower=0> blue1;

array[N] int<lower=0> total1;

array[N] int<lower=0> blue2;

array[N] int<lower=0> total2;

}

parameters {

// Allocation of the unit evidence budget to direct evidence.

// Beta(2, 2): weakly bell-shaped, symmetric about 0.5, keeps

// prior mass away from the boundaries where geometry can degrade.

real<lower=0, upper=1> p;

}

model {

target += beta_lpdf(p | 2, 2);

profile(\"likelihood\") {

for (i in 1:N) {

real alpha_post = 0.5

+ p * blue1[i]

+ (1.0 - p) * blue2[i];

real beta_post = 0.5

+ p * (total1[i] - blue1[i])

+ (1.0 - p) * (total2[i] - blue2[i]);

target += beta_binomial_lpmf(choice[i] | 1, alpha_post, beta_post);

}

}

}

generated quantities {

vector[N] log_lik;

array[N] int prior_pred;

array[N] int posterior_pred;

real p_prior = beta_rng(2, 2);

for (i in 1:N) {

real alpha_post = 0.5 + p * blue1[i] + (1.0 - p) * blue2[i];

real beta_post = 0.5

+ p * (total1[i] - blue1[i])

+ (1.0 - p) * (total2[i] - blue2[i]);

log_lik[i] = beta_binomial_lpmf(choice[i] | 1, alpha_post, beta_post);

posterior_pred[i] = beta_binomial_rng(1, alpha_post, beta_post);

// Prior predictive using sampled prior p

real ap = 0.5 + p_prior * blue1[i] + (1.0 - p_prior) * blue2[i];

real bp = 0.5

+ p_prior * (total1[i] - blue1[i])

+ (1.0 - p_prior) * (total2[i] - blue2[i]);

prior_pred[i] = beta_binomial_rng(1, ap, bp);

}

}

"

write_stan_file(ProportionalAgent_stan,

dir = "stan/",

basename = "ch9_proportional_agent.stan")## [1] "/Users/au209589/Dropbox/Teaching/AdvancedCognitiveModeling23_book/stan/ch9_proportional_agent.stan"10.9.3 Model 3: Weighted Bayesian Agent

WeightedAgent_stan <- "

// Weighted Bayesian Agent.

// w_direct, w_social >= 0 scale the effective count of each evidence source.

// Jeffreys prior pseudo-counts (0.5) consistent with SBA and PBA.

data {

int<lower=1> N;

array[N] int<lower=0, upper=1> choice;

array[N] int<lower=0> blue1;

array[N] int<lower=0> total1;

array[N] int<lower=0> blue2;

array[N] int<lower=0> total2;

}

parameters {

real<lower=0> weight_direct;

real<lower=0> weight_social;

}

model {

// Priors on log scale (equivalent to log-normal in natural scale).

// lognormal(0, 0.5) concentrates mass roughly in [0.2, 5],

// spanning strong underweighting to strong overweighting.

target += lognormal_lpdf(weight_direct | 0, 0.5);

target += lognormal_lpdf(weight_social | 0, 0.5);

profile(\"likelihood\") {

for (i in 1:N) {

real alpha_post = 0.5

+ weight_direct * blue1[i]

+ weight_social * blue2[i];

real beta_post = 0.5

+ weight_direct * (total1[i] - blue1[i])

+ weight_social * (total2[i] - blue2[i]);

target += beta_binomial_lpmf(choice[i] | 1, alpha_post, beta_post);

}

}

}

generated quantities {

vector[N] log_lik;

array[N] int prior_pred;

array[N] int posterior_pred;

// Prior samples for predictive checks

real wd_prior = lognormal_rng(0, 0.5);

real ws_prior = lognormal_rng(0, 0.5);

for (i in 1:N) {

// Posterior predictions

real alpha_post = 0.5

+ weight_direct * blue1[i]

+ weight_social * blue2[i];

real beta_post = 0.5

+ weight_direct * (total1[i] - blue1[i])

+ weight_social * (total2[i] - blue2[i]);

log_lik[i] = beta_binomial_lpmf(choice[i] | 1, alpha_post, beta_post);

posterior_pred[i] = beta_binomial_rng(1, alpha_post, beta_post);

// Prior predictions using sampled prior weights

real ap = 0.5 + wd_prior * blue1[i] + ws_prior * blue2[i];

real bp = 0.5 + wd_prior * (total1[i] - blue1[i])

+ ws_prior * (total2[i] - blue2[i]);

prior_pred[i] = beta_binomial_rng(1, ap, bp);

}

}

"

write_stan_file(WeightedAgent_stan,

dir = "stan/",

basename = "ch9_weighted_agent.stan")## [1] "/Users/au209589/Dropbox/Teaching/AdvancedCognitiveModeling23_book/stan/ch9_weighted_agent.stan"10.10 Phase 1: Prior Predictive Checks

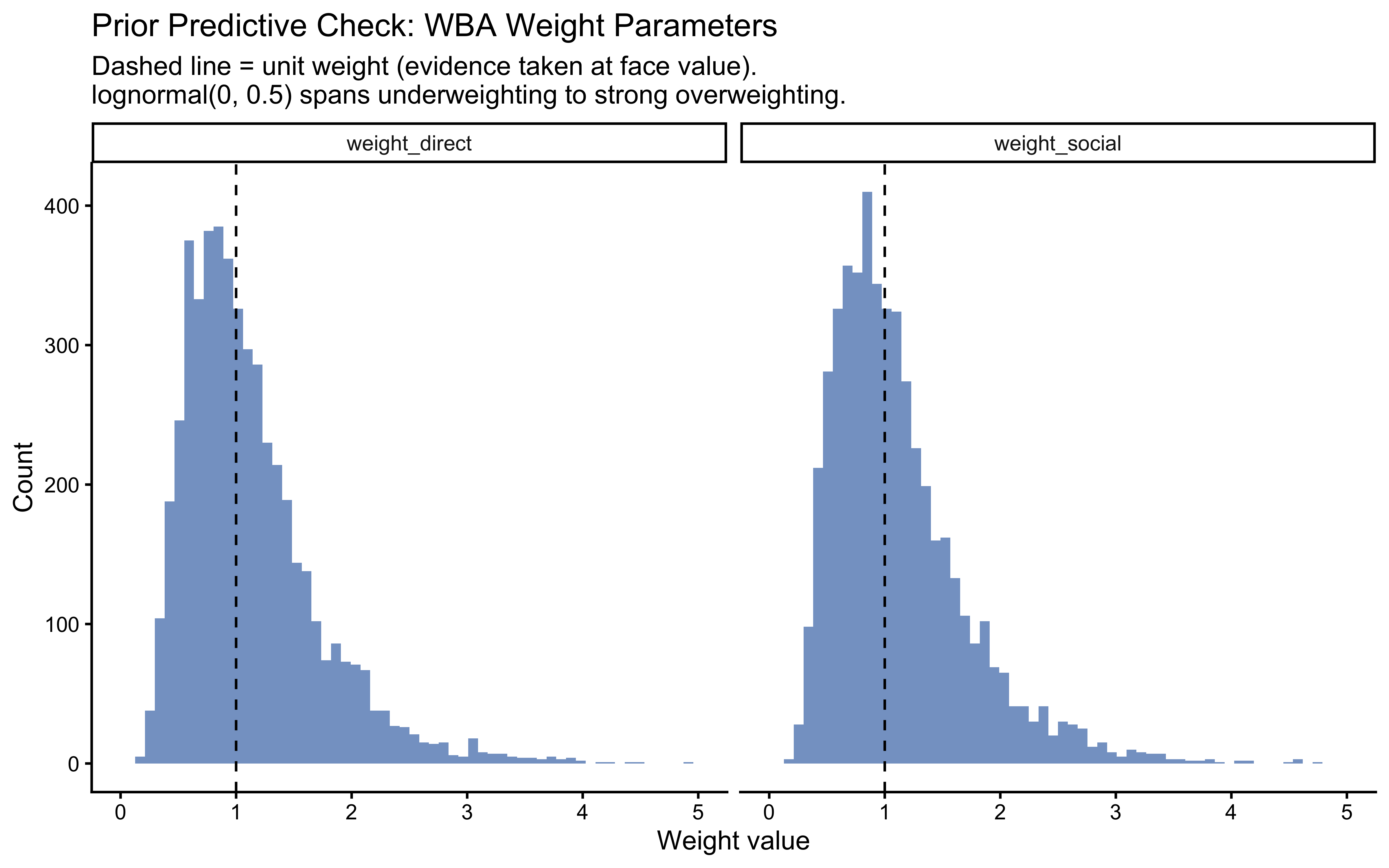

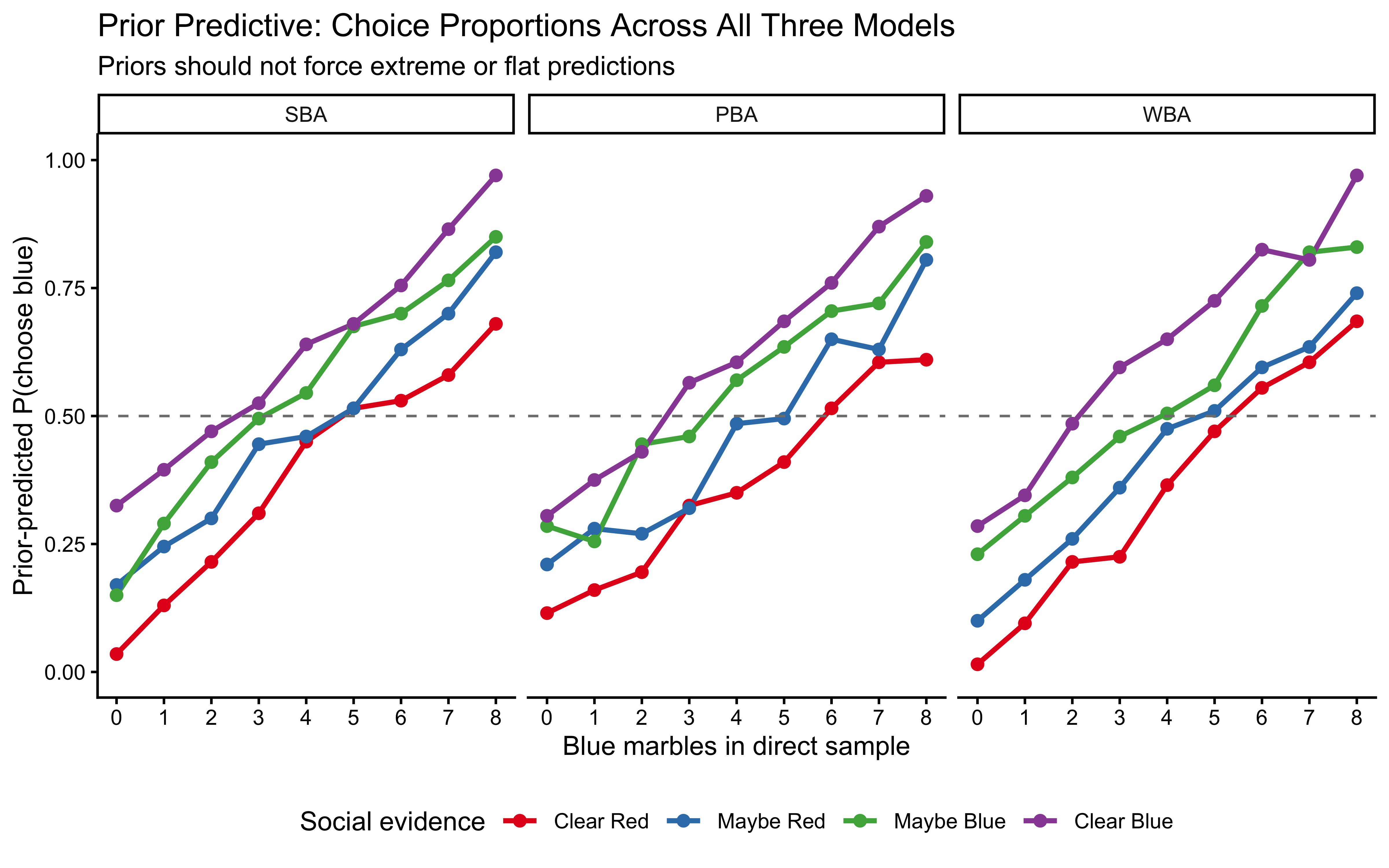

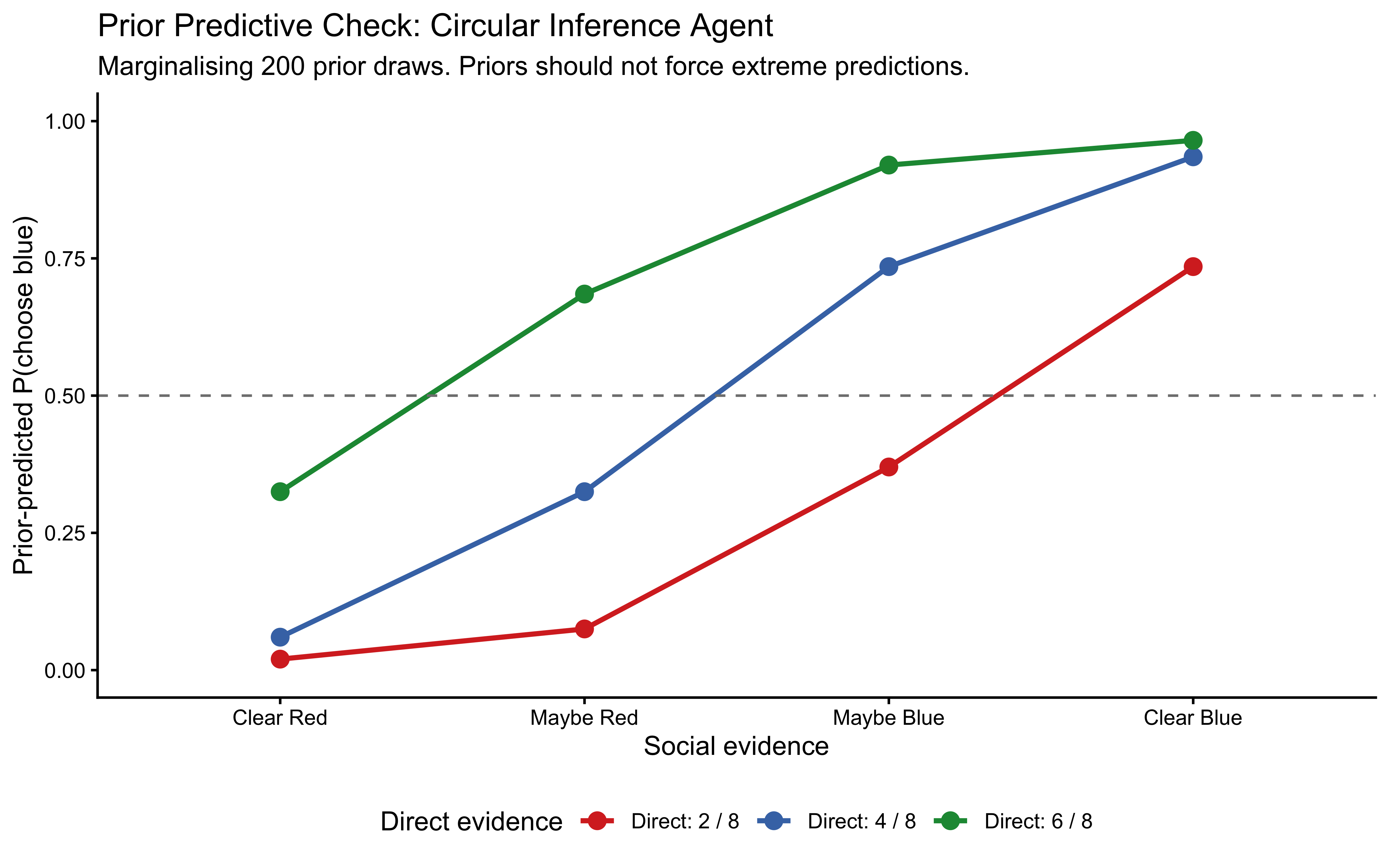

Before fitting to any data, we must confirm that the priors of each model generate cognitively plausible behaviour.

- SBA: The Jeffreys prior Beta(0.5, 0.5) gives a BetaBinomial marginal that is slightly U-shaped rather than flat — it places marginally more prior mass on confident choices, but with 11 total observations per trial this barely affects the prior predictive.

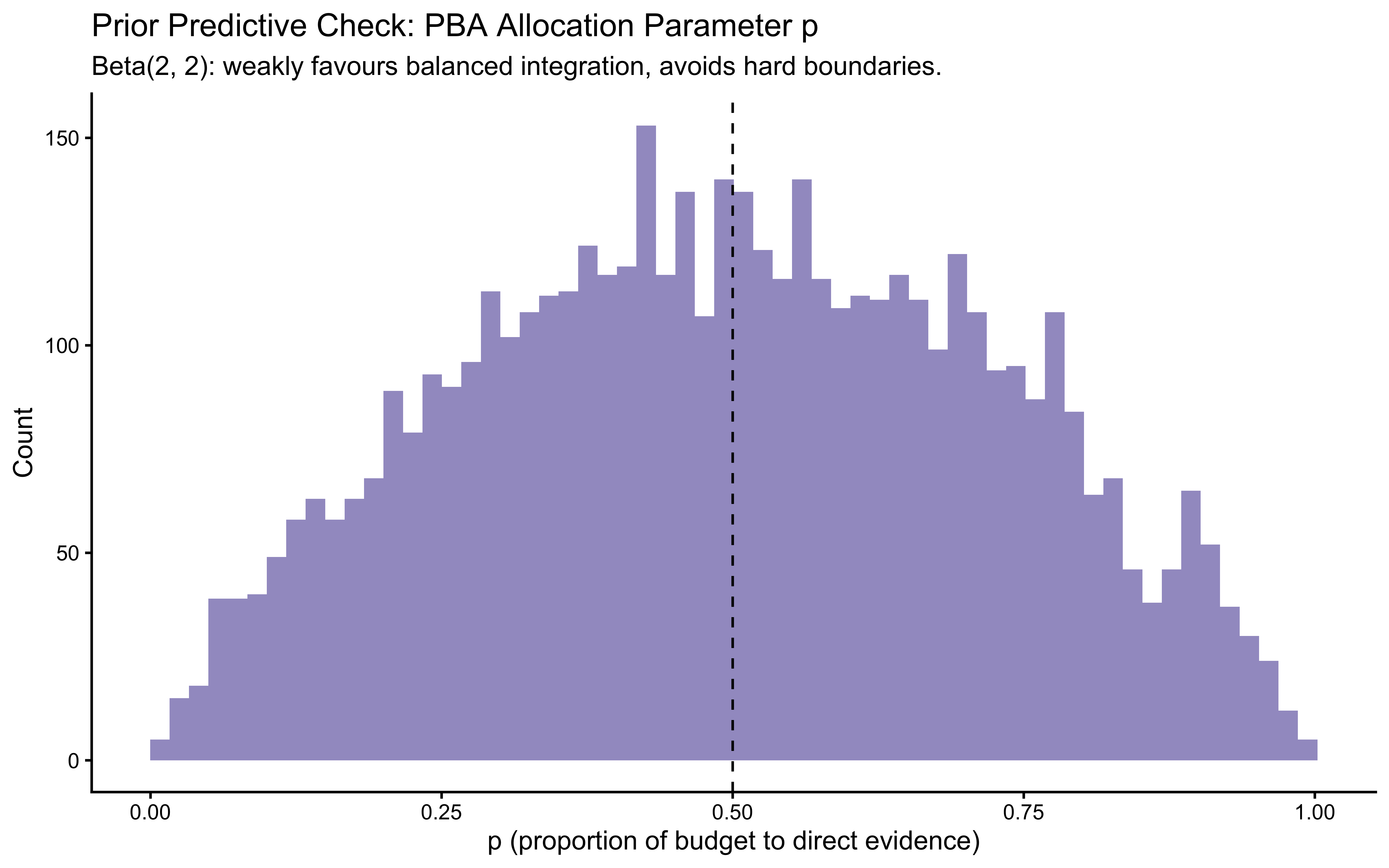

- PBA: The Beta(2, 2) prior on \(p\) is weakly bell-shaped around 0.5. We verify that this does not force predictions to be symmetric across all conditions.

- WBA: The lognormal(0, 0.5) prior on \(w_d\) and \(w_s\) spans roughly 0.2 to 5 — from strong underweighting to strong overweighting. If the prior concentrated mass only near 1.0, it would be essentially a tighter SBA. Checking the prior first confirms that the WBA can represent the full range of integration strategies before any data are seen.

set.seed(42)

n_prior <- 5000

# ── WBA: inspect prior on weight scale ──────────────────────────────

wd_prior_samples <- rlnorm(n_prior, meanlog = 0, sdlog = 0.5)

ws_prior_samples <- rlnorm(n_prior, meanlog = 0, sdlog = 0.5)

tibble(weight_direct = wd_prior_samples,

weight_social = ws_prior_samples) |>

pivot_longer(everything(), names_to = "parameter", values_to = "value") |>

ggplot(aes(x = value)) +

geom_histogram(bins = 60, fill = "#4575b4", alpha = 0.7) +

geom_vline(xintercept = 1, linetype = "dashed", colour = "black") +

scale_x_continuous(limits = c(0, 5)) +

facet_wrap(~ parameter) +

labs(

title = "Prior Predictive Check: WBA Weight Parameters",

subtitle = "Dashed line = unit weight (evidence taken at face value).\nlognormal(0, 0.5) spans underweighting to strong overweighting.",

x = "Weight value",

y = "Count"

)

# ── PBA: inspect prior on allocation parameter p ────────────────────

tibble(p = rbeta(n_prior, 2, 2)) |>

ggplot(aes(x = p)) +

geom_histogram(bins = 60, fill = "#756bb1", alpha = 0.7) +

geom_vline(xintercept = 0.5, linetype = "dashed", colour = "black") +

labs(

title = "Prior Predictive Check: PBA Allocation Parameter p",

subtitle = "Beta(2, 2): weakly favours balanced integration, avoids hard boundaries.",

x = "p (proportion of budget to direct evidence)",

y = "Count"

)

# ── Prior-predictive choices across the evidence grid: all three models ─

ppc_from_prior <- function(alpha_fn, beta_fn, n_rep = 200, label = "") {

evidence_combinations |>

slice(rep(seq_len(n()), times = n_rep)) |>

mutate(

alpha_p = alpha_fn(n(), blue1, blue2, total1, total2),

beta_p = beta_fn(n(), blue1, blue2, total1, total2),

theta = rbeta(n(), alpha_p, beta_p),

y = rbinom(n(), 1, theta)

) |>

group_by(blue1, blue2) |>

summarise(p_blue = mean(y), .groups = "drop") |>

mutate(

social_evidence = factor(

blue2, levels = 0:3,

labels = c("Clear Red", "Maybe Red", "Maybe Blue", "Clear Blue")

),

model = label

)

}

sba_ppc <- ppc_from_prior(

alpha_fn = function(n, b1, b2, ...) 0.5 + b1 + b2,

beta_fn = function(n, b1, b2, t1, t2) 0.5 + (t1 - b1) + (t2 - b2),

label = "SBA"

)

pba_ppc <- ppc_from_prior(

alpha_fn = function(n, b1, b2, ...) {

p_draw <- rbeta(n, 2, 2); 0.5 + p_draw * b1 + (1 - p_draw) * b2

},

beta_fn = function(n, b1, b2, t1, t2) {

p_draw <- rbeta(n, 2, 2)

0.5 + p_draw * (t1 - b1) + (1 - p_draw) * (t2 - b2)

},

label = "PBA"

)

wba_ppc <- ppc_from_prior(

alpha_fn = function(n, b1, b2, ...) {

wd <- rlnorm(n, 0, 0.5); ws <- rlnorm(n, 0, 0.5)

0.5 + wd * b1 + ws * b2

},

beta_fn = function(n, b1, b2, t1, t2) {

wd <- rlnorm(n, 0, 0.5); ws <- rlnorm(n, 0, 0.5)

0.5 + wd * (t1 - b1) + ws * (t2 - b2)

},

label = "WBA"

)

bind_rows(sba_ppc, pba_ppc, wba_ppc) |>

mutate(model = factor(model, levels = c("SBA", "PBA", "WBA"))) |>

ggplot(aes(x = blue1, y = p_blue,

colour = social_evidence, group = social_evidence)) +

geom_line(linewidth = 1) +

geom_point(size = 2) +

geom_hline(yintercept = 0.5, linetype = "dashed", colour = "grey50") +

scale_x_continuous(breaks = 0:8) +

scale_colour_brewer(palette = "Set1") +

coord_cartesian(ylim = c(0, 1)) +

facet_wrap(~ model) +

labs(

title = "Prior Predictive: Choice Proportions Across All Three Models",

subtitle = "Priors should not force extreme or flat predictions",

x = "Blue marbles in direct sample",

y = "Prior-predicted P(choose blue)",

colour = "Social evidence"

) +

theme(legend.position = "bottom")

Interpretation: The SBA prior predictive is nearly flat across all conditions — the Jeffreys pseudo-count contributes negligibly against 11 observations. The PBA and WBA show structured gradients even under the prior, confirming that the prior is not degenerate, but with substantial uncertainty. None of the three priors force predictions to extreme values uniformly. All three pass the plausibility check.

10.11 Phase 3: Simulation Validation

Empirical fitting must never occur before simulation validation. We proceed in two steps: (1) single-fit parameter recovery to confirm the model can identify parameters from a known dataset; (2) SBC to certify that the inference engine is globally unbiased.

10.11.1 Preparing Data for Validation

prepare_stan_data <- function(df) {

list(

N = nrow(df),

choice = df$choice,

blue1 = df$blue1,

total1 = df$total1,

blue2 = df$blue2,

total2 = df$total2

)

}

sim_data_for_stan <- simulation_results |>

mutate(total1 = 8L, total2 = 3L)

balanced_data <- sim_data_for_stan |> filter(agent_type == "Balanced")

self_focused_data <- sim_data_for_stan |> filter(agent_type == "Self-Focused")

socially_influenced_data <- sim_data_for_stan |> filter(agent_type == "Socially-Influenced")

stan_data_balanced <- prepare_stan_data(balanced_data)

stan_data_self_focused <- prepare_stan_data(self_focused_data)

stan_data_socially_influenced <- prepare_stan_data(socially_influenced_data)10.11.3 Single-Fit Parameter Recovery

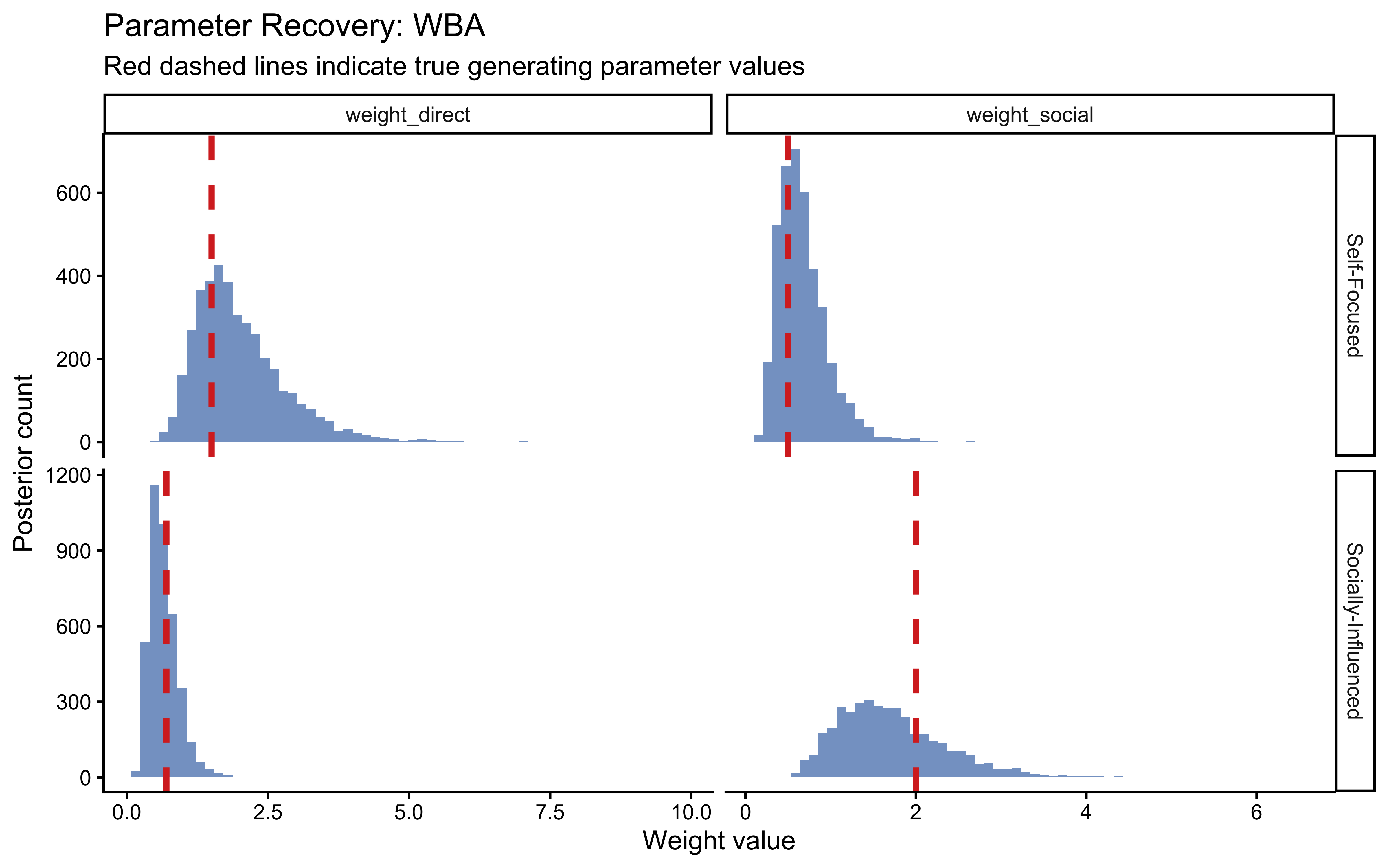

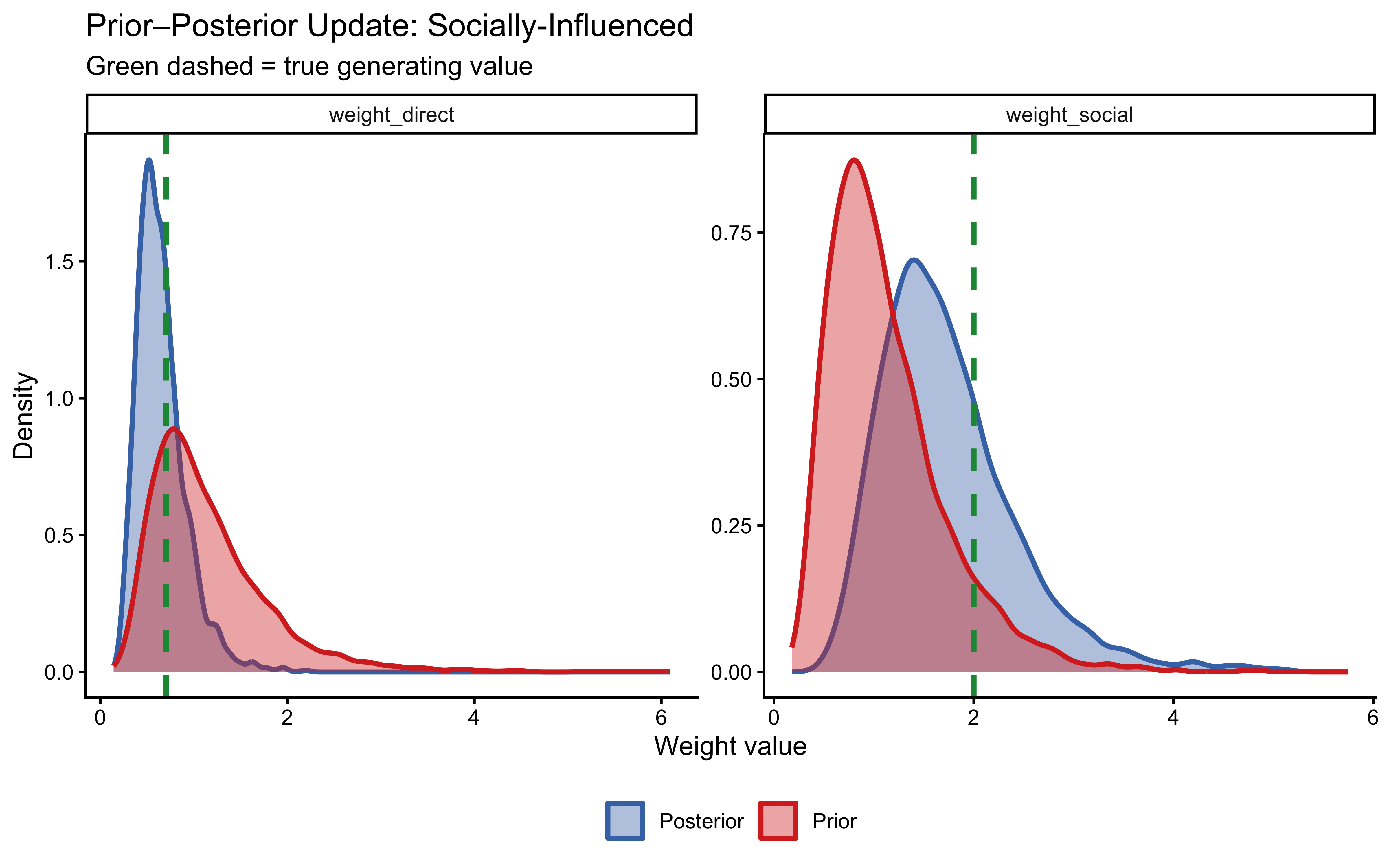

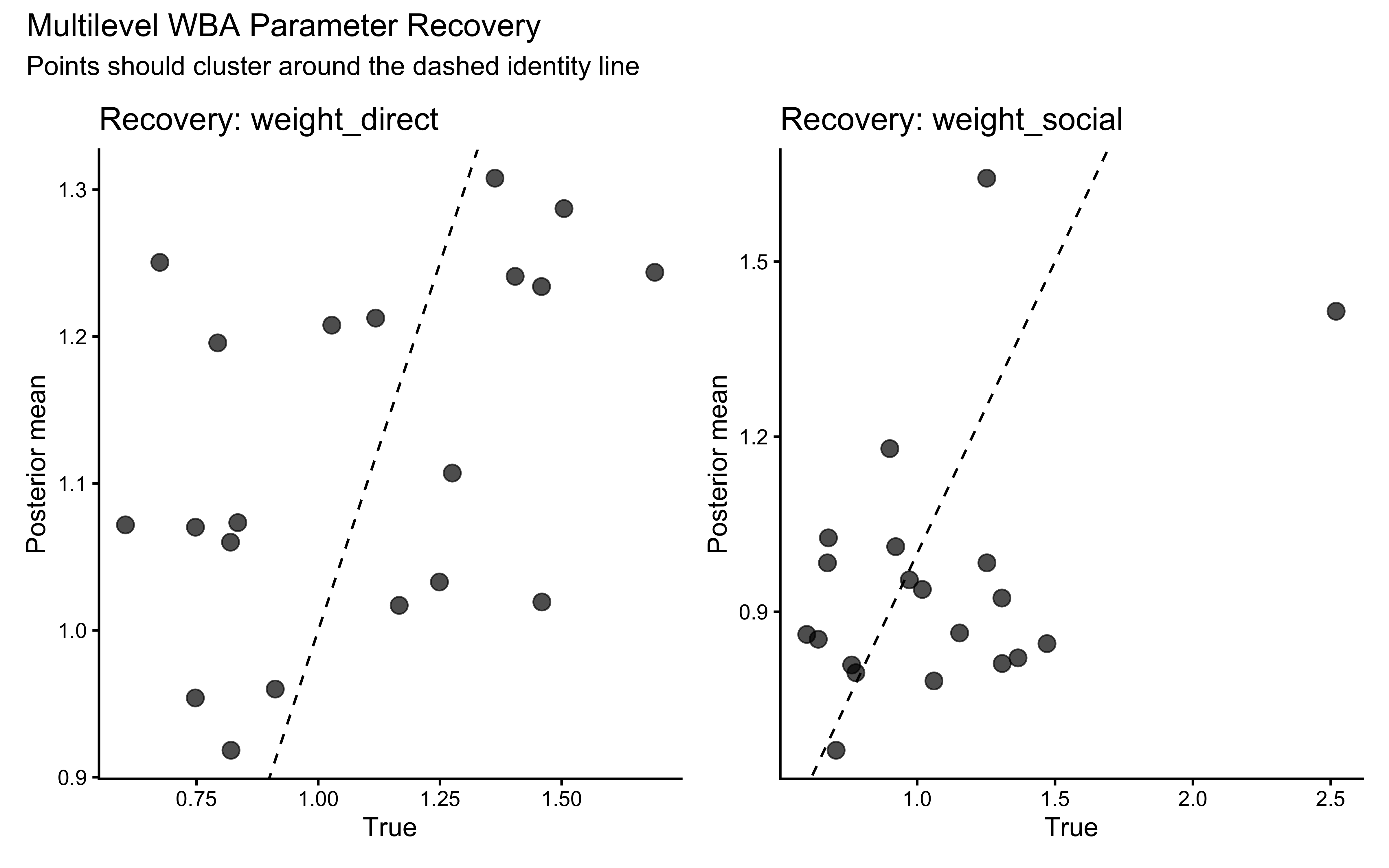

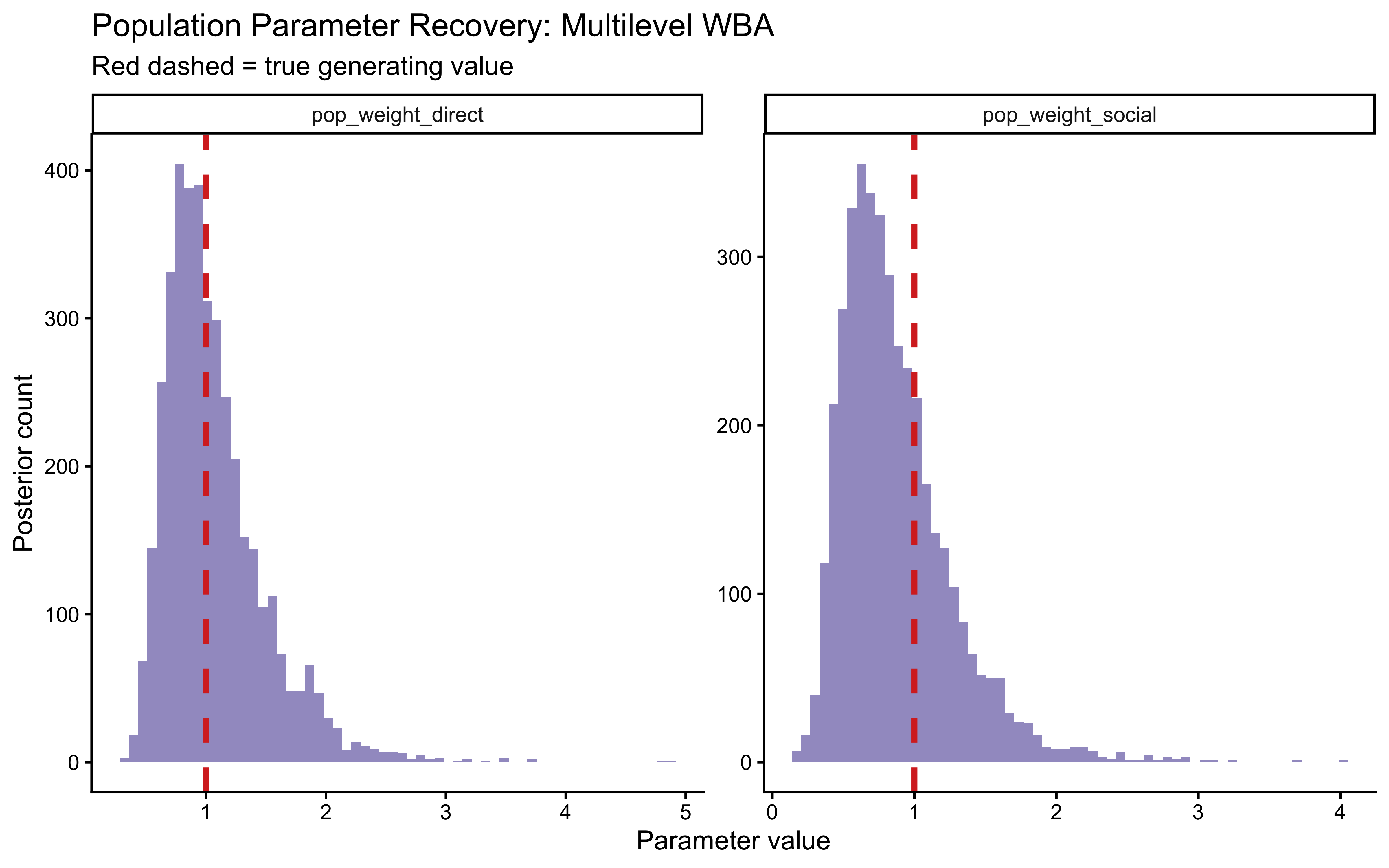

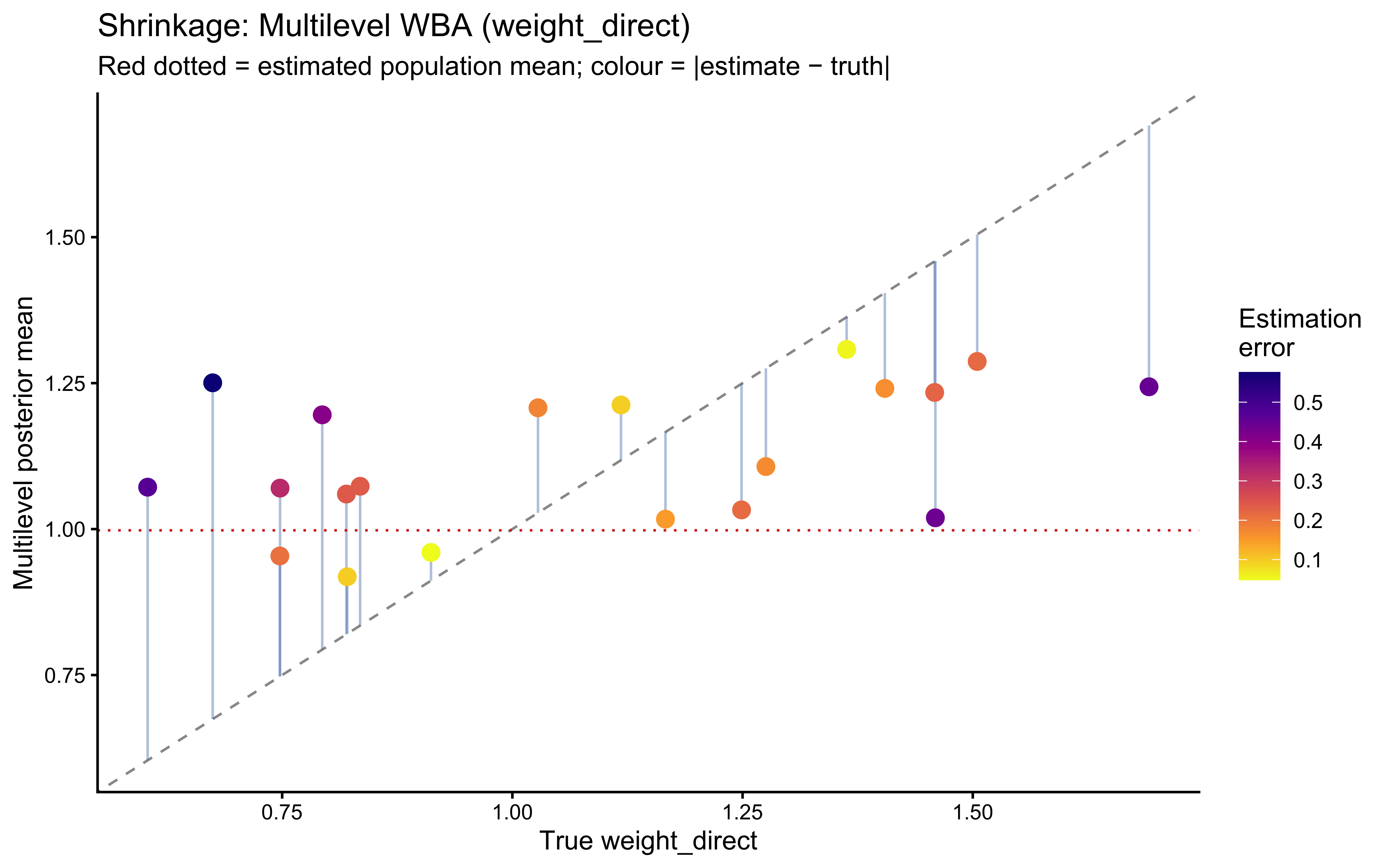

We fit the WBA to the Self-Focused agent (true \(w_d = 1.5\), \(w_s = 0.5\)) and the Socially-Influenced agent (true \(w_d = 0.7\), \(w_s = 2.0\)) to verify that the model recovers the generating parameters.

fit_recovery_self <- if (regenerate_simulations) {

fit <- mod_weighted$sample(

data = stan_data_self_focused,

seed = 101,

chains = 4,

parallel_chains = 4,

iter_warmup = 1000,

iter_sampling = 1000,

refresh = 0

)

fit$save_object("simmodels/ch9_fit_recovery_self.rds")

fit

} else {

readRDS("simmodels/ch9_fit_recovery_self.rds")

}## Running MCMC with 4 parallel chains...

##

## Chain 1 finished in 0.5 seconds.

## Chain 2 finished in 0.5 seconds.

## Chain 3 finished in 0.5 seconds.

## Chain 4 finished in 0.5 seconds.

##

## All 4 chains finished successfully.

## Mean chain execution time: 0.5 seconds.

## Total execution time: 3.3 seconds.fit_recovery_social <- if (regenerate_simulations) {

fit <- mod_weighted$sample(

data = stan_data_socially_influenced,

seed = 102,

chains = 4,

parallel_chains = 4,

iter_warmup = 1000,

iter_sampling = 1000,

refresh = 0

)

fit$save_object("simmodels/ch9_fit_recovery_social.rds")

fit

} else {

readRDS("simmodels/ch9_fit_recovery_social.rds")

}## Running MCMC with 4 parallel chains...## Chain 1 finished in 0.6 seconds.

## Chain 2 finished in 0.6 seconds.

## Chain 3 finished in 0.6 seconds.

## Chain 4 finished in 0.6 seconds.

##

## All 4 chains finished successfully.

## Mean chain execution time: 0.6 seconds.

## Total execution time: 3.5 seconds.# Visualise recovery

true_params <- tibble(

agent = c("Self-Focused", "Self-Focused",

"Socially-Influenced", "Socially-Influenced"),

parameter = rep(c("weight_direct", "weight_social"), 2),

true_value = c(1.5, 0.5, 0.7, 2.0)

)

recovery_draws <- bind_rows(

as_draws_df(fit_recovery_self$draws(c("weight_direct", "weight_social"))) |>

mutate(agent = "Self-Focused"),

as_draws_df(fit_recovery_social$draws(c("weight_direct", "weight_social"))) |>

mutate(agent = "Socially-Influenced")

) |>

pivot_longer(c(weight_direct, weight_social),

names_to = "parameter", values_to = "value")

ggplot(recovery_draws, aes(x = value)) +

geom_histogram(bins = 60, fill = "#4575b4", alpha = 0.7) +

geom_vline(data = true_params,

aes(xintercept = true_value),

colour = "#d73027", linewidth = 1.2, linetype = "dashed") +

facet_grid(agent ~ parameter, scales = "free") +

labs(

title = "Parameter Recovery: WBA",

subtitle = "Red dashed lines indicate true generating parameter values",

x = "Weight value",

y = "Posterior count"

)

Both generating values fall within the bulk of their respective posteriors — a necessary (though not sufficient) condition for trust in the model’s identifiability.

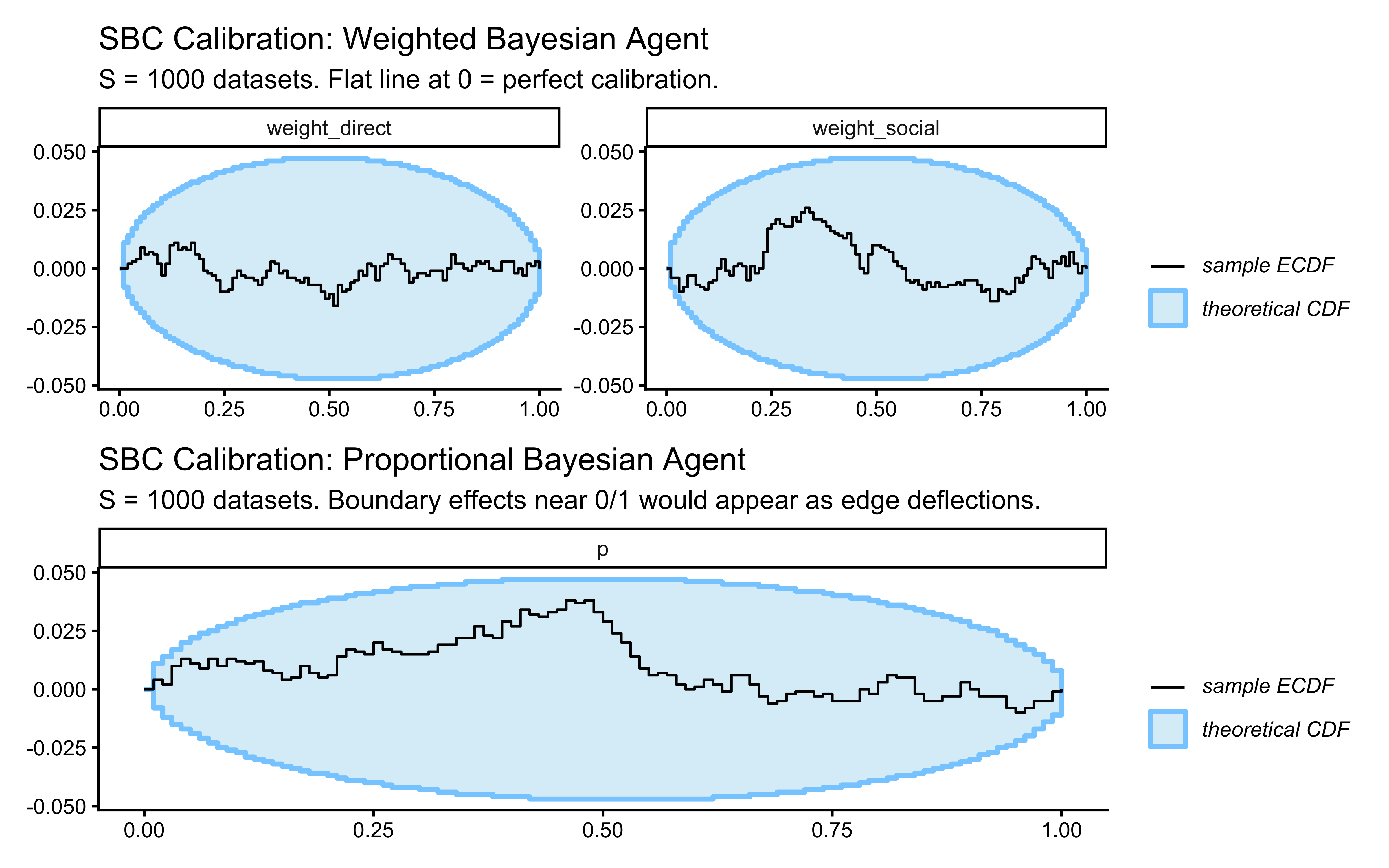

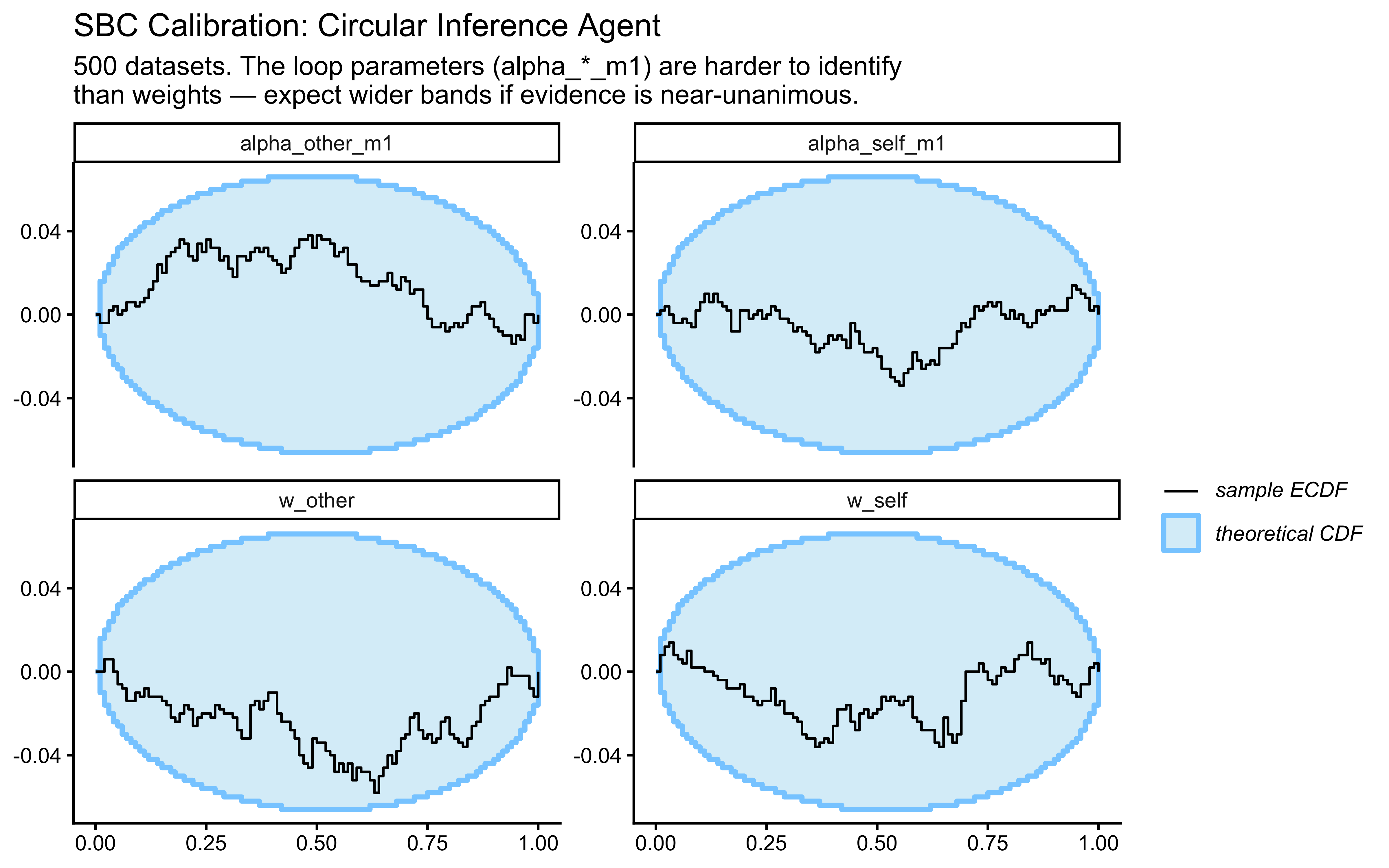

10.11.4 Simulation-Based Calibration (SBC)

Single-fit recovery can succeed by chance. SBC provides a global certificate: if we sample parameters from the prior, simulate a dataset, fit the model, and rank the true parameter among the posterior draws, then under a correctly specified and well-sampled model those ranks must be uniformly distributed (Talts et al. 2018; Säilynoja et al. 2022).

We use ECDF-difference plots as the primary diagnostic (Säilynoja et al. 2022). A perfectly calibrated model produces a flat ECDF-diff at 0; a U-shaped curve indicates over-dispersed posteriors (prior too narrow); a hump-shaped curve indicates over-concentrated posteriors (prior too wide or data dominating).

Why run SBC before fitting to agent data? The WBA’s weight parameters are bounded (positive) and interact multiplicatively in the Beta-Binomial likelihood. These features can cause calibration failures that look like good posteriors — the sampler produces plausible-looking draws that are systematically biased. SBC catches this globally before the model touches empirical data. The PBA’s bounded \(p\) parameter requires its own SBC pass: the Beta(2,2) prior was chosen to avoid boundary geometry pathologies near \(p = 0\) or \(p = 1\), but this must be verified computationally.

n_sbc_datasets <- 1000 # minimum per cheatsheet

if (regenerate_simulations) {

N_sbc <- stan_data_self_focused$N

# ── WBA SBC generator ──────────────────────────────────────────────

sbc_generator <- SBC_generator_function(

function(N) {

wd <- rlnorm(1, 0, 0.5)

ws <- rlnorm(1, 0, 0.5)

ev <- evidence_combinations |>

slice_sample(n = N, replace = TRUE)

# Jeffreys prior pseudo-counts in SBC generator must match the model

alpha_p <- 0.5 + wd * ev$blue1 + ws * ev$blue2

beta_p <- 0.5 + wd * (ev$total1 - ev$blue1) +

ws * (ev$total2 - ev$blue2)

p_blue <- rbeta(N, alpha_p, beta_p)

choices <- rbinom(N, 1, p_blue)

list(

variables = list(weight_direct = wd, weight_social = ws),

generated = list(

N = N,

choice = choices,

blue1 = ev$blue1,

total1 = ev$total1,

blue2 = ev$blue2,

total2 = ev$total2

)

)

},

N = N_sbc

)

sbc_datasets <- generate_datasets(sbc_generator, n_sbc_datasets)

sbc_backend <- SBC::SBC_backend_cmdstan_sample(

mod_weighted,

chains = 2,

iter_warmup = 500,

iter_sampling = 500,

parallel_chains = 2,

refresh = 0,

show_messages = FALSE

)

sbc_results <- compute_SBC(sbc_datasets, sbc_backend, keep_fits = FALSE)

saveRDS(sbc_results, "simmodels/ch9_sbc_wba_results.rds")

# ── PBA SBC generator ──────────────────────────────────────────────

sbc_generator_pba <- SBC_generator_function(

function(N) {

p_true <- rbeta(1, 2, 2)

ev <- evidence_combinations |> slice_sample(n = N, replace = TRUE)

alpha_p <- 0.5 + p_true * ev$blue1 + (1 - p_true) * ev$blue2

beta_p <- 0.5 + p_true * (ev$total1 - ev$blue1) +

(1 - p_true) * (ev$total2 - ev$blue2)

theta <- rbeta(N, alpha_p, beta_p)

choices <- rbinom(N, 1, theta)

list(

variables = list(p = p_true),

generated = list(

N = N,

choice = choices,

blue1 = ev$blue1,

total1 = ev$total1,

blue2 = ev$blue2,

total2 = ev$total2

)

)

},

N = N_sbc

)

sbc_datasets_pba <- generate_datasets(sbc_generator_pba, n_sbc_datasets)

sbc_backend_pba <- SBC::SBC_backend_cmdstan_sample(

mod_proportional,

chains = 2,

iter_warmup = 500,

iter_sampling = 500,

parallel_chains = 2,

refresh = 0,

show_messages = FALSE

)

sbc_results_pba <- compute_SBC(sbc_datasets_pba, sbc_backend_pba,

keep_fits = FALSE)

saveRDS(sbc_results_pba, "simmodels/ch9_sbc_pba_results.rds")

} else {

sbc_results <- readRDS("simmodels/ch9_sbc_wba_results.rds")

sbc_results_pba <- readRDS("simmodels/ch9_sbc_pba_results.rds")

}

# ── Primary diagnostic: ECDF-difference plots ─────────────────────

p_sbc_wba <- SBC::plot_ecdf_diff(

sbc_results, variables = c("weight_direct", "weight_social")

) +

labs(

title = "SBC Calibration: Weighted Bayesian Agent",

subtitle = paste0("S = ", n_sbc_datasets,

" datasets. Flat line at 0 = perfect calibration.")

)

p_sbc_pba <- SBC::plot_ecdf_diff(sbc_results_pba, variables = "p") +

labs(

title = "SBC Calibration: Proportional Bayesian Agent",

subtitle = paste0("S = ", n_sbc_datasets,

" datasets. Boundary effects near 0/1 would appear as edge deflections.")

)

p_sbc_wba / p_sbc_pba

Interpreting the SBC plots: Both ECDF-difference curves should lie within the grey confidence bands (pointwise 95% intervals under the uniform null). For the PBA, pay particular attention to the tails near 0 and 1 — Beta(2,2) was chosen specifically to keep prior mass away from the boundaries, which would otherwise create funnel geometry in the sampler. Systematic deviations in either model must be resolved before proceeding to empirical fitting.

# Summary statistics: fraction of problematic ranks — both models

sbc_results$stats |>

group_by(variable) |>

summarise(n_datasets = n(), mean_rank = mean(rank),

sd_rank = sd(rank), .groups = "drop") |>

mutate(model = "WBA") |>

bind_rows(

sbc_results_pba$stats |>

group_by(variable) |>

summarise(n_datasets = n(), mean_rank = mean(rank),

sd_rank = sd(rank), .groups = "drop") |>

mutate(model = "PBA")

) |>

dplyr::select(model, variable, everything()) |>

knitr::kable(digits = 2, caption = "SBC Rank Summary (WBA and PBA)")| model | variable | n_datasets | mean_rank | sd_rank |

|---|---|---|---|---|

| WBA | weight_direct | 1000 | 49.58 | 28.94 |

| WBA | weight_social | 1000 | 49.30 | 29.13 |

| PBA | p | 1000 | 48.58 | 29.48 |

10.12 Phase 2: Fitting, Diagnostics, and Comparison

10.12.1 Fitting the Models

sampler_args <- list(

chains = 4,

parallel_chains = 4,

iter_warmup = 1000,

iter_sampling = 1000,

refresh = 0

)

agent_datasets <- list(

Balanced = stan_data_balanced,

`Self-Focused` = stan_data_self_focused,

`Socially-Influenced` = stan_data_socially_influenced

)

fit_or_load <- function(file, model, data, seed) {

if (regenerate_simulations) {

fit <- do.call(

model$sample,

c(list(data = data, seed = seed), sampler_args)

)

fit$save_object(file)

fit

} else {

readRDS(file)

}

}

# Simple agent fits

fit_simple_balanced <- fit_or_load(

"simmodels/ch9_fit_simple_balanced.rds", mod_simple,

stan_data_balanced, 201)## Running MCMC with 4 parallel chains...

##

## Chain 1 finished in 0.3 seconds.

## Chain 2 finished in 0.3 seconds.

## Chain 3 finished in 0.3 seconds.

## Chain 4 finished in 0.3 seconds.

##

## All 4 chains finished successfully.

## Mean chain execution time: 0.3 seconds.

## Total execution time: 3.2 seconds.fit_simple_self_focused <- fit_or_load(

"simmodels/ch9_fit_simple_self.rds", mod_simple,

stan_data_self_focused, 202)## Running MCMC with 4 parallel chains...

##

## Chain 1 finished in 0.3 seconds.

## Chain 2 finished in 0.3 seconds.

## Chain 3 finished in 0.3 seconds.

## Chain 4 finished in 0.3 seconds.

##

## All 4 chains finished successfully.

## Mean chain execution time: 0.3 seconds.

## Total execution time: 3.3 seconds.fit_simple_social <- fit_or_load(

"simmodels/ch9_fit_simple_social.rds", mod_simple,

stan_data_socially_influenced, 203)## Running MCMC with 4 parallel chains...

##

## Chain 1 finished in 0.3 seconds.

## Chain 2 finished in 0.3 seconds.

## Chain 3 finished in 0.3 seconds.

## Chain 4 finished in 0.3 seconds.

##

## All 4 chains finished successfully.

## Mean chain execution time: 0.3 seconds.

## Total execution time: 3.9 seconds.# Proportional agent fits

fit_prop_balanced <- fit_or_load(

"simmodels/ch9_fit_prop_balanced.rds", mod_proportional,

stan_data_balanced, 207)## Running MCMC with 4 parallel chains...

##

## Chain 1 finished in 0.4 seconds.

## Chain 2 finished in 0.4 seconds.

## Chain 3 finished in 0.5 seconds.

## Chain 4 finished in 0.4 seconds.

##

## All 4 chains finished successfully.

## Mean chain execution time: 0.4 seconds.

## Total execution time: 3.1 seconds.fit_prop_self_focused <- fit_or_load(

"simmodels/ch9_fit_prop_self.rds", mod_proportional,

stan_data_self_focused, 208)## Running MCMC with 4 parallel chains...

##

## Chain 1 finished in 0.4 seconds.

## Chain 2 finished in 0.5 seconds.

## Chain 3 finished in 0.4 seconds.

## Chain 4 finished in 0.4 seconds.

##

## All 4 chains finished successfully.

## Mean chain execution time: 0.4 seconds.

## Total execution time: 3.3 seconds.fit_prop_social <- fit_or_load(

"simmodels/ch9_fit_prop_social.rds", mod_proportional,

stan_data_socially_influenced, 209)## Running MCMC with 4 parallel chains...

##

## Chain 1 finished in 0.4 seconds.

## Chain 2 finished in 0.4 seconds.

## Chain 3 finished in 0.4 seconds.

## Chain 4 finished in 0.4 seconds.

##

## All 4 chains finished successfully.

## Mean chain execution time: 0.4 seconds.

## Total execution time: 3.3 seconds.# Weighted agent fits

fit_weighted_balanced <- fit_or_load(

"simmodels/ch9_fit_weighted_balanced.rds", mod_weighted,

stan_data_balanced, 204)## Running MCMC with 4 parallel chains...## Chain 1 finished in 0.6 seconds.

## Chain 2 finished in 0.6 seconds.

## Chain 3 finished in 0.6 seconds.

## Chain 4 finished in 0.5 seconds.

##

## All 4 chains finished successfully.

## Mean chain execution time: 0.6 seconds.

## Total execution time: 3.4 seconds.fit_weighted_self_focused <- fit_or_load(

"simmodels/ch9_fit_weighted_self.rds", mod_weighted,

stan_data_self_focused, 205)## Running MCMC with 4 parallel chains...

##

## Chain 1 finished in 0.5 seconds.

## Chain 2 finished in 0.5 seconds.

## Chain 3 finished in 0.5 seconds.

## Chain 4 finished in 0.5 seconds.

##

## All 4 chains finished successfully.

## Mean chain execution time: 0.5 seconds.

## Total execution time: 3.5 seconds.fit_weighted_social <- fit_or_load(

"simmodels/ch9_fit_weighted_social.rds", mod_weighted,

stan_data_socially_influenced, 206)## Running MCMC with 4 parallel chains...## Chain 1 finished in 0.6 seconds.

## Chain 2 finished in 0.6 seconds.

## Chain 3 finished in 0.6 seconds.

## Chain 4 finished in 0.6 seconds.

##

## All 4 chains finished successfully.

## Mean chain execution time: 0.6 seconds.

## Total execution time: 3.4 seconds.10.12.2 Geometry Diagnostics

A complete diagnostic report is mandatory before interpreting any posteriors. We apply the five-threshold battery from the cheatsheet to every fit.

Why run the full five-threshold diagnostic battery on every fit? The WBA is fitted separately to three agent types. A subtle geometry issue — say, the two weight parameters trading off against each other — might appear only when fitting the Self-Focused agent, not the Balanced one. Checking all fits individually costs little and prevents one pathological fit from contaminating downstream comparisons.

check_diagnostics <- function(fit, label) {

# 1. Stan-level divergences, R-hat, tree-depth

fit$cmdstan_diagnose()

# 2. Tabular summary: flag parameters outside threshold

smry <- fit$summary() |>

filter(!is.na(rhat)) |>

mutate(

rhat_ok = rhat < 1.01,

ess_b_ok = ess_bulk > 400,

ess_t_ok = ess_tail > 400

)

n_fail <- smry |>

filter(!rhat_ok | !ess_b_ok | !ess_t_ok) |>

nrow()

cat("\n---", label, "---\n")

cat("Parameters failing thresholds:", n_fail,

"out of", nrow(smry), "\n")

if (n_fail > 0) {

smry |>

filter(!rhat_ok | !ess_b_ok | !ess_t_ok) |>

dplyr::select(variable, mean, rhat, ess_bulk, ess_tail) |>

print()

}

invisible(smry)

}

# Proportional agent diagnostics

check_diagnostics(fit_prop_balanced, "PBA — Balanced")## Checking sampler transitions treedepth.

## Treedepth satisfactory for all transitions.

##

## Checking sampler transitions for divergences.

## No divergent transitions found.

##

## Checking E-BFMI - sampler transitions HMC potential energy.

## E-BFMI satisfactory.

##

## Rank-normalized split effective sample size satisfactory for all parameters.

##

## Rank-normalized split R-hat values satisfactory for all parameters.

##

## Processing complete, no problems detected.

##

## --- PBA — Balanced ---

## Parameters failing thresholds: 0 out of 543## Checking sampler transitions treedepth.

## Treedepth satisfactory for all transitions.

##

## Checking sampler transitions for divergences.

## No divergent transitions found.

##

## Checking E-BFMI - sampler transitions HMC potential energy.

## E-BFMI satisfactory.

##

## Rank-normalized split effective sample size satisfactory for all parameters.

##

## Rank-normalized split R-hat values satisfactory for all parameters.

##

## Processing complete, no problems detected.

##

## --- PBA — Self-Focused ---

## Parameters failing thresholds: 0 out of 543## Checking sampler transitions treedepth.

## Treedepth satisfactory for all transitions.

##

## Checking sampler transitions for divergences.

## No divergent transitions found.

##

## Checking E-BFMI - sampler transitions HMC potential energy.

## E-BFMI satisfactory.

##

## Rank-normalized split effective sample size satisfactory for all parameters.

##

## Rank-normalized split R-hat values satisfactory for all parameters.

##

## Processing complete, no problems detected.

##

## --- PBA — Socially-Influenced ---

## Parameters failing thresholds: 0 out of 543# Weighted agent diagnostics (the model with free parameters)

check_diagnostics(fit_weighted_balanced, "WBA — Balanced")## Checking sampler transitions treedepth.

## Treedepth satisfactory for all transitions.

##

## Checking sampler transitions for divergences.

## No divergent transitions found.

##

## Checking E-BFMI - sampler transitions HMC potential energy.

## E-BFMI satisfactory.

##

## Rank-normalized split effective sample size satisfactory for all parameters.

##

## Rank-normalized split R-hat values satisfactory for all parameters.

##

## Processing complete, no problems detected.

##

## --- WBA — Balanced ---

## Parameters failing thresholds: 0 out of 545## Checking sampler transitions treedepth.

## Treedepth satisfactory for all transitions.

##

## Checking sampler transitions for divergences.

## No divergent transitions found.

##

## Checking E-BFMI - sampler transitions HMC potential energy.

## E-BFMI satisfactory.

##

## Rank-normalized split effective sample size satisfactory for all parameters.

##

## Rank-normalized split R-hat values satisfactory for all parameters.

##

## Processing complete, no problems detected.

##

## --- WBA — Self-Focused ---

## Parameters failing thresholds: 0 out of 545## Checking sampler transitions treedepth.

## Treedepth satisfactory for all transitions.

##

## Checking sampler transitions for divergences.

## No divergent transitions found.

##

## Checking E-BFMI - sampler transitions HMC potential energy.

## E-BFMI satisfactory.

##

## Rank-normalized split effective sample size satisfactory for all parameters.

##

## Rank-normalized split R-hat values satisfactory for all parameters.

##

## Processing complete, no problems detected.

##

## --- WBA — Socially-Influenced ---

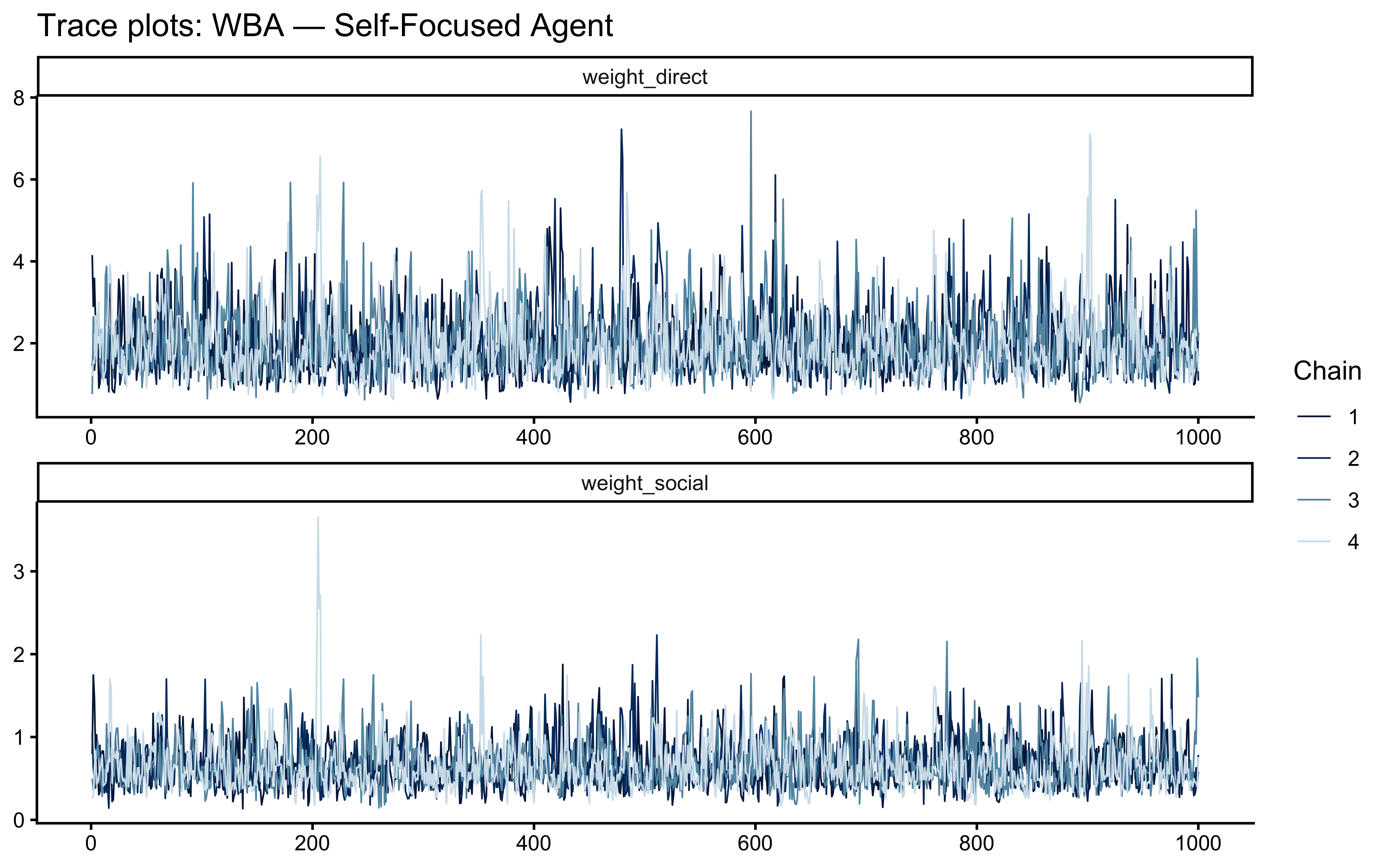

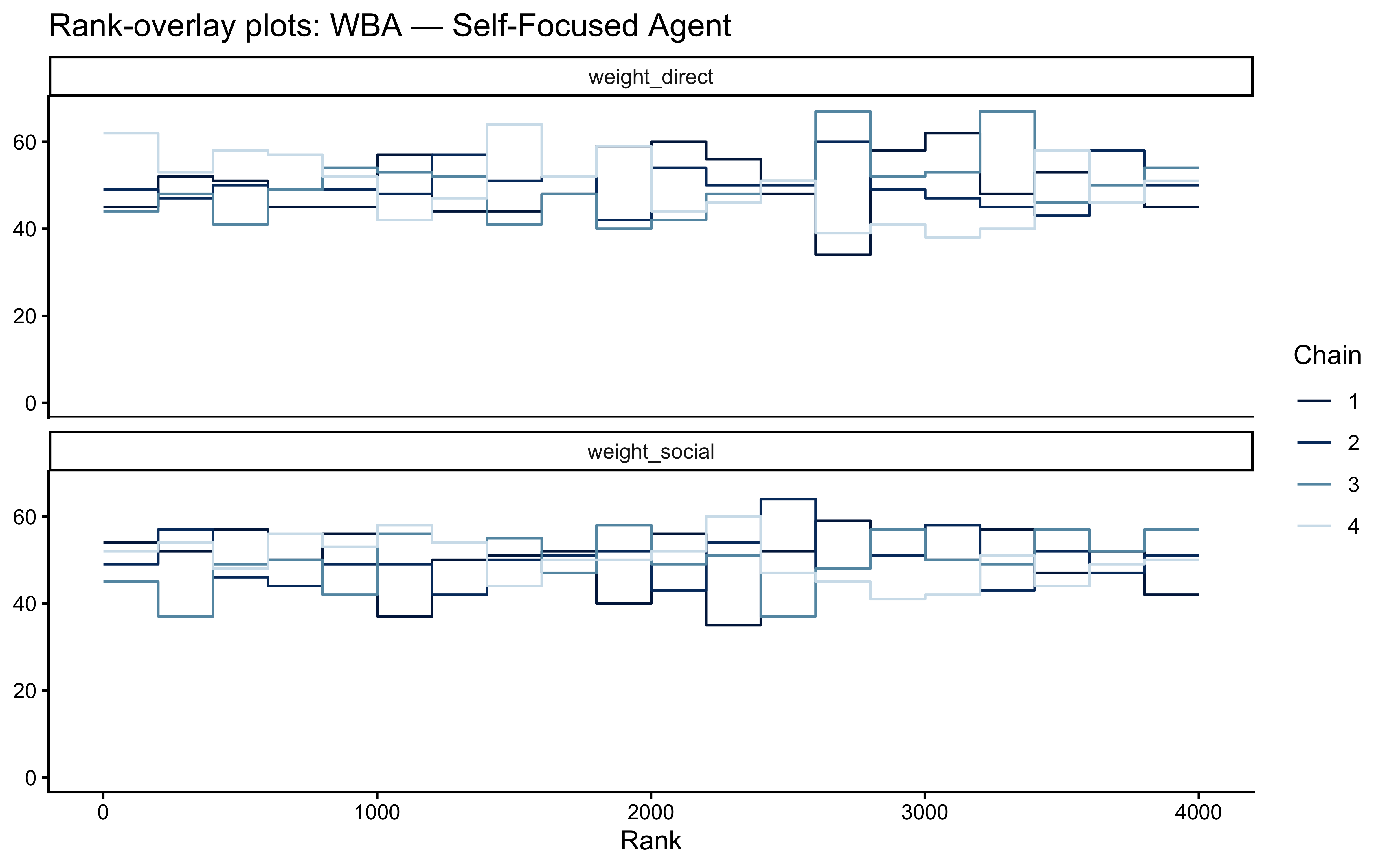

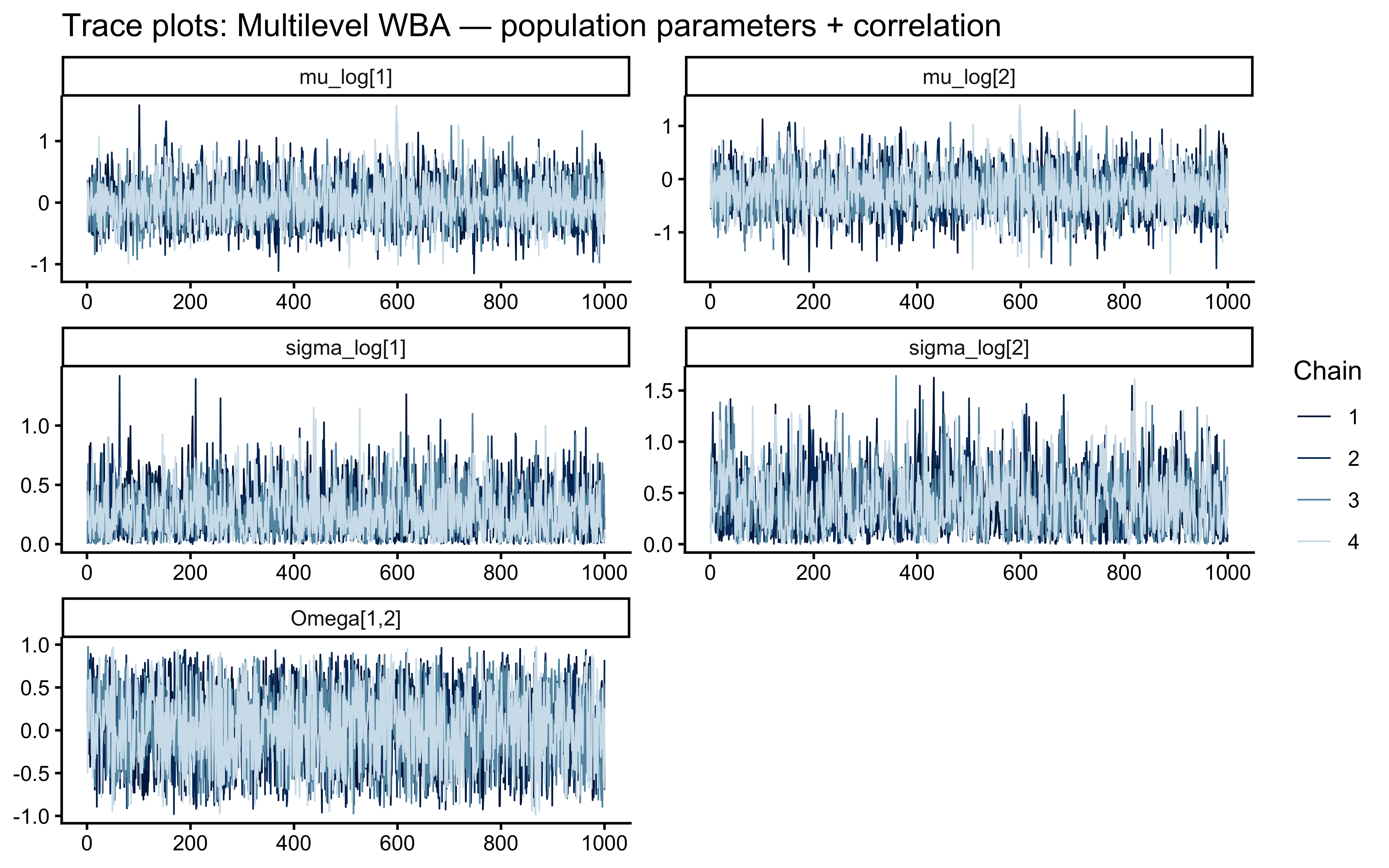

## Parameters failing thresholds: 0 out of 545# MCMC trace and rank-overlay plots for the Self-Focused WBA fit

bayesplot::mcmc_trace(

fit_weighted_self_focused$draws(c("weight_direct", "weight_social")),

facet_args = list(ncol = 1)

) + labs(title = "Trace plots: WBA — Self-Focused Agent")

bayesplot::mcmc_rank_overlay(

fit_weighted_self_focused$draws(c("weight_direct", "weight_social")),

facet_args = list(ncol = 1)

) + labs(title = "Rank-overlay plots: WBA — Self-Focused Agent")

Thresholds (cheatsheet):

| Diagnostic | Threshold | Interpretation if violated |

|---|---|---|

| Divergences | = 0 | Posterior geometry pathology; reparameterise first |

| \(\hat{R}\) (rank-norm.) | < 1.01 | Chains not mixed; increase warmup or reparameterise |

| Bulk ESS | > 400 | Insufficient central-tendency samples |

| Tail ESS | > 400 | Insufficient tail samples; quantile estimates unreliable |

| E-BFMI | > 0.2 | Energy-trajectory pathology; check priors |

If any threshold fails, treat the geometry, not the symptoms. Do not increase adapt_delta or max_treedepth without first diagnosing the cause via mcmc_scatter() pairwise plots and inspecting for funnel geometry.

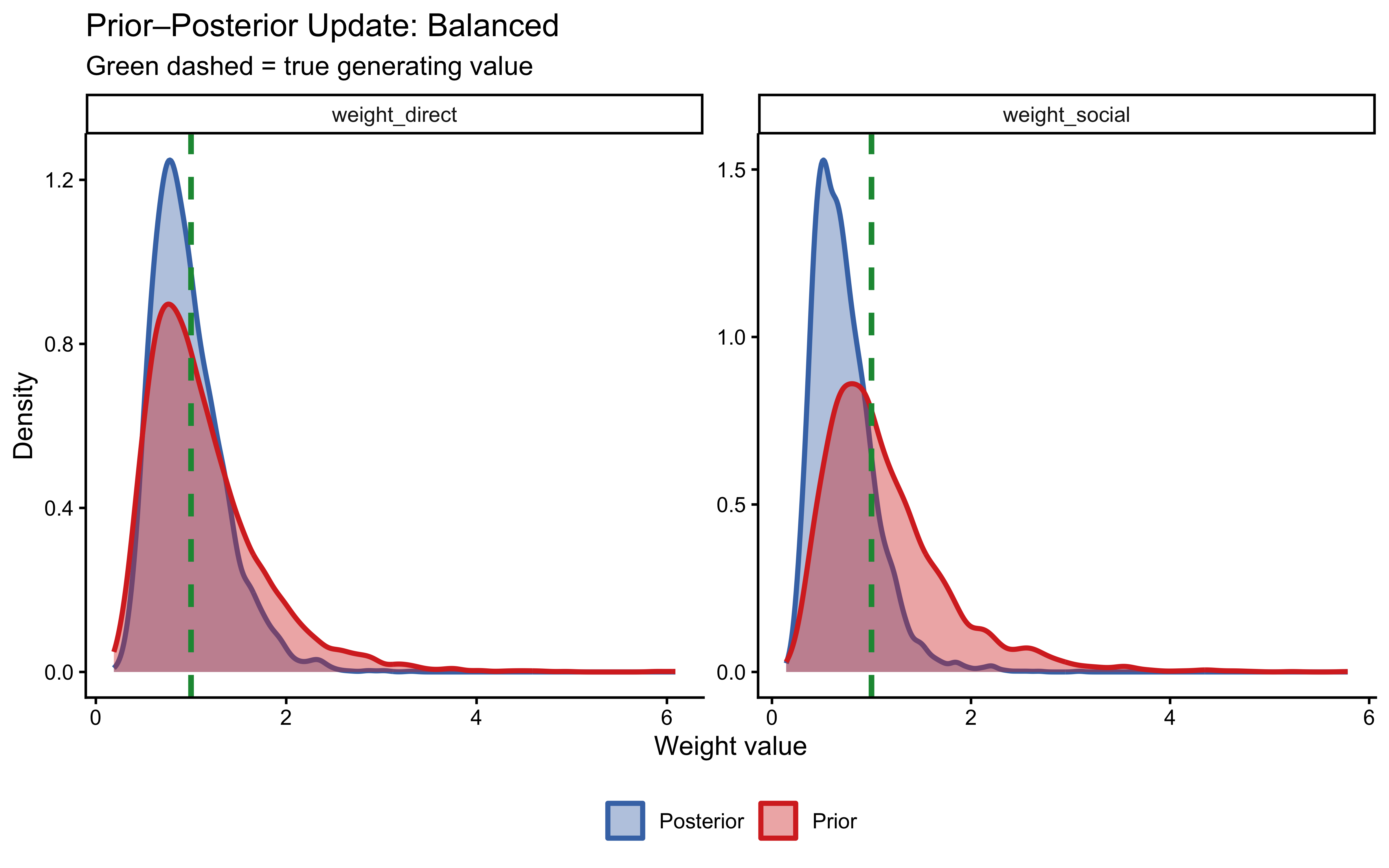

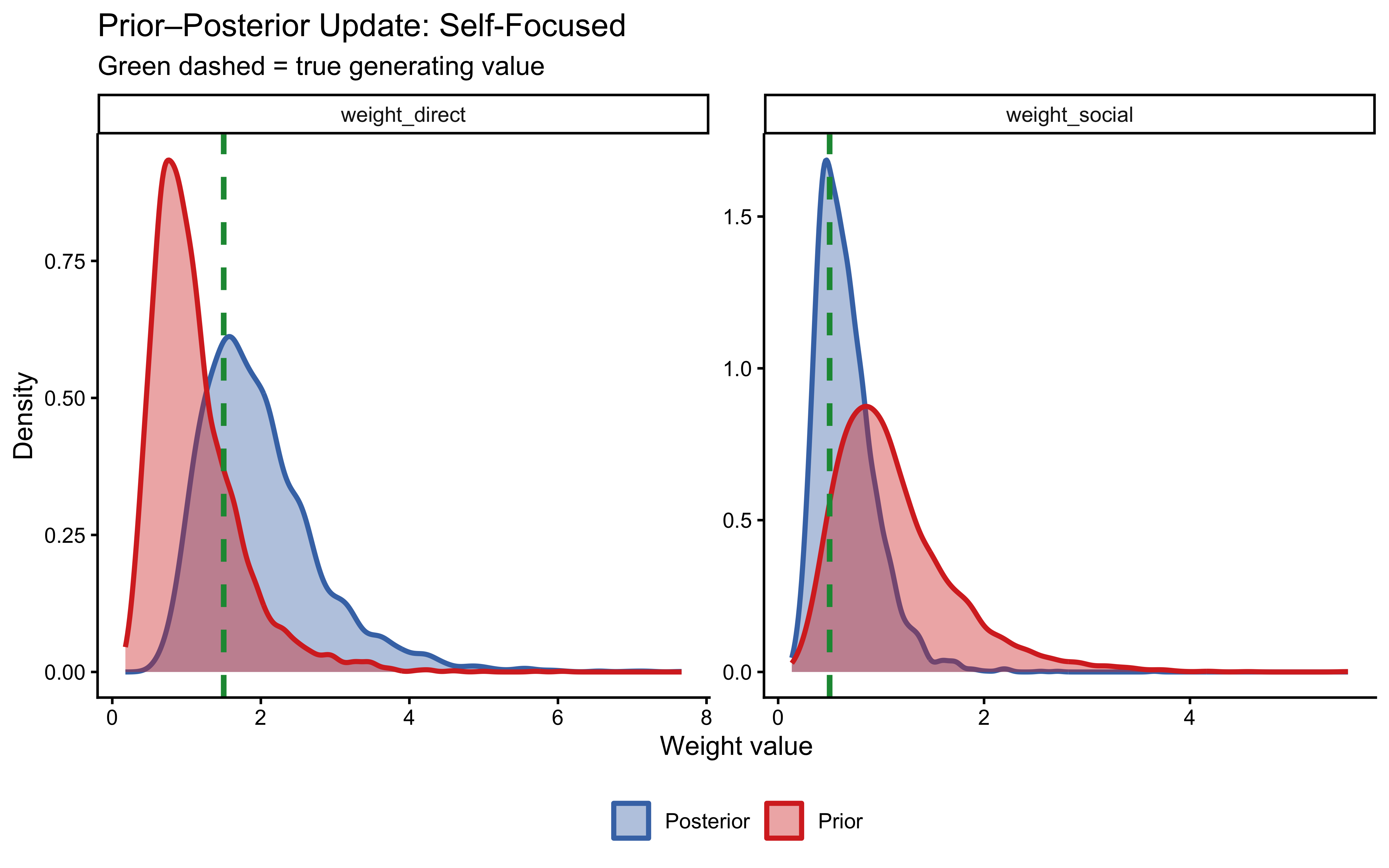

10.12.3 Prior-Posterior Update Visualisation

plot_prior_posterior <- function(fit, true_wd = NULL, true_ws = NULL,

label = "") {

n_prior <- 4000

prior_draws <- tibble(

weight_direct = rlnorm(n_prior, 0, 0.5),

weight_social = rlnorm(n_prior, 0, 0.5),

source = "Prior"

)

post_draws <- as_draws_df(fit$draws(c("weight_direct", "weight_social"))) |>

as_tibble() |>

dplyr::select(weight_direct, weight_social) |>

mutate(source = "Posterior")

combined <- bind_rows(prior_draws, post_draws) |>

pivot_longer(c(weight_direct, weight_social),

names_to = "parameter", values_to = "value")

true_vals <- if (!is.null(true_wd)) {

tibble(

parameter = c("weight_direct", "weight_social"),

true_value = c(true_wd, true_ws)

)

} else NULL

p <- ggplot(combined, aes(x = value, fill = source, colour = source)) +

geom_density(alpha = 0.4, linewidth = 1) +

facet_wrap(~ parameter, scales = "free") +

scale_fill_manual(values = c("Prior" = "#d73027", "Posterior" = "#4575b4")) +

scale_colour_manual(values = c("Prior" = "#d73027", "Posterior" = "#4575b4")) +

labs(

title = paste("Prior–Posterior Update:", label),

x = "Weight value",

y = "Density",

fill = NULL, colour = NULL

) +

theme(legend.position = "bottom")

if (!is.null(true_vals)) {

p <- p + geom_vline(

data = true_vals,

aes(xintercept = true_value),

colour = "#1a9641", linewidth = 1.1, linetype = "dashed"

) +

labs(subtitle = "Green dashed = true generating value")

}

p

}

plot_prior_posterior(fit_weighted_balanced,

true_wd = 1.0, true_ws = 1.0, label = "Balanced")

plot_prior_posterior(fit_weighted_self_focused,

true_wd = 1.5, true_ws = 0.5, label = "Self-Focused")

plot_prior_posterior(fit_weighted_social,

true_wd = 0.7, true_ws = 2.0, label = "Socially-Influenced")

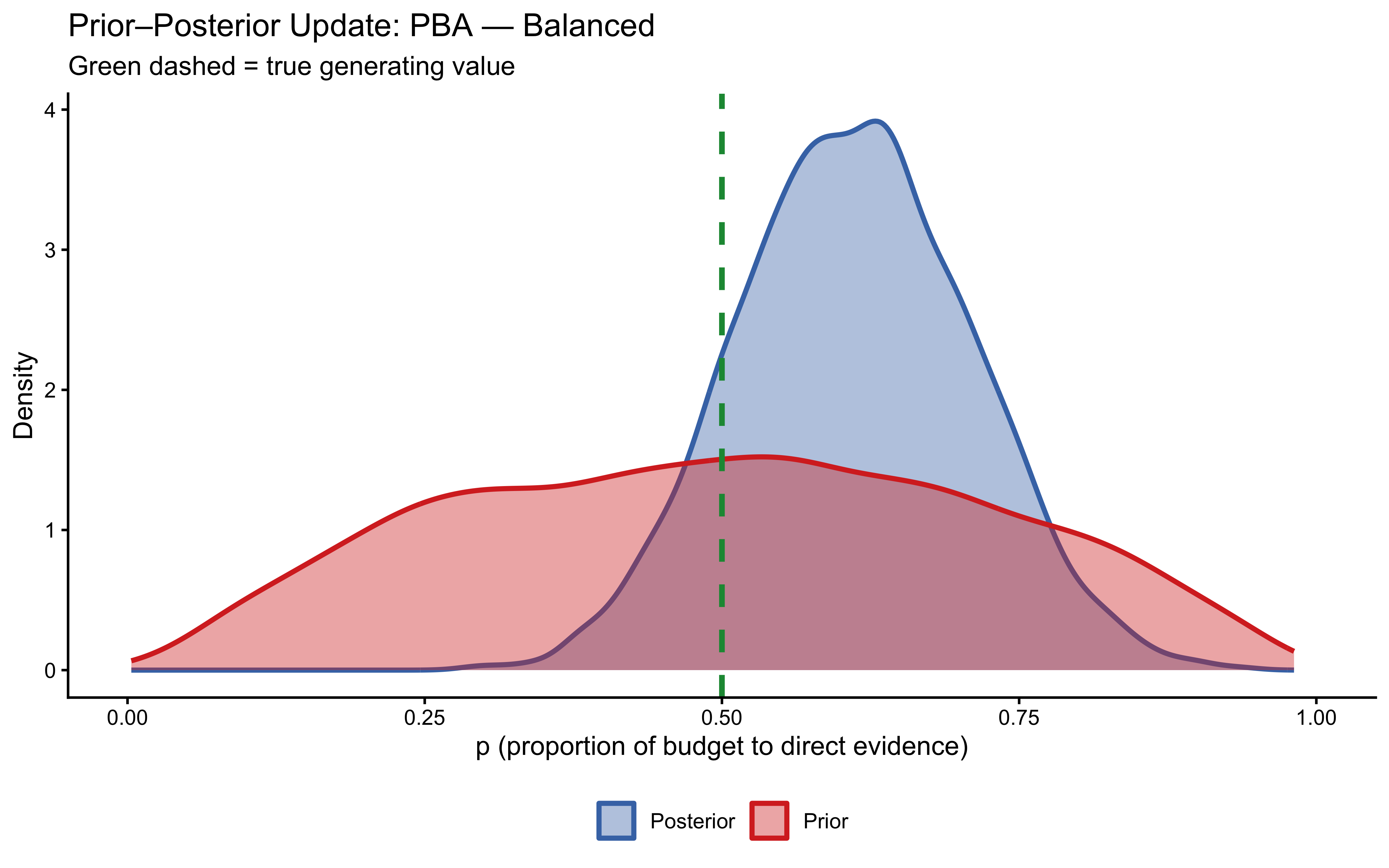

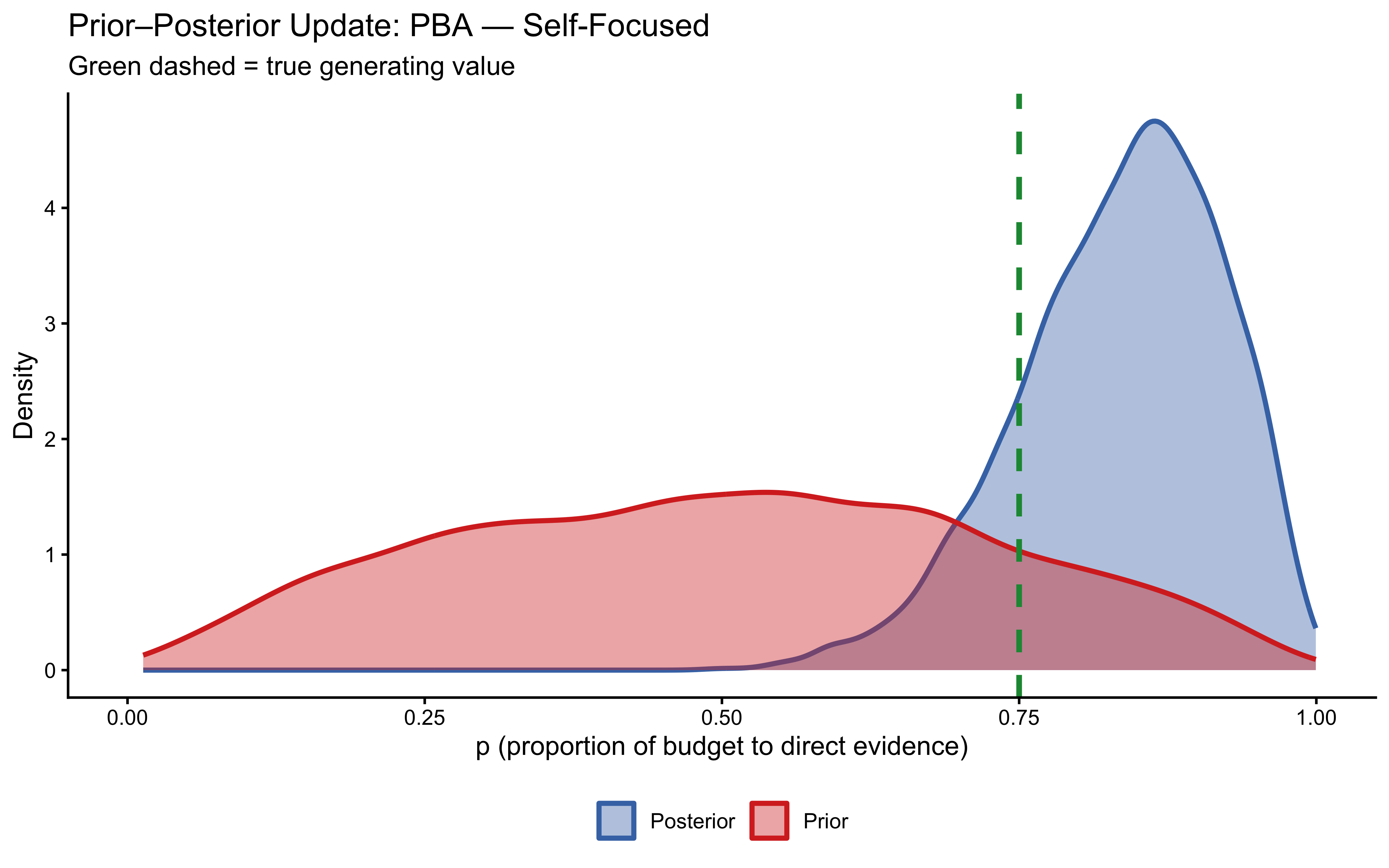

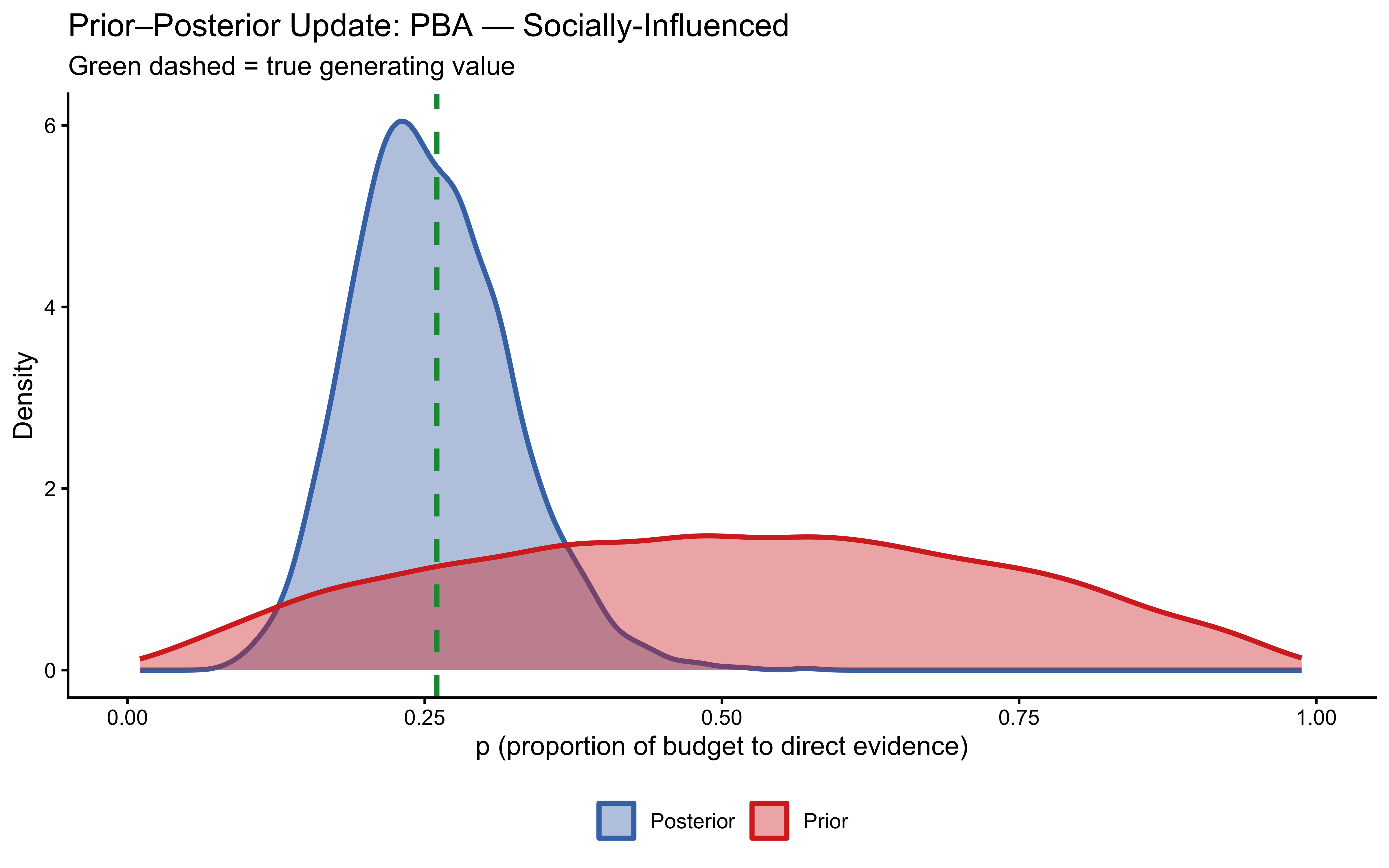

# ── PBA prior–posterior update ────────────────────────────────────────

plot_prior_posterior_pba <- function(fit, true_p = NULL, label = "") {

n_pr <- 4000

prior_draws <- tibble(p = rbeta(n_pr, 2, 2), source = "Prior")

post_draws <- as_draws_df(fit$draws("p")) |>

as_tibble() |> dplyr::select(p) |> mutate(source = "Posterior")

combined <- bind_rows(prior_draws, post_draws)

pl <- ggplot(combined, aes(x = p, fill = source, colour = source)) +

geom_density(alpha = 0.4, linewidth = 1) +

scale_fill_manual(values = c("Prior" = "#d73027", "Posterior" = "#4575b4")) +

scale_colour_manual(values = c("Prior" = "#d73027", "Posterior" = "#4575b4")) +

coord_cartesian(xlim = c(0, 1)) +

labs(title = paste("Prior–Posterior Update: PBA —", label),

x = "p (proportion of budget to direct evidence)",

y = "Density", fill = NULL, colour = NULL) +

theme(legend.position = "bottom")

if (!is.null(true_p)) {

pl <- pl +

geom_vline(xintercept = true_p, colour = "#1a9641",

linewidth = 1.1, linetype = "dashed") +

labs(subtitle = "Green dashed = true generating value")

}

pl

}

plot_prior_posterior_pba(fit_prop_balanced, true_p = 0.5, label = "Balanced")

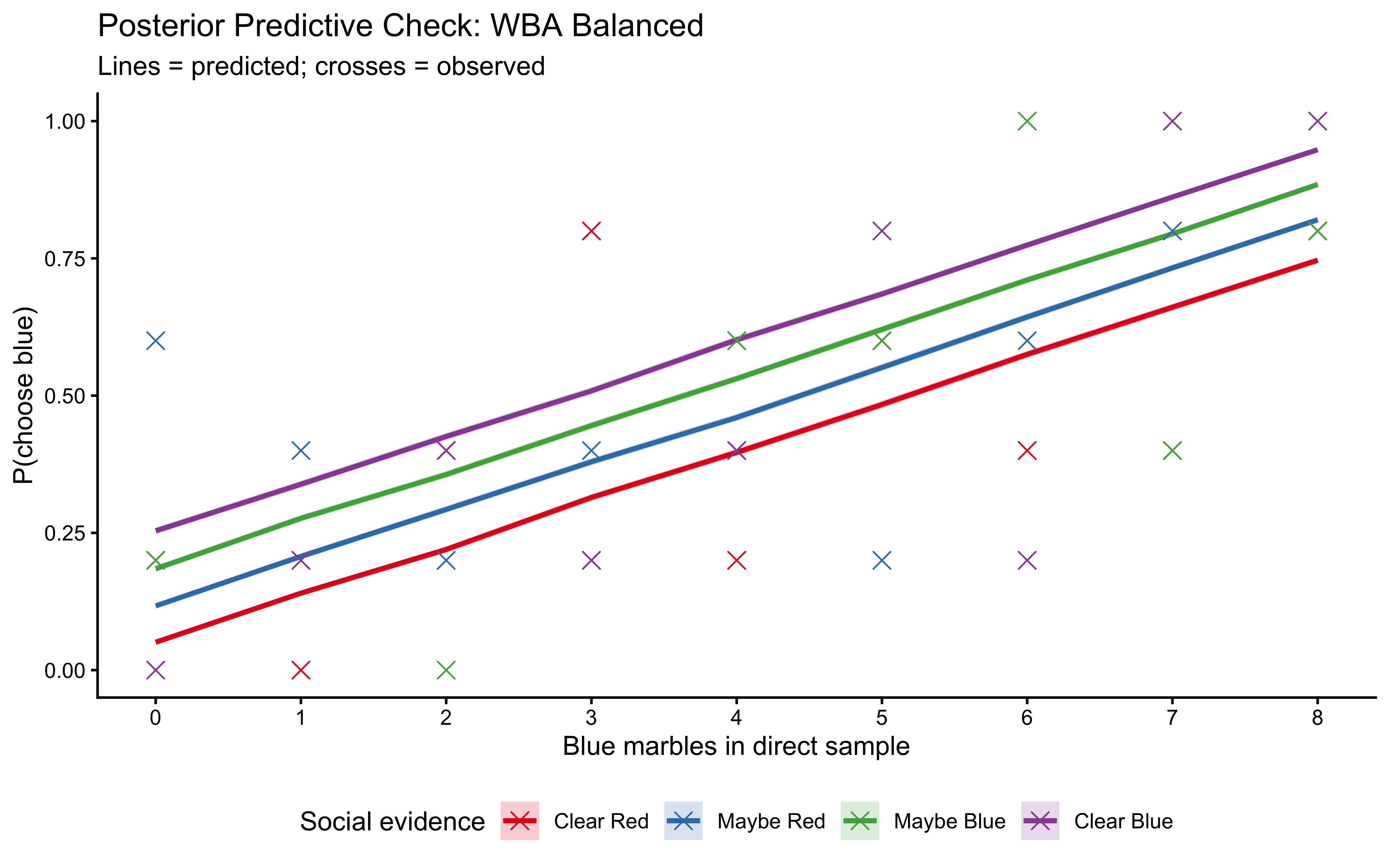

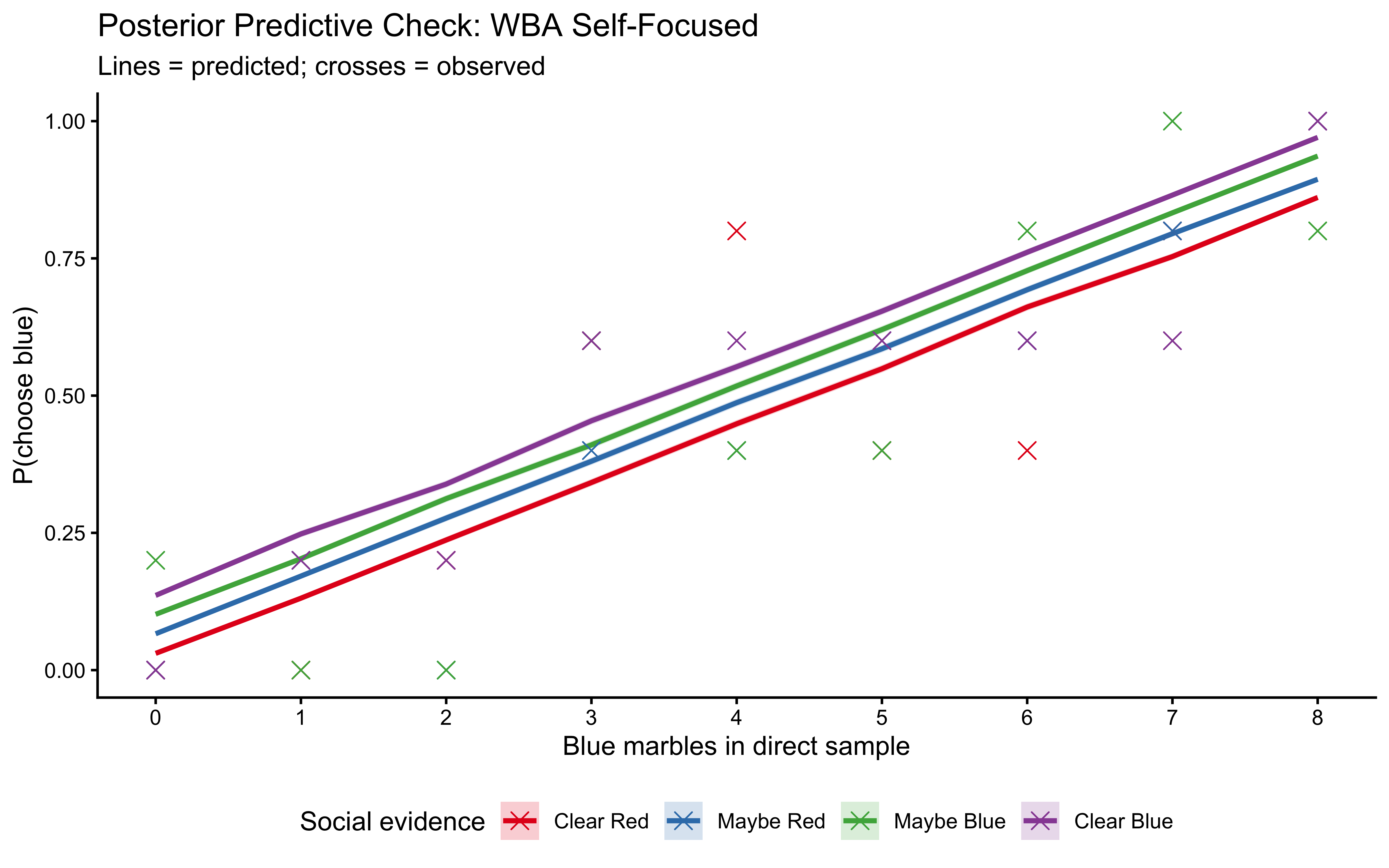

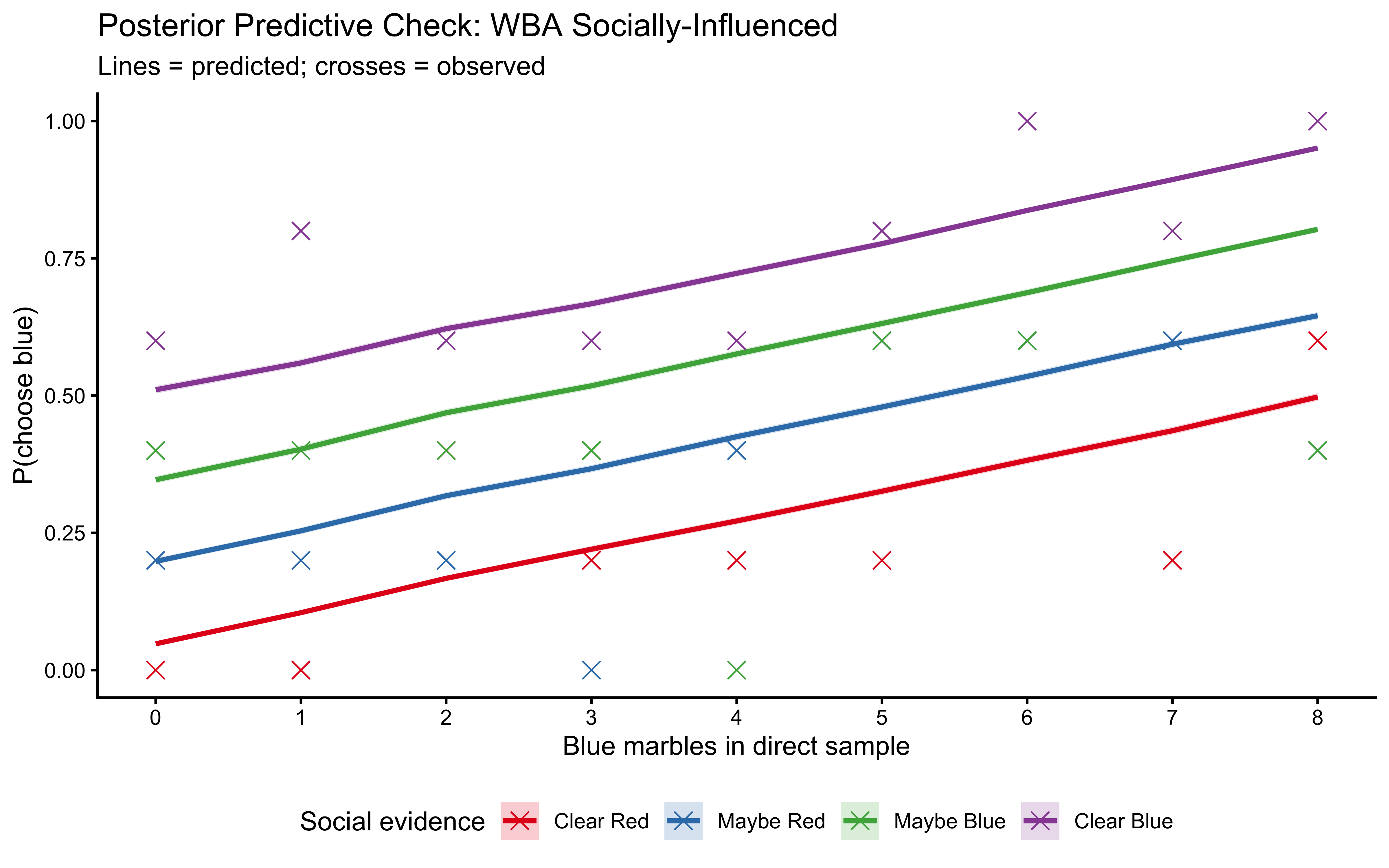

10.12.4 Posterior Predictive Checks (In-Sample)

Standard PPCs reuse the training data and therefore represent an optimistic measure of fit. We report them here for completeness but they are neither necessary nor sufficient: the rigorous out-of-sample check is LOO-PIT, presented in the next section.

plot_ppc <- function(fit, sim_df, param_name = "posterior_pred", label = "") {

pred_samples <- fit$draws(param_name, format = "data.frame") |>

dplyr::select(-.chain, -.iteration, -.draw) |>

pivot_longer(everything(), names_to = "obs_id", values_to = "pred") |>

mutate(obs_id = parse_number(obs_id))

obs_with_id <- sim_df |>

mutate(obs_id = row_number()) |>

dplyr::select(obs_id, blue1, blue2)

pred_summary <- pred_samples |>

left_join(obs_with_id, by = "obs_id") |>

group_by(blue1, blue2) |>

summarise(

p_pred = mean(pred),

se_pred = sqrt(p_pred * (1 - p_pred) / n()),

.groups = "drop"

) |>

mutate(

lower = p_pred - 1.96 * se_pred,

upper = p_pred + 1.96 * se_pred,

social_evidence = factor(

blue2, levels = 0:3,

labels = c("Clear Red", "Maybe Red", "Maybe Blue", "Clear Blue")

)

)

p_obs <- sim_df |>

group_by(blue1, blue2) |>

summarise(p_obs = mean(choice), .groups = "drop") |>

mutate(

social_evidence = factor(

blue2, levels = 0:3,

labels = c("Clear Red", "Maybe Red", "Maybe Blue", "Clear Blue")

)

)

ggplot(pred_summary,

aes(x = blue1, colour = social_evidence, group = social_evidence)) +

geom_ribbon(aes(ymin = lower, ymax = upper,

fill = social_evidence), alpha = 0.2, colour = NA) +

geom_line(aes(y = p_pred), linewidth = 1) +

geom_point(data = p_obs, aes(y = p_obs), shape = 4, size = 3) +

scale_x_continuous(breaks = 0:8) +

scale_colour_brewer(palette = "Set1") +

scale_fill_brewer(palette = "Set1") +

coord_cartesian(ylim = c(0, 1)) +

labs(

title = paste("Posterior Predictive Check:", label),

subtitle = "Lines = predicted; crosses = observed",

x = "Blue marbles in direct sample",

y = "P(choose blue)",

colour = "Social evidence", fill = "Social evidence"

) +

theme(legend.position = "bottom")

}

plot_ppc(fit_weighted_balanced, balanced_data, label = "WBA Balanced")

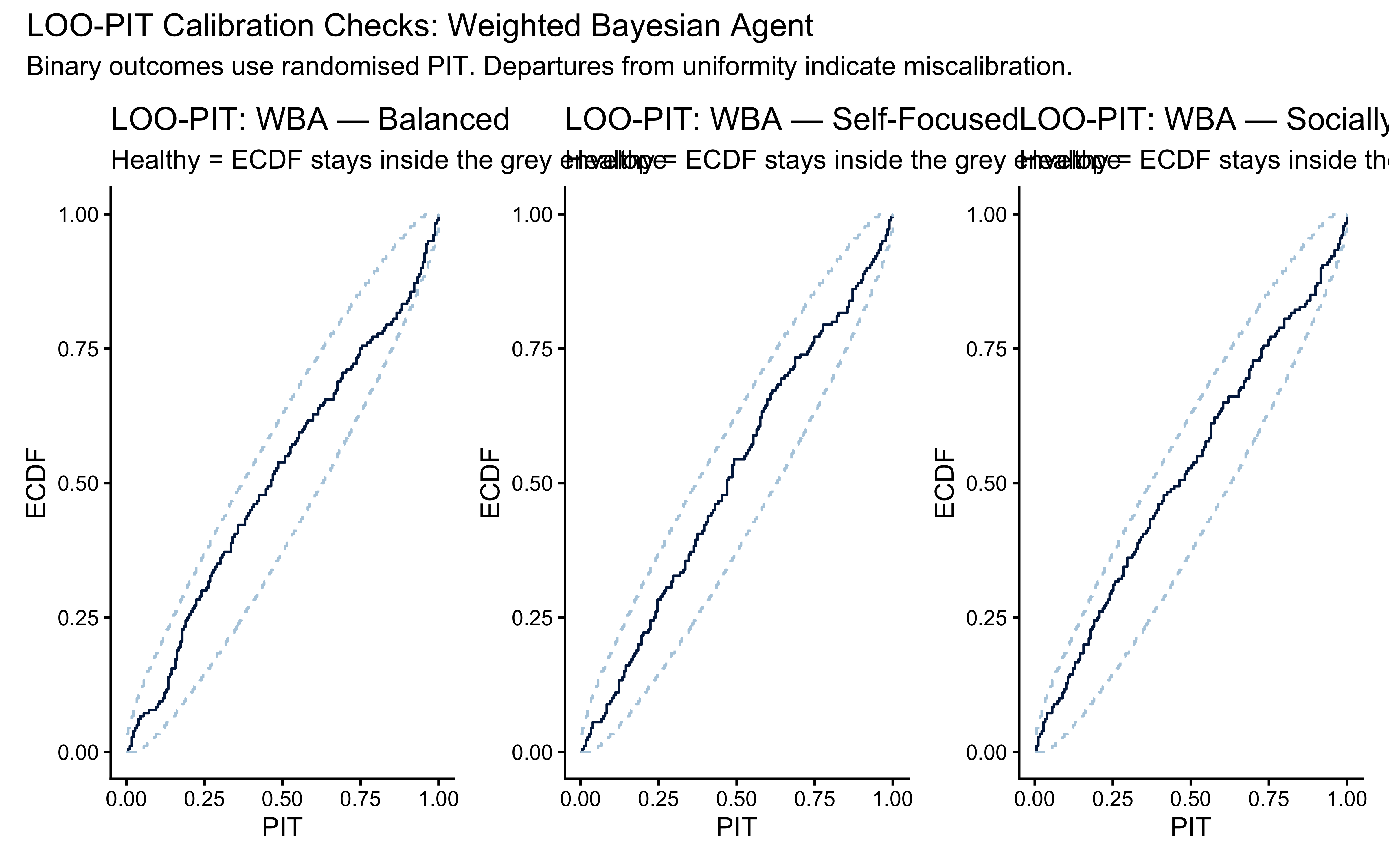

10.12.5 LOO-PIT: The Rigorous Out-of-Sample Check

Standard PPCs use training data and thus overestimate predictive accuracy. Leave-One-Out Probability Integral Transforms (LOO-PIT) evaluate calibration on effectively held-out data (Gabry et al. 2019). For a well-calibrated model, the LOO-PIT histogram should be uniform; systematic deviations (U-shaped, hump-shaped, skewed) indicate specific failure modes.

Why LOO-PIT in addition to standard PPCs? Standard PPCs use the training data and therefore overestimate fit. LOO-PIT evaluates calibration on effectively held-out observations. A model can pass PPCs while being systematically miscalibrated — LOO-PIT detects this by checking whether the model’s predictive uncertainty matches reality trial by trial.

compute_loo_pit <- function(fit, data_list, label) {

log_lik <- fit$draws("log_lik", format = "matrix")

loo_obj <- loo(log_lik)

# Pareto-k escalation protocol (cheatsheet)

k_vals <- loo_obj$diagnostics$pareto_k

n_high <- sum(k_vals >= 0.7)

n_mod <- sum(k_vals >= 0.5 & k_vals < 0.7)

cat("\n---", label, "---\n")

cat("Pareto-k: k < 0.5:", sum(k_vals < 0.5), "| 0.5 <= k < 0.7:", n_mod,

"| k >= 0.7:", n_high, "\n")

if (n_high > 0) {

cat(" -> Applying loo_moment_match() for k >= 0.7 observations\n")

# loo_obj <- loo_moment_match(fit, loo = loo_obj) # uncomment if needed

}

loo_obj

}

loo_simple_balanced <- compute_loo_pit(fit_simple_balanced, stan_data_balanced,

"SBA Balanced")##

## --- SBA Balanced ---

## Pareto-k: k < 0.5: 0 | 0.5 <= k < 0.7: 0 | k >= 0.7: 180

## -> Applying loo_moment_match() for k >= 0.7 observationsloo_simple_self <- compute_loo_pit(fit_simple_self_focused, stan_data_self_focused,

"SBA Self-Focused")##

## --- SBA Self-Focused ---

## Pareto-k: k < 0.5: 0 | 0.5 <= k < 0.7: 0 | k >= 0.7: 180

## -> Applying loo_moment_match() for k >= 0.7 observationsloo_simple_social <- compute_loo_pit(fit_simple_social, stan_data_socially_influenced,

"SBA Socially-Influenced")##

## --- SBA Socially-Influenced ---

## Pareto-k: k < 0.5: 0 | 0.5 <= k < 0.7: 0 | k >= 0.7: 180

## -> Applying loo_moment_match() for k >= 0.7 observations##

## --- PBA Balanced ---

## Pareto-k: k < 0.5: 180 | 0.5 <= k < 0.7: 0 | k >= 0.7: 0##

## --- PBA Self-Focused ---

## Pareto-k: k < 0.5: 180 | 0.5 <= k < 0.7: 0 | k >= 0.7: 0loo_prop_social <- compute_loo_pit(fit_prop_social, stan_data_socially_influenced,

"PBA Socially-Influenced")##

## --- PBA Socially-Influenced ---

## Pareto-k: k < 0.5: 180 | 0.5 <= k < 0.7: 0 | k >= 0.7: 0##

## --- WBA Balanced ---

## Pareto-k: k < 0.5: 180 | 0.5 <= k < 0.7: 0 | k >= 0.7: 0loo_weighted_self <- compute_loo_pit(fit_weighted_self_focused, stan_data_self_focused,

"WBA Self-Focused")##

## --- WBA Self-Focused ---

## Pareto-k: k < 0.5: 180 | 0.5 <= k < 0.7: 0 | k >= 0.7: 0loo_weighted_social <- compute_loo_pit(fit_weighted_social, stan_data_socially_influenced,

"WBA Socially-Influenced")##

## --- WBA Socially-Influenced ---

## Pareto-k: k < 0.5: 180 | 0.5 <= k < 0.7: 0 | k >= 0.7: 0# Randomised PIT for binary choices (same approach as Ch. 7)

compute_randomized_pit <- function(y, loo_obj, seed = 101) {

set.seed(seed)

p_obs <- exp(loo_obj$pointwise[, "elpd_loo"])

pit_vals <- ifelse(y == 1,

runif(length(y), 1 - p_obs, 1),

runif(length(y), 0, p_obs))

return(pit_vals)

}

pit_w_balanced <- compute_randomized_pit(stan_data_balanced$choice,

loo_weighted_balanced)

pit_w_self <- compute_randomized_pit(stan_data_self_focused$choice,

loo_weighted_self)

pit_w_social <- compute_randomized_pit(stan_data_socially_influenced$choice,

loo_weighted_social)

p_pit_b <- bayesplot::ppc_pit_ecdf(pit = pit_w_balanced) +

labs(title = "LOO-PIT: WBA — Balanced",

subtitle = "Healthy = ECDF stays inside the grey envelope")

p_pit_s <- bayesplot::ppc_pit_ecdf(pit = pit_w_self) +

labs(title = "LOO-PIT: WBA — Self-Focused",

subtitle = "Healthy = ECDF stays inside the grey envelope")

p_pit_o <- bayesplot::ppc_pit_ecdf(pit = pit_w_social) +

labs(title = "LOO-PIT: WBA — Socially-Influenced",

subtitle = "Healthy = ECDF stays inside the grey envelope")

(p_pit_b | p_pit_s | p_pit_o) +

plot_annotation(

title = "LOO-PIT Calibration Checks: Weighted Bayesian Agent",

subtitle = "Binary outcomes use randomised PIT. Departures from uniformity indicate miscalibration."

)

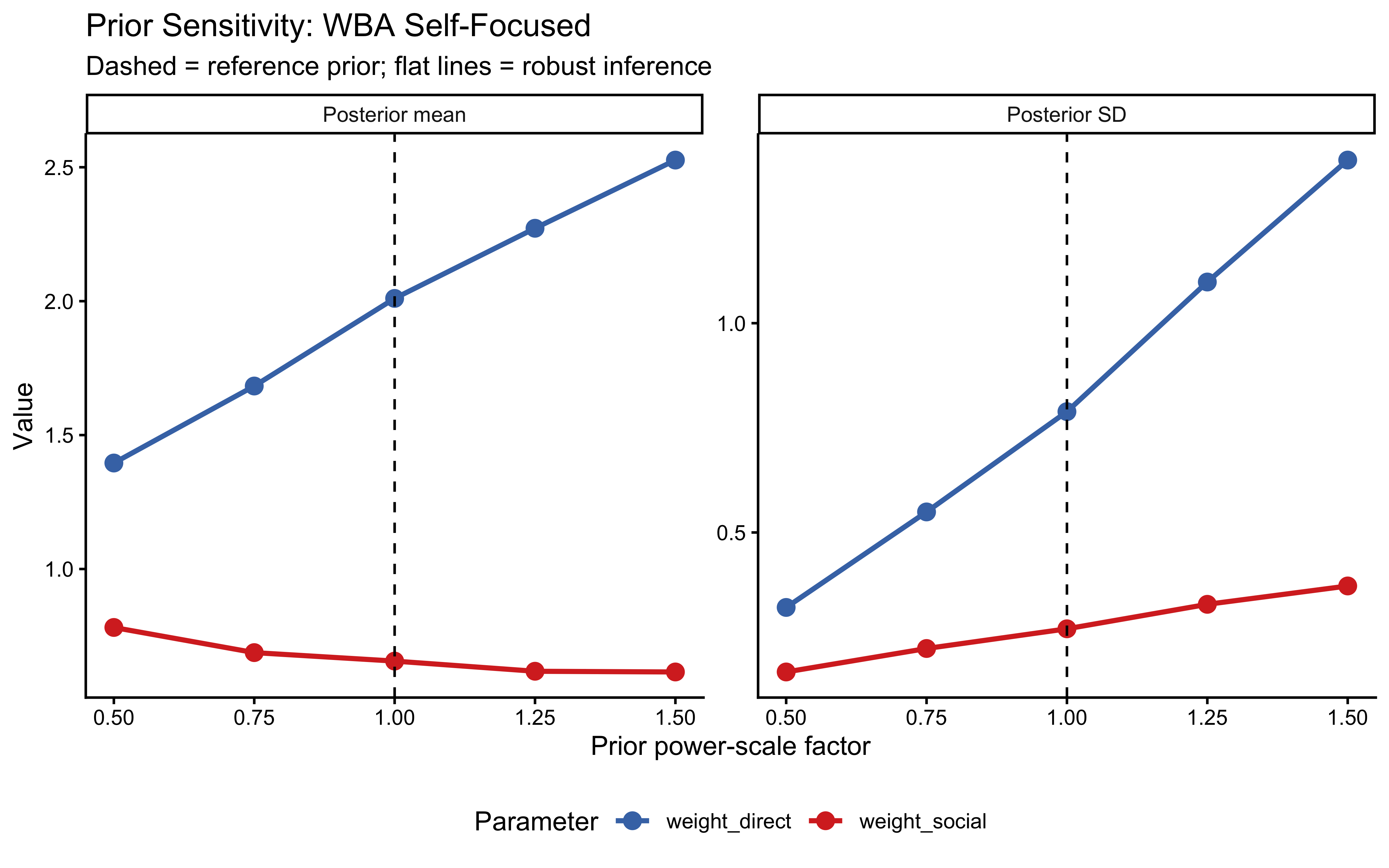

10.12.6 Prior Sensitivity Analysis

We assess how sensitive the WBA posterior is to scaling the prior and likelihood using priorsense::powerscale_sensitivity(). This identifies parameters whose posteriors shift substantially under mild prior perturbation — a sign of insufficient data or a poorly chosen prior.

# powerscale_sensitivity requires a brms or priorsense-compatible fit.

# For raw cmdstanr fits we use the manual power-scaling approach.

manual_sensitivity <- function(fit_ref, data_list, model,

scales = c(0.5, 0.75, 1.0, 1.25, 1.5),

label = "") {

results <- map_dfr(scales, function(s) {

# Power-scale the prior by adjusting the lognormal sdlog

# Doubling sdlog broadens the prior (s < 1 tightens it)

new_sdlog <- 0.5 * s

modified_stan <- gsub(

"lognormal_lpdf(weight_direct | 0, 0.5)",

sprintf("lognormal_lpdf(weight_direct | 0, %.3f)", new_sdlog),

WeightedAgent_stan, fixed = TRUE

)

modified_stan <- gsub(

"lognormal_lpdf(weight_social | 0, 0.5)",

sprintf("lognormal_lpdf(weight_social | 0, %.3f)", new_sdlog),

modified_stan, fixed = TRUE

)

tmp_file <- tempfile(fileext = ".stan")

writeLines(modified_stan, tmp_file)

mod_tmp <- cmdstan_model(tmp_file)

fit_tmp <- mod_tmp$sample(

data = data_list,

seed = 999,

chains = 2,

iter_warmup = 500,

iter_sampling = 500,

refresh = 0

)

draws <- as_draws_df(fit_tmp$draws(c("weight_direct", "weight_social")))

tibble(

scale = s,

wd_mean = mean(draws$weight_direct),

ws_mean = mean(draws$weight_social),

wd_sd = sd(draws$weight_direct),

ws_sd = sd(draws$weight_social)

)

})

results |>

pivot_longer(-scale, names_to = "stat", values_to = "value") |>

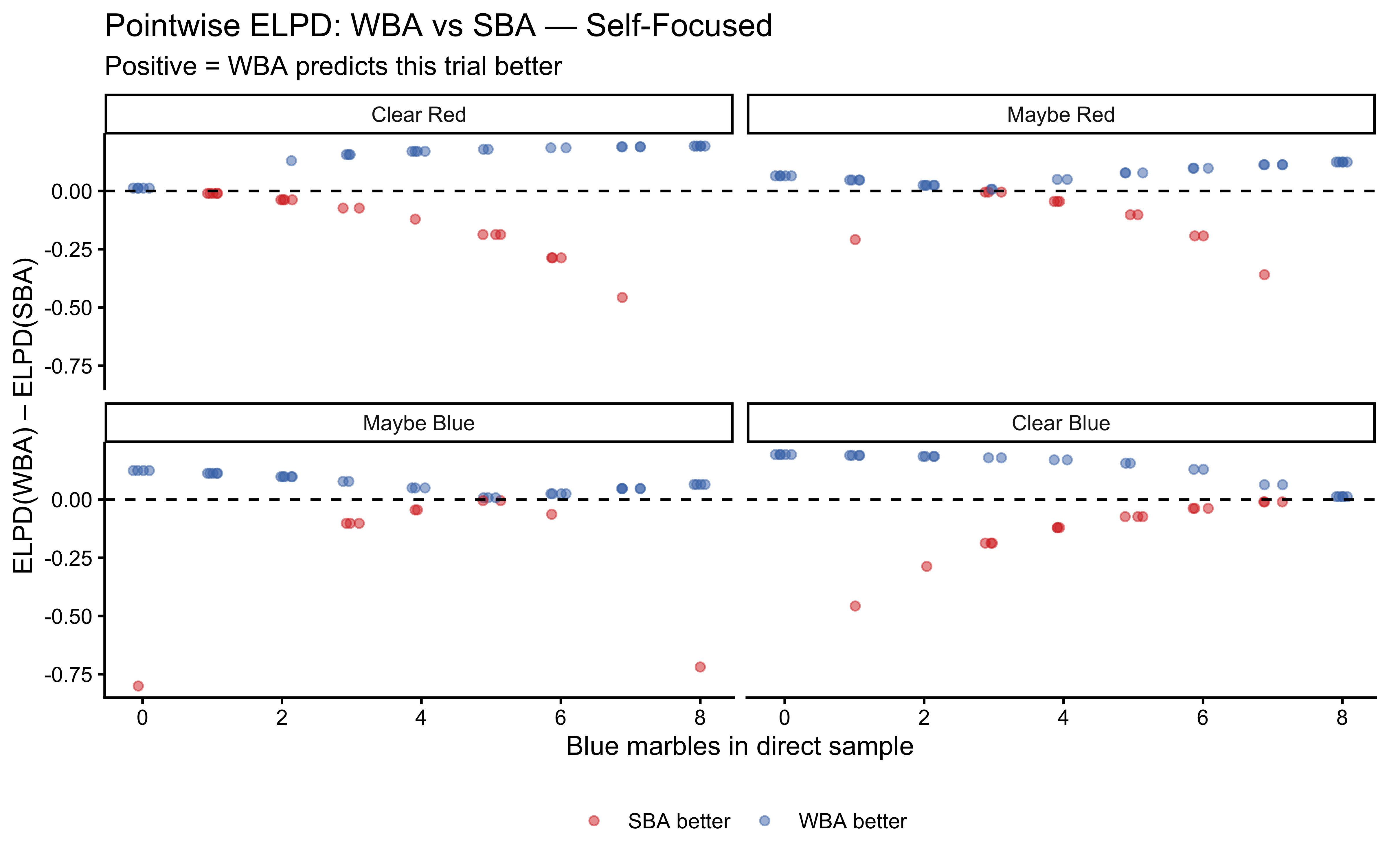

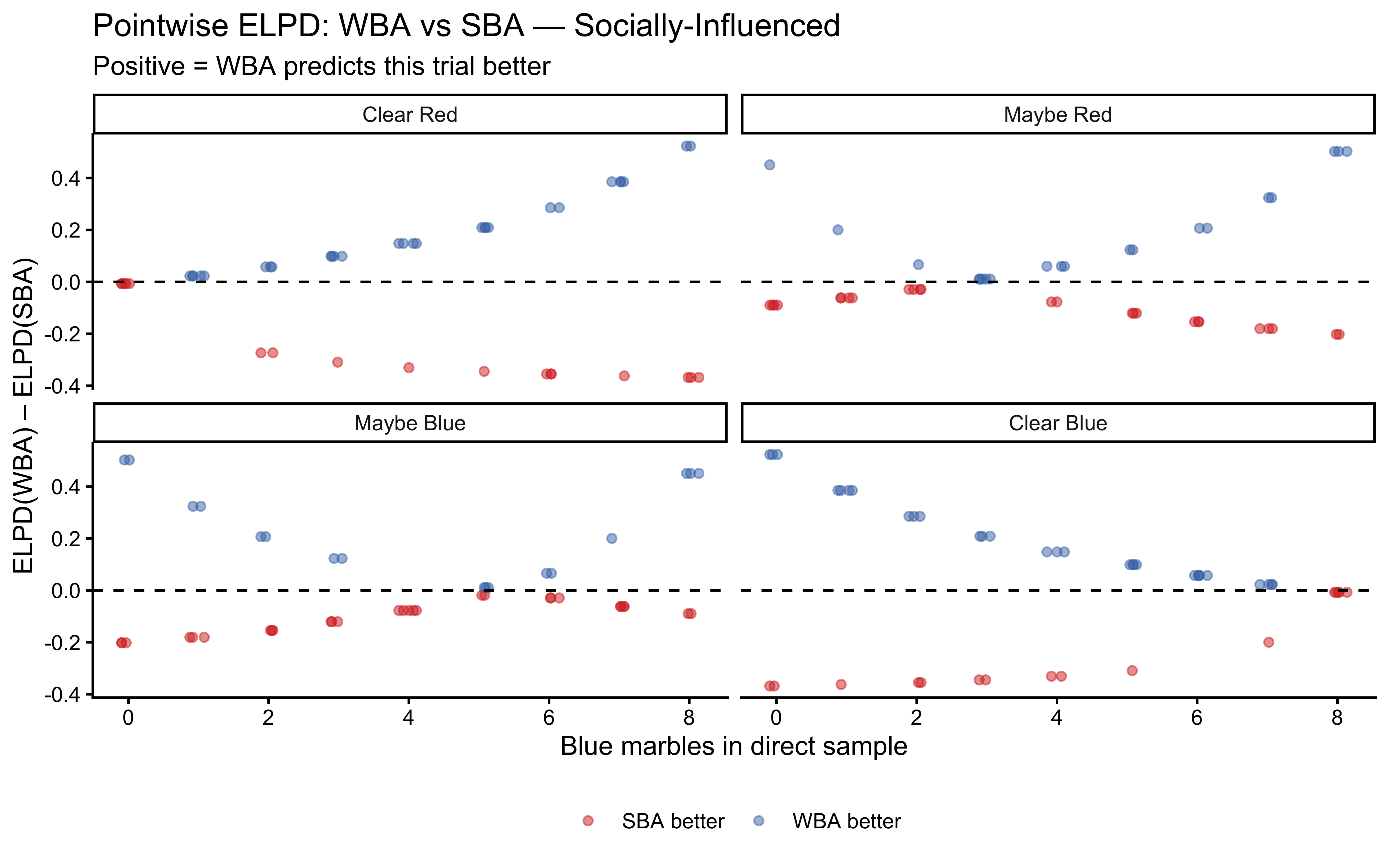

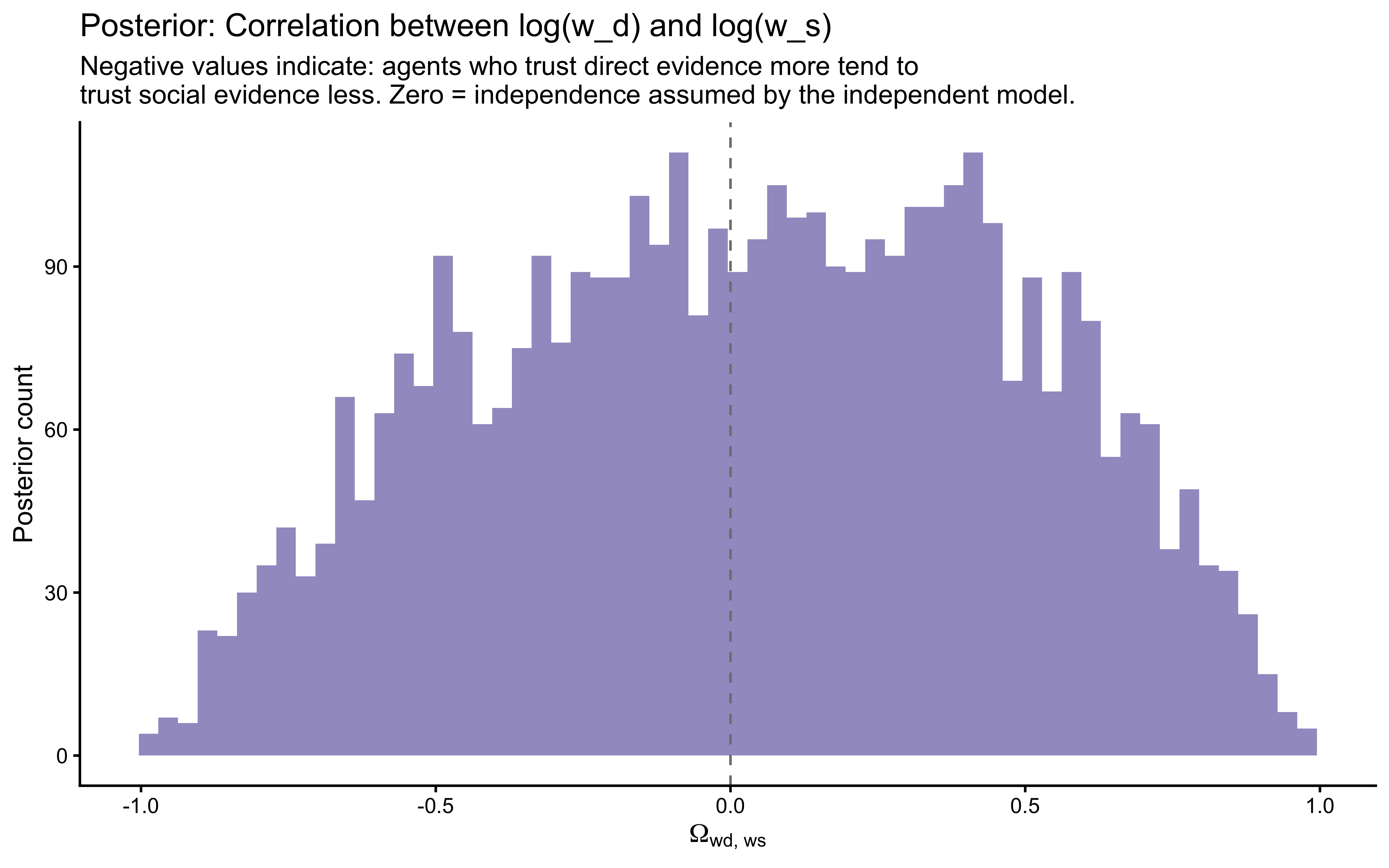

mutate(