Chapter 8 Deciding Between Competing Theories: Model Comparison

📍 Where we are in the Bayesian modeling workflow: Chs. 1–6 built models, validated them, and scaled them to populations. Every model passed a six-phase quality battery before fitting real data. This chapter asks the next question: when two validated models both fit the data, which one better predicts data it has never seen? The answer uses PSIS-LOO cross-validation and requires the validated fits from Ch. 6. The reversal learning design introduced in Ch. 5 and used in Ch. 6 is essential here too — it is the design that makes the two models distinguishable in the first place.

By Chapter 6 we had a working Bayesian workflow: specify a generative model, validate it with SBC and parameter recovery, fit it to data, and diagnose the posterior geometry, both in the case of single agent and hierarchical models. We now face a different question: we rarely have only one possible model of the cognitive processes underlying the observed behaviors.

Sometimes we have different models representing competing theoretical accounts of cognitive processes. For instance, a Gaussian filter model of learning regularities in one’s opponent’s behavior in the Matching Pennies game vs a Theory of Mind model of learning the model used by the opponent vs. an active inference model trying to actively deceive the opponent.

Sometimes we have simpler and more complex versions of the same model: should we assume we equally learn from all feedback (using a reinforcement learning model) or should we assume different learning from positive and negative feedback (using a dual learning rate model)?

In either case, once we have ensured that all models involved pass their individual quality checks, how do we decide which better accounts for the observed data?

There are many ways to compare models, but here we develop a Bayesian predictive approach consistent with our approach in previous chapters. The core argument is that a model should be judged not by how well it explains the data it was trained on, but by how well it predicts data it has never seen. We formalize this as the Expected Log Predictive Density (ELPD), build an efficient computational approximation via PSIS-LOO, and apply the full workflow to the biased and memory agent models from Chapter 6. This approach also gives us a principled way to identify difficult to predict data points (sometimes called influential observations), which can tell us much about the limits of our models and different mechanisms at work in different portions of the data. However, note that focusing on predictive abilities does not directly equate to model most likely to be true! It is a useful pragmatic measure and we should keep it in mind.

As in previous chapters, we heavily rely on simulations to understand these techniques and validate our model comparison procedure. Because we control the data-generating mechanism, we can evaluate not just whether model comparison produces a preference, but whether it produces the correct preference — the model recovery test.

Remember, model comparison is not a fail-safe procedure to determine the absolute “truth”. All models are simplifications. Instead, it helps us identify which of our candidate models provides the most useful or most plausible account of the data, given our current understanding and the available evidence. Model comparison should always be part of a larger principled scientific workflow using models as stepping stones to deeper understanding, not as final arbiters of truth.

CRITICAL PRECONDITION: Model comparison is only scientifically meaningful if all candidate models have already passed the individual quality checks established in Chapter 5. If Model A converges perfectly but Model B fails its SBC calibration or has persistent divergences, an ELPD comparison is a “garbage-in, garbage-out” exercise. We only compare models that have earned the right to be taken seriously.

8.1 Learning Objectives

After completing this chapter, you will be able to:

Explain the failure mode of in-sample fit: Understand why simply choosing the model that fits the training data best is misleading and leads to the “memorization” of noise.

Understand Cross-Validation: Grasp the core concept of partitioning data into training and test sets to estimate generalization performance.

Define ELPD rigorously: State the Expected Log Predictive Density as the formal Bayesian target for predictive accuracy.

Implement PSIS-LOO: Compute ELPD estimates from Stan fits and use Pareto-\(\hat{k}\) to identify when the importance-sampling approximation is unreliable.

Characterize influential observations: Link high \(\hat{k}\) values back to the cognitive structure of the task, distinguishing between person-level outliers and trial-level surprises.

Perform exact agent-level K-Fold CV: Implement a parallelized cross-validation pipeline to test how models generalize to completely new participants.

Diagnose LOO-PIT calibration: Evaluate whether a model’s predictive uncertainty matches reality using randomized PIT values and the formal POT-C test.

Interpret loo_compare() and Bayesian Stacking: Reason about the \(|elpd\_diff|/se\_diff\) ratio and use stacking weights to find the optimal predictive mixture of models.

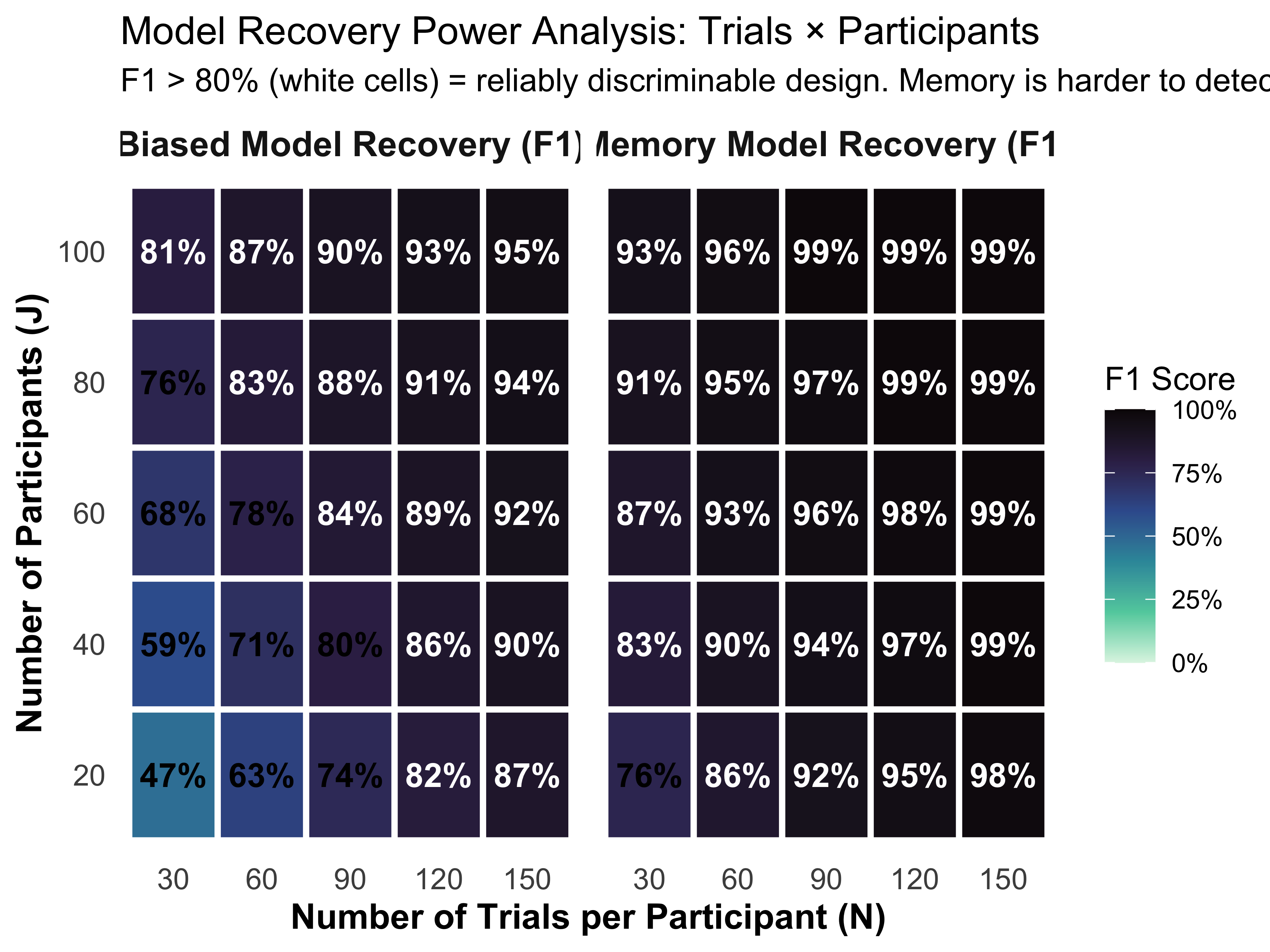

Conduct Power Analysis for Model Recovery: Use 2x2 confusion matrices and 2D trade-off plots (Trials vs. Participants) to determine if an experimental design is discriminative enough to identify the true cognitive mechanism.

Evaluate Model Calibration via SBC: Move beyond binary “wins” to assess the probabilistic calibration of model weights using Simulation-Based Calibration for Models.

Identify sequentially dependent data: Recognize when trial-level exchangeability is violated and describe the Leave-Future-Out (LFO-CV) remedy.

8.2 Why In-Sample Fit Fails: The Overfitting Trap

The most naive approach to comparing two models is to ask which fits the training data better. The problem with this criterion is that it is systematically biased toward more complex models. A model with enough free parameters can memorize any finite dataset. But memorization is not understanding — a model that has memorized the noise in its training data will make poor predictions on new data it has never seen.

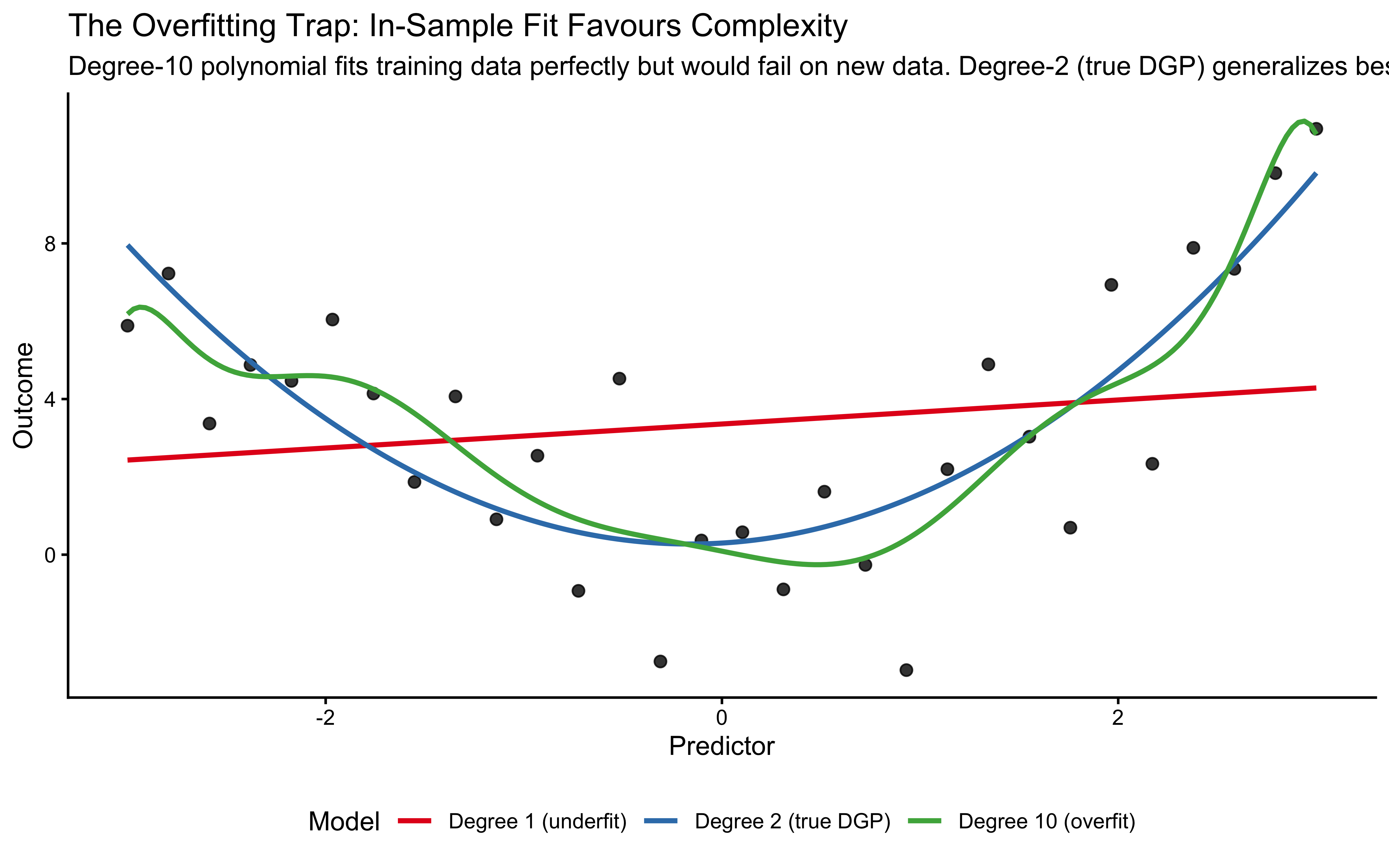

To make this concrete, we generate data from a simple quadratic curve with added noise, then fit three polynomial models of increasing complexity.

set.seed(101)

n <- 30

x <- seq(-3, 3, length.out = n)

y_true <- 1 + 0.5 * x + 0.8 * x^2

y_obs <- y_true + rnorm(n, 0, 2.5)

df_fit <- tibble(x = x, y = y_obs)

fit_p1 <- lm(y ~ x, data = df_fit)

fit_p2 <- lm(y ~ poly(x, 2), data = df_fit)

fit_p10 <- lm(y ~ poly(x, 10), data = df_fit)

x_pred <- seq(-3, 3, length.out = 200)

pred_df <- tibble(

x = x_pred,

lin = predict(fit_p1, newdata = data.frame(x = x_pred)),

quad = predict(fit_p2, newdata = data.frame(x = x_pred)),

high = predict(fit_p10, newdata = data.frame(x = x_pred))

) |>

pivot_longer(-x, names_to = "model", values_to = "y_pred") |>

mutate(model = factor(model,

levels = c("lin", "quad", "high"),

labels = c("Degree 1 (underfit)", "Degree 2 (true DGP)",

"Degree 10 (overfit)")))

ggplot(df_fit, aes(x = x, y = y)) +

geom_point(size = 2, alpha = 0.8) +

geom_line(data = pred_df, aes(y = y_pred, color = model), linewidth = 1) +

scale_color_brewer(palette = "Set1", name = "Model") +

labs(title = "The Overfitting Trap: In-Sample Fit Favours Complexity",

subtitle = "Degree-10 polynomial fits training data perfectly but would fail on new data. Degree-2 (true DGP) generalizes best.",

x = "Predictor", y = "Outcome") +

theme(legend.position = "bottom")

In this plot, we generated data from a quadratic curve with added noise. A linear model (red) underfits, missing a crucial feature of the data: the curvature. A degree-10 polynomial (green) overfits the data: it captures the curvature, but also the noise, deviating here and there to capture each stray datapoint. The quadratic model (blue) captures the underlying trend without fitting every wiggle, representing a better balance.

The error these models make on the training data (e.g. root mean square error) monotonically decreases as we increase the complexity of the model (and the variance explained, \(R^2\), monotonically increases), and therefore we cannot use it to choose between models. We need a criterion that rewards genuine generalization: models that capture the signal without overfitting the noise.

8.2.1 The Concept of Cross-Validation

To find this balance between fitting and generalizing, we need a method to test how well our model performs on data it hasn’t seen yet. Since we usually only have one dataset, we artificially create “unseen” data by hiding parts of our dataset from the model during the training phase. This is the core concept of Cross-Validation (CV).

The general process is:

Split your data into a training set and a test set, respecting the structure in the data. E.g. if you have multiple participants, you might want to hold out entire participants for testing, rather than random trials, to test generalization to new individuals.

Fit (train) your model using only the training data.

Evaluate the model’s predictions on the hidden test data.

Repeat this process with different splits and average the performance.

8.2.1.1 K-Fold Cross-Validation

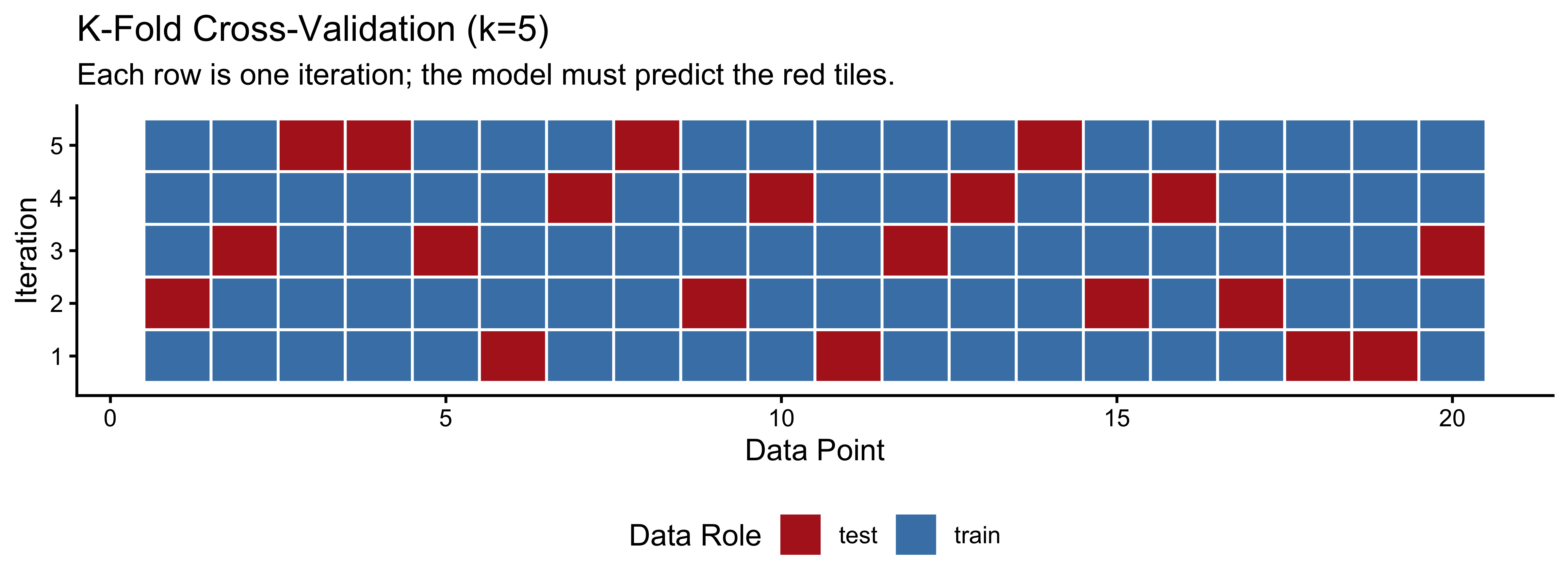

In K-Fold cross-validation, we divide the data into \(K\) equally sized subsets (folds). We train the model on \(K-1\) folds and test it on the remaining fold, repeating this until every fold has served as the test set exactly once.

# Visualization of k-fold cross-validation

n_data <- 20

cv_data <- tibble(index = 1:n_data, value = rnorm(n_data))

cv_data$fold <- sample(rep(1:5, length.out = n_data))

cv_viz_data <- tibble(

iteration = rep(1:5, each = n_data),

data_point = rep(1:n_data, 5),

role = ifelse(cv_data$fold[data_point] == iteration, "test", "train")

)

ggplot(cv_viz_data, aes(x = data_point, y = iteration, fill = role)) +

geom_tile(color = "white", linewidth = 0.5) +

scale_fill_manual(values = c("train" = "steelblue", "test" = "firebrick"),

name = "Data Role") +

labs(title = "K-Fold Cross-Validation (k=5)",

subtitle = "Each row is one iteration; the model must predict the red tiles.",

x = "Data Point", y = "Iteration") +

theme(panel.grid = element_blank(), legend.position = "bottom")

8.2.1.2 Leave-One-Out Cross-Validation (LOO-CV)

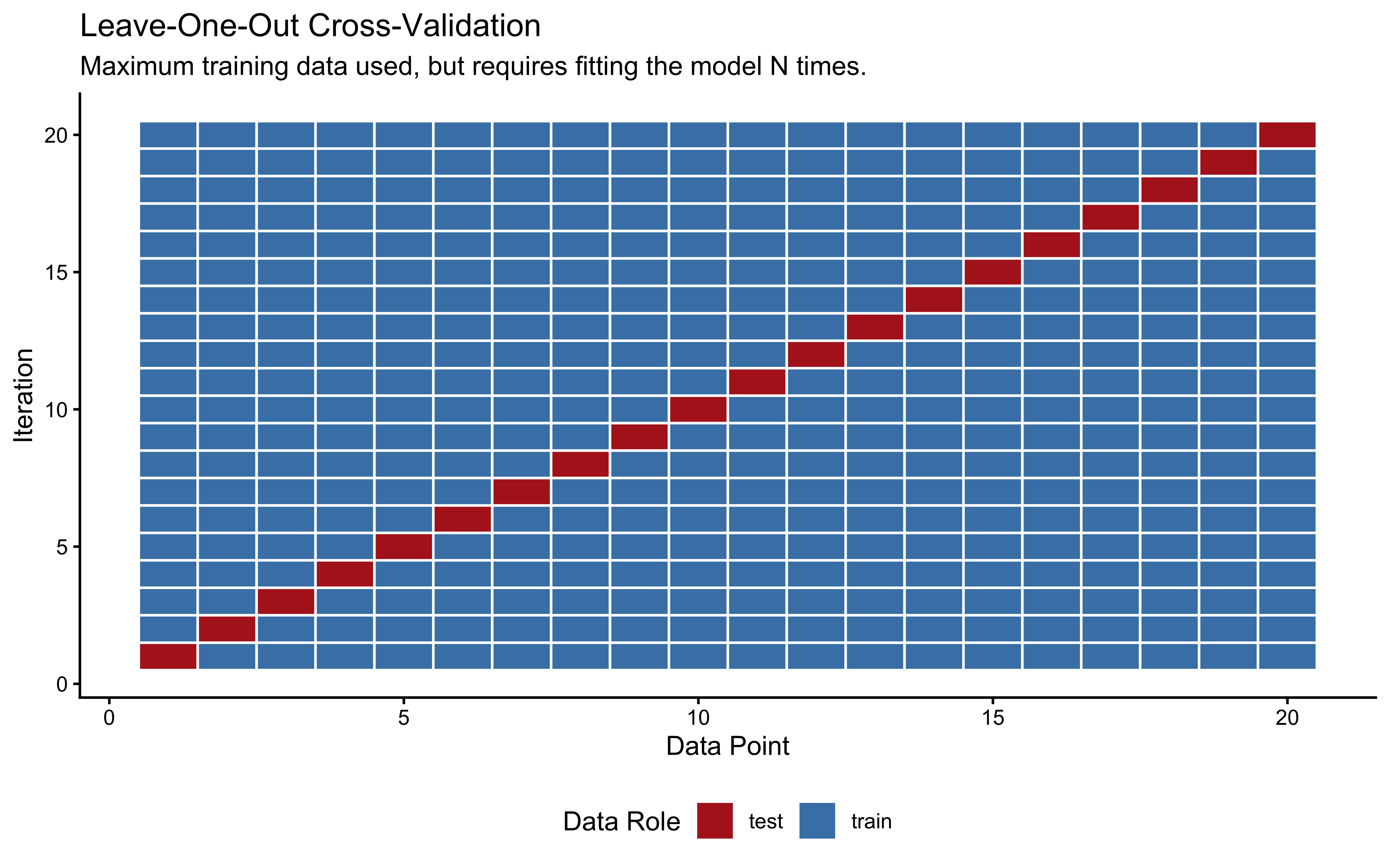

Leave-One-Out Cross-Validation (LOO-CV) is the extreme limit of K-Fold, where \(K\) equals the total number of data points (\(N\)). In each iteration, we hold out exactly one observation for testing and train the model on the remaining \(N-1\) observations.

loo_viz_data <- tibble(

iteration = rep(1:n_data, each = n_data),

data_point = rep(1:n_data, n_data),

role = ifelse(iteration == data_point, "test", "train")

)

ggplot(loo_viz_data, aes(x = data_point, y = iteration, fill = role)) +

geom_tile(color = "white", linewidth = 0.5) +

scale_fill_manual(values = c("train" = "steelblue", "test" = "firebrick"),

name = "Data Role") +

labs(title = "Leave-One-Out Cross-Validation",

subtitle = "Maximum training data used, but requires fitting the model N times.",

x = "Data Point", y = "Iteration") +

theme(panel.grid = element_blank(), legend.position = "bottom")

Cross-validation is especially important in cognitive Bayesian modeling for several reasons:

Bayesian models can be highly parameterized (especially hierarchical ones), making them prone to overfitting.

Prior choices can significantly influence a model’s predictive performance.

We often need to compare competing theoretical accounts of the same behavior.

However, implementing cross-validation for Bayesian models presents two key challenges that we must solve:

Proper Scoring: In standard machine learning, we might just calculate the mean squared error or accuracy on the test fold. But Bayesian models output probability distributions, not just point estimates. We need an appropriate metric for evaluating the quality of the full predictive distribution.

Computational Cost: For a dataset with 6,000 observations, true LOO-CV would require fitting our Stan model 6,000 separate times. Because MCMC sampling can take hours, exact LOO-CV is usually practically impossible.

In the next sections, we will solve the first challenge using the Expected Log Predictive Density (ELPD), and the second challenge using an elegant mathematical shortcut called PSIS-LOO.

8.3 Formalizing Predictive Accuracy: The ELPD

8.3.1 The Log-Score Proper Scoring Rule

Any model \(M\) can generate a predictive distribution: how likely different values of the outcome variable are estimated to be given the data it has seen and the model’s assumptions.

When an actual new outcome \(\tilde{y}_0\) is observed, we evaluate the model by checking exactly how much probability mass it assigned to the event that actually happened: \(p(\tilde{y}_0 \mid y, M)\).

We take the natural logarithm of this probability to compute the log-score: \[\text{log-score}(M, \tilde{y}_0) = \log p(\tilde{y}_0 \mid y, M)\]

We use the log scale for two primary reasons:

Additivity: The joint probability of independent future observations is the product of their individual probabilities. On the log scale, this becomes a sum, which is mathematically tractable and avoids computational underflow: probabilities being smaller than one being multiplied with each other become small very quickly and run into approximation issues, because computers use only a fixed number of decimals.

Information Theory: The negative log-score, \(-\log p(\tilde{y}_0 \mid y, M)\), is the surprisal or Shannon information content of the outcome under the model. Maximizing the log-score is equivalent to minimizing the model’s surprise.

We call the log-score a scoring rule: a function that provides a summary measure for the evaluation of probabilistic forecasts. A scoring rule is strictly proper if the model maximizes its expected score if and only if the probability distribution it outputs exactly matches the true data-generating distribution. This is a desirable property: a model cannot “game” the score by being strategically overconfident or underconfident. Overconfident models - those that assign near-zero probability to outcomes that then occur - incur unbounded penalties. A model that says “I am 99% certain this participant will choose right” and the participant chooses left will be penalized far more than a model that honestly said “I think it’s about 60%.”

You may have encountered Bayes Factors as a method for model comparison. Bayes Factors rely on the marginal likelihood—the probability of the data integrated over the entire prior. While theoretically elegant, Bayes Factors are notoriously sensitive to the exact width of priors and are often computationally impossible to calculate for the complex hierarchical models used in cognitive science. In this course, we rely on ELPD and Cross-Validation because they focus on predictive generalization, are more robust to prior choices, and provide trial-level diagnostics that Bayes Factors lack.

8.3.2 The Expected Log Predictive Density

The log-score evaluates a model on a single new observation. But what we really want is a summary over all possible future observations - a measure of how good the model would be in general, not just on one lucky or unlucky draw.

The Expected Log Predictive Density (ELPD) does exactly this: it averages the log-score over all possible future observations, weighted by how likely those observations are under the true data-generating distribution \(p_t\):

\[\text{ELPD} = \int p_t(\tilde{y}) \log p(\tilde{y} \mid y, M) \, d\tilde{y}\]

where \(p(\tilde{y} \mid y, M) = \int p(\tilde{y} \mid \theta) \, p(\theta \mid y) \, d\theta\) is the posterior predictive distribution - the model’s prediction for a new observation, averaged over the uncertainty in its parameters. The model with the highest ELPD makes the best average predictions across the full range of possible future data.

There is one crucial catch: we do not know \(p_t\) - the true distribution of future observations. If we did, we would not need a model in the first place! So we have to estimate the ELPD from the data we have. Leave-one-out cross-validation (LOO-CV) provides an approach that is asymptotically unbiased:

\[\widehat{\text{ELPD}}_{\text{LOO}} = \sum_{i=1}^{N} \log p(y_i \mid y_{-i}, M)\]

The notation \(y_{-i}\) means “all observations except observation \(i\)”. So for each data point, we ask: if the model had never seen this observation, how well would it predict it? A model that memorized \(y_i\) to reduce its training loss will be penalized here, because the LOO posterior is computed without \(y_i\). The prediction must generalize - it cannot cheat by peeking at the answer.

But how do we calculate it?

8.3.3 The Importance-Sampling Shortcut

Computing \(\widehat{\text{ELPD}}_{\text{LOO}}\) exactly would require fitting the model \(N\) times - once with each observation left out. For our hierarchical models with 50 agents and 120 trials each, that is 6,000 separate MCMC runs. This is not feasible in practice, especially when we get to more complex models.

Fortunately, there is an elegant mathematical shortcut, which is analogous to what we have seen when assessing prior sensitivity. The idea is to use the single full-data posterior we already have and to reweight its draws to approximate what the posterior would have looked like had we omitted observation \(i\). .

The mathematical weights that accomplish this reweighting are the inverse of the likelihood contributions: observations that the model fits well (high likelihood) get down-weighted when computing what the model “would have” predicted without them. These log-scale importance weights are simply the negative pointwise log-likelihoods:

\[\log r_i^{(s)} = -\log p(y_i \mid \theta^{(s)})\]

These are already stored in our Stan log_lik block from Chapter 6. So no additional computation is required: everything we need is already sitting in our existing model fits.

However, we should be careful. If the model’s estimate would barely change when we removing observation \(i\), then \(i\) is not very influential, and we can approximate the LOO estimate cheaply. If the model’s estimate would change a lot, then \(i\) is influential, and our approximation is less reliable. We therefore need to use a diagnostic to flag such cases: the Pareto-\(\hat{k}\), which we cover in detail in a later paragraph.

This combination of LOO cross-validation with Pareto-Smoothed Importance Sampling is called PSIS-LOO (Vehtari, Gelman & Gabry, 2017), and it is our primary tool for model comparison, so we’ll go through it step by step.

Why learn the mechanics before using

loo()? Theloo()function is one line of R. But knowing what it is doing — reweighting posterior draws to approximate leave-one-out predictions, then checking whether those weights are stable via the Pareto tail — lets you diagnose failures and interpret the Pareto-\(\hat{k}\) diagnostic as a cognitive signal (which observations is the model surprised by?) rather than just a warning light. The under-the-hood demonstration on the next page is worth reading once carefully; after that,loo()will never be a black box.

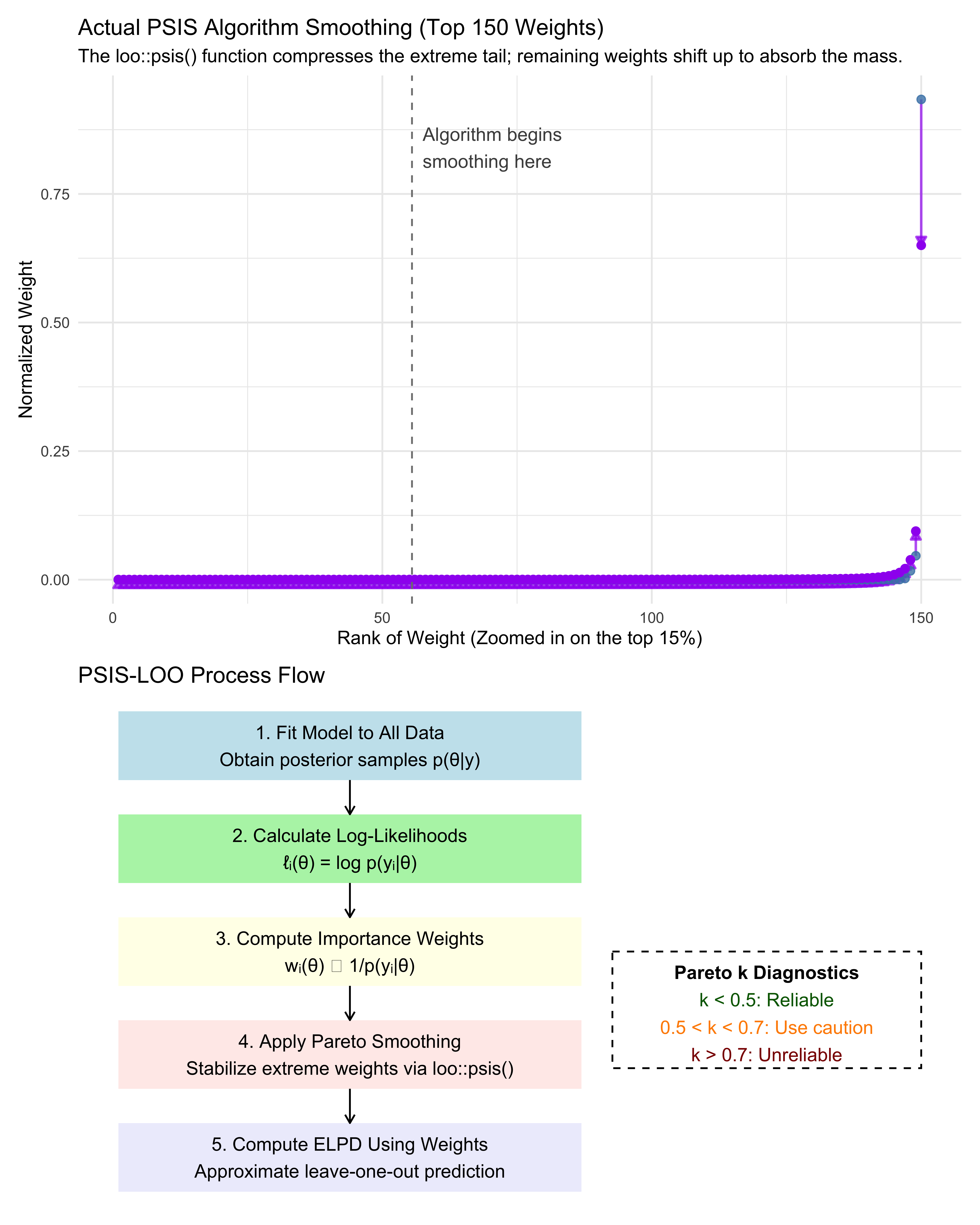

To really understand what the loo() function will do for us later, let’s look under the hood. We can simulate the PSIS-LOO process directly using the loo package’s internal engine. We will generate 1,000 simulated log-likelihood values, inject some extreme outliers to simulate a “shocked” model, and feed them directly into the actual loo::psis() algorithm.

We can then extract the smoothed weights and compare them to the raw weights to see exactly how the algorithm protects us from extreme MCMC draws.

n_draws <- 1000

n_draws <- 1000

# 1. Simulate realistic raw log-importance weights

# 995 well-behaved draws, and 5 massive breakaway outliers

log_w_raw <- c(rnorm(995, mean = 0, sd = 1), 10, 12, 14, 15, 18)

# 2. Apply the ACTUAL PSIS algorithm

psis_result <- loo::psis(log_w_raw, r_eff = 1)

# Print the diagnostic to prove we triggered the smoothing

cat("Pareto-k diagnostic from the real algorithm:", round(psis_result$diagnostics$pareto_k, 2), "\n")## Pareto-k diagnostic from the real algorithm: 1.76# Print the diagnostic to prove we triggered the smoothing

cat("Pareto-k diagnostic from the real algorithm:", round(psis_result$diagnostics$pareto_k, 2), "\n")## Pareto-k diagnostic from the real algorithm: 1.76# 3. Extract and normalize weights for visualization

# Raw weights

w_raw <- exp(log_w_raw)

w_raw_norm <- w_raw / sum(w_raw)

# Smoothed weights (must also be explicitly normalized)

w_smooth_raw <- exp(as.numeric(psis_result$log_weights))

w_smooth_norm <- w_smooth_raw / sum(w_smooth_raw)

# 4. Sort the weights from smallest to largest to visualize the "tail"

# We sort by the raw weights so we can see how the specific extreme indices change

sort_order <- order(w_raw_norm)

weight_data <- data.frame(

raw = w_raw_norm[sort_order],

smoothed = w_smooth_norm[sort_order]

)

# The PSIS algorithm smooths the top 3 * sqrt(S) weights.

# For S=1000, that is the top ~95 weights.

weight_data_top <- tail(weight_data, 150)

weight_data_top$rank <- 1:150

tail_start_x <- 150 - 95 + 0.5

# PLOT: Importance weights before and after Pareto smoothing

p_weights <- ggplot(weight_data_top, aes(x = rank)) +

# Arrows showing the shift computed by the real algorithm

geom_segment(aes(xend = rank, y = raw, yend = smoothed),

arrow = arrow(length = unit(0.2, "cm"), type = "closed"),

color = "purple", linewidth = 0.7, alpha = 0.7) +

# Points drawn on top

geom_point(aes(y = raw), color = "steelblue", size = 2, alpha = 0.8) +

geom_point(aes(y = smoothed), color = "purple", size = 2, alpha = 1.0) +

# Add a vertical line to show where the algorithm began smoothing

geom_vline(xintercept = tail_start_x, linetype = "dashed", color = "gray50") +

annotate("text", x = tail_start_x + 2, y = max(weight_data_top$raw) * 0.9,

label = "Algorithm begins\nsmoothing here", hjust = 0, color = "gray30") +

# Labels and styling

labs(title = "Actual PSIS Algorithm Smoothing (Top 150 Weights)",

subtitle = "The loo::psis() function compresses the extreme tail; remaining weights shift up to absorb the mass.",

x = "Rank of Weight (Zoomed in on the top 15%)", y = "Normalized Weight") +

theme_minimal()

# PLOT: Conceptual diagram of PSIS-LOO process

p_flow <- ggplot() +

annotate("rect", xmin = 0.5, xmax = 3.5, ymin = 4, ymax = 5, fill = "lightblue", alpha = 0.7) +

annotate("text", x = 2, y = 4.5, label = "1. Fit Model to All Data\nObtain posterior samples p(θ|y)") +

annotate("rect", xmin = 0.5, xmax = 3.5, ymin = 2.5, ymax = 3.5, fill = "lightgreen", alpha = 0.7) +

annotate("text", x = 2, y = 3, label = "2. Calculate Log-Likelihoods\nℓᵢ(θ) = log p(yᵢ|θ)") +

annotate("rect", xmin = 0.5, xmax = 3.5, ymin = 1, ymax = 2, fill = "lightyellow", alpha = 0.7) +

annotate("text", x = 2, y = 1.5, label = "3. Compute Importance Weights\nwᵢ(θ) ∝ 1/p(yᵢ|θ)") +

annotate("rect", xmin = 0.5, xmax = 3.5, ymin = -0.5, ymax = 0.5, fill = "mistyrose", alpha = 0.7) +

annotate("text", x = 2, y = 0, label = "4. Apply Pareto Smoothing\nStabilize extreme weights via loo::psis()") +

annotate("rect", xmin = 0.5, xmax = 3.5, ymin = -2, ymax = -1, fill = "lavender", alpha = 0.7) +

annotate("text", x = 2, y = -1.5, label = "5. Compute ELPD Using Weights\nApproximate leave-one-out prediction") +

annotate("segment", x = 2, y = c(4, 2.5, 1, -0.5), xend = 2, yend = c(3.5, 2, 0.5, -1),

arrow = arrow(length = unit(0.2, "cm"))) +

annotate("rect", xmin = 3.7, xmax = 5.7, ymin = -0.2, ymax = 1.5, fill = "white", color = "black", linetype = "dashed") +

annotate("text", x = 4.7, y = 1.2, label = "Pareto k Diagnostics", fontface = "bold") +

annotate("text", x = 4.7, y = 0.8, label = "k < 0.5: Reliable", color = "darkgreen") +

annotate("text", x = 4.7, y = 0.4, label = "0.5 < k < 0.7: Use caution", color = "darkorange") +

annotate("text", x = 4.7, y = 0, label = "k > 0.7: Unreliable", color = "darkred") +

theme_void() +

labs(title = "PSIS-LOO Process Flow")

p_weights / p_flow

Notice how a few random MCMC draws initially monopolize the raw importance weights (the high blue dots). If we calculated ELPD using those raw weights, our cross-validation score would be highly erratic and dictated by statistical noise. The Pareto smoothing (purple dots) gently compresses the extreme tail of these weights into a more stable distribution.

Mathematically, an extreme raw importance weight means the approximation is unstable. Cognitively, an extreme importance weight means the model is utterly shocked by this specific participant’s choice. We will use this dual mathematical/cognitive interpretation to flag influential observations later.

But first let’s get to the thick of it and start simulating data.

8.4 Simulation Setup for Model Recovery

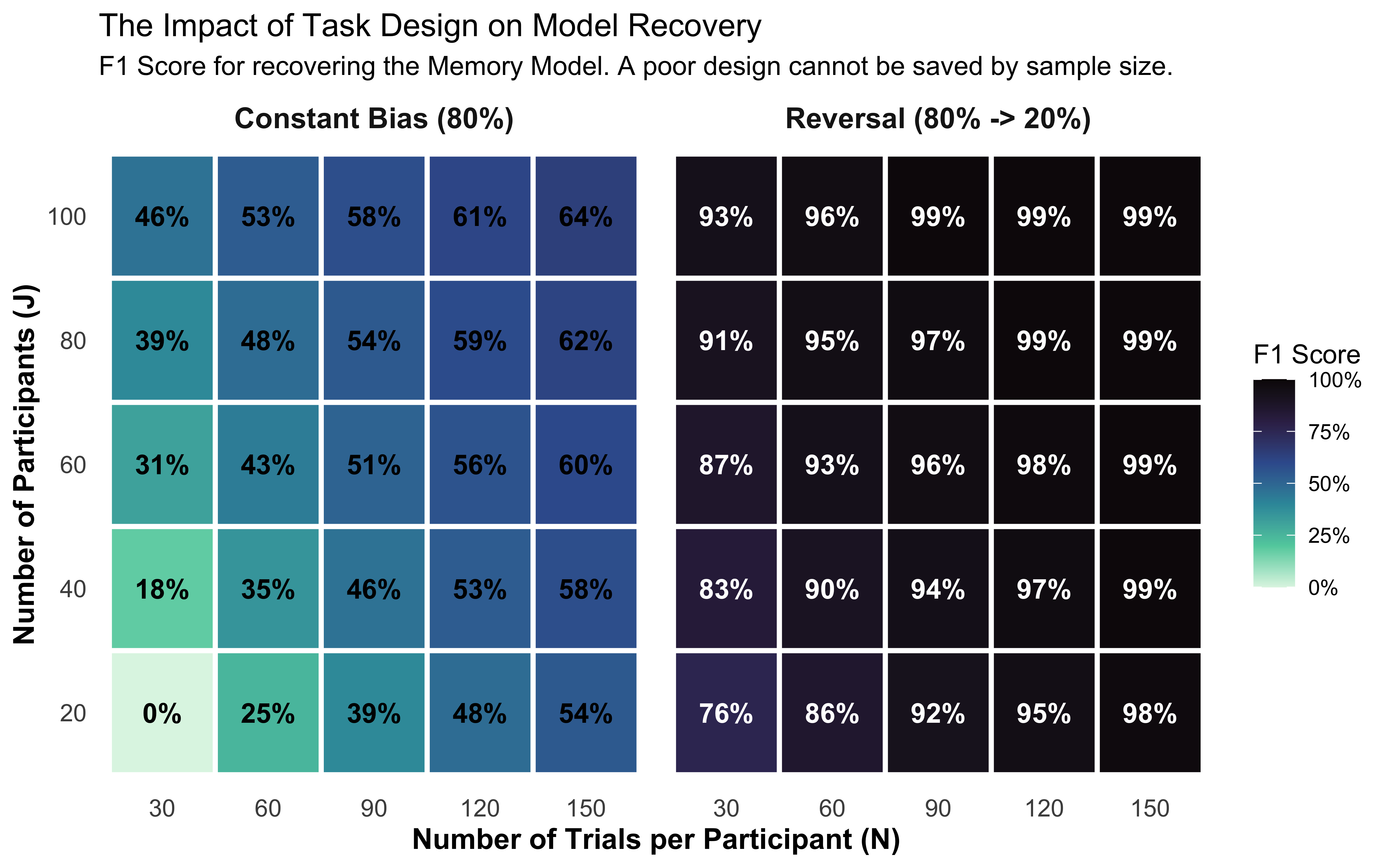

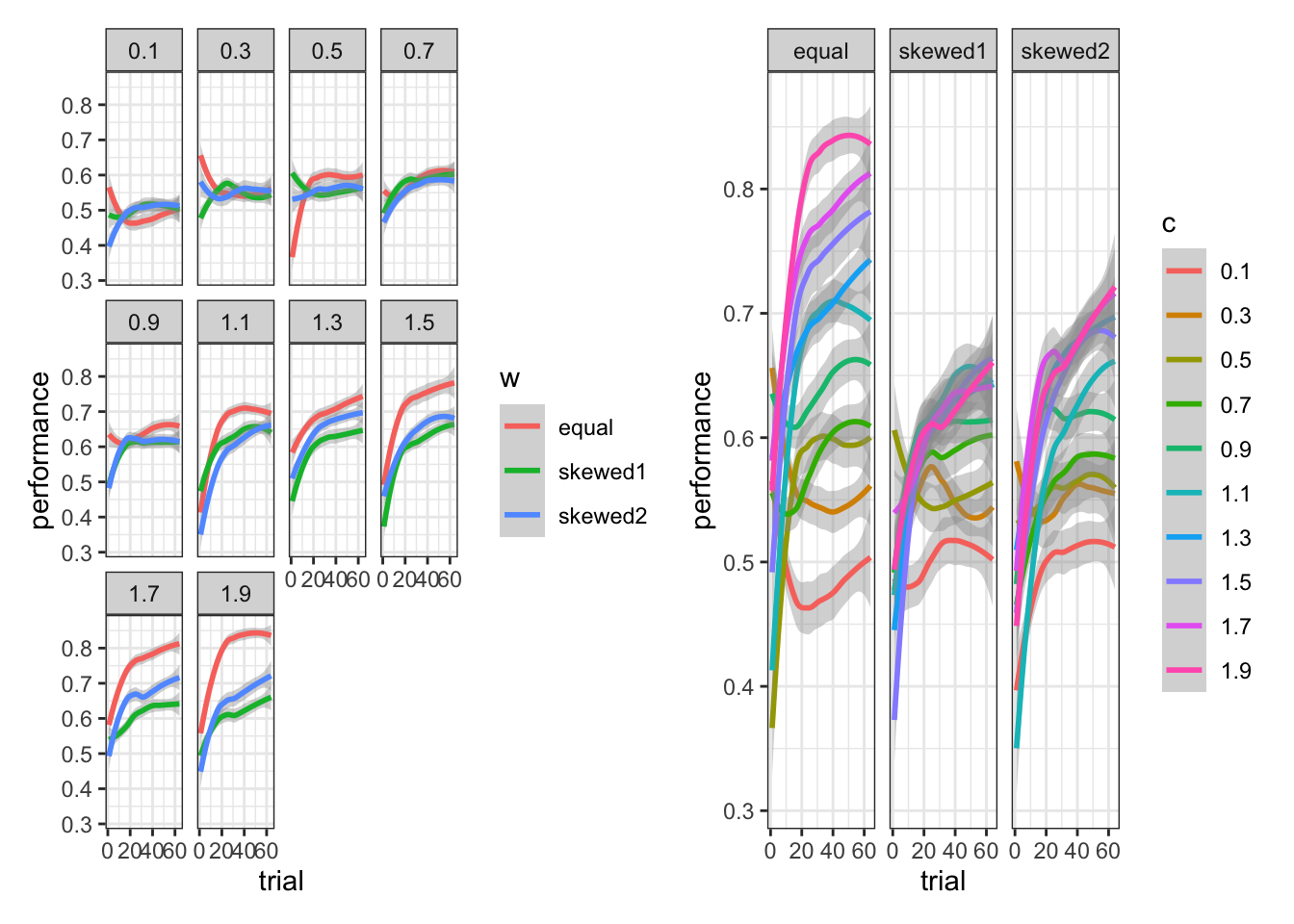

We simulate two populations of agents playing against a shared opponent using the reversal design first validated in Ch. 5 and used throughout Ch. 6: the opponent plays 80% right for the first half of the session, then switches to 20% right for the second half. We use the exact sequence loaded from Ch. 6 above (opponent_reversal), taking the first 120 trials (60 + 60) for this chapter’s shorter sessions.

Why this design? If the opponent plays a constant bias, the memory agent’s internal running average quickly settles near that value and stays flat.

Behaviorally, an agent responding to a flat memory signal is mathematically indistinguishable from a biased agent — both produce a fixed right-leaning rate. The reversal forces the memory signal to change sign at trial 61: memory agents reverse their behavior while biased agents do not. This contrast is what makes the two models theoretically separable, and why Ch. 5 discovered that a constant-bias design made bias and beta unidentifiable.

Note on recovery failure: We simulate a population with strong memory sensitivity (\(\mu_\beta = 1.5\)). With weak memory (\(\mu_\beta \approx 0.3\)), the behavioral difference would be subtle enough that the simpler biased model would win the ELPD comparison by default due to its lower complexity penalty — a correct finding, not a method failure.

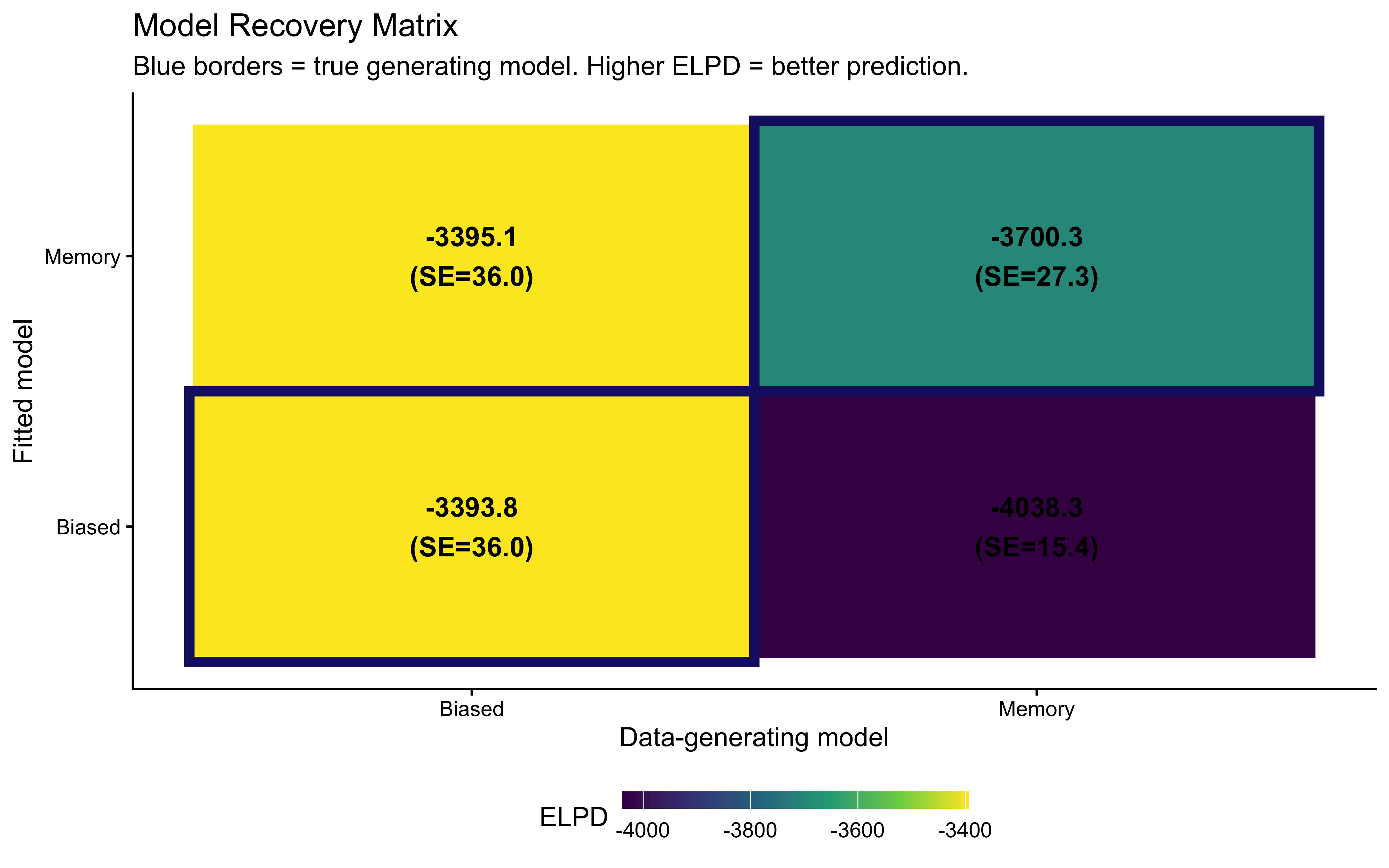

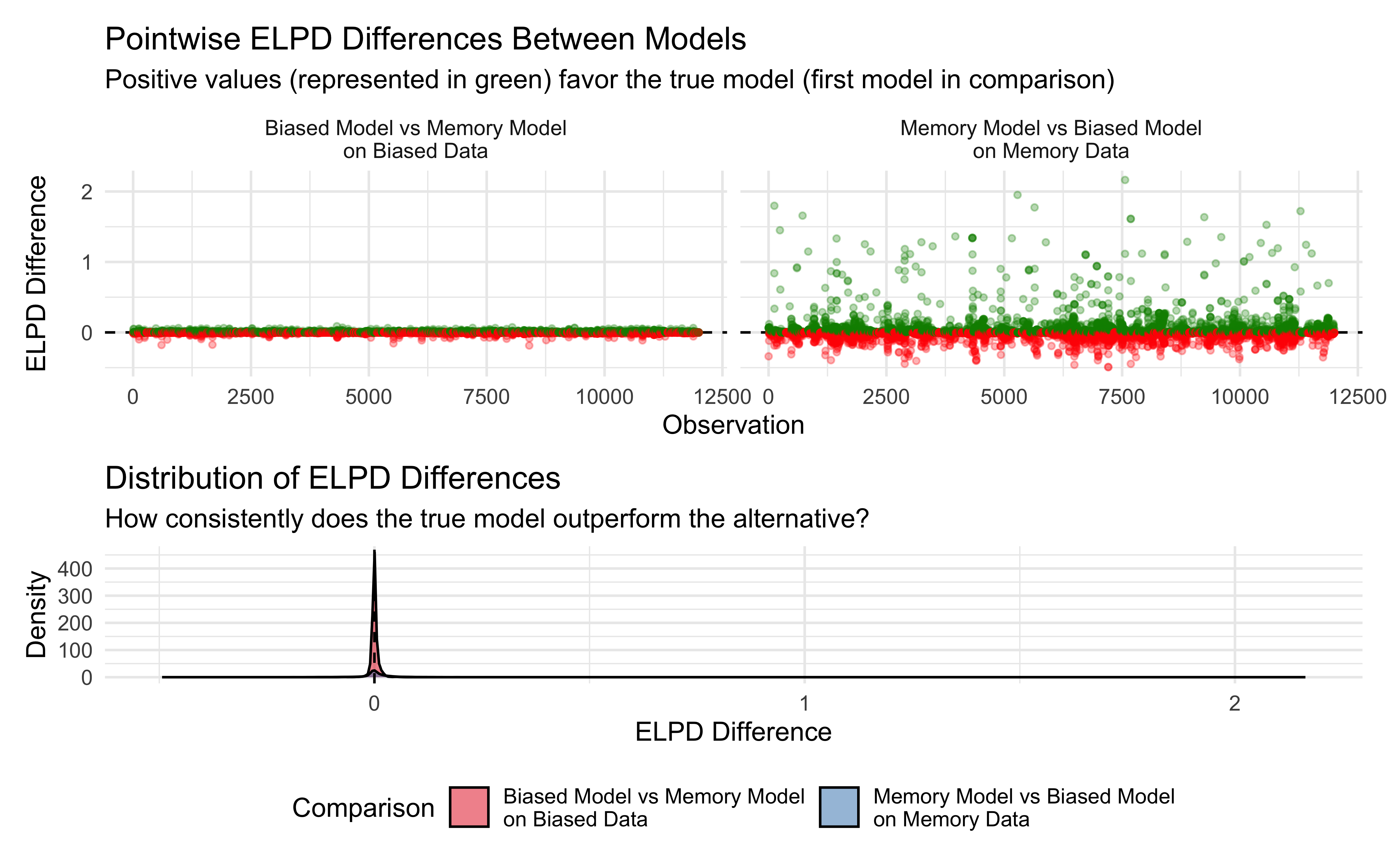

The key insight for model recovery is that we fit both models to both datasets, giving a 2×2 matrix of fits:

Biased model on biased data — the correctly-specified model.

Memory model on biased data — the over-specified model; it has an extra parameter (\(\beta\)) it does not need.

Biased model on memory data — the under-specified model; it lacks the flexibility to capture the reversal.

Memory model on memory data — the correctly-specified model.

PSIS-LOO should prefer the correct model in each column. This is model recovery: the analogue of parameter recovery one level higher — instead of asking “can we recover the right parameter values?”, we ask “can we identify the right model?”

simulate_model_recovery_data <- function(

n_agents = 50, n_trials = 120,

mu_theta = 1.0, sigma_theta = 0.5,

mu_alpha = 0.0, mu_beta = 1.5,

sigma_alpha = 0.3, sigma_beta = 0.5,

rho = 0.3,

opponent_choices = NULL,

seed = 42

) {

set.seed(seed)

# Reversal block design: 80% right for t 1:60, 20% right for t 61:120.

# If a fixed sequence is supplied (from Ch. 6), use it directly so that

# all chapters share the same opponent history.

if (!is.null(opponent_choices)) {

stopifnot(length(opponent_choices) >= n_trials)

opp_choices <- opponent_choices[seq_len(n_trials)]

} else {

opp_choices <- c(

rbinom(60, 1, 0.8),

rbinom(n_trials - 60, 1, 0.2)

)

}

# Perfect unweighted memory (cumulative average)

opp_rate_prev <- numeric(n_trials)

opp_rate_prev[1] <- 0.5

if (n_trials > 1) {

raw_rates <- cumsum(opp_choices[-n_trials]) / seq_len(n_trials - 1)

opp_rate_prev[-1] <- pmax(0.01, pmin(0.99, raw_rates)) # to avoid singularities

}

# Generating the hierarchical biased behaviors

# Generating the hierarchical biased behaviors

theta_j <- rnorm(n_agents, mu_theta, sigma_theta)

# Inject extreme outliers to naturally trigger high Pareto-k values

theta_j[1] <- 4.5 # Agent 1 is extremely biased right

theta_j[2] <- -4.5 # Agent 2 is extremely biased left

biased_data <- map_dfr(seq_len(n_agents), function(j) {

tibble(agent_id = j, trial = seq_len(n_trials),

choice = rbinom(n_trials, 1, plogis(theta_j[j])),

opp_rate_prev = opp_rate_prev, agent_type = "biased",

true_theta = theta_j[j])

})

# Generating the parameters for the hierarchical memory behaviors with correlation

## We follow the NCP parametrization to show you how, but also because it's more

## efficient for sampling correlated bias and beta.

## First we define the Cholesky factor for the correlation matrix:

L_Omega <- matrix(c(1, rho, 0, sqrt(1 - rho^2)), 2, 2)

## Then we need to convert it to the covariance matrix by pre-multiplying by

## the scale parameters (correlations are z-scored, so to get the actual

## values we need to put the scales back in):

Scale <- diag(c(sigma_alpha, sigma_beta)) %*% L_Omega

## Then we create a matrix of independent, standard normal draws

Z <- matrix(rnorm(2 * n_agents), 2, n_agents)

## Then we apply our rescaling to the standard normal draws and add the group-level means to get the individual-level parameters

indiv <- t(matrix(c(mu_alpha, mu_beta), ncol = 1) %*%

matrix(1, 1, n_agents) + Scale %*% Z)

## Then we generate the data for the memory model

memory_data <- map_dfr(seq_len(n_agents), function(j) {

logit_p <- indiv[j, 1] + indiv[j, 2] * qlogis(opp_rate_prev)

tibble(agent_id = j, trial = seq_len(n_trials),

choice = rbinom(n_trials, 1, plogis(logit_p)),

opp_rate_prev = opp_rate_prev, agent_type = "memory",

true_alpha = indiv[j, 1], true_beta = indiv[j, 2])

})

list(biased = biased_data, memory = memory_data,

hyperparams = list(mu_theta = mu_theta, sigma_theta = sigma_theta,

mu_alpha = mu_alpha, mu_beta = mu_beta,

sigma_alpha = sigma_alpha, sigma_beta = sigma_beta,

rho = rho))

}

sim_data_path <- here::here("simdata", "ch7_model_recovery_data.rds")

if (regenerate_simulations || !file.exists(sim_data_path)) {

sim_data <- simulate_model_recovery_data(

opponent_choices = opponent_reversal # Use Ch. 6 reversal schedule

)

saveRDS(sim_data, sim_data_path)

} else {

sim_data <- readRDS(sim_data_path)

}

d_biased <- sim_data$biased

d_memory <- sim_data$memory

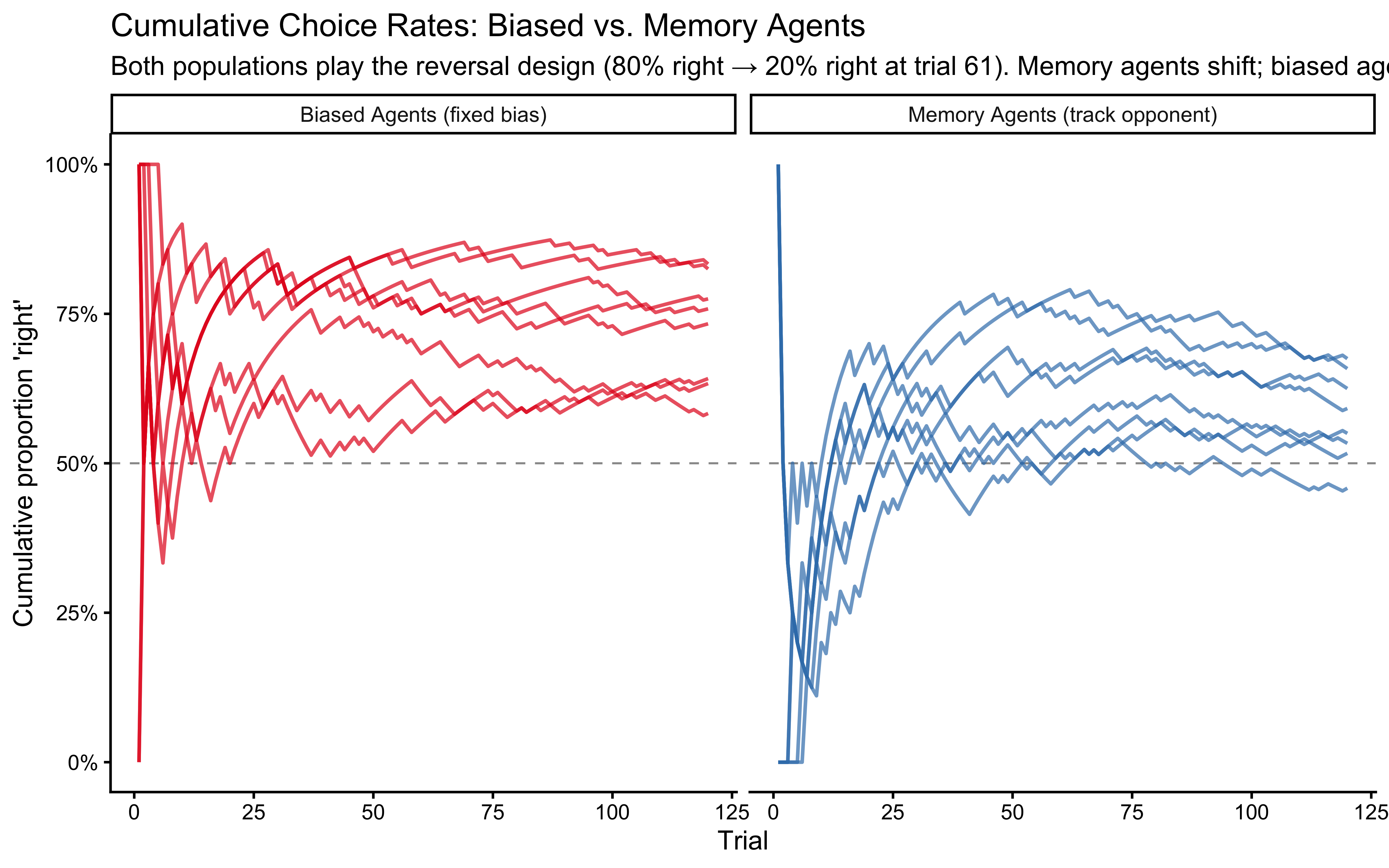

cat("Biased data: N =", nrow(d_biased), "from", n_distinct(d_biased$agent_id), "agents\n")## Biased data: N = 6000 from 50 agents## Memory data: N = 6000 from 50 agentsLet us visualize a sample of agents from both populations before fitting any models. This is a useful sanity check, and part of any sensible workflow. Check your data and simulations, folks!

sampled_agents <- bind_rows(d_biased, d_memory) |>

distinct(agent_type, agent_id) |>

group_by(agent_type) |>

slice_sample(n = 8) |>

ungroup()

bind_rows(d_biased, d_memory) |>

semi_join(sampled_agents, by = c("agent_type", "agent_id")) |>

arrange(agent_type, agent_id, trial) |>

group_by(agent_type, agent_id) |>

mutate(cum_rate = cumsum(choice) / trial) |>

ungroup() |>

ggplot(aes(x = trial, y = cum_rate, group = agent_id, color = agent_type)) +

geom_hline(yintercept = 0.5, linetype = "dashed", color = "gray60",

linewidth = 0.4) +

geom_line(alpha = 0.7, linewidth = 0.7) +

facet_wrap(~agent_type, labeller = as_labeller(

c(biased = "Biased Agents (fixed bias)",

memory = "Memory Agents (track opponent)"))) +

scale_color_brewer(palette = "Set1", guide = "none") +

scale_y_continuous(limits = c(0, 1), labels = scales::percent) +

labs(title = "Cumulative Choice Rates: Biased vs. Memory Agents",

subtitle = "Both populations play the reversal design (80% right → 20% right at trial 61). Memory agents shift; biased agents don't.",

x = "Trial", y = "Cumulative proportion 'right'")

We should see a difference: Both populations initially drift upwards (rightwards in a sense) one because it is biased that way, the other because it initially plays against a 80%-right opponent. Memory agents then start drifting downwards as they track the reversal in the opponent’s behavior, while biased agents stabilize. But it is not always easy to tell them apart by eye, especially if we look at individual agents rather than averages. This is why we need formal model comparison techniques.

Now we prepare the four Stan data lists for our 2x2 comparison. Note that when fitting the memory model we need to add the opp_rate_prev even to the biased-generated data, as the model requires it.

make_stan_biased <- function(d)

list(N = nrow(d), J = n_distinct(d$agent_id),

agent = d$agent_id, h = as.integer(d$choice))

make_stan_memory <- function(d)

list(N = nrow(d), J = n_distinct(d$agent_id),

agent = d$agent_id, h = as.integer(d$choice),

opp_rate_prev = d$opp_rate_prev)

sd_biased_on_biased <- make_stan_biased(d_biased)

sd_memory_on_biased <- make_stan_memory(d_biased)

sd_biased_on_memory <- make_stan_biased(d_memory)

sd_memory_on_memory <- make_stan_memory(d_memory)We reuse the NCP Stan models from Chapter 6. The key feature we need for model comparison is the log_lik vector in the generated quantities block - this is the pointwise log-likelihood for each observation that PSIS-LOO requires. This is why we designed those models with log_lik from the beginning: the Chapter 6 workflow was already leading here.

stan_file_biased <- here::here("stan", "ch6_multilevel_biased_ncp.stan")

if (!file.exists(stan_file_biased)) {

writeLines('

data { int<lower=1> N; int<lower=1> J;

array[N] int<lower=1,upper=J> agent;

array[N] int<lower=0,upper=1> h; }

parameters { real mu_theta; real<lower=0> sigma_theta; vector[J] z_theta; }

transformed parameters { vector[J] theta_logit = mu_theta + z_theta*sigma_theta; }

model {

target += normal_lpdf(mu_theta|0,1.5);

target += exponential_lpdf(sigma_theta|1);

target += std_normal_lpdf(z_theta);

target += bernoulli_logit_lpmf(h|theta_logit[agent]); }

generated quantities {

real lprior = normal_lpdf(mu_theta|0,1.5)+exponential_lpdf(sigma_theta|1)

+ std_normal_lpdf(z_theta);

vector[N] log_lik; array[N] int h_post_rep;

for (i in 1:N) {

log_lik[i] = bernoulli_logit_lpmf(h[i]|theta_logit[agent[i]]);

h_post_rep[i] = bernoulli_logit_rng(theta_logit[agent[i]]); } }',

stan_file_biased)

}

stan_file_memory <- here::here("stan", "ch6_multilevel_memory_ncp.stan")

if (!file.exists(stan_file_memory)) {

writeLines('

data { int<lower=1> N; int<lower=1> J;

array[N] int<lower=1,upper=J> agent; array[N] int<lower=0,upper=1> h;

vector<lower=0.01,upper=0.99>[N] opp_rate_prev; }

parameters {

real mu_alpha; real mu_beta; vector<lower=0>[2] sigma;

cholesky_factor_corr[2] L_Omega; matrix[2,J] z; }

transformed parameters {

matrix[J,2] indiv_params;

{ matrix[2,J] dev = diag_pre_multiply(sigma,L_Omega)*z;

for (j in 1:J) {

indiv_params[j,1] = mu_alpha+dev[1,j];

indiv_params[j,2] = mu_beta+dev[2,j]; } } }

model {

target += normal_lpdf(mu_alpha|0,1); target += normal_lpdf(mu_beta|0,1);

target += exponential_lpdf(sigma|1);

target += lkj_corr_cholesky_lpdf(L_Omega|2);

target += std_normal_lpdf(to_vector(z));

vector[N] logit_p;

for (i in 1:N)

logit_p[i] = indiv_params[agent[i],1]

+ indiv_params[agent[i],2]*logit(opp_rate_prev[i]);

target += bernoulli_logit_lpmf(h|logit_p); }

generated quantities {

matrix[2,2] Omega = multiply_lower_tri_self_transpose(L_Omega);

real rho = Omega[1,2];

real lprior = normal_lpdf(mu_alpha|0,1)+normal_lpdf(mu_beta|0,1)

+ exponential_lpdf(sigma|1)+lkj_corr_cholesky_lpdf(L_Omega|2)

+ std_normal_lpdf(to_vector(z));

vector[N] log_lik; array[N] int h_post_rep;

for (i in 1:N) {

real lp = indiv_params[agent[i],1]

+ indiv_params[agent[i],2]*logit(opp_rate_prev[i]);

log_lik[i] = bernoulli_logit_lpmf(h[i]|lp);

h_post_rep[i] = bernoulli_logit_rng(lp); } }',

stan_file_memory)

}

mod_biased_ncp <- cmdstan_model(stan_file_biased)

mod_memory_ncp <- cmdstan_model(stan_file_memory)

cat("Both NCP models compiled.\n")## Both NCP models compiled.8.5 Fitting the Four Model-Data Combinations

Why fit both models to both datasets? Fitting only the true model to its own data tells us the model works — but it cannot tell us whether ELPD actually discriminates. For model comparison to be meaningful, the wrong model must score worse than the right one. Fitting all four combinations gives us a 2×2 confusion matrix: if PSIS-LOO correctly selects the generating model in each column, the method has recovered the truth. If it selects the wrong model, that is scientifically informative too — it tells us the experimental design is not discriminative enough at this sample size.

We now fit all four combinations. This is the computationally expensive step, but it only needs to be run once — thereafter, results are loaded from disk. The fit_or_load() helper encapsulates this logic.

fit_or_load <- function(label, model, data_list, file_path, init_fn, seed = 123) {

if (regenerate_simulations || !file.exists(file_path)) {

message("Fitting: ", label)

fit <- model$sample(

data = data_list, seed = seed, chains = 4, parallel_chains = 4,

iter_warmup = 1000, iter_sampling = 1000, init = init_fn(data_list),

adapt_delta = 0.90, max_treedepth = 12, refresh = 0)

fit$save_object(file_path)

} else {

fit <- readRDS(file_path)

message("Loaded: ", label)

}

fit

}

init_biased <- function(data_list) {

J <- data_list$J

lapply(1:4, function(i) list(mu_theta = rnorm(1,0,1.5),

sigma_theta = rexp(1,1), z_theta = rnorm(J,0,1)))

}

init_memory <- function(data_list) {

J <- data_list$J

lapply(1:4, function(i) list(

mu_alpha = rnorm(1,0,0.3), mu_beta = rnorm(1,0,0.3),

sigma = abs(rnorm(2,0,0.3)) + 0.1,

L_Omega = matrix(c(1,0,0,1), nrow = 2),

z = matrix(0, nrow = 2, ncol = J)))

}

fit_b_on_b <- fit_or_load("Biased->Biased", mod_biased_ncp, sd_biased_on_biased,

here::here("simmodels","ch7_fit_b_on_b.rds"), init_biased)

fit_m_on_b <- fit_or_load("Memory->Biased", mod_memory_ncp, sd_memory_on_biased,

here::here("simmodels","ch7_fit_m_on_b.rds"), init_memory)

fit_b_on_m <- fit_or_load("Biased->Memory", mod_biased_ncp, sd_biased_on_memory,

here::here("simmodels","ch7_fit_b_on_m.rds"), init_biased)

fit_m_on_m <- fit_or_load("Memory->Memory", mod_memory_ncp, sd_memory_on_memory,

here::here("simmodels","ch7_fit_m_on_m.rds"), init_memory)Before computing any model comparison statistics, we verify that all four fits pass the standard geometry diagnostics from Chapter 5. This step matters especially for the misspecified fits. When we fit the memory model to biased data, we are asking a model to find a memory-tracking signal where none exists - it may strain to do so, which can sometimes produce divergences or poor mixing.

If we find issues, we need to think critically. On the one hand, comparing a well fitted model to a poorly fitted one is not a strong test of their relative performance. On the other hand, if the misspecified model is so difficult to fit that it fails basic diagnostics, that is itself a sign of poor performance. So as we investigate the sources of the issues, we need to critically evaluate whether they are a sign of a fundamental mismatch between the model and the data-generating mechanism or just a technical issue that can be resolved with more careful fitting.

Remember that we already run the full bayesian workflow on these models in the previous chapters, so here we only briefly check whether these new fitting processes show any sign of issues. If these were new models, we would need to do a thorough validation first!

fits <- list("Biased->Biased" = fit_b_on_b, "Memory->Biased" = fit_m_on_b,

"Biased->Memory" = fit_b_on_m, "Memory->Memory" = fit_m_on_m)

map_dfr(names(fits), function(label) {

f <- fits[[label]]

sm <- f$diagnostic_summary(quiet = TRUE)

# Compute both metrics in a single pass

post_summary <- summarise_draws(f$draws(), "rhat", "ess_bulk")

tibble(

Combination = label,

Divergences = sum(sm$num_divergent),

Max_Rhat = round(max(post_summary$rhat, na.rm = TRUE), 3),

Min_ESS_bulk = round(min(post_summary$ess_bulk, na.rm = TRUE), 0)

)

}) |>

knitr::kable(caption = paste(

"Pre-comparison diagnostics. Required: Divergences = 0, R-hat < 1.01, ESS > 400.",

"Misspecified fits (Memory->Biased, Biased->Memory) may show slightly worse ESS",

"because the model is searching for structure that does not exist in the data.",

"This is informative: severe degradation here would itself be evidence against the model."

))| Combination | Divergences | Max_Rhat | Min_ESS_bulk |

|---|---|---|---|

| Biased->Biased | 0 | 1.006 | 868 |

| Memory->Biased | 0 | 1.006 | 963 |

| Biased->Memory | 0 | 1.006 | 929 |

| Memory->Memory | 0 | 1.006 | 707 |

8.6 Computing PSIS-LOO

8.6.1 The Pareto-\(\hat{k}\) Diagnostic: Which Observations Are Influential?

The loo() function performs two critical operations: it estimates the Expected Log Predictive Density (ELPD) via Pareto-smoothed importance sampling, and it quantifies the mathematical reliability of this approximation using the Pareto-\(\hat{k}\) diagnostic.

Recall that PSIS-LOO approximates exact leave-one-out cross-validation by reweighting draws from the full-data posterior. The raw importance weights are the inverse of the pointwise likelihoods. If an observation \(y_i\) is highly unexpected by the model, its importance weight becomes astronomically large. If a few posterior draws capture most of the weight, the Monte Carlo approximation collapses (cannot represent the full dataset).

To stabilize this, the algorithm fits a Generalized Pareto Distribution to the right tail of these raw weights. The \(\hat{k}\) statistic is the estimated shape parameter of this distribution, directly measuring the heaviness of the tail. Conceptually, if removing a single trial would drastically shift the posterior, extreme importance weights are required to compensate, causing \(\hat{k}\) to blow up. Thus, \(\hat{k}\) serves two vital purposes:

Statistical diagnostic: It flags specific observations where the reweighting shortcut is mathematically unreliable.

Cognitive diagnostic: It identifies highly influential or surprising trials that violate the model’s structural expectations.

We execute the function with save_psis = TRUE to retain the smoothed weights matrix, which is strictly required for calibration checks later in the workflow (LOO-PIT, which will be explained then).

get_raw_loo <- function(fit_obj, file_path) {

if (regenerate_simulations || !file.exists(file_path)) {

message("Computing and saving raw LOO to ", file_path)

loo_obj <- fit_obj$loo(save_psis = TRUE)

saveRDS(loo_obj, file_path)

return(loo_obj)

} else {

message("Loading cached raw LOO from ", file_path)

return(readRDS(file_path))

}

}

loo_b_on_b <- get_raw_loo(fit_b_on_b, here::here("simmodels", "ch7_loo_raw_b_on_b.rds"))

loo_m_on_b <- get_raw_loo(fit_m_on_b, here::here("simmodels", "ch7_loo_raw_m_on_b.rds"))

loo_b_on_m <- get_raw_loo(fit_b_on_m, here::here("simmodels", "ch7_loo_raw_b_on_m.rds"))

loo_m_on_m <- get_raw_loo(fit_m_on_m, here::here("simmodels", "ch7_loo_raw_m_on_m.rds"))

summarise_pareto_k <- function(loo_obj, label) {

k <- loo_obj$diagnostics$pareto_k

p_loo <- loo_obj$estimates["p_loo","Estimate"]

n <- length(k)

tibble(Combination=label, N=n,

k_below_05=sum(k<0.5), k_05_07=sum(k>=0.5&k<0.7),

k_07_10=sum(k>=0.7&k<1), k_above_10=sum(k>=1),

Max_k=round(max(k),3),

p_hat_loo=round(p_loo,1), ratio=round(p_loo/n,3),

ELPD=round(loo_obj$estimates["elpd_loo","Estimate"],1),

SE=round(loo_obj$estimates["elpd_loo","SE"],1))

}

pareto_k_table <- bind_rows(

summarise_pareto_k(loo_b_on_b,"Biased->Biased"),

summarise_pareto_k(loo_m_on_b,"Memory->Biased"),

summarise_pareto_k(loo_b_on_m,"Biased->Memory"),

summarise_pareto_k(loo_m_on_m,"Memory->Memory"))

knitr::kable(pareto_k_table,

caption = paste("Pareto-k diagnostics. ratio = p_hat_loo/N measures model",

"complexity relative to dataset size - we will use this for LOO-PIT below."))| Combination | N | k_below_05 | k_05_07 | k_07_10 | k_above_10 | Max_k | p_hat_loo | ratio | ELPD | SE |

|---|---|---|---|---|---|---|---|---|---|---|

| Biased->Biased | 6000 | 6000 | 0 | 0 | 0 | 0.162 | 41.1 | 0.007 | -3393.8 | 36.0 |

| Memory->Biased | 6000 | 5998 | 2 | 0 | 0 | 0.521 | 47.0 | 0.008 | -3395.1 | 36.0 |

| Biased->Memory | 6000 | 6000 | 0 | 0 | 0 | 0.190 | 35.6 | 0.006 | -4038.3 | 15.4 |

| Memory->Memory | 6000 | 5969 | 25 | 6 | 0 | 0.759 | 61.7 | 0.010 | -3700.3 | 27.3 |

The action we take depends on how large \(\hat{k}\) is. The thresholds below correspond roughly to how well the Pareto distribution fits the tail of the importance weights — which determines how reliable the approximation is:

| \(\hat{k}\) | Interpretation | Action |

|---|---|---|

| \(< 0.5\) | Reliable | Accept PSIS-LOO |

| \([0.5, 0.7)\) | Acceptable | But proceed with caution |

| \([0.7, 1.0)\) | Problematic | Apply loo_moment_match() first |

| \(\geq 1.0\) | Very problematic | Use actual K-fold |

The loo_moment_match() refits the model excluding the influential observations one by one, and assesses their out-of-sample error under the refitted model. This brings two concerns: i) computational feasibility: can we actually afford to refit the model multiple times? ii) if there are multiple influential observations, they might not be independent and only removing one would not capture the full influence of whatever is causing the underlying issue. So once again, we need to be critical and do our detective work. However, this time we are lucky and it seems all is in place.

Notice also the p_hat_loo and ratio columns. These are the effective number of parameters estimated by LOO and its ratio to total observations - we will need these shortly when checking LOO-PIT calibration.

The Effective Number of Parameters (\(\hat{p}_{\text{loo}}\)): You may be familiar with Information Criteria like AIC or BIC, which penalize models based on their raw parameter count (\(k\)). In Bayesian hierarchical models, the “true” number of parameters is ambiguous because of shrinkage. Instead, \(\hat{p}_{\text{loo}}\) measures the model’s optimism: the difference between how well the model fits the training data vs. how well it predicts held-out data.\(\hat{p}_{\text{loo}} \approx\) total parameters: The model is learning very little from the population prior.\(\hat{p}_{\text{loo}} <\) total parameters: The hierarchical prior is effectively “narrowing” the model’s flexibility.PSIS-LOO is essentially a more robust version of WAIC (Widely Applicable Information Criterion) that includes the Pareto-\(\hat{k}\) diagnostic to tell you when the penalty is unreliable.

The ratio \(\hat{p}_{\text{loo}}/N\) tells us the effective flexibility per observation. This metric is critical for our next step: checking predictive calibration via LOO-PIT. If this ratio is non-negligible, removing a single observation drastically shifts the posterior, meaning our \(N\) leave-one-out predictive distributions are highly correlated. As Tesso and Vehtari (2026) demonstrated, this dependency invalidates standard statistical tests for uniformity. We will explicitly rely on this ratio to justify using specialized dependent-PIT tests (like POT-C) to accurately diagnose model calibration.

8.6.2 Characterizing Influential Observations: Why Are They Influential?

Finding that some observations have high \(\hat{k}\) is only the beginning of the story. The scientifically interesting question is why those particular observations are influential. In a hierarchical cognitive model, there are two structurally distinct reasons an observation might be hard to predict, with different theoretical implications:

The agent is an outlier from the population. Their individual parameters (say, a very high memory sensitivity \(\beta_j\)) are far from the population mean. The model is surprised by their behavior not because anything unusual happened on that trial, but because the hierarchical prior, which uses all other agents to constrain this agent’s parameters, is pulling the estimate toward the center. Finding this pattern suggests the population-level variance may be underestimated, or that some participants genuinely follow a different strategy than the majority.

The trial itself is unusual for that agent. Given the agent’s estimated parameters, this specific observation is surprising, perhaps the memory signal was strong (opponent rate 90%) and the model predicted the agent would strongly track it, but instead the agent chose randomly. This is a cognitively interesting failure: it identifies a trial where our model’s assumptions break down, potentially revealing strategic flexibility, lapses of attention, or simply that agents sometimes deviate from their average behavior (e.g. becoming distracted).

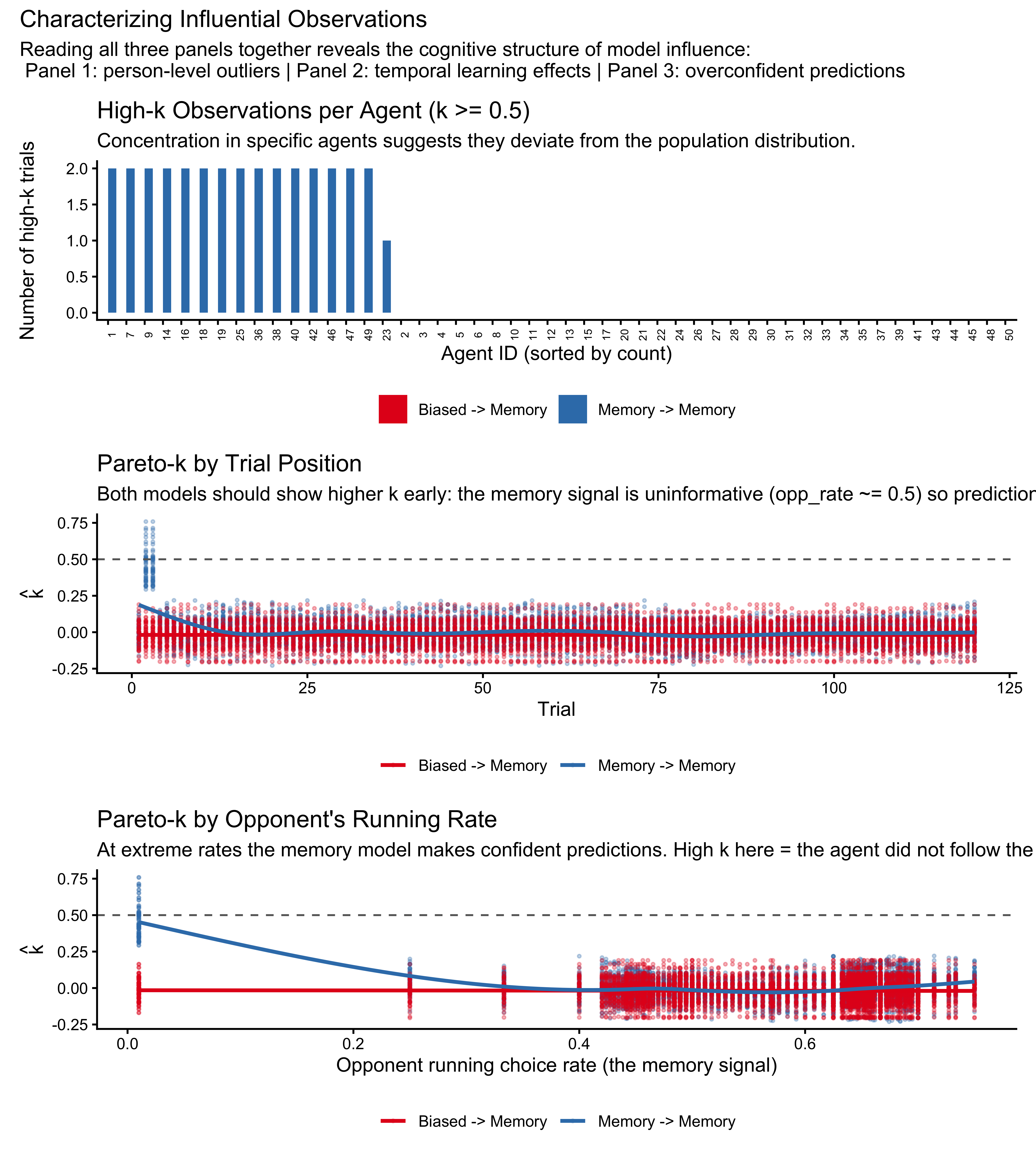

To distinguish these two causes, we join the \(\hat{k}\) values back to the raw data and look for structure across three dimensions: agent identity, trial position, and the strength of the memory signal.

k_mem_on_mem <- tibble(

k = loo_m_on_m$diagnostics$pareto_k,

agent_id = d_memory$agent_id,

trial = d_memory$trial,

opp_rate_prev = d_memory$opp_rate_prev,

fit = "Memory -> Memory")

k_bias_on_mem <- tibble(

k = loo_b_on_m$diagnostics$pareto_k,

agent_id = d_memory$agent_id,

trial = d_memory$trial,

opp_rate_prev = d_memory$opp_rate_prev,

fit = "Biased -> Memory")

k_annotated <- bind_rows(k_mem_on_mem, k_bias_on_mem)

# -- Panel 1: Does influence cluster within specific agents? -------------------

p_by_agent <- k_annotated |>

group_by(fit, agent_id) |>

summarise(n_high = sum(k >= 0.5), .groups = "drop") |>

ggplot(aes(x = reorder(factor(agent_id), -n_high),

y = n_high, fill = fit)) +

geom_col(position = "dodge") +

scale_fill_brewer(palette = "Set1", name = NULL) +

labs(title = "High-k Observations per Agent (k >= 0.5)",

subtitle = paste("Concentration in specific agents suggests they deviate from",

"the population distribution."),

x = "Agent ID (sorted by count)", y = "Number of high-k trials") +

theme(axis.text.x = element_text(angle = 90, size = 6),

legend.position = "bottom")

# -- Panel 2: Does influence cluster at early trials? -------------------------

# Early trials have uninformative memory (opp_rate_prev ~= 0.5) so the

# memory model has nothing to predict from, making any outcome surprising.

p_by_trial <- ggplot(k_annotated, aes(x = trial, y = k, color = fit)) +

geom_point(size = 0.6, alpha = 0.3) +

geom_smooth(method = "gam", formula = y ~ s(x, bs = "cs"),

linewidth = 1, se = FALSE) +

geom_hline(yintercept = 0.5, linetype = "dashed", color = "gray40") +

scale_color_brewer(palette = "Set1", name = NULL) +

labs(title = "Pareto-k by Trial Position",

subtitle = paste("Both models should show higher k early: the memory signal",

"is uninformative (opp_rate ~= 0.5) so predictions are uncertain."),

x = "Trial", y = expression(hat(k))) +

theme(legend.position = "bottom")

# -- Panel 3: Does influence cluster at extreme opponent rates? ----------------

# When opp_rate_prev is extreme (near 0 or 1), the memory model makes a

# strong, confident prediction. If the agent does not follow the signal,

# k will be high -- these are the most cognitively informative failures.

p_by_opp <- ggplot(k_annotated, aes(x = opp_rate_prev, y = k, color = fit)) +

geom_point(size = 0.6, alpha = 0.3) +

geom_smooth(method = "gam", formula = y ~ s(x, bs = "cs"),

linewidth = 1, se = FALSE) +

geom_hline(yintercept = 0.5, linetype = "dashed", color = "gray40") +

scale_color_brewer(palette = "Set1", name = NULL) +

labs(title = "Pareto-k by Opponent's Running Rate",

subtitle = paste("At extreme rates the memory model makes confident predictions.",

"High k here = the agent did not follow the signal."),

x = "Opponent running choice rate (the memory signal)",

y = expression(hat(k))) +

theme(legend.position = "bottom")

p_by_agent / p_by_trial / p_by_opp +

plot_annotation(title = "Characterizing Influential Observations",

subtitle = paste("Reading all three panels together reveals the cognitive structure",

"of model influence:\n",

"Panel 1: person-level outliers | Panel 2: temporal learning effects |",

"Panel 3: overconfident predictions"))

Reading these three panels together, we can build a cognitive story about model influence.

Panel 1 tells us whether specific agents are outliers from the group — if a handful of agents account for most of the high-\(\hat{k}\) observations, those individuals may be genuinely using a different strategy from the majority.

Panel 2 tells us whether the model struggles early in a session, before it has accumulated useful opponent history: at trial 1, the running opponent rate is exactly 0.5 (the model knows nothing about the opponent), so any outcome is equally surprising — this is expected and not a sign of model failure.

Panel 3 is the most cognitively interesting: when the opponent’s running rate is extreme (say, 90% right), the memory model predicts the agent will strongly track this. If instead the agent chose randomly or in the opposite direction, the model is very surprised — \(\hat{k}\) spikes.

These are cognitively informative failures: they identify trials where our model’s assumption that agents track opponent history breaks down. In our concrete case, we see that early trials are the most problematic. Given the relatively high bias in the opponent and the few trials, memory can quickly go to the extreme values and the model has a hard time dealing with that.

But let’s imagine we saw some agents being particularly influential — say, they had 10+ high-\(\hat{k}\) observations. This would suggest that those agents are outliers from the population distribution — perhaps they have a very high memory sensitivity \(\beta_j\) that the hierarchical prior is struggling to accommodate. In this case, we would want to look at the posterior estimates for those agents and see if they indeed have extreme parameter values. We could also consider refitting the model with a more flexible hierarchical structure (e.g., heavier-tailed distributions) or even excluding those agents if we believe they represent a qualitatively different strategy.

# 1. Extract estimated parameters and transform to probability space (Median and 90% HDI)

agent_estimates <- fit_m_on_m$draws("indiv_params") |>

spread_draws(indiv_params[agent_id, param_idx]) |>

# Pivot to have alpha and beta in the same row per draw

pivot_wider(names_from = param_idx, values_from = indiv_params, names_prefix = "param_") |>

rename(alpha = param_1, beta = param_2) |>

mutate(

# Probability transformations evaluated at experimental block extremes

p_bias = plogis(alpha), # Baseline probability (opp = 0.5)

p_block1 = plogis(alpha + beta * qlogis(0.8)), # Prob of Right during 80% block

p_block2 = plogis(alpha + beta * qlogis(0.2)) # Prob of Right during 20% block

) |>

pivot_longer(

cols = c(alpha, beta, p_bias, p_block1, p_block2),

names_to = "metric",

values_to = "value"

) |>

group_by(agent_id, metric) |>

median_hdi(value, .width = 0.90) |>

# Format as "Median [Lower, Upper]"

mutate(estimate_label = sprintf("%.2f [%.2f, %.2f]", value, .lower, .upper)) |>

dplyr::select(agent_id, metric, estimate_label) |>

pivot_wider(names_from = metric, values_from = estimate_label, names_prefix = "est_")

# 2. Extract true parameters and compute true probabilities

agent_truths <- d_memory |>

distinct(agent_id, true_alpha, true_beta) |>

mutate(

true_p_bias = round(plogis(true_alpha), 2),

true_p_block1 = round(plogis(true_alpha + true_beta * qlogis(0.8)), 2),

true_p_block2 = round(plogis(true_alpha + true_beta * qlogis(0.2)), 2),

true_alpha = round(true_alpha, 2),

true_beta = round(true_beta, 2)

)

# 3. Join with the Pareto-k summary

k_annotated |>

filter(fit == "Memory -> Memory") |>

group_by(agent_id) |>

summarise(

n_high_k = sum(k >= 0.5),

max_k = round(max(k), 3),

.groups = "drop"

) |>

arrange(desc(n_high_k)) |>

slice_head(n = 8) |>

left_join(agent_truths, by = "agent_id") |>

left_join(agent_estimates, by = "agent_id") |>

# Select columns in a logical display order

dplyr::select(

agent_id, n_high_k, max_k,

starts_with("true_alpha"), starts_with("est_alpha"),

starts_with("true_beta"), starts_with("est_beta"),

starts_with("true_p_block1"), starts_with("est_p_block1"),

starts_with("true_p_block2"), starts_with("est_p_block2")

) |>

knitr::kable(caption = paste(

"Top 8 agents by number of high-k observations.",

"Probability space metrics (p_block1, p_block2) demonstrate agent determinism at environmental extremes.",

"Values near 1.00 or 0.00 with narrow HDIs indicate near-deterministic behavioral expectations,",

"which might trigger high Pareto-k values upon any contradictory response."

))| agent_id | n_high_k | max_k | true_alpha | est_alpha | true_beta | est_beta | true_p_block1 | est_p_block1 | true_p_block2 | est_p_block2 |

|---|---|---|---|---|---|---|---|---|---|---|

| 1 | 2 | 0.518 | 0.11 | 0.17 [-0.13, 0.48] | 1.37 | 1.33 [0.77, 1.93] | 0.88 | 0.88 [0.80, 0.95] | 0.14 | 0.16 [0.05, 0.30] |

| 7 | 2 | 0.620 | -0.15 | -0.12 [-0.40, 0.16] | 1.12 | 1.27 [0.74, 1.82] | 0.80 | 0.84 [0.74, 0.92] | 0.15 | 0.13 [0.05, 0.25] |

| 9 | 2 | 0.651 | -0.32 | -0.40 [-0.70, -0.08] | 1.28 | 1.58 [0.97, 2.17] | 0.81 | 0.86 [0.77, 0.94] | 0.11 | 0.07 [0.01, 0.14] |

| 14 | 2 | 0.673 | -0.17 | -0.20 [-0.50, 0.10] | 1.95 | 1.91 [1.29, 2.51] | 0.93 | 0.92 [0.86, 0.97] | 0.05 | 0.06 [0.01, 0.11] |

| 16 | 2 | 0.714 | 0.26 | 0.19 [-0.12, 0.48] | 1.45 | 1.78 [1.17, 2.40] | 0.91 | 0.93 [0.88, 0.98] | 0.15 | 0.09 [0.03, 0.19] |

| 18 | 2 | 0.511 | 0.04 | 0.13 [-0.16, 0.42] | 1.47 | 1.38 [0.81, 1.97] | 0.89 | 0.89 [0.81, 0.95] | 0.12 | 0.14 [0.05, 0.27] |

| 19 | 2 | 0.759 | -0.08 | -0.35 [-0.69, -0.03] | 1.65 | 2.20 [1.58, 2.91] | 0.90 | 0.94 [0.89, 0.98] | 0.09 | 0.03 [0.01, 0.07] |

| 25 | 2 | 0.702 | 0.43 | 0.26 [-0.06, 0.57] | 1.90 | 2.04 [1.36, 2.66] | 0.96 | 0.96 [0.92, 0.99] | 0.10 | 0.07 [0.02, 0.16] |

8.6.3 Moment Matching for Problematic Observations

When some observations have \(\hat{k} \geq 0.7\), the standard PSIS approximation is unreliable for those specific data points. The first line of defense is moment matching (Paananen et al., 2021): a method that refines the importance weights by applying a transformation to the posterior draws so that the moments of the reweighted distribution better match what the LOO posterior should look like.

Think of it as improving the reweighting without running a full MCMC from scratch. Instead of asking “what would the posterior look like if we had never seen observation \(i\)?”, moment matching asks “can we adjust the existing draws so they better approximate that LOO posterior?” This is substantially cheaper than refitting and works well for moderately influential observations. Only if moment matching fails to bring \(\hat{k}\) below 1.0 should we resort to agent-level K-fold cross-validation (which we implement next).

apply_moment_match_if_needed <- function(loo_obj, fit_obj, label, file_path) {

# 1. If the cached file exists, load it immediately

if (!regenerate_simulations && file.exists(file_path)) {

message(label, ": Loading cached LOO...")

return(readRDS(file_path))

}

n_bad <- sum(loo_obj$diagnostics$pareto_k >= 0.7)

if (n_bad == 0) {

message(label, ": All k < 0.7. PSIS-LOO is reliable.")

saveRDS(loo_obj, file_path)

return(loo_obj)

}

message(label, ": WARNING! ", n_bad, " observations with k >= 0.7.")

message(" Running CmdStanR moment matching to refine importance weights...")

# 2. Run the actual moment matching algorithm

# (Requires cmdstanr >= 0.7.0 and models that can be unconstrained)

loo_mm <- loo::loo_moment_match(

fit_obj,

loo = loo_obj

)

message(" Max k reduced from ", round(max(loo_obj$diagnostics$pareto_k), 2),

" to ", round(max(loo_mm$diagnostics$pareto_k), 2))

saveRDS(loo_mm, file_path)

return(loo_mm)

}

# Execute the caching function

loo_b_on_b <- apply_moment_match_if_needed(loo_b_on_b, fit_b_on_b, "Biased->Biased", here::here("simmodels", "ch7_loo_mm_b_on_b.rds"))

loo_m_on_b <- apply_moment_match_if_needed(loo_m_on_b, fit_m_on_b, "Memory->Biased", here::here("simmodels", "ch7_loo_mm_m_on_b.rds"))

loo_b_on_m <- apply_moment_match_if_needed(loo_b_on_m, fit_b_on_m, "Biased->Memory", here::here("simmodels", "ch7_loo_mm_b_on_m.rds"))

loo_m_on_m <- apply_moment_match_if_needed(loo_m_on_m, fit_m_on_m, "Memory->Memory", here::here("simmodels", "ch7_loo_mm_m_on_m.rds"))8.6.4 The Limits of PSIS: Agent-Level LOO

If cognitive scientists are primarily interested in generalizing to new participants, you might wonder: why can’t we just calculate PSIS-LOO at the participant level?

We actually can. To do this, we simply take our trial-level log-likelihood matrix and sum the log-likelihoods of all 120 trials for each agent before passing the matrix to the loo() function. This asks the PSIS algorithm to approximate what the posterior would look like if an entire agent’s dataset had been excluded.

Let’s try this on our well-fitting Memory -> Memory model.

# 1. Extract the trial-level log-likelihood matrix (S draws x N trials)

ll_trial_m <- fit_m_on_m$draws("log_lik", format = "matrix")

# 2. Create an empty matrix for agent-level log-likelihoods (S draws x J agents)

J <- n_distinct(d_memory$agent_id)

S <- nrow(ll_trial_m)

ll_agent_m <- matrix(0, nrow = S, ncol = J)

# 3. Sum the trial log-likelihoods for each agent

for (j in 1:J) {

# Find which columns in the trial matrix belong to agent j

trial_cols <- which(d_memory$agent_id == j)

# Sum across those columns for every MCMC draw

ll_agent_m[, j] <- rowSums(ll_trial_m[, trial_cols])

}

# 4. Run PSIS-LOO at the agent level

loo_agent_m <- loo::loo(ll_agent_m)

print(loo_agent_m)##

## Computed from 4000 by 50 log-likelihood matrix.

##

## Estimate SE

## elpd_loo -3711.2 48.6

## p_loo 57.1 4.1

## looic 7422.4 97.2

## ------

## MCSE of elpd_loo is NA.

## MCSE and ESS estimates assume independent draws (r_eff=1).

##

## Pareto k diagnostic values:

## Count Pct. Min. ESS

## (-Inf, 0.7] (good) 12 24.0% 136

## (0.7, 1] (bad) 30 60.0% <NA>

## (1, Inf) (very bad) 8 16.0% <NA>

## See help('pareto-k-diagnostic') for details.I f you run this code, the console should scream at you with warnings. You will see that a massive percentage of the Pareto-\(\hat{k}\) values are \(> 0.7\), and many are \(> 1.0\).This is a spectacular mathematical failure, and it teaches us a crucial lesson about the limits of Importance Sampling. The PSIS shortcut only works when the “Leave-One-Out” posterior is relatively similar to the full-data posterior. Removing a single trial from a dataset of 6,000 barely shifts the parameters. But removing an entire agent (and their 120 unique trials) radically changes the population-level estimates. The shortcut completely breaks down because the full-data posterior simply does not contain enough information about what the population would look like without that agent. This failure provides the exact justification for our next step. When we want to test generalization to new agents, we are forced to abandon the PSIS shortcut entirely and perform exact cross-validation.

8.7 Agent-Level K-Fold: Generalizing to New Participants

Cross-validation requires us to make a fundamental choice: what defines a “data point” when we hold data out?

Up until now, we have performed trial-level cross-validation using the PSIS-LOO shortcut. Trial-level CV asks: “How well does the model predict a held-out trial for a participant whose other 119 trials we have already observed and learned from?”

But cognitive scientists usually want to answer a different question: “How well does the model generalize to completely new participants?”. To answer this, we must perform agent-level cross-validation, holding out entire individuals from the training set.

It is crucial to understand that the level of cross-validation (trial vs. agent) is entirely separate from the computational method we use (PSIS approximation vs. exact refitting).

In theory, we could perform agent-level LOO-CV (Leave-One-Agent-Out). We could even try to use our PSIS mathematical shortcut to do it without refitting the model. However, leaving out an entire agent’s worth of data (120 trials) radically changes the shape of the posterior distribution. When the LOO posterior is that different from the full-data posterior, the PSIS importance weights become wildly unstable (\(\hat{k} \gg 1.0\)), and the shortcut completely fails.

Therefore, when we want to test generalization to new agents, we are forced to abandon the PSIS shortcut and perform exact cross-validation, where we actually recompile and refit the Stan model on the training splits. Because exact Leave-One-Agent-Out would require fitting the model \(J=50\) times, we compromise to save computation time and use exact agent-level K-fold CV.

In agent-level K-fold, we hold out \(1/K\) of the agents entirely from the training set, fit the model to the remaining agents, and then ask: given only the population parameters estimated from the training group, how well can we predict the held-out strangers’ behavior?

# 1. Biased K-Fold Model

# Draws ONE parameter per test agent from the population distribution.

writeLines("

data {

int<lower=1> N_train;

int<lower=1> J_train;

array[N_train] int<lower=1, upper=J_train> agent_train;

array[N_train] int<lower=0, upper=1> h_train;

int<lower=1> N_test;

int<lower=1> J_test;

array[N_test] int<lower=1, upper=J_test> agent_test;

array[N_test] int<lower=0, upper=1> h_test;

}

parameters {

real mu_theta;

real<lower=0> sigma_theta;

vector[J_train] z_theta;

}

transformed parameters {

vector[J_train] theta_logit = mu_theta + z_theta * sigma_theta;

}

model {

target += normal_lpdf(mu_theta | 0, 1.5);

target += exponential_lpdf(sigma_theta | 1);

target += std_normal_lpdf(z_theta);

target += bernoulli_logit_lpmf(h_train | theta_logit[agent_train]);

}

generated quantities {

vector[J_test] theta_new;

vector[N_test] log_lik_test;

for (j in 1:J_test) {

theta_new[j] = normal_rng(mu_theta, sigma_theta);

}

for (n in 1:N_test) {

log_lik_test[n] = bernoulli_logit_lpmf(h_test[n] | theta_new[agent_test[n]]);

}

}", here::here("stan", "ch7_biased_kfold.stan"))

# 2. Memory K-Fold Model

# Samples correlated parameter pairs for each test agent from the population prior.

writeLines("

data {

int<lower=1> N_train;

int<lower=1> J_train;

array[N_train] int<lower=1, upper=J_train> agent_train;

array[N_train] int<lower=0, upper=1> h_train;

vector<lower=0.01, upper=0.99>[N_train] opp_rate_prev_train;

int<lower=1> N_test;

int<lower=1> J_test;

array[N_test] int<lower=1, upper=J_test> agent_test;

array[N_test] int<lower=0, upper=1> h_test;

vector<lower=0.01, upper=0.99>[N_test] opp_rate_prev_test;

}

parameters {

real mu_alpha;

real mu_beta;

vector<lower=0>[2] sigma;

cholesky_factor_corr[2] L_Omega;

matrix[2, J_train] z;

}

transformed parameters {

matrix[J_train, 2] indiv_params;

{

matrix[2, J_train] dev = diag_pre_multiply(sigma, L_Omega) * z;

for (j in 1:J_train) {

indiv_params[j, 1] = mu_alpha + dev[1, j];

indiv_params[j, 2] = mu_beta + dev[2, j];

}

}

}

model {

target += normal_lpdf(mu_alpha | 0, 1);

target += normal_lpdf(mu_beta | 0, 1);

target += exponential_lpdf(sigma | 1);

target += lkj_corr_cholesky_lpdf(L_Omega | 2);

target += std_normal_lpdf(to_vector(z));

vector[N_train] logit_p;

for (i in 1:N_train) {

logit_p[i] = indiv_params[agent_train[i], 1] +

indiv_params[agent_train[i], 2] * logit(opp_rate_prev_train[i]);

}

target += bernoulli_logit_lpmf(h_train | logit_p);

}

generated quantities {

matrix[2, J_test] new_indiv;

vector[N_test] log_lik_test;

for (j in 1:J_test) {

vector[2] z_new;

z_new[1] = std_normal_rng();

z_new[2] = std_normal_rng();

vector[2] param_new = [mu_alpha, mu_beta]' + diag_pre_multiply(sigma, L_Omega) * z_new;

new_indiv[1, j] = param_new[1];

new_indiv[2, j] = param_new[2];

}

for (n in 1:N_test) {

real lp = new_indiv[1, agent_test[n]] +

new_indiv[2, agent_test[n]] * logit(opp_rate_prev_test[n]);

log_lik_test[n] = bernoulli_logit_lpmf(h_test[n] | lp);

}

}", here::here("stan", "ch7_memory_kfold.stan"))

mod_biased_kfold <- cmdstan_model(here::here("stan", "ch7_biased_kfold.stan"))

mod_memory_kfold <- cmdstan_model(here::here("stan", "ch7_memory_kfold.stan"))

cat("Both K-fold Stan models compiled.\n")## Both K-fold Stan models compiled.kfold_path <- here::here("simmodels","ch7_kfold_comparison.rds")

K <- 5; set.seed(21)

# We will run this on the Memory data to see the true model win out of sample

agent_ids <- sort(unique(d_memory$agent_id))

fold_assign <- sample(rep(1:K, length.out=length(agent_ids)))

agent_folds <- tibble(agent_id=agent_ids, fold=fold_assign)

if (regenerate_kfold || !file.exists(kfold_path)) {

message("Running 5-fold CV for Biased and Memory models. This takes a moment...")

kfold_list <- lapply(1:K, function(k) {

test_ids <- agent_folds$agent_id[agent_folds$fold == k]

train_ids <- agent_folds$agent_id[agent_folds$fold != k]

d_train <- d_memory |> filter(agent_id %in% train_ids) |>

mutate(agent_train = as.integer(factor(agent_id)))

d_test <- d_memory |> filter(agent_id %in% test_ids) |>

mutate(agent_test = as.integer(factor(agent_id)))

# Stan data lists

sdata_b <- list(

N_train = nrow(d_train), J_train = length(train_ids),

agent_train = d_train$agent_train, h_train = as.integer(d_train$choice),

N_test = nrow(d_test), J_test = length(test_ids),

agent_test = d_test$agent_test, h_test = as.integer(d_test$choice)

)

sdata_m <- list(

N_train = nrow(d_train), J_train = length(train_ids),

agent_train = d_train$agent_train, h_train = as.integer(d_train$choice),

opp_rate_prev_train = d_train$opp_rate_prev,

N_test = nrow(d_test), J_test = length(test_ids),

agent_test = d_test$agent_test, h_test = as.integer(d_test$choice),

opp_rate_prev_test = d_test$opp_rate_prev

)

# Fit models (using 2 chains to save rendering time)

fit_b <- mod_biased_kfold$sample(data = sdata_b, seed = 200+k, chains = 2,

parallel_chains = 2, iter_warmup = 1000,

iter_sampling = 1000, adapt_delta = 0.90, refresh = 0)

fit_m <- mod_memory_kfold$sample(data = sdata_m, seed = 300+k, chains = 2,

parallel_chains = 2, iter_warmup = 1000,

iter_sampling = 1000, adapt_delta = 0.90, refresh = 0)

ll_b <- fit_b$draws("log_lik_test", format = "matrix")

ll_m <- fit_m$draws("log_lik_test", format = "matrix")

idx <- which(d_memory$agent_id %in% test_ids)

list(ll_b = ll_b, ll_m = ll_m, indices = idx, k = k)

})

S_b <- nrow(kfold_list[[1]]$ll_b)

S_m <- nrow(kfold_list[[1]]$ll_m)

ll_kfold_b <- matrix(NA_real_, S_b, nrow(d_memory))

ll_kfold_m <- matrix(NA_real_, S_m, nrow(d_memory))

for (res in kfold_list) {

ll_kfold_b[, res$indices] <- res$ll_b

ll_kfold_m[, res$indices] <- res$ll_m

}

saveRDS(list(biased = ll_kfold_b, memory = ll_kfold_m), kfold_path)

} else {

kfold_res <- readRDS(kfold_path)

ll_kfold_b <- kfold_res$biased

ll_kfold_m <- kfold_res$memory

}

if (!any(is.na(ll_kfold_b))) {

elpd_kf_b <- loo::elpd(ll_kfold_b)

elpd_kf_m <- loo::elpd(ll_kfold_m)

cat("=== Trial-level LOO vs. Agent-level K-fold (Memory Data) ===\n\n")

cat(sprintf("Biased Model - PSIS-LOO: %.1f (SE=%.1f)\n",

loo_b_on_m$estimates["elpd_loo","Estimate"], loo_b_on_m$estimates["elpd_loo","SE"]))

cat(sprintf("Biased Model - K-fold: %.1f (SE=%.1f)\n\n",

elpd_kf_b$estimates["elpd","Estimate"], elpd_kf_b$estimates["elpd","SE"]))

cat(sprintf("Memory Model - PSIS-LOO: %.1f (SE=%.1f)\n",

loo_m_on_m$estimates["elpd_loo","Estimate"], loo_m_on_m$estimates["elpd_loo","SE"]))

cat(sprintf("Memory Model - K-fold: %.1f (SE=%.1f)\n\n",

elpd_kf_m$estimates["elpd","Estimate"], elpd_kf_m$estimates["elpd","SE"]))

cat("=== K-Fold Model Comparison ===\n")

print(loo::loo_compare(elpd_kf_m, elpd_kf_b))

}## === Trial-level LOO vs. Agent-level K-fold (Memory Data) ===

##

## Biased Model - PSIS-LOO: -4038.3 (SE=15.4)

## Biased Model - K-fold: -4075.0 (SE=12.8)

##

## Memory Model - PSIS-LOO: -3700.3 (SE=27.3)

## Memory Model - K-fold: -3773.2 (SE=25.2)

##

## === K-Fold Model Comparison ===

## elpd_diff se_diff

## model1 0.0 0.0

## model2 -301.7 22.2The Agent-Level K-fold ELPD will almost always be lower (worse) than the trial-level LOO ELPD for both models. This is mathematically expected and cognitively informative. Trial-level LOO asks: “How well can I predict trial 120 for an agent whose personal bias and memory sensitivity I have already estimated from 119 trials?” Agent-level K-fold asks: “How well can I predict behavior for a complete stranger, relying purely on population averages?”

The gap between the two ELPDs quantifies exactly how much individual-level variation matters. If the gap is massive, human individuality dominates the cognitive process.

8.8 LOO-PIT: Calibration Checks for Predictive Distributions

8.8.1 What LOO-PIT Measures

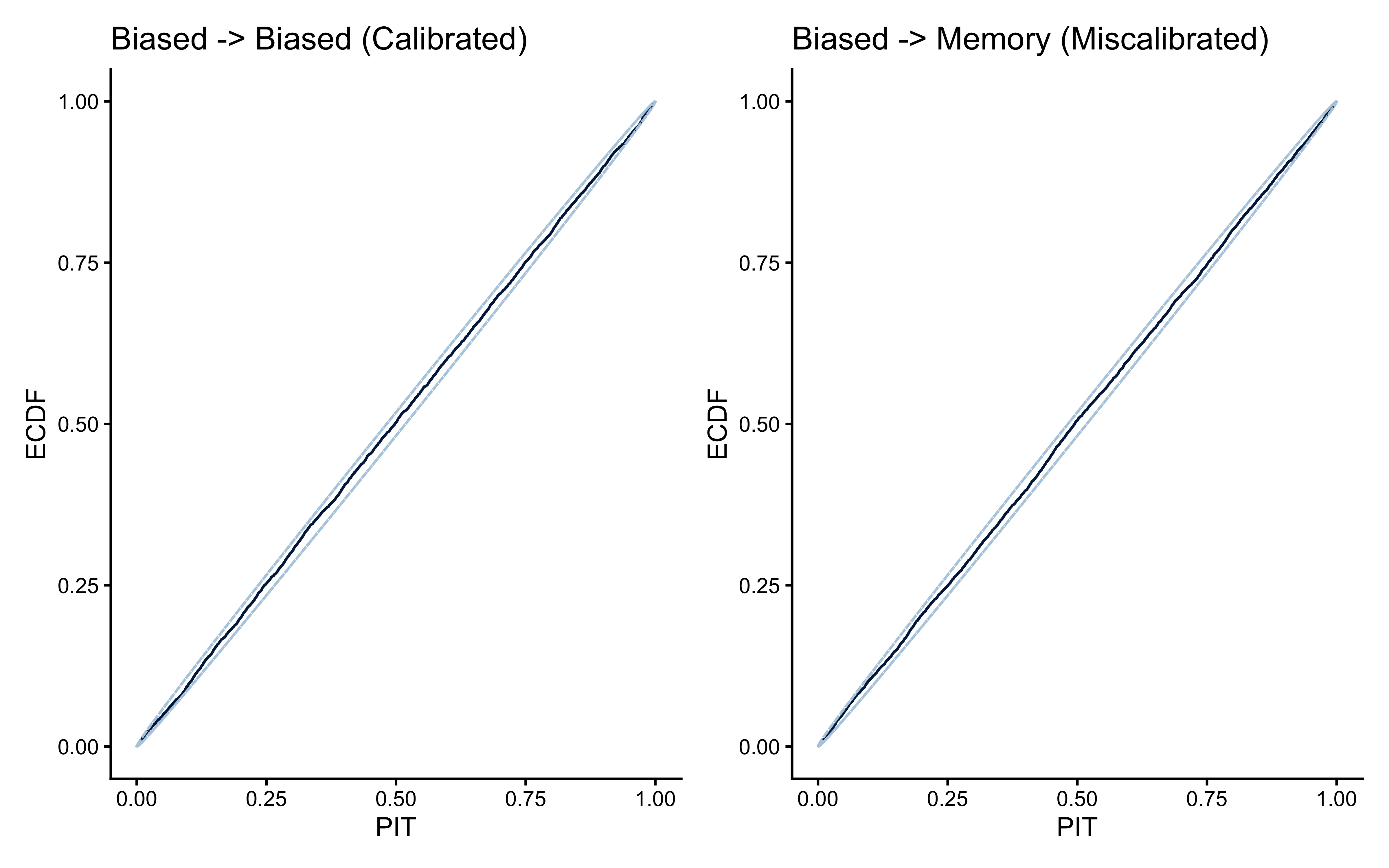

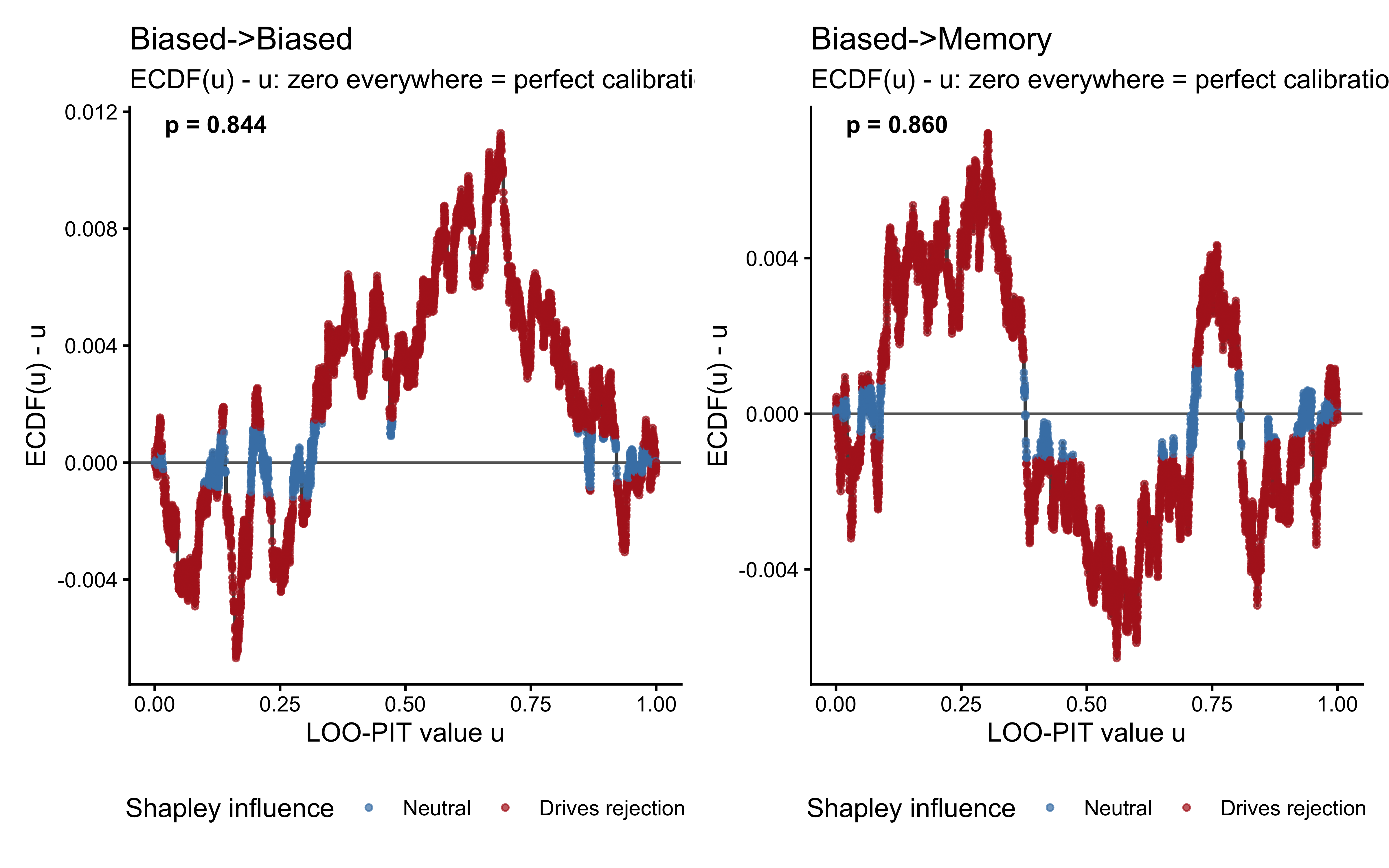

The Pareto-\(\hat{k}\) values tell us whether the importance weights are reliable: whether the PSIS approximation is numerically stable. LOO-PIT tells us something different and complementary: whether the model’s predictive distributions are well-calibrated: whether the model’s uncertainty matches reality.

A model can have perfectly reliable \(\hat{k}\) values and still be systematically miscalibrated. For example, a biased model fitted to memory data might produce low \(\hat{k}\) for every observation (the model is not dramatically wrong on any single trial) while still being consistently wrong in a systematic, diffuse way across all trials. The Pareto-\(\hat{k}\) would not flag this; LOO-PIT will.

The Probability Integral Transform (PIT) builds on a simple but powerful idea. Consider the LOO predictive distribution for observation \(y_i\): the distribution the model assigns to \(y_i\) before seeing it, having been fitted on all other observations. We ask: where does the actual observed \(y_i\) fall within this distribution? Specifically, we compute \(u_i\), the cumulative probability up to the observed value:

\[u_i = \int_{-\infty}^{y_i} p(\tilde{y} \mid y_{-i}) \, d\tilde{y}\]

This is the quantile of the observation within its own LOO predictive distribution. If the model assigned most of its predictive probability below \(y_i\), then \(u_i\) is close to 1 — the model was surprised, expecting a lower value but getting a higher one. If the model assigned most of its probability above \(y_i\), then \(u_i\) is close to 0.

For a perfectly calibrated model, the model’s predictions should be neither systematically too high nor too low. Half the observations should fall in the lower half of their predicted distributions, half in the upper half. More precisely, the \(u_i\) values should be uniformly distributed on \([0, 1]\). Departures from uniformity reveal specific failure modes:

U-shaped distribution (too many values near 0 and 1): The model is overconfident — its predictive distributions are too narrow. Observations consistently fall in the tails, surprising the model.