# Categorization Models: Prototypes

```{r ch13_setup, include=FALSE}

knitr::opts_chunk$set(

echo = TRUE,

warning = FALSE,

message = FALSE,

fig.width = 8,

fig.height = 5,

fig.align = "center",

out.width = "80%",

dpi = 300

)

# Set to TRUE to rerun all Stan fits and heavy simulations; FALSE loads saved results.

regenerate_simulations <- FALSE

# Set to TRUE to run the long-running validation chunks

# (LFO-CV, precision analysis, SBC). FALSE loads cached results

# or skips the chunk if no cache exists.

run_intensive_checks <- regenerate_simulations

pacman::p_load(

tidyverse, # data manipulation and visualization

here, # robust project-relative paths

mvtnorm, # multivariate normal densities

patchwork, # combining plots

cmdstanr, # Stan interface

posterior, # tidy posterior arrays

tidybayes, # tidy extraction of draws

bayesplot, # MCMC diagnostic plots

loo, # LOO-CV and PSIS

SBC, # Simulation-Based Calibration Checks

priorsense, # prior sensitivity

future, # parallel processing

furrr, # parallel functional programming

ellipse, # uncertainty ellipses

matrixStats, # logSumExp for the LFO-CV implementation

MASS, # ginv (pseudo-inverse fallback)

gridExtra,

hexbin,

magick

)

theme_set(theme_classic())

# Prior hyperparameters for log(r) and log(q)

LOG_R_PRIOR_MEAN <- 0 # prior median r = 1

LOG_R_PRIOR_SD <- 1.0 # covers r ≈ 0.13–7.4 at ±2 SD

LOG_Q_PRIOR_MEAN <- -2 # prior median q ≈ 0.14 (small drift)

LOG_Q_PRIOR_SD <- 1.0 # covers q ≈ 0.02–1.0 at ±2 SD

for (d in c("stan", "simdata", "simmodels")) {

if (!dir.exists(d)) dir.create(d)

}

# ── Shared diagnostic helper (canonical version lives in Ch 11) ──────────────

# We re-define it here for standalone use. The function returns a one-row

# tibble summarising the mandatory MCMC diagnostic battery for a cmdstanr

# fit, and prints a warning if any threshold is breached. Chapters 11, 12,

# 13, and 15 all call this so the diagnostic table looks the same everywhere.

diagnostic_summary_table <- function(fit, params = NULL) {

diag <- fit$diagnostic_summary(quiet = TRUE)

draws_summary <- fit$summary(variables = params)

# Calculate individual metrics

n_div <- sum(diag$num_divergent)

max_rhat <- round(max(draws_summary$rhat, na.rm = TRUE), 2)

min_bulk <- round(min(draws_summary$ess_bulk, na.rm = TRUE), 0)

min_tail <- round(min(draws_summary$ess_tail, na.rm = TRUE), 0)

min_ebfmi <- round(min(diag$ebfmi), 3)

# Robust MCSE calculation

mcse_col <- intersect(names(draws_summary), c("mcse_mean", "mcse", "mcse_cp"))[1]

if (!is.na(mcse_col)) {

max_mcse <- round(max(draws_summary[[mcse_col]] / draws_summary$sd, na.rm = TRUE), 4)

} else {

max_mcse <- round(max(1 / sqrt(draws_summary$ess_bulk), na.rm = TRUE), 4)

}

# Build the formatted table

out <- tibble::tibble(

metric = c(

"Divergences (zero tolerance)",

"Max rank-normalised R-hat",

"Min bulk ESS",

"Min tail ESS",

"Min E-BFMI",

"Max MCSE / posterior SD"

),

value = c(n_div, max_rhat, min_bulk, min_tail, min_ebfmi, max_mcse),

threshold = c("== 0", "< 1.01", "> 400", "> 400", "> 0.2", "< 0.05"),

pass = c(

n_div == 0,

max_rhat < 1.01,

min_bulk > 400,

min_tail > 400,

min_ebfmi > 0.2,

max_mcse < 0.05

)

)

# Check if any threshold failed

if (!all(out$pass)) {

warning(

"MCMC diagnostic battery: at least one threshold breached. ",

"Inspect divergences and reparameterize before interpreting the posterior.",

call. = FALSE

)

}

out

}

# ── Kruschke (1993) stimulus set ─────────────────────────────────────────────

# Re-defined here for standalone use; identical to Ch. 11.

stimulus_info <- tibble(

stimulus = c(5, 3, 7, 1, 8, 2, 6, 4),

height = c(1, 1, 2, 2, 3, 3, 4, 4),

position = c(2, 3, 1, 4, 1, 4, 2, 3),

category_true = c(0, 0, 1, 0, 1, 0, 1, 1)

)

n_blocks <- 8

n_stim_per_block <- nrow(stimulus_info)

total_trials <- n_blocks * n_stim_per_block

# ── Per-subject schedule helper ───────────────────────────────────────────────

# Identical to Ch. 11. Redefined here for standalone use.

make_subject_schedule <- function(stimulus_info, n_blocks, seed) {

set.seed(seed)

n_stim <- nrow(stimulus_info)

sequence <- unlist(lapply(seq_len(n_blocks), function(b) {

sample(stimulus_info$stimulus, n_stim, replace = FALSE)

}))

tibble(

trial_within_subject = seq_along(sequence),

block = rep(seq_len(n_blocks), each = n_stim),

stimulus_id = sequence

) |>

left_join(stimulus_info, by = c("stimulus_id" = "stimulus")) |>

rename(category_feedback = category_true) |>

dplyr::select(trial_within_subject, block, stimulus_id,

height, position, category_feedback)

}

plan(multisession, workers = max(1, availableCores() - 1))

```

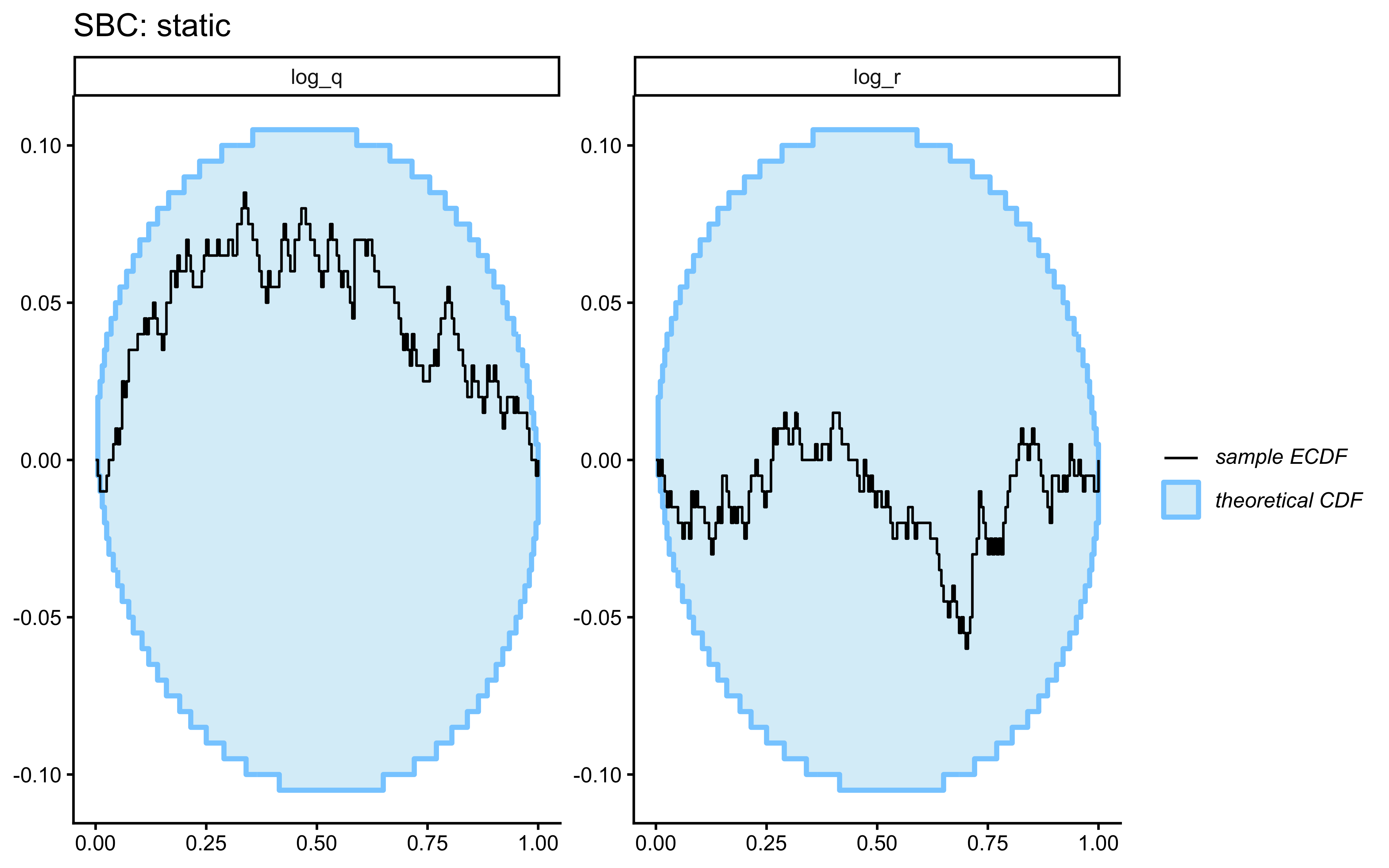

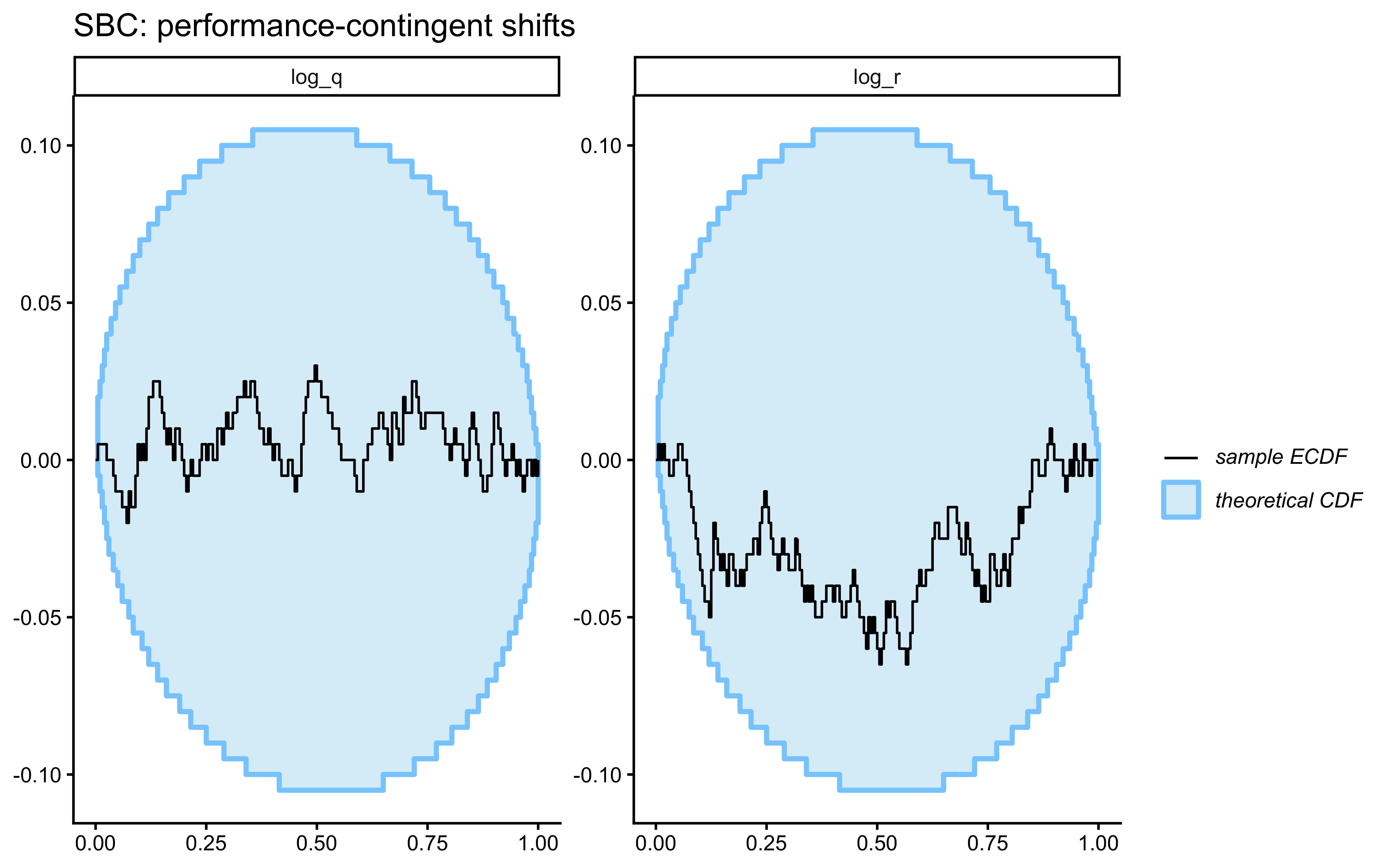

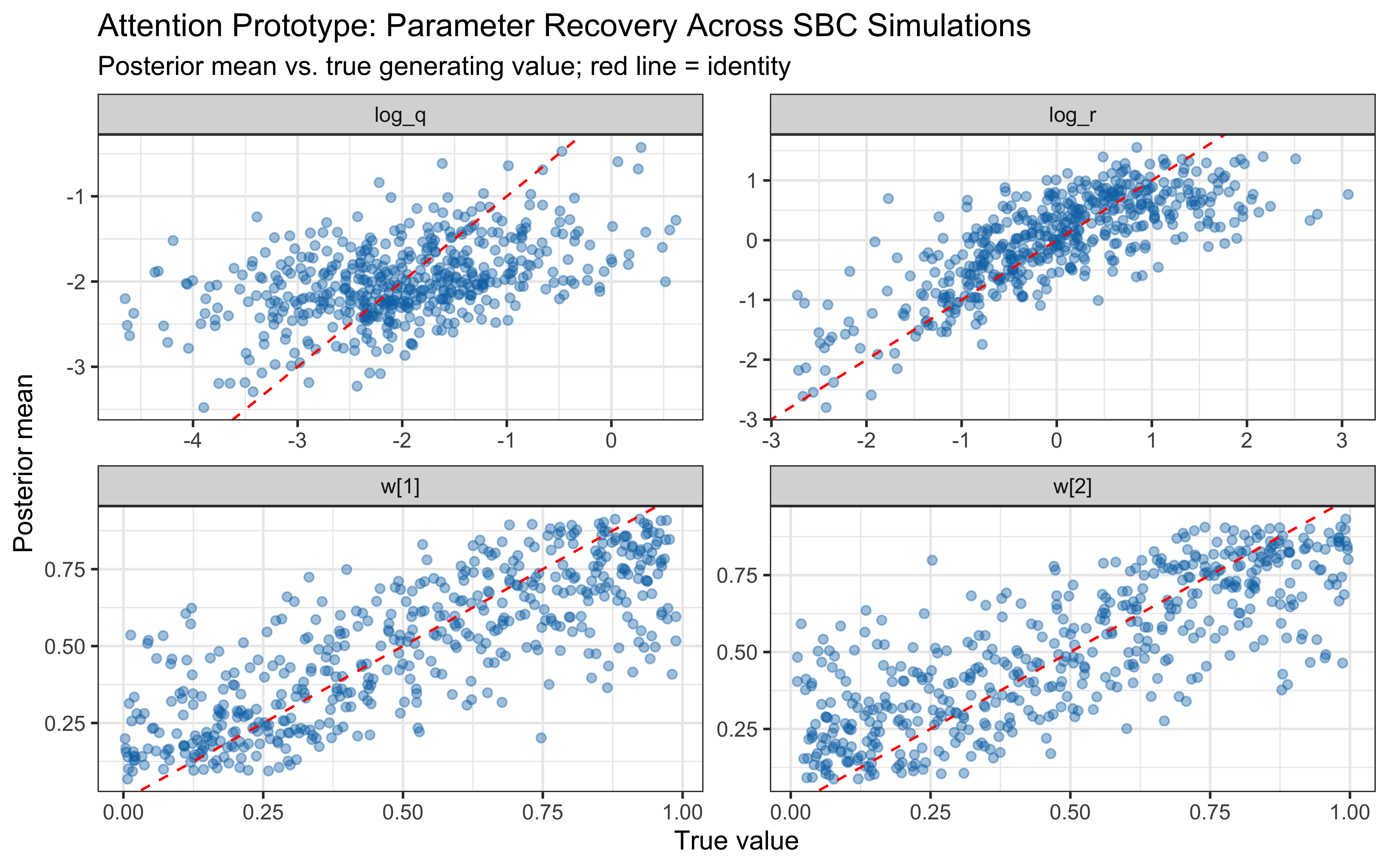

> **📍 Where we are in the Bayesian modeling workflow:**

> Chapter 13 built the GCM — an exemplar model that stores every encountered

> stimulus and decides by summed similarity to memory. This chapter introduces

> a structurally different account: the **prototype model**, which abstracts

> each category to a single running estimate (its mean and uncertainty) rather

> than storing individual instances. We implement prototype learning as a

> multivariate Kalman filter and then add a **Stan parameter-estimation model**

> for the prototype architecture.

>

> The full validation pipeline from Ch. 5 — prior

> predictive check, Pathfinder initialization, mandatory MCMC diagnostic

> battery, posterior predictive check, prior–posterior update plot, randomised

> LOO-PIT, LFO-CV, precision analysis, prior sensitivity,

> and SBC — is applied to this new model. Crucially, the **LFO-CV machinery

> implemented in Chapter 13** (`psis_lfo_gcm`) is generalised here as

> `psis_lfo_kalman` and applied to the prototype model. The chapter then

> extends to a **multilevel prototype model** with population-level priors

> on `log_r`, per-subject NCP offsets, and the full multilevel SBC battery

> (population and individual calibration).

## Rethinking Category Representation: From Examples to Averages

In the previous chapter, we explored the Generalized Context Model (GCM), an exemplar model where every encountered example is stored in memory. This approach is powerful but raises questions about cognitive efficiency. Do we really store every single instance we encounter?

Consider learning about common categories like "birds" or "chairs". While specific examples matter, we also seem to develop a general sense of what constitutes a typical member. Prototype theory offers an alternative perspective: instead of storing individual exemplars, the mind abstracts a summary representation — a prototype — for each category. This prototype often represents the central tendency or "average" member.

## Core Ideas of Prototype Models

| Feature | Prototype Models | Exemplar Models (GCM) |

|---|---|---|

| Representation | Abstract summary (e.g., average) | Collection of individual examples |

| Memory Cost | Low (one prototype per category) | High (potentially all examples) |

| Key Information | Central tendency & variance | Specific instances & their labels |

| Sensitivity | Less sensitive to specific examples | Highly sensitive to specific examples |

::: {.callout-note}

### Historical Context: From Typicality Effects to Dynamic Prototypes

The prototype approach to categorization did not emerge fully formed. It arose as the solution to a specific failure mode of the dominant view (rules) and evolved in a debate with alternative approaches (exemplars).

#### The Classical View and Its Discontents

Through the 1950s and into the 1960s, both philosophers and psychologists took it for granted that concepts had *defining features*: necessary and sufficient conditions that every member must satisfy. A bachelor is an unmarried adult male — full stop. Categorization, on this view, was logical: check the features, apply the rule. The model is appealingly crisp, but Wittgenstein had already noted the trouble in *Philosophical Investigations* (1953): most ordinary categories — *game*, *furniture*, *bird* — resist such definitions. Family resemblance, not shared essence, seemed to be the operative structure.

The empirical broadside came from Eleanor Rosch [@rosch1973natural; @rosch1975cognitive; @rosch1976basic]. In a series of influential experiments she documented **typicality effects**: robins are judged faster and more confidently as birds than penguins are; desk chairs are more chair-like than beanbags. Categories showed graded membership rather than all-or-nothing inclusion. Rosch also identified a privileged **basic level** — the level of abstraction (dog, not animal and not poodle) at which objects are most efficiently perceived, named, and remembered. Neither observation is compatible with the classical view, which predicts uniform membership within a category and no principled distinction across levels.

#### The Prototype as Psychological Mechanism

Rosch's work raised the question of *representation*: if not a rule, what mental structure supports graded membership? The answer on offer was a **prototype** — a summary representation encoding the central tendency of encountered category members. New objects are categorized by their distance from stored prototypes; items close to the prototype are typical, items distant are atypical.

Formal prototype models predated Rosch's program. @posner1968genesis showed that subjects who had studied distorted dot patterns without ever seeing the prototype nonetheless recognized the prototype at the end as highly familiar — abstraction was occurring implicitly. @reed1972pattern implemented an explicit prototype-matching classifier and showed it outperformed several alternatives on face-classification data. These early models computed a **static average** from training examples: the prototype was fixed after learning and then used as a lookup.

#### Static Prototypes and the Exemplar Challenge

The clean elegance of static prototype models made them obvious targets for empirical attack. @medin1978context showed that, on certain category structures, the Context Model (a pure exemplar model) fit human data significantly better than a prototype model could. The critical cases were **exception items**: an atypical member of one category that closely resembles the other category's prototype. Exemplar models accommodate exceptions naturally — the deviant item is stored individually. A static prototype, by definition, assimilates the deviant item into the average, smoothing away the very information that drives behavior.

Through the 1980s and early 1990s, the prototype-versus-exemplar debate generated substantial empirical literature. The consensus, codified in @smith1998prototype, was that neither account was universally superior: prototype-like effects dominated in some conditions (large training sets, brief study times, transfer to novel stimuli) while exemplar-like effects dominated in others (small training sets, extended learning, highly distinctive items). The field was left with a theoretical draw that demanded either a hybrid architecture or a shift in the question being asked.

#### From Static Averaging to Bayesian Updating

We do not take a stance here on the prototype-versus-exemplar debate. The previous chapter implemented exemplar models in some detail; this chapter sticks with prototype representations precisely so we can understand them on their own terms: what changes when the summary representation is allowed to update incrementally, how its parameters are recovered, and how it behaves under different learning environments.

Within that scope, the focus shifts from *what is stored* to *how the stored summary is updated*. A static average treats all observed members the same regardless of when they were encountered, and provides no mechanism for tracking categories that drift over time: the clothing you learn is "fashionable" today may not be fashionable in five years. Several incremental prototype schemes have been proposed in response. The simplest is a **delta rule**: $\mu_{t} = \mu_{t-1} + \alpha\,(x_t - \mu_{t-1})$, with a fixed learning rate $\alpha$ that yields exponentially weighted recency [@gluck1988conditioning; @estes1994classification]. You should recognize this rule from reinforcement learning :-) @anderson1991adaptive's rational model takes a fully Bayesian route, but over a Dirichlet-process partition rather than a parametric prototype. Each captures part of the picture: the delta rule is principled in form but its $\alpha$ is a free knob disconnected from the learner's uncertainty as it faces the stimuli; Anderson's model is Bayesian but solves a different problem (discovering the partition) and is harder to fit at the trial level.

The Kalman filter [@kalman1960new], developed originally for aerospace navigation, sits [I think, nobody else has used it for this!] in a useful intermediate position for prototype modelling. It is the **minimum-variance unbiased estimator** for a linear-Gaussian dynamical system: at each step it computes exactly how much weight to place on the new observation versus the accumulated prior, using the relative magnitudes of measurement noise $R$ and process noise $Q$. The learning rate (the Kalman gain) is not a free parameter but is *derived* at each trial from current uncertainty — recovering a delta-rule-like update whose effective $\alpha$ shrinks as evidence accumulates and grows again when the world is assumed to drift. When $Q = 0$ the prototype is assumed static and the gain decays monotonically — recovering the classical static average as a special case. When $Q > 0$ the prototype can drift and the steady-state gain remains positive, allowing perpetual sensitivity to new observations.

Applications of Kalman-style updating to human learning appear across multiple domains: classical conditioning [@dayan2000learning], interval timing, and reward prediction. The two-parameter family $(r, q)$ instantiates a spectrum from steep, responsive updating ($r$ small, gain high) to heavily smoothed abstraction ($r$ large, gain low, slow forgetting), with $q$ controlling whether the steady-state gain decays to zero or saturates above it.

#### The Bayesian Connection

Importantly for us [well, let's be honest, for me, but I'll take you along for the ride], the Kalman filter is a conceptually Bayesian method: it is the exact Bayesian posterior for a Gaussian state-space model. The prototype at trial $t$ is the posterior mean $\mathbb{E}[\mu_t \mid x_{1:t}]$; the covariance $\Sigma_t$ is the posterior variance. Recognising this identity connects the prototype model to the broader programme of **rational analysis** [@anderson1991adaptive] and **Bayesian models of cognition** [@tenenbaum2011grow]: the learner is performing optimal inference about a latent category structure given noisy, sequentially arriving evidence. The parameters $r$ and $q$ then carry clear psychological interpretations — observation noise (how variable are category members around the prototype?) and process noise (how rapidly does the category itself change?) — rather than being purely phenomenological fitting coefficients.

This framing also clarifies the key difference from the GCM: where the GCM stores the full history and retrieves it at decision time, the Kalman prototype model **compresses** the history into sufficient statistics (mean and covariance) that are updated recursively. The compression is lossless when the generative model is Gaussian and linear — which is why recovery and SBC are cleaner here than in most cognitive models of comparable scope.

:::

## Modeling Dynamic Prototypes: The Kalman Filter

Early prototype models often calculated a static average. However, human learning is dynamic; our understanding evolves with experience. How can we model a prototype that updates incrementally as new examples are encountered?

The Kalman filter provides a suitable mathematical framework. Originally developed for tracking physical systems amidst noise, it's well-suited for modeling prototype learning because it allows us to:

* **Track an Estimate**: The category prototype (its average feature values).

* **Represent Uncertainty**: Maintain not just the prototype's location but also our uncertainty about it.

* **Update Incrementally**: Refine the prototype with each new example.

* **Balance Old and New**: Optimally combine the current prototype (prior belief) with the new example (evidence).

* **Implement an Adaptive Learning Rate**: Automatically adjust how much influence a new example has (the "Kalman gain") based on current uncertainty. Learning is faster when uncertainty is high and slows as confidence grows.

### The Kalman Filter Logic: Updating an Estimate

Imagine tracking a prototype based on a single feature (e.g., average height). The Kalman filter maintains your Current State. At any moment, you have two key pieces of information:

* **Your Best Guess ($\mu$):** This is your current estimate of the prototype's average height.

* **Your Confidence ($\sigma^2$):** This isn't just *if* you're confident, but *how much*. It's the *variance* around your guess. A small variance means you're quite sure; a large variance means you're very unsure.

When a new category member (observation $x$) arrives, you measure its height.

* **The Catch:** Your measurement tool (or the example itself) isn't perfect. There's some inherent "noise" or variability in how examples relate to the true prototype. We represent the uncertainty of this *measurement process* with another variance, often called $R$.

**The Core Problem:** You have your guess (with $\sigma^2_{prev}$) and a new measurement (with $R$). How do you combine them to get a *new, better guess* and an *updated level of uncertainty*?

**Enter the Kalman Gain ($K$): The "Trust" Knob**

The Kalman filter calculates the Kalman Gain $K$, which tells you how much to trust the *new measurement* compared to your *current guess*:

$$K = \frac{\sigma^2_{prev}}{\sigma^2_{prev} + R}$$

If $\sigma^2_{prev}$ is large relative to $R$, then $K \to 1$: trust the measurement almost completely. If $\sigma^2_{prev}$ is small relative to $R$, then $K \to 0$: stick closer to your previous guess.

**Updating Your Guess:**

You nudge your old guess towards the new measurement. The size of the nudge is determined by the Kalman Gain $K$:

$$\mu_{new} = \mu_{prev} + K \cdot (x - \mu_{prev})$$

The term $(x - \mu_{prev})$ is the "prediction error" or "innovation" — how much the new data differs from what you expected.

**Updating Your Confidence (Uncertainty):**

After incorporating new information, uncertainty decreases:

$$\sigma^2_{new} = (1 - K) \cdot \sigma^2_{prev}$$

Since $K \in (0, 1)$, multiplying by $(1 - K)$ always shrinks uncertainty. The more you trusted the measurement (higher $K$), the more your uncertainty shrinks.

**Extending to drifting targets.** The scalar Kalman filter above assumes a static target: uncertainty monotonically decreases because there is no mechanism for it to grow back. The prototype model in this chapter adds a **process noise term** $Q$. Before each measurement update, a *prediction step* is applied:

$$\sigma^2_{k|k-1} = \sigma^2_{k-1|k-1} + Q$$

This increases uncertainty between observations, reflecting the possibility that the true prototype has shifted since the last trial. The subsequent measurement update then decreases uncertainty via the Kalman gain, exactly as before. At steady state, the two forces balance and uncertainty converges to a fixed value rather than shrinking to zero. When $Q = 0$ the model reduces to the static-target filter. We treat the scalar $q$ (the diagonal of $Q = q \cdot I$) as a free parameter to be estimated from data, alongside the observation noise $r$.

### Extending to Multiple Features: Multivariate Kalman Filter

Our stimuli have two features (height and position). We need a multivariate version:

* **Your Current State (Vectors and Matrices):**

* **Your Best Guess ($\vec{\mu}$):** Now a *vector* containing your best guess for each feature.

* **Your Confidence ($\Sigma$):** Now a *covariance matrix*. The diagonal elements give the variance for each feature individually; the off-diagonal elements give their covariance — for example, whether taller prototypes are also typically further to the right.

* **A New Hint Arrives:** Your new observation ($\vec{x}$) is also a vector.

* **Measurement Noise ($R$):** Also a covariance matrix. Often simplified to a diagonal matrix, assuming measurement errors on different features are independent.

* **The Logic Stays the Same, the Math Uses Matrices:**

* **Kalman Gain ($K$):** A *matrix*: $K = \Sigma_{prev} (\Sigma_{prev} + R)^{-1}$

* **Updating Your Guess ($\vec{\mu}$):** $\vec{\mu}_{new} = \vec{\mu}_{prev} + K (\vec{x} - \vec{\mu}_{prev})$

* **Updating Your Confidence ($\Sigma$):** The intuition is the same as the scalar case — uncertainty shrinks after each observation — but now in matrix form. The textbook update is $\Sigma_{new} = (I - K)\Sigma_{prev}$, which can be read as: "keep the share of the prior uncertainty that the new observation did *not* explain away." In code, however, we use the algebraically equivalent Joseph form, $\Sigma_{new} = (I - K)\Sigma_{prev}(I - K)^T + KRK^T$. This longer expression makes the two sources of remaining uncertainty explicit: the leftover prior uncertainty (first term) plus the measurement noise that leaks in through the Kalman gain (second term). The reason for using it in practice is purely numerical: a covariance matrix must be symmetric and have non-negative variances, and the Joseph form preserves both properties even when small rounding errors accumulate over many trials, whereas the shorter form can drift into invalid (asymmetric or negative-variance) territory.

### In a Nutshell

The Kalman filter tracks a prototype as a running estimate $\vec{\mu}$ and a covariance $\Sigma$ that quantifies how confident the learner is. Each trial has two phases: a *predict* step that inflates $\Sigma$ by $Q$ to allow the prototype to drift between observations, and an *update* step that pulls $\vec{\mu}$ toward the new exemplar by an amount set by the Kalman gain $K$ — high when the learner is uncertain or measurements are precise, low when the learner is already confident or measurements are noisy. The gain also determines how much $\Sigma$ shrinks. At steady state, drift ($Q$) and shrinkage balance, so uncertainty stops collapsing to zero and the model keeps learning indefinitely.

### Implementing the Multivariate Update in R

```{r ch13_multivariate_kalman_update}

# One update step of a multivariate Kalman filter.

# Returns updated mean vector, covariance matrix, and Kalman gain.

multivariate_kalman_update <- function(mu_prev, # previous mean vector

sigma_prev, # previous covariance matrix

observation, # observed feature vector

r_matrix) { # observation noise matrix

mu_prev <- as.numeric(mu_prev)

observation <- as.numeric(observation)

sigma_prev <- as.matrix(sigma_prev)

r_matrix <- as.matrix(r_matrix)

n_dim <- length(mu_prev)

I <- diag(n_dim)

# Kalman gain: K = Sigma_prev * (Sigma_prev + R)^{-1}

S <- sigma_prev + r_matrix

S_inv <- tryCatch(

solve(S),

error = function(e) {

warning("Matrix inversion failed; using pseudo-inverse.", call. = FALSE)

MASS::ginv(S)

}

)

K <- sigma_prev %*% S_inv

# Update mean

innovation <- observation - mu_prev

mu_new <- as.numeric(mu_prev + K %*% innovation)

# Update covariance — Joseph form for numerical stability

IK <- I - K

sigma_new <- IK %*% sigma_prev %*% t(IK) + K %*% r_matrix %*% t(K)

# Enforce symmetry (floating-point can introduce tiny asymmetry)

sigma_new <- (sigma_new + t(sigma_new)) / 2

list(mu = mu_new, sigma = sigma_new, k = K)

}

```

This function performs a single update for one category's prototype when it observes a new member.

---

We are fitting a *two-parameter* model: $r$ controls both Kalman gain and decisional precision; $q$ controls prototype drift speed and steady-state uncertainty. Both are inferred from data on the log scale. There is no category bias, fixed initial means, fixed initial covariances, and diagonal noise structures for both $R$ and $Q$. The Stan implementation in §"The Prototype Model in Stan" implements exactly this.

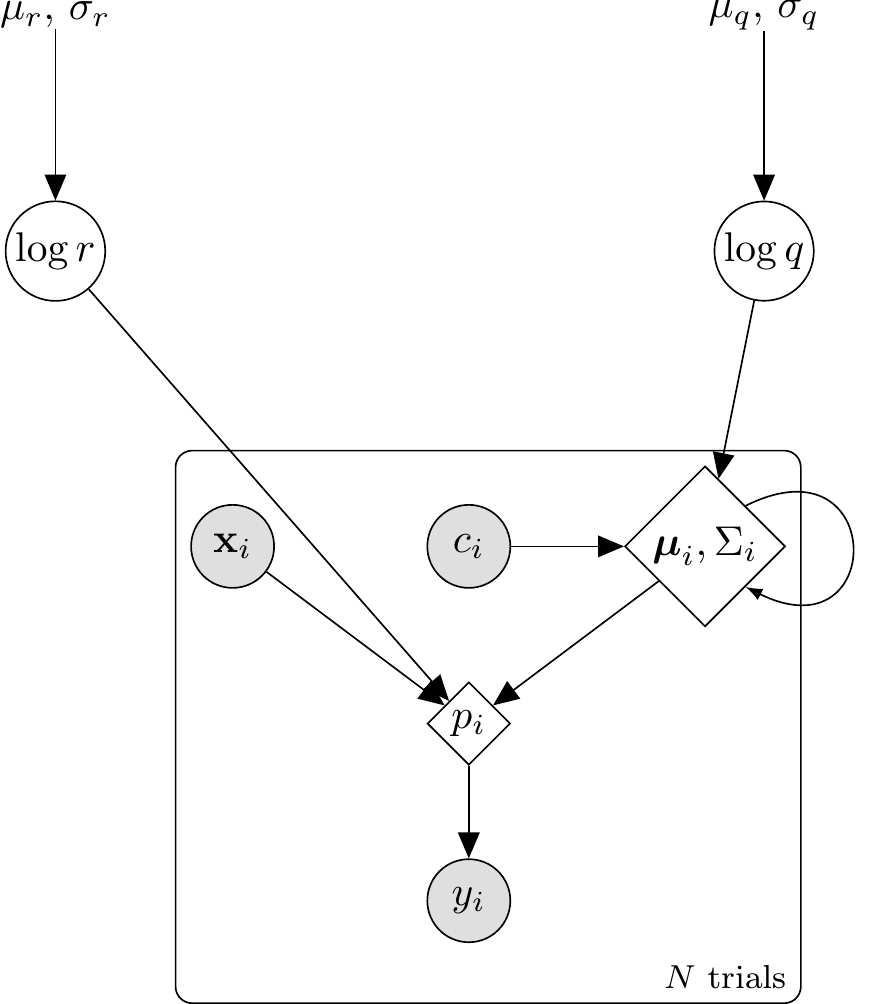

```{tikz prototype-single-dag, fig.cap = "DAG for the single-subject prototype (Kalman) model. Hyperparameters (dots) feed into the two latent parameters (circles): $\\log r$ (observation noise) and $\\log q$ (process noise). Inside the trial plate, the stimulus $\\mathbf{x}_i$ and feedback $c_i$ combine with the carried-forward state $(\\boldsymbol{\\mu}_i, \\Sigma_i)$ — which is updated across trials via the Kalman predict/update steps — to yield the deterministic choice probability $p_i$ (double circle), from which the response $y_i$ is drawn. The self-loop on the state node represents the sequential prototype update.", fig.align = "center", out.width = "70%"}

\usetikzlibrary{bayesnet}

\begin{tikzpicture}

% ── Hyperparameters (const) ─────────────────────────────

\node[const] (hyp_r) at (0, 0) {$\mu_r,\,\sigma_r$};

\node[const] (hyp_q) at (6, 0) {$\mu_q,\,\sigma_q$};

% ── Latent parameters ───────────────────────────────────

\node[latent] (logr) at (0, -2) {$\log r$};

\node[latent] (logq) at (6, -2) {$\log q$};

% ── Trial-level nodes (inside plate) ────────────────────

\node[obs] (xi) at (1.5, -4.5) {$\mathbf{x}_i$};

\node[obs] (ci) at (3.5, -4.5) {$c_i$};

\node[det] (state) at (5.5, -4.5) {$\boldsymbol{\mu}_i,\Sigma_i$};

\node[det] (pi) at (3.5, -6.0) {$p_i$};

\node[obs] (yi) at (3.5, -7.5) {$y_i$};

% ── Edges ───────────────────────────────────────────────

\edge {hyp_r} {logr};

\edge {hyp_q} {logq};

\edge {logq} {state};

\edge {ci} {state};

\edge {state} {pi};

\edge {xi} {pi};

\edge {logr} {pi};

\edge {pi} {yi};

% ── Self-loop on state (sequential update) ─────────────

\draw[->, >=latex] (state.north east) .. controls +(1.2,0.6) and +(1.2,-0.6) .. (state.south east);

% ── Plate ───────────────────────────────────────────────

\plate {trial} {(xi)(ci)(state)(pi)(yi)} {$N$ trials};

\end{tikzpicture}

```

---

## Building the Full Categorization Model

We now integrate this update mechanism into a full agent that learns prototypes for two categories and makes categorization decisions.

### Initialization

* We need separate prototypes ($\mu_0, \Sigma_0$ and $\mu_1, \Sigma_1$) for Category 0 and Category 1.

* We start with high uncertainty (large initial $\Sigma$) and uninformative means at the center of the feature space.

* We define the observation noise matrix $R = r_\text{val} \cdot I$. The parameter $r_\text{val}$ represents how much variability we assume exists within categories or in our perception of stimuli.

* We define the process noise matrix $Q = q_\text{val} \cdot I$. The parameter $q_\text{val}$ controls how much the category prototype may drift between successive observations. Small $q$ means near-static categories; large $q$ means rapidly shifting ones.

### Categorization Decision

How does the model decide which category a new stimulus $\vec{x}$ belongs to? The decision has three steps: compute a **distance** from the stimulus to each prototype, convert distance to **similarity**, then apply a **choice rule**.

**Step 1 — Distance.** The distance between stimulus $\vec{x}$ and category $C$'s prototype is the Mahalanobis distance:

$$d_C = (\vec{x} - \vec{\mu}_C)^T (\Sigma_C + R)^{-1} (\vec{x} - \vec{\mu}_C)$$

Breaking this down for two features (height and position):

$$d_C = \frac{(x_\text{height} - \mu_{C,\text{height}})^2}{\sigma_{C,\text{height}}^2 + r} + \frac{(x_\text{position} - \mu_{C,\text{position}})^2}{\sigma_{C,\text{position}}^2 + r}$$

Each feature contributes its squared difference divided by total uncertainty on that dimension. The three quantities that enter are:

* $\vec{\mu}_C$: the prototype mean — the Kalman filter's current best estimate of the category centre.

* $\Sigma_C$: the prototype uncertainty — a matrix tracking how confident the filter is about that estimate. It starts large (high uncertainty) and shrinks as more examples are seen. High uncertainty on dimension $k$ means differences on that dimension count for less in the distance.

* $R = r \cdot I$: observation noise — a fixed matrix reflecting how variable category members are around the prototype, or how noisy perception is. Together with $\Sigma_C$, it forms the total uncertainty $\Sigma_C + R$ that normalises each squared difference.

**Step 2 — Similarity.** Distance is converted to similarity by an exponential decay:

$$\eta_C = \exp\!\left(-\tfrac{1}{2}\, d_C\right)$$

This is the multivariate normal density evaluated at $\vec{x}$. Similarity is 1 when the stimulus sits exactly on the prototype mean ($d_C = 0$) and falls toward 0 as distance grows. The parameter $r$ controls how steeply: large $r$ inflates the denominators in $d_C$, shrinks the distance, and produces a flat bell curve — the model generalises broadly. Small $r$ produces a sharp bell curve — only stimuli very close to the prototype receive high similarity. This is the role that the sensitivity parameter $c$ plays in the GCM's $\exp(-c \cdot d)$; here it is embedded inside the distance rather than sitting outside it.

**Step 3 — Choice rule.** The probability of choosing Category 1 is the proportion of total similarity belonging to that category (Luce choice rule):

$$P(\text{Choose Cat 1} \mid \vec{x}) = \frac{\eta_1}{\eta_0 + \eta_1}$$

We work with log-probabilities for numerical stability. There is no separate softmax temperature: $r$ already controls decisional sharpness through the similarity step.

### The Learning Loop

The agent processes trials sequentially:

1. **Predict**: Add $Q$ to both category covariances — $\Sigma_{0} \mathrel{+}= Q$, $\Sigma_{1} \mathrel{+}= Q$ — reflecting that each prototype may have drifted since the last observation.

2. **Decide**: Calculate $P(\text{Choose Cat 1} \mid \vec{x}_i)$ based on the post-prediction $\mu_0, \Sigma_0, \mu_1, \Sigma_1$.

3. **Respond**: Generate a response.

4. **Receive feedback**: Observe true category $y_i$.

5. **Update**: Apply the Kalman measurement update to the correct category only.

### R Implementation of the Prototype Agent

Each agent's learning trajectory depends on the order in which stimuli are encountered, and different randomizations produce genuinely different trajectories.

```{r ch13_prototype_model}

# Prototype model agent using Kalman filter with process noise for categorization.

# r_value : observation noise variance (scalar > 0)

# q_value : process noise variance / prototype drift rate (scalar >= 0)

# obs : matrix of observations (trials x features)

# cat_one : vector of true category labels (0 or 1) for feedback

# Returns: tibble with prob_cat1 and sim_response

prototype_kalman <- function(r_value,

q_value,

obs,

cat_one,

initial_mu = NULL,

initial_sigma_diag = 10.0,

quiet = TRUE) {

n_trials <- nrow(obs)

n_features <- ncol(obs)

if (is.null(initial_mu)) {

mu0_init <- rep(2.5, n_features)

mu1_init <- rep(2.5, n_features)

} else {

mu0_init <- initial_mu[[1]]

mu1_init <- initial_mu[[2]]

}

prototype_cat_0 <- list(mu = mu0_init, sigma = diag(initial_sigma_diag, n_features))

prototype_cat_1 <- list(mu = mu1_init, sigma = diag(initial_sigma_diag, n_features))

r_matrix <- diag(r_value, n_features)

q_matrix <- diag(q_value, n_features)

response_probs <- numeric(n_trials)

log_sum_exp <- function(v) {

m <- max(v)

m + log(sum(exp(v - m)))

}

for (i in seq_len(n_trials)) {

if (!quiet && i %% 20 == 0) cat("Trial", i, "\n")

current_obs <- as.numeric(obs[i, ])

# ── Prediction step: add process noise to both prototypes ──────────────

# Reflects potential drift in category location since last trial.

# When q_value = 0 this reduces to the static-target filter.

prototype_cat_0$sigma <- prototype_cat_0$sigma + q_matrix

prototype_cat_1$sigma <- prototype_cat_1$sigma + q_matrix

# ── Decision ─────────────────────────────────────────────────────────────

cov_cat_0 <- prototype_cat_0$sigma + r_matrix

cov_cat_1 <- prototype_cat_1$sigma + r_matrix

log_prob_0 <- tryCatch(

mvtnorm::dmvnorm(current_obs, mean = prototype_cat_0$mu,

sigma = cov_cat_0, log = TRUE),

error = function(e) -Inf

)

log_prob_1 <- tryCatch(

mvtnorm::dmvnorm(current_obs, mean = prototype_cat_1$mu,

sigma = cov_cat_1, log = TRUE),

error = function(e) -Inf

)

if (!is.finite(log_prob_0) && !is.finite(log_prob_1)) {

prob_cat_1 <- 0.5

} else if (!is.finite(log_prob_0)) {

prob_cat_1 <- 1.0

} else if (!is.finite(log_prob_1)) {

prob_cat_1 <- 0.0

} else {

prob_cat_1 <- exp(log_prob_1 - log_sum_exp(c(log_prob_0, log_prob_1)))

}

response_probs[i] <- pmax(1e-9, pmin(1 - 1e-9, prob_cat_1))

# ── Update (measurement update for the correct category only) ────────────

true_cat <- cat_one[i]

if (true_cat == 1) {

upd <- multivariate_kalman_update(prototype_cat_1$mu, prototype_cat_1$sigma,

current_obs, r_matrix)

prototype_cat_1$mu <- upd$mu

prototype_cat_1$sigma <- upd$sigma

} else {

upd <- multivariate_kalman_update(prototype_cat_0$mu, prototype_cat_0$sigma,

current_obs, r_matrix)

prototype_cat_0$mu <- upd$mu

prototype_cat_0$sigma <- upd$sigma

}

}

tibble(

prob_cat1 = response_probs,

sim_response = rbinom(n_trials, 1, response_probs)

)

}

# Wrapper: generates per-agent schedule then calls prototype_kalman

simulate_prototype_agent <- function(agent_id, r_value, q_value,

stimulus_info, n_blocks, subject_seed) {

schedule <- make_subject_schedule(stimulus_info, n_blocks, seed = subject_seed)

obs <- as.matrix(schedule[, c("height", "position")])

cat_one <- schedule$category_feedback

result <- prototype_kalman(

r_value = r_value,

q_value = q_value,

obs = obs,

cat_one = cat_one,

initial_mu = list(rep(2.5, 2), rep(2.5, 2)),

initial_sigma_diag = 10.0,

quiet = TRUE

)

schedule |>

mutate(

agent_id = agent_id,

r_value_true = r_value,

q_value_true = q_value,

log_r_true = log(r_value),

log_q_true = log(q_value),

prob_cat1 = result$prob_cat1,

sim_response = result$sim_response,

correct = as.integer(category_feedback == sim_response)

) |>

group_by(agent_id) |>

mutate(performance = cumsum(correct) / row_number()) |>

ungroup()

}

```

### Simulating Categorization Behavior

Let's simulate behavior on the Kruschke (1993) task. The key parameter here is `r_value`, the observation noise. This parameter reflects the model's assumption about how much variability exists within categories or arises from perceptual noise.

* **Low `r_value`**: The model assumes observations are precise representations of the category; each new example exerts strong influence and prototypes update rapidly.

* **High `r_value`**: The model assumes observations are noisy; learning is slower as each example has less influence, but the model generalizes more broadly.

```{r ch13_simulate_prototype_responses}

param_df <- expand_grid(

agent_id = 1:5,

r_value = c(0.5, 2.0),

q_value = c(0.001, 0.1, 0.5)

) |>

mutate(subject_seed = agent_id)

sim_file <- here("simdata", "ch13_prototype_simulated_responses.csv")

if (regenerate_simulations || !file.exists(sim_file)) {

cat("Regenerating prototype simulations...\n")

prototype_responses <- future_pmap_dfr(

list(

agent_id = param_df$agent_id,

r_value = param_df$r_value,

q_value = param_df$q_value,

subject_seed = param_df$subject_seed

),

simulate_prototype_agent,

stimulus_info = stimulus_info,

n_blocks = n_blocks,

.options = furrr_options(seed = TRUE)

)

write_csv(prototype_responses, sim_file)

cat("Simulations saved.\n")

} else {

prototype_responses <- read_csv(sim_file, show_col_types = FALSE)

cat("Simulations loaded.\n")

}

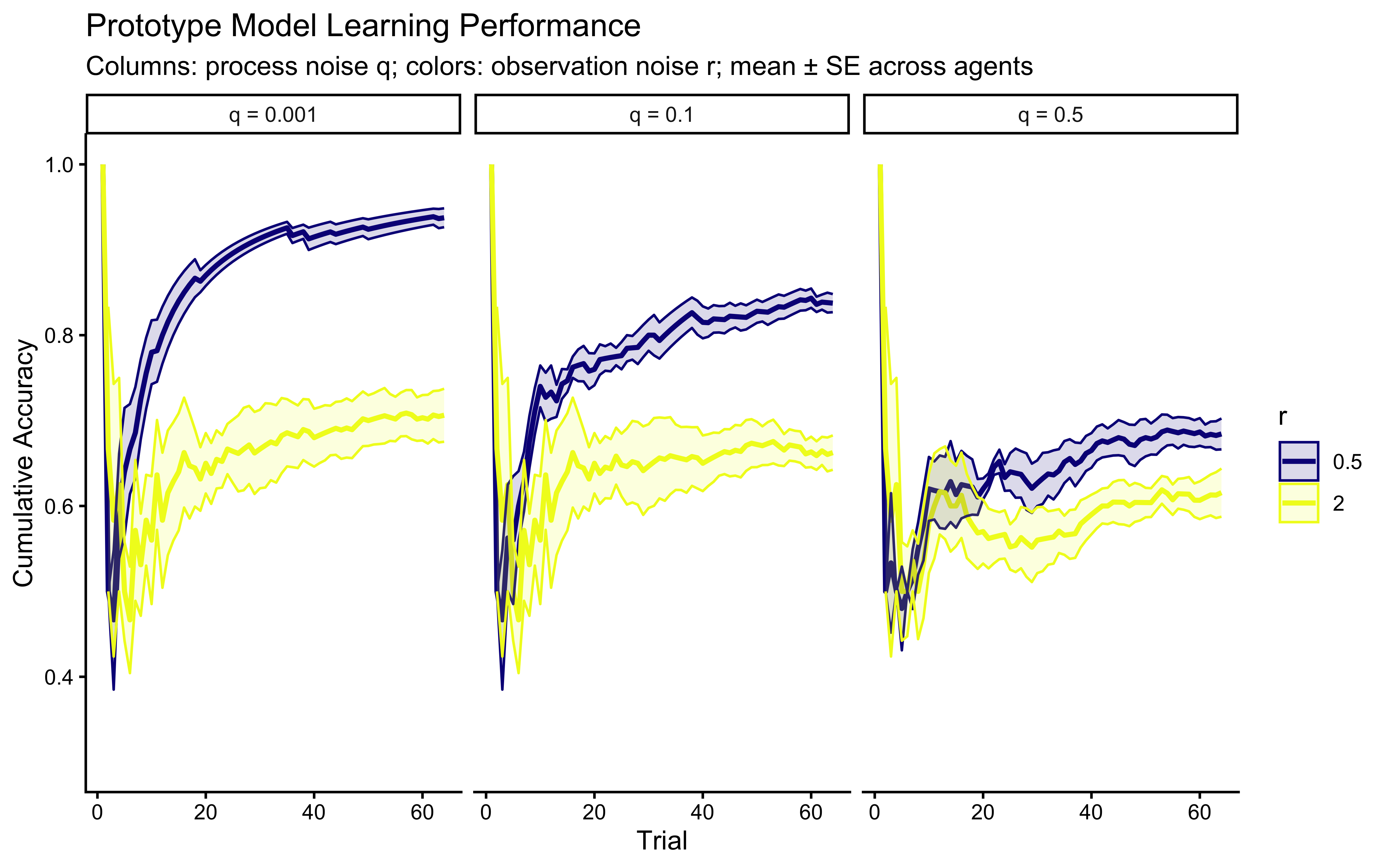

ggplot(prototype_responses,

aes(x = trial_within_subject, y = performance, color = factor(r_value_true))) +

stat_summary(fun = mean, geom = "line", linewidth = 1) +

stat_summary(fun.data = mean_se, geom = "ribbon", alpha = 0.15,

aes(fill = factor(r_value_true))) +

scale_color_viridis_d(option = "plasma", name = "r") +

scale_fill_viridis_d(option = "plasma", name = "r") +

facet_wrap(~paste0("q = ", q_value_true), ncol = 3) +

labs(

title = "Prototype Model Learning Performance",

subtitle = "Columns: process noise q; colors: observation noise r; mean ± SE across agents",

x = "Trial", y = "Cumulative Accuracy"

) +

ylim(0.3, 1.0)

```

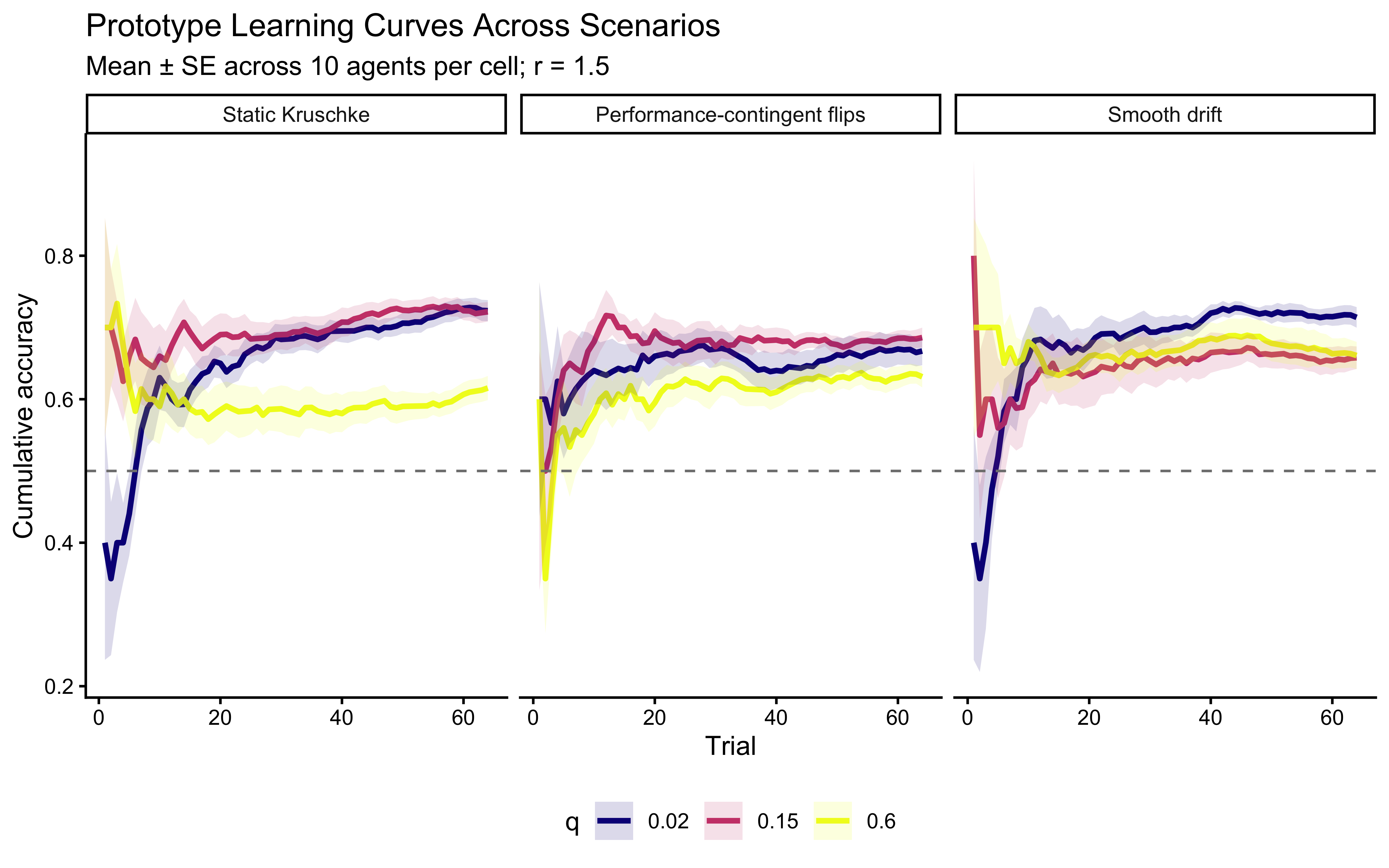

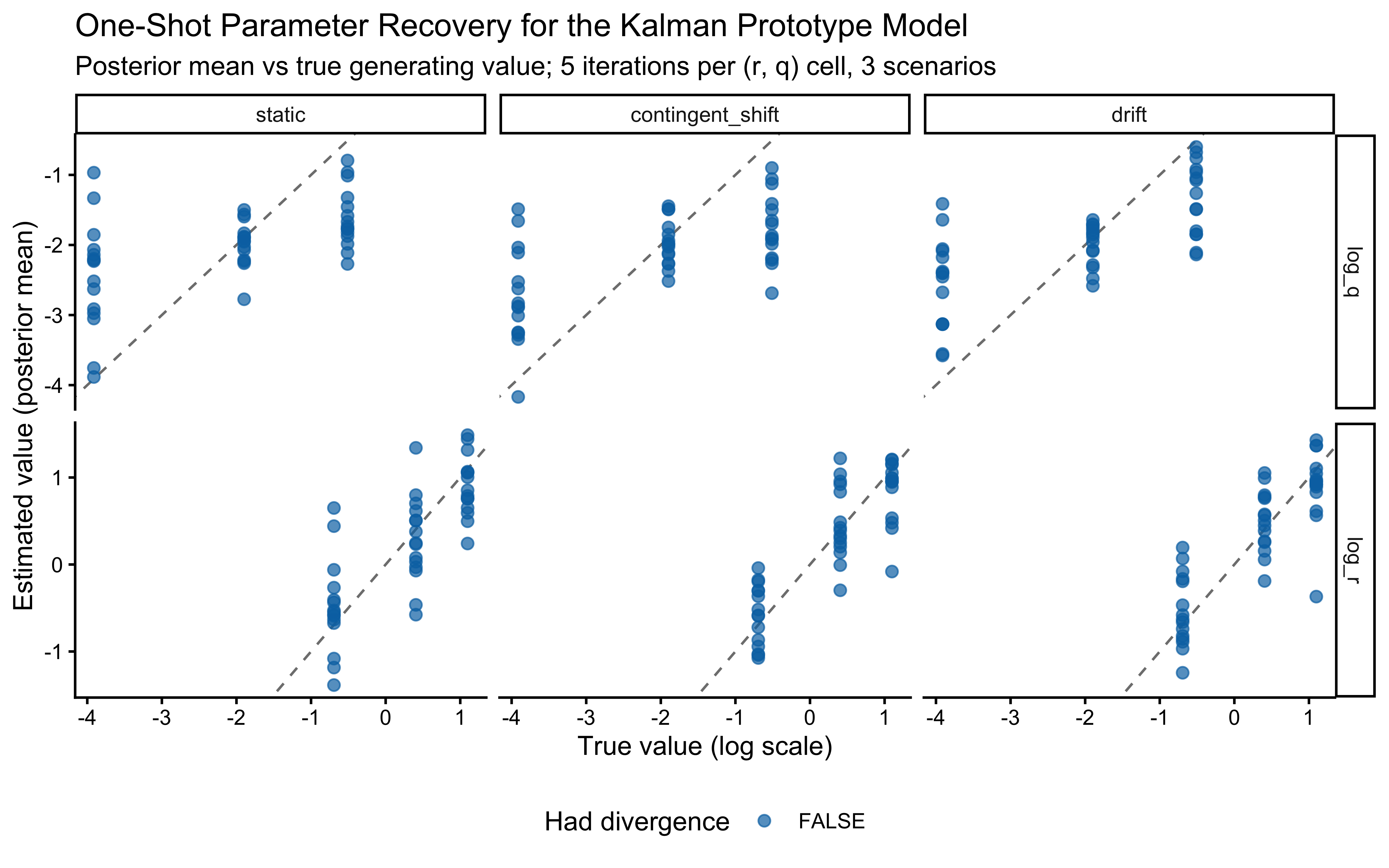

**Interpretation**: The panel structure separates the effects of $r$ (colors) and $q$ (columns). Horizontally (fixed $q$), higher $r$ slows learning and lowers asymptotic accuracy. Vertically (fixed $r$), higher $q$ introduces persistent uncertainty — the model cannot converge to a stable prototype, so accuracy may plateau below ceiling or even oscillate slightly. When $q = 0$ the model reduces to a static-target kalman filter (assumptions of categories as static). The key pedagogical point: $q$ and $r$ are not redundant — they affect different phases of the learning curve and, in non-stationary environments, should become separately identifiable. But we should have learned a lesson from the previous chapter and not assume: let's see if they are identifiable in practice.

### Visualizing Prototype Evolution

Where do the prototypes end up? Let's track their movement and uncertainty over trials.

```{r ch13_track_prototypes}

# Track prototype means and covariance matrices over the course of learning.

# Returns a tibble with one row per (trial × category).

track_prototypes <- function(r_value, obs, cat_one,

q_value = 0,

initial_mu = NULL, initial_sigma_diag = 10.0) {

n_trials <- nrow(obs)

n_features <- ncol(obs)

if (is.null(initial_mu)) {

mu0_init <- rep(2.5, n_features)

mu1_init <- rep(2.5, n_features)

} else {

mu0_init <- initial_mu[[1]]

mu1_init <- initial_mu[[2]]

}

prototype_cat_0 <- list(mu = mu0_init, sigma = diag(initial_sigma_diag, n_features))

prototype_cat_1 <- list(mu = mu1_init, sigma = diag(initial_sigma_diag, n_features))

r_matrix <- diag(r_value, n_features)

history <- list()

for (i in seq_len(n_trials + 1)) {

history[[length(history) + 1]] <- tibble(

trial = i, category = 0,

feature1_mean = prototype_cat_0$mu[1],

feature2_mean = prototype_cat_0$mu[2],

cov_matrix = list(prototype_cat_0$sigma)

)

history[[length(history) + 1]] <- tibble(

trial = i, category = 1,

feature1_mean = prototype_cat_1$mu[1],

feature2_mean = prototype_cat_1$mu[2],

cov_matrix = list(prototype_cat_1$sigma)

)

if (i <= n_trials) {

# Prediction step

prototype_cat_0$sigma <- prototype_cat_0$sigma + diag(q_value, n_features)

prototype_cat_1$sigma <- prototype_cat_1$sigma + diag(q_value, n_features)

current_obs <- as.numeric(obs[i, ])

true_cat <- cat_one[i]

if (true_cat == 1) {

upd <- multivariate_kalman_update(prototype_cat_1$mu, prototype_cat_1$sigma,

current_obs, r_matrix)

prototype_cat_1$mu <- upd$mu

prototype_cat_1$sigma <- upd$sigma

} else {

upd <- multivariate_kalman_update(prototype_cat_0$mu, prototype_cat_0$sigma,

current_obs, r_matrix)

prototype_cat_0$mu <- upd$mu

prototype_cat_0$sigma <- upd$sigma

}

}

}

bind_rows(history)

}

# Ellipse helper for uncertainty visualization

get_ellipse <- function(mu, sigma, level = 0.68) {

mu <- as.numeric(mu)

sigma <- as.matrix(sigma)

if (length(mu) != 2 || !all(dim(sigma) == c(2, 2))) return(NULL)

ev <- eigen(sigma, symmetric = TRUE, only.values = TRUE)$values

if (any(ev <= 1e-6)) sigma <- sigma + diag(ncol(sigma)) * 1e-6

pts <- tryCatch(

ellipse::ellipse(sigma, centre = mu, level = level),

error = function(e) NULL

)

if (is.null(pts)) return(NULL)

as_tibble(pts) |> setNames(c("feature1_mean", "feature2_mean"))

}

# Use a fixed seed-1 schedule for the trajectory visualization

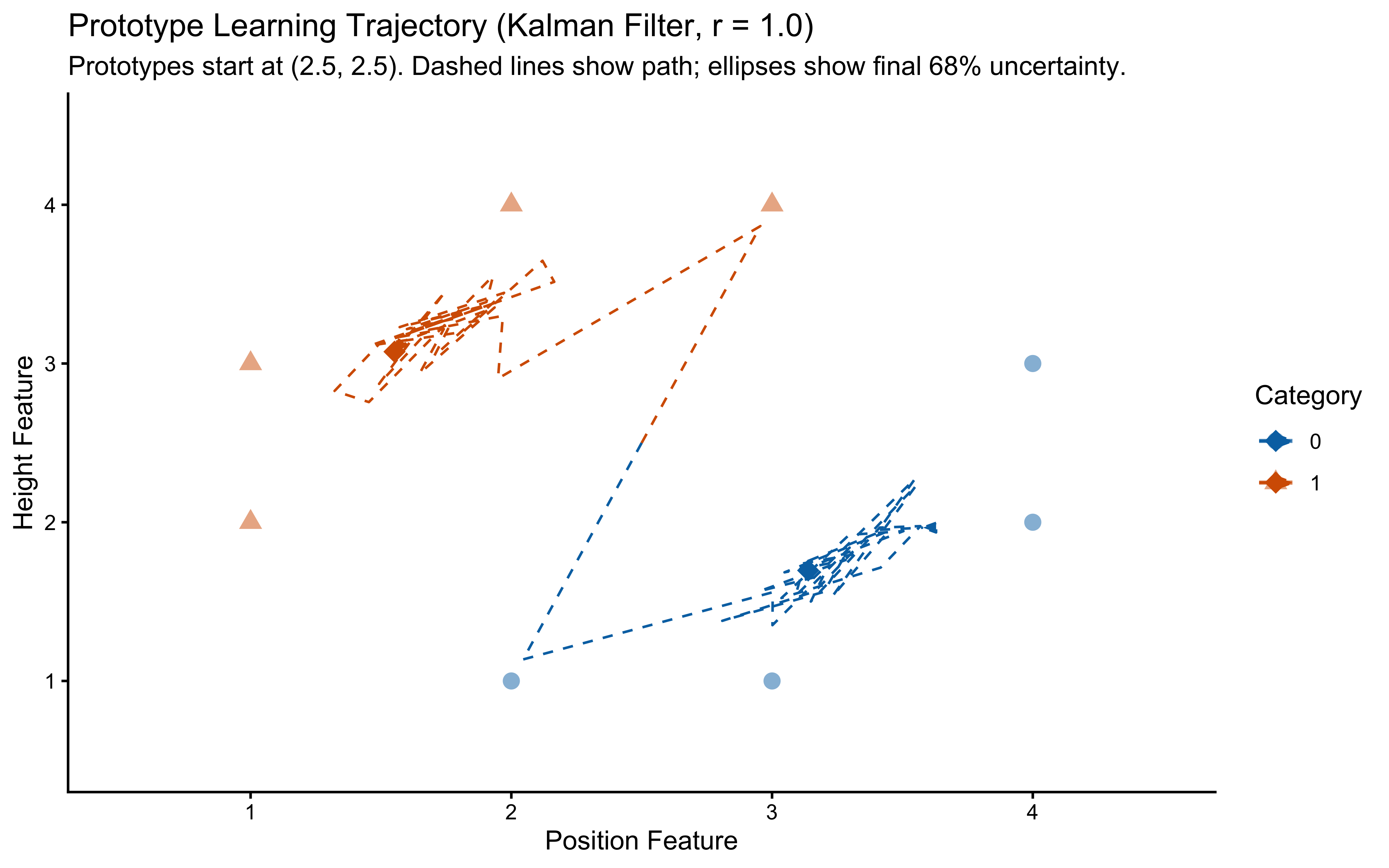

traj_schedule <- make_subject_schedule(stimulus_info, n_blocks, seed = 1)

prototype_trajectory <- track_prototypes(

r_value = 1.0,

q_value = 0.05,

obs = as.matrix(traj_schedule[, c("height", "position")]),

cat_one = traj_schedule$category_feedback,

initial_mu = list(rep(2.5, 2), rep(2.5, 2)),

initial_sigma_diag = 10.0

)

final_prototypes <- prototype_trajectory |> filter(trial == max(trial))

ellipse_data <- final_prototypes |>

rowwise() |>

mutate(ell = list(get_ellipse(c(feature1_mean, feature2_mean), cov_matrix[[1]]))) |>

ungroup() |>

filter(!map_lgl(ell, is.null)) |>

unnest(ell)

ggplot() +

geom_point(data = stimulus_info,

aes(x = position, y = height, color = factor(category_true),

shape = factor(category_true)),

size = 3, alpha = 0.5) +

geom_path(

data = prototype_trajectory |> filter(trial <= total_trials),

aes(x = feature2_mean, y = feature1_mean,

group = category, color = factor(category)),

linetype = "dashed",

arrow = arrow(type = "closed", length = unit(0.08, "inches"), angle = 20)

) +

geom_point(data = final_prototypes,

aes(x = feature2_mean, y = feature1_mean, color = factor(category)),

size = 4, shape = 18) +

geom_path(data = ellipse_data,

aes(x = feature2_mean, y = feature1_mean,

group = category, color = factor(category)),

alpha = 0.7, linewidth = 0.8) +

scale_color_manual(values = c("0" = "#0072B2", "1" = "#D55E00"), name = "Category") +

scale_shape_manual(values = c("0" = 16, "1" = 17), name = "Category") +

labs(

title = "Prototype Learning Trajectory (Kalman Filter, r = 1.0)",

subtitle = "Prototypes start at (2.5, 2.5). Dashed lines show path; ellipses show final 68% uncertainty.",

x = "Position Feature", y = "Height Feature"

) +

coord_cartesian(

xlim = range(c(stimulus_info$position, prototype_trajectory$feature2_mean)) + c(-0.5, 0.5),

ylim = range(c(stimulus_info$height, prototype_trajectory$feature1_mean)) + c(-0.5, 0.5)

)

```

**Interpretation**: The dashed lines show the path the prototypes took during learning (starting from (2.5, 2.5), the center of the feature space). The final prototype locations are marked with diamonds, and the ellipses represent the model's final uncertainty (68% confidence) about the true prototype location for each category. We see the prototypes moving towards the center of their respective category members and uncertainty shrinking over time — exactly the incremental, variance-reducing Bayesian update the Kalman filter implements. The shrinking ellipses indicate that once the agent has seen enough exemplars, the covariances are small enough that further learning is negligible, and the prototypes are effectively frozen.

---

## Prior Predictive Check

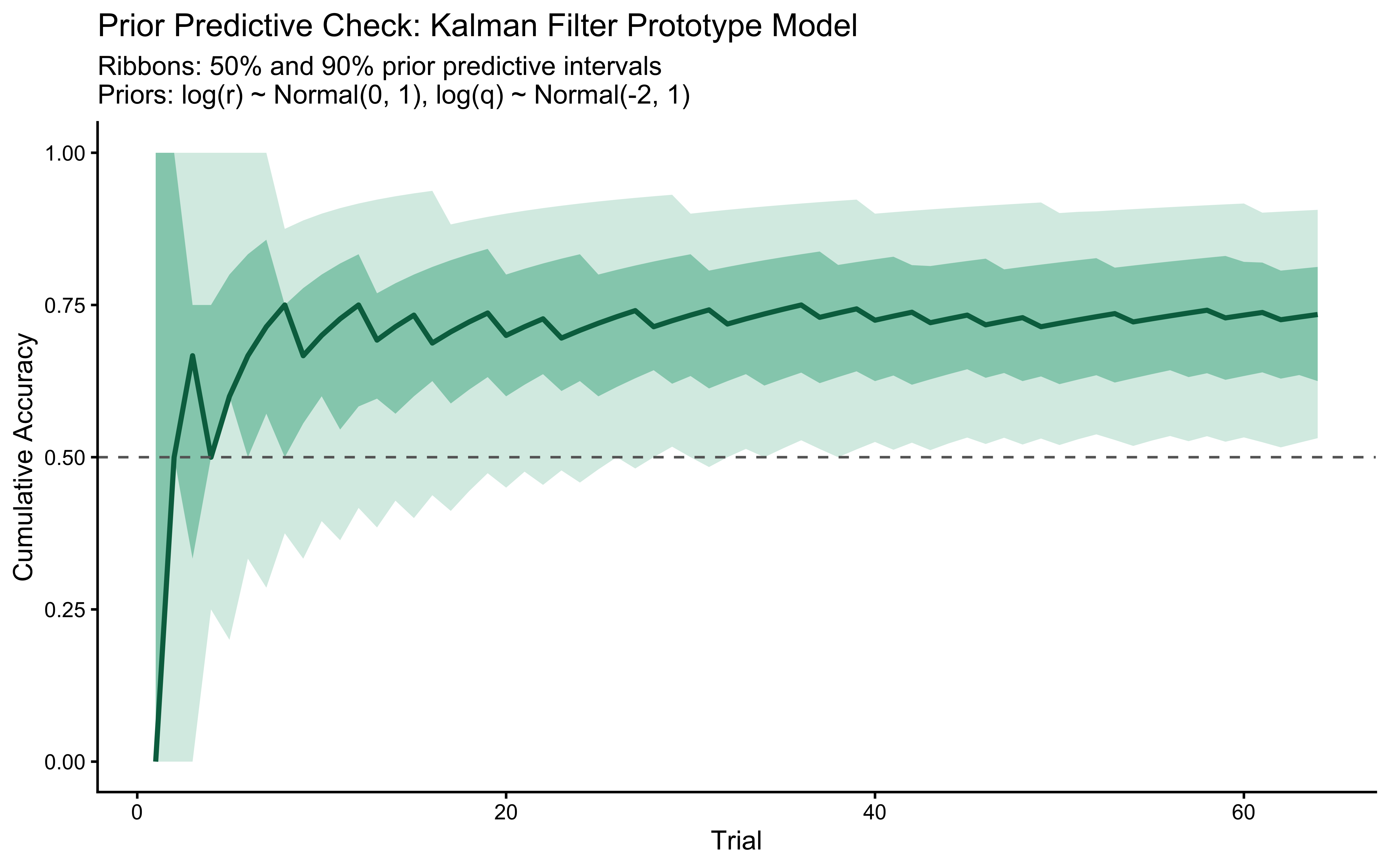

Before fitting any data, we verify that the prior on `log_r` generates only scientifically plausible learning trajectories. We draw $S = 500$ values from $\log r \sim \text{Normal}(0, 1)$ — implying $r \in (0.13, 7.4)$ at $\pm 2$ SD — simulate one agent per draw, and plot the envelope.

```{r ch13_prior_predictive_check}

n_ppc_samples <- 500

ppc_log_r <- rnorm(n_ppc_samples, LOG_R_PRIOR_MEAN, LOG_R_PRIOR_SD)

ppc_log_q <- rnorm(n_ppc_samples, LOG_Q_PRIOR_MEAN, LOG_Q_PRIOR_SD)

ppc_r <- exp(ppc_log_r)

ppc_q <- exp(ppc_log_q)

ppc_sched <- make_subject_schedule(stimulus_info, n_blocks, seed = 999)

prior_pred_curves <- map_dfr(seq_len(n_ppc_samples), function(s) {

res <- prototype_kalman(

r_value = ppc_r[s],

q_value = ppc_q[s],

obs = as.matrix(ppc_sched[, c("height", "position")]),

cat_one = ppc_sched$category_feedback,

initial_mu = list(rep(2.5, 2), rep(2.5, 2))

)

tibble(

sample_id = s,

trial = seq_len(nrow(ppc_sched)),

correct = as.integer(res$sim_response == ppc_sched$category_feedback)

) |>

mutate(cum_acc = cumsum(correct) / row_number())

})

ppc_summary <- prior_pred_curves |>

group_by(trial) |>

summarise(

q05 = quantile(cum_acc, 0.05), q25 = quantile(cum_acc, 0.25),

q50 = median(cum_acc),

q75 = quantile(cum_acc, 0.75), q95 = quantile(cum_acc, 0.95),

.groups = "drop"

)

ggplot(ppc_summary, aes(x = trial)) +

geom_ribbon(aes(ymin = q05, ymax = q95), fill = "#009E73", alpha = 0.20) +

geom_ribbon(aes(ymin = q25, ymax = q75), fill = "#009E73", alpha = 0.40) +

geom_line(aes(y = q50), color = "#006D4E", linewidth = 1) +

geom_hline(yintercept = 0.5, linetype = "dashed", color = "grey40") +

scale_y_continuous(limits = c(0, 1)) +

labs(

title = "Prior Predictive Check: Kalman Filter Prototype Model",

subtitle = "Ribbons: 50% and 90% prior predictive intervals\nPriors: log(r) ~ Normal(0, 1), log(q) ~ Normal(-2, 1)",

x = "Trial", y = "Cumulative Accuracy"

)

```

The prior predictive envelope spans chance performance (uninformative $r$ draws) through near-ceiling accuracy (informative $r$ draws), without implying impossible behavior such as sustained below-chance performance. Not a hard bar to pass, but a good start.

---

## The Prototype Model in Stan

Simulations help us understand the model, but we want to fit it to experimental data to estimate its parameters. The primary free parameter is the observation noise variance `r_value`. We implement the Kalman filter prototype model in Stan to estimate `r_value` (on the log scale) from data.

### Some implementation notes

### Kalman filter in the transformed parameters

The prototype model has two parameters — `log_r` and `log_q`. Given both and the observed data (stimuli and feedback), the *entire* Kalman filter state is deterministic: prototype means, prototype covariances, and the per-trial choice probability $p_i$ all follow algebraically from `log_r` and the trial sequence. Whenever a quantity depends on a parameter but is otherwise deterministic, it belongs in `transformed parameters` (evaluated once per leapfrog step) rather than `transformed data` (which is for parameter-free preprocessing) or `model{}` (which would force a recomputation in `generated quantities`). We follow that rule here, and `log_lik[i]` in `generated quantities` becomes a one-line lookup of `p[i]`.

> **A note on architectural symmetry with the chapter on exemplars.** The previous chapter GCM's choice probabilities were deterministic given the parameters and the data, and therefore belonged in `transformed parameters`. The same is true for the kalman filter model: `prob_cat1[i]` is a transformed parameter, the model block reduces to a single vectorised `bernoulli_lpmf(y | prob_cat1)`, and `log_lik[i]` is a one-line lookup.

#### Path dependence requires LFO-CV

The same path-dependence concern of the previous chapter applies: trial $t$'s choice probability depends on all previous stimuli through the Kalman state, so standard PSIS-LOO is invalid. Unlike previous drafts, we no longer treat that as a caveat to repeat — the LFO-CV machinery from the previous chapter is generalised to the Kalman case in §"Leave-Future-Out Cross-Validation" below.

> **`reduce_sum` and parallelization**: As with the GCM, the Kalman prototype model cannot use `reduce_sum` because successive Kalman steps are causally chained — trial $t$'s contribution to the likelihood depends on the filter state from trials $1, \ldots, t-1$. The entire filter must be computed sequentially. With two parameters (`log_r`, `log_q`) the posterior geometry is 2D, and Pathfinder initialization is still reliable because both parameters are unconstrained scalars with prior-dominated tails.

### Stan Model

```{r ch13_stan_prototype_model}

prototype_single_stan <- "

// Kalman Filter Prototype Model — Single Subject, with Process Noise

// Key design:

// log_r and log_q are the two parameters (both unconstrained).

// r_value = exp(log_r): observation noise variance

// q_value = exp(log_q): process noise / prototype drift rate

// Kalman loop structure: Predict (add Q) → Decide → Update.

// The full filter is in transformed parameters so log_lik in generated

// quantities reads p[i] directly without re-running the filter.

data {

int<lower=1> ntrials;

int<lower=1> nfeatures;

array[ntrials] int<lower=0, upper=1> cat_one;

array[ntrials] int<lower=0, upper=1> y;

array[ntrials, nfeatures] real obs;

vector[nfeatures] initial_mu_cat0;

vector[nfeatures] initial_mu_cat1;

real<lower=0> initial_sigma_diag;

real prior_logr_mean;

real<lower=0> prior_logr_sd;

real prior_logq_mean;

real<lower=0> prior_logq_sd;

}

parameters {

real log_r;

real log_q;

}

transformed parameters {

real<lower=0> r_value = exp(log_r);

real<lower=0> q_value = exp(log_q);

array[ntrials] real<lower=1e-9, upper=1-1e-9> p;

{

vector[nfeatures] mu_cat0 = initial_mu_cat0;

vector[nfeatures] mu_cat1 = initial_mu_cat1;

matrix[nfeatures, nfeatures] sigma_cat0 =

diag_matrix(rep_vector(initial_sigma_diag, nfeatures));

matrix[nfeatures, nfeatures] sigma_cat1 =

diag_matrix(rep_vector(initial_sigma_diag, nfeatures));

matrix[nfeatures, nfeatures] r_matrix =

diag_matrix(rep_vector(r_value, nfeatures));

matrix[nfeatures, nfeatures] q_matrix =

diag_matrix(rep_vector(q_value, nfeatures));

matrix[nfeatures, nfeatures] I_mat =

diag_matrix(rep_vector(1.0, nfeatures));

for (i in 1:ntrials) {

vector[nfeatures] x = to_vector(obs[i]);

// ── Prediction step: add process noise to both categories ──────────

sigma_cat0 = sigma_cat0 + q_matrix;

sigma_cat1 = sigma_cat1 + q_matrix;

// ── Decision ────────────────────────────────────────────────────────

matrix[nfeatures, nfeatures] cov0 = sigma_cat0 + r_matrix;

matrix[nfeatures, nfeatures] cov1 = sigma_cat1 + r_matrix;

real log_p0 = multi_normal_lpdf(x | mu_cat0, cov0);

real log_p1 = multi_normal_lpdf(x | mu_cat1, cov1);

real prob1 = exp(log_p1 - log_sum_exp(log_p0, log_p1));

p[i] = fmax(1e-9, fmin(1 - 1e-9, prob1));

// ── Update (measurement update for the correct category only) ────────

if (cat_one[i] == 1) {

vector[nfeatures] innov = x - mu_cat1;

matrix[nfeatures, nfeatures] S = sigma_cat1 + r_matrix;

matrix[nfeatures, nfeatures] K = mdivide_right_spd(sigma_cat1, S);

matrix[nfeatures, nfeatures] IK = I_mat - K;

mu_cat1 = mu_cat1 + K * innov;

sigma_cat1 = IK * sigma_cat1 * IK' + K * r_matrix * K';

sigma_cat1 = 0.5 * (sigma_cat1 + sigma_cat1');

} else {

vector[nfeatures] innov = x - mu_cat0;

matrix[nfeatures, nfeatures] S = sigma_cat0 + r_matrix;

matrix[nfeatures, nfeatures] K = mdivide_right_spd(sigma_cat0, S);

matrix[nfeatures, nfeatures] IK = I_mat - K;

mu_cat0 = mu_cat0 + K * innov;

sigma_cat0 = IK * sigma_cat0 * IK' + K * r_matrix * K';

sigma_cat0 = 0.5 * (sigma_cat0 + sigma_cat0');

}

}

}

}

model {

target += normal_lpdf(log_r | prior_logr_mean, prior_logr_sd);

target += normal_lpdf(log_q | prior_logq_mean, prior_logq_sd);

target += bernoulli_lpmf(y | p);

}

generated quantities {

vector[ntrials] log_lik;

real lprior;

for (i in 1:ntrials)

log_lik[i] = bernoulli_lpmf(y[i] | p[i]);

lprior = normal_lpdf(log_r | prior_logr_mean, prior_logr_sd) +

normal_lpdf(log_q | prior_logq_mean, prior_logq_sd);

}

"

stan_file_proto_single <- here("stan", "ch13_prototype_single.stan")

write_stan_file(prototype_single_stan, dir = here("stan"),

basename = "ch13_prototype_single.stan")

mod_prototype_single <- cmdstan_model(stan_file_proto_single)

```

**Key aspects of the Stan implementation:**

* **Parameters block**: Two scalars `log_r` and `log_q`. Positivity constraints are handled by `exp()` in `transformed parameters`.

* **Prediction step**: `sigma_cat0` and `sigma_cat1` each have `q_matrix` added before the decision. With `q_value = 0` this recovers the static-target filter.

* **Transformed parameters**: Runs the full Kalman filter trial-by-trial, updating the two prototype distributions and recording `p[i]` — the probability of choosing Category 1 on trial $i$ — before each trial's update. The update happens on *all* trials (including the last), consistent with the R simulation.

* **Model block**: Priors on `log_r` and `log_q` plus a vectorised Bernoulli likelihood using the pre-computed `p` vector. One line.

* **Generated quantities**: `log_lik[i]` reads directly from `p[i]` — no re-computation of the Kalman filter required.

---

## Fitting the Model to Simulated Data

We use Pathfinder initialization before HMC sampling. Theoretically, it should make the HMC faster to run. I haven't tested that yet for this model, shamefully, but it doesn't hurt to do it.

```{r ch13_fit_single_agent}

# Select one simulated agent with r_value = 2.0, q_value = 0.1

agent_to_fit <- prototype_responses |>

filter(r_value_true == 2.0, q_value_true == 0.1, agent_id == 1)

stopifnot(nrow(agent_to_fit) == total_trials)

proto_data_single <- list(

ntrials = nrow(agent_to_fit),

nfeatures = 2L,

cat_one = agent_to_fit$category_feedback,

y = agent_to_fit$sim_response,

obs = as.matrix(agent_to_fit[, c("height", "position")]),

initial_mu_cat0 = c(2.5, 2.5),

initial_mu_cat1 = c(2.5, 2.5),

initial_sigma_diag = 10.0,

prior_logr_mean = LOG_R_PRIOR_MEAN,

prior_logr_sd = LOG_R_PRIOR_SD,

prior_logq_mean = LOG_Q_PRIOR_MEAN,

prior_logq_sd = LOG_Q_PRIOR_SD

)

fit_filepath_single <- here("simmodels", "ch13_proto_single_fit.rds")

if (regenerate_simulations || !file.exists(fit_filepath_single)) {

pf_single <- mod_prototype_single$pathfinder(

data = proto_data_single, seed = 123, num_paths = 4, refresh = 0

)

fit_proto_single <- mod_prototype_single$sample(

data = proto_data_single,

init = pf_single,

seed = 123,

chains = 4,

parallel_chains = min(4, availableCores()),

iter_warmup = 1000,

iter_sampling = 1500,

refresh = 300,

adapt_delta = 0.9

)

fit_proto_single$save_object(fit_filepath_single)

cat("Single-agent prototype fit computed and saved.\n")

} else {

fit_proto_single <- readRDS(fit_filepath_single)

cat("Loaded existing single-agent prototype fit.\n")

}

if (!is.null(fit_proto_single)) {

param_summary <- fit_proto_single$summary(variables = c("log_r", "r_value", "log_q", "q_value"))

print(param_summary)

true_r <- agent_to_fit$r_value_true[1]

true_log_r <- log(true_r)

true_q <- agent_to_fit$q_value_true[1]

true_log_q <- log(true_q)

cat("\nTrue log_r =", round(true_log_r, 3), " True r =", true_r, "\n")

cat("True log_q =", round(true_log_q, 3), " True q =", true_q, "\n")

}

```

### MCMC Diagnostic Battery

Before reading anything off the posterior, we need to check our diagnostic table.

```{r ch13_diagnostic_battery_single}

if (!is.null(fit_proto_single)) {

diag_tbl <- diagnostic_summary_table(

fit_proto_single,

params = c("log_r", "r_value", "log_q", "q_value")

)

print(diag_tbl)

}

```

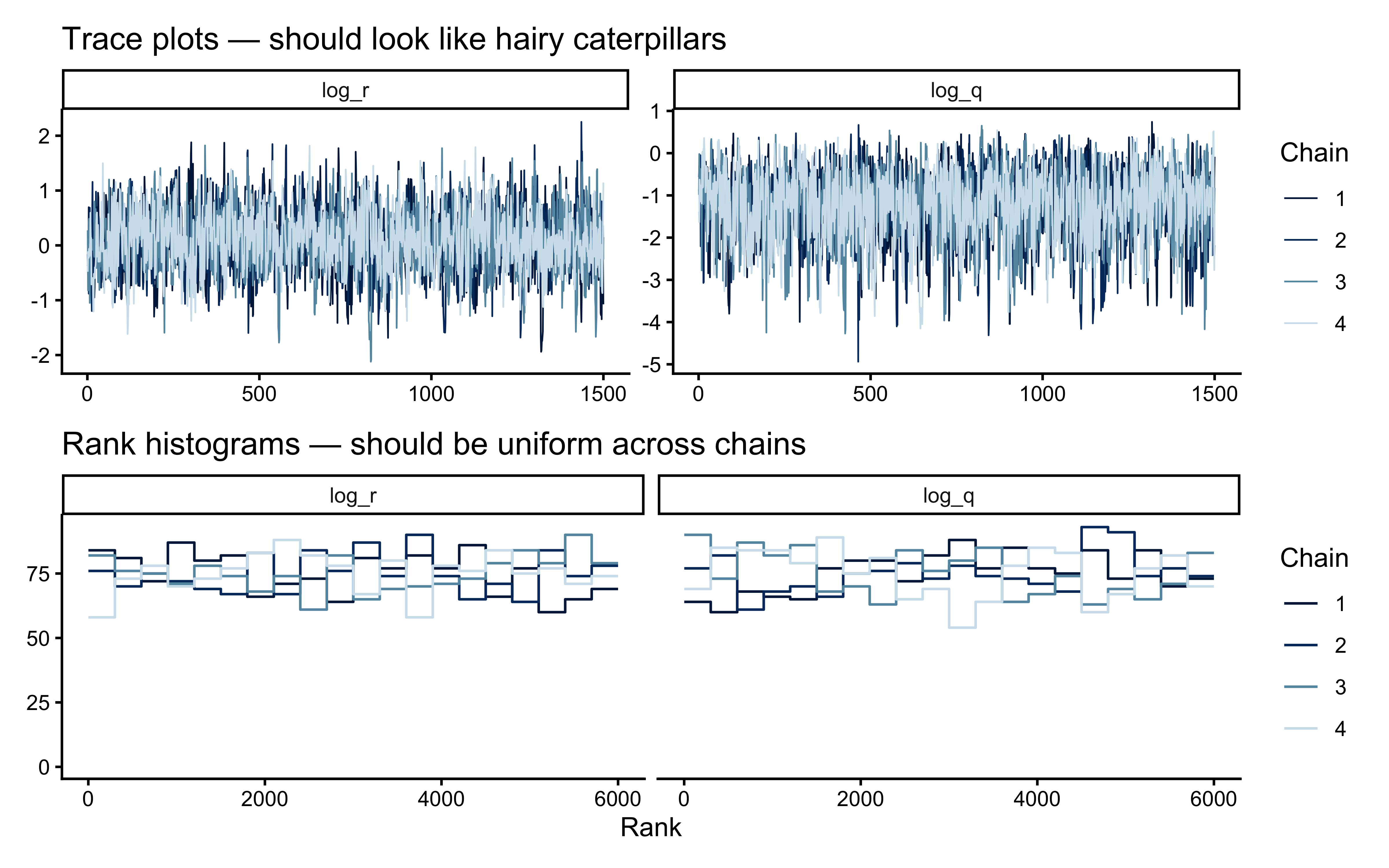

The trace plot grid plus rank histograms remain the primary MCMC diagnostics; we save the joint geometry of `log_r` and `log_q` for after the prior–posterior overlay, where it directly informs the interpretation.

```{r ch13_trace_and_rank}

if (!is.null(fit_proto_single)) {

p_trace <- bayesplot::mcmc_trace(

fit_proto_single$draws(c("log_r", "log_q")),

facet_args = list(ncol = 2)

) +

ggtitle("Trace plots — should look like hairy caterpillars")

p_rank <- bayesplot::mcmc_rank_overlay(

fit_proto_single$draws(c("log_r", "log_q")),

facet_args = list(ncol = 2)

) +

ggtitle("Rank histograms — should be uniform across chains")

print(p_trace / p_rank)

}

```

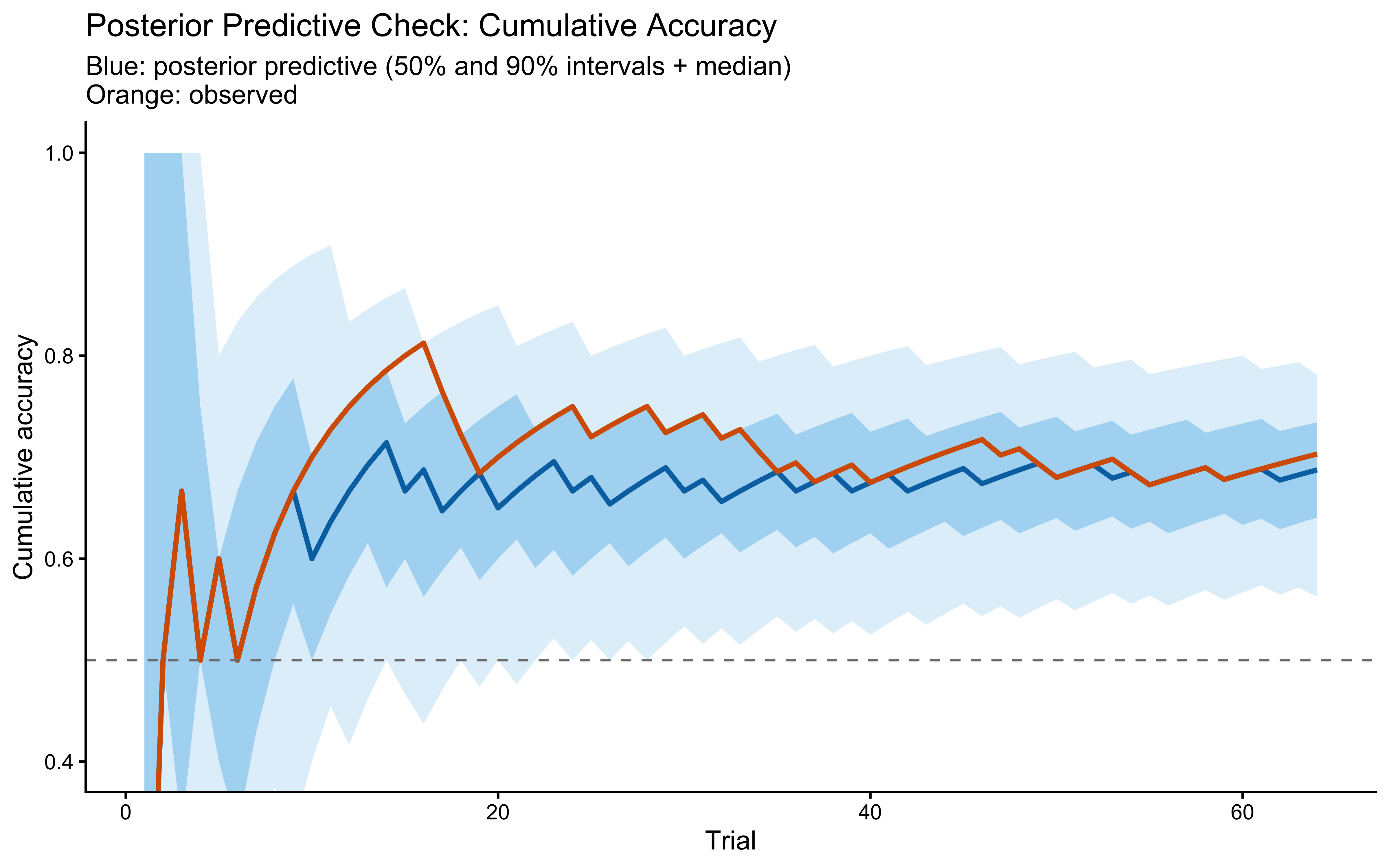

### Posterior Predictive Check

The posterior predictive check asks: does the fitted model, when run forward with parameters drawn from the posterior, produce data that look like the observed data? Since `p` is already in `transformed parameters`, we can read posterior draws of the choice probability directly and use them to generate posterior predictive responses.

```{r ch13_ppc}

if (!is.null(fit_proto_single)) {

# Posterior draws of p[i] (one row per draw, one column per trial)

p_draws <- fit_proto_single$draws("p", format = "matrix")

n_draws <- nrow(p_draws)

n_obs <- ncol(p_draws)

# Generate posterior predictive responses

yrep <- matrix(rbinom(n_draws * n_obs, 1, as.vector(p_draws)),

nrow = n_draws, ncol = n_obs)

# Cumulative-accuracy ribbons vs. observed

obs_cum_acc <- cumsum(agent_to_fit$sim_response == agent_to_fit$category_feedback) /

seq_len(n_obs)

# Use "==" directly and add 0 to coerce to numeric without losing matrix dims

yrep_correct <- sweep(yrep, 2, as.numeric(agent_to_fit$category_feedback), "==") + 0

yrep_cum_acc <- t(apply(yrep_correct, 1, function(r) cumsum(r) / seq_along(r)))

cum_acc_summary <- tibble(

trial = seq_len(n_obs),

q05 = apply(yrep_cum_acc, 2, quantile, 0.05),

q25 = apply(yrep_cum_acc, 2, quantile, 0.25),

q50 = apply(yrep_cum_acc, 2, quantile, 0.50),

q75 = apply(yrep_cum_acc, 2, quantile, 0.75),

q95 = apply(yrep_cum_acc, 2, quantile, 0.95),

obs = obs_cum_acc

)

ggplot(cum_acc_summary, aes(x = trial)) +

geom_ribbon(aes(ymin = q05, ymax = q95), fill = "#56B4E9", alpha = 0.20) +

geom_ribbon(aes(ymin = q25, ymax = q75), fill = "#56B4E9", alpha = 0.40) +

geom_line(aes(y = q50), color = "#0072B2", linewidth = 1) +

geom_line(aes(y = obs), color = "#D55E00", linewidth = 1) +

geom_hline(yintercept = 0.5, linetype = "dashed", color = "grey50") +

labs(

title = "Posterior Predictive Check: Cumulative Accuracy",

subtitle = "Blue: posterior predictive (50% and 90% intervals + median)\nOrange: observed",

x = "Trial", y = "Cumulative accuracy"

) +

coord_cartesian(ylim = c(0.4, 1))

}

```

The observed cumulative-accuracy curve should sit comfortably inside the posterior predictive band. If it drifts to the edge, the model is missing structure that the data carry — the most likely candidate being a separate decisional-noise term that the single $r$ parameter cannot capture.

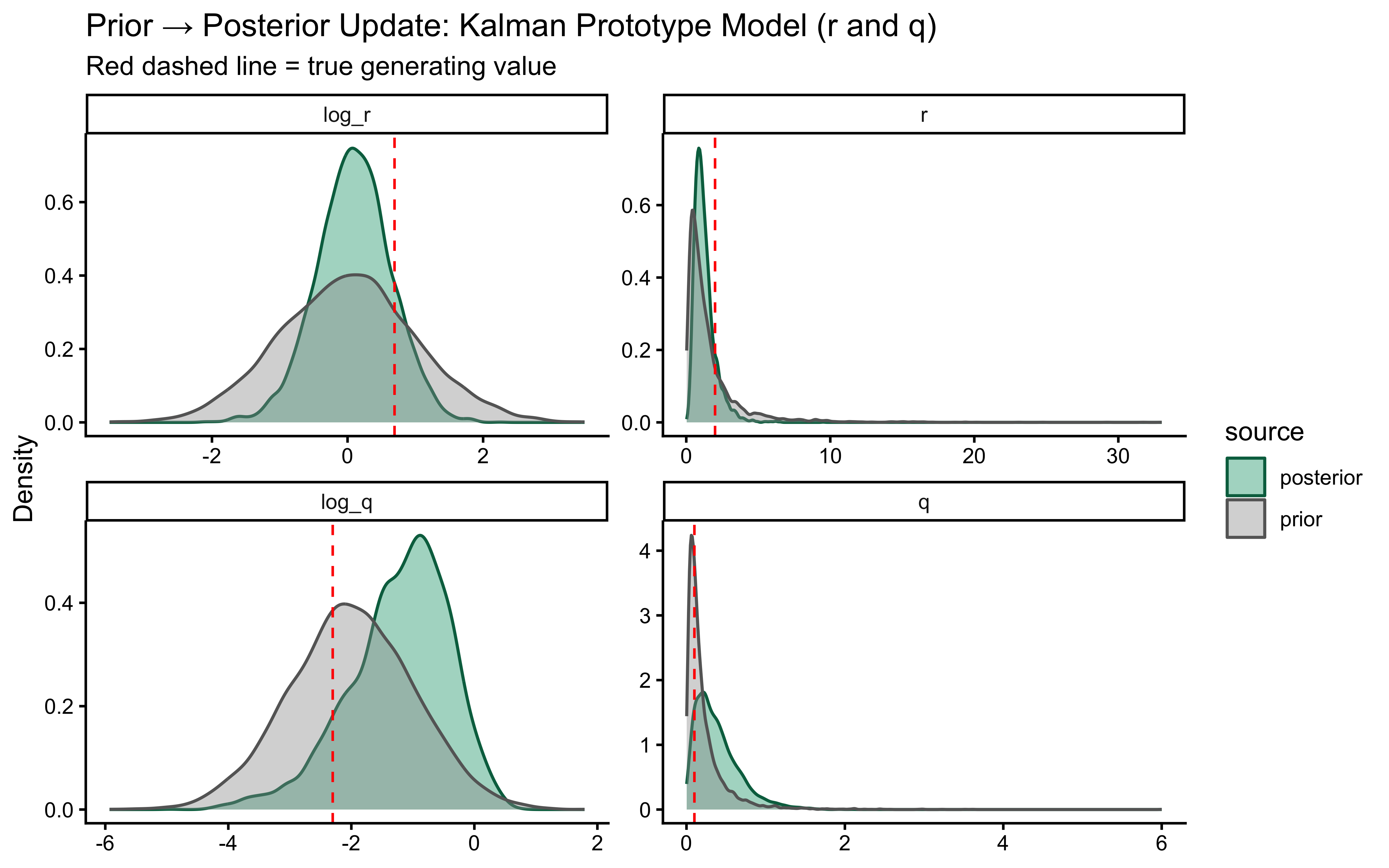

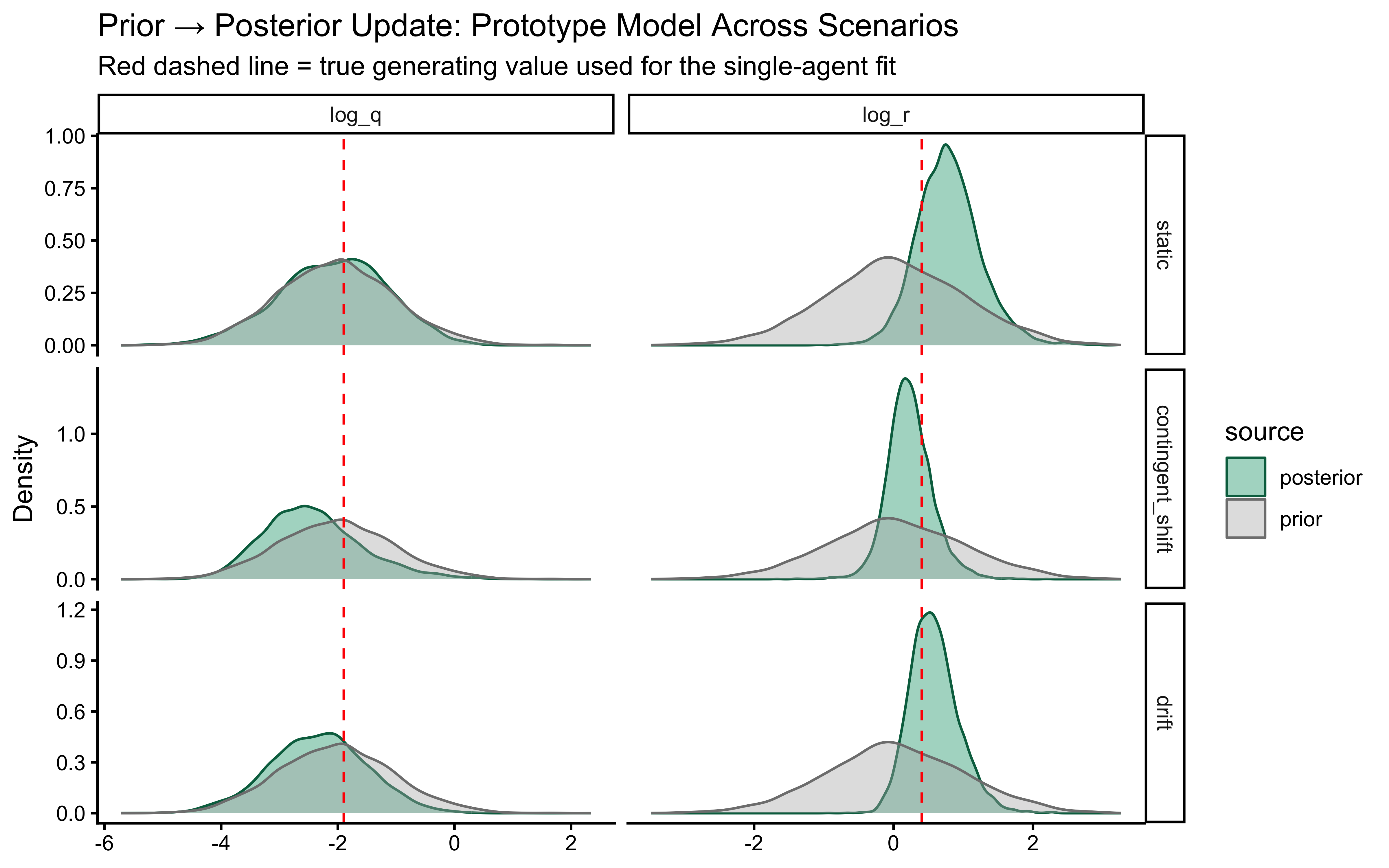

### Prior–Posterior Update Plot

Did the data teach the model anything? We overlay prior and posterior densities on both the *computational scale* (`log_r`, `log_q`, where the priors were defined) and the *interpretable scale* (`r`, `q`, where the cognitive interpretation lives). Heavy overlap means the data were uninformative; a narrow posterior far from the truth means the model is confidently wrong.

```{r ch13_prior_posterior_update}

if (!is.null(fit_proto_single)) {

draws_df <- as_draws_df(

fit_proto_single$draws(c("log_r", "r_value", "log_q", "q_value"))

)

set.seed(7)

n_prior <- nrow(draws_df)

prior_logr <- rnorm(n_prior, LOG_R_PRIOR_MEAN, LOG_R_PRIOR_SD)

prior_r <- exp(prior_logr)

prior_logq <- rnorm(n_prior, LOG_Q_PRIOR_MEAN, LOG_Q_PRIOR_SD)

prior_q <- exp(prior_logq)

prior_post_df <- bind_rows(

tibble(scale = "log_r", value = prior_logr, source = "prior"),

tibble(scale = "log_r", value = draws_df$log_r, source = "posterior"),

tibble(scale = "r", value = prior_r, source = "prior"),

tibble(scale = "r", value = draws_df$r_value, source = "posterior"),

tibble(scale = "log_q", value = prior_logq, source = "prior"),

tibble(scale = "log_q", value = draws_df$log_q, source = "posterior"),

tibble(scale = "q", value = prior_q, source = "prior"),

tibble(scale = "q", value = draws_df$q_value, source = "posterior")

) |>

mutate(scale = factor(scale, levels = c("log_r", "r", "log_q", "q")))

true_lines <- tibble(

scale = factor(c("log_r", "r", "log_q", "q"),

levels = c("log_r", "r", "log_q", "q")),

value = c(true_log_r, true_r, true_log_q, true_q)

)

ggplot(prior_post_df, aes(x = value, fill = source, color = source)) +

geom_density(alpha = 0.4, linewidth = 0.6) +

geom_vline(data = true_lines,

aes(xintercept = value),

color = "red", linetype = "dashed") +

facet_wrap(~ scale, scales = "free", ncol = 2) +

scale_fill_manual(values = c(prior = "#999999", posterior = "#009E73")) +

scale_color_manual(values = c(prior = "#666666", posterior = "#006D4E")) +

labs(

title = "Prior → Posterior Update: Kalman Prototype Model (r and q)",

subtitle = "Red dashed line = true generating value",

x = NULL, y = "Density"

)

}

```

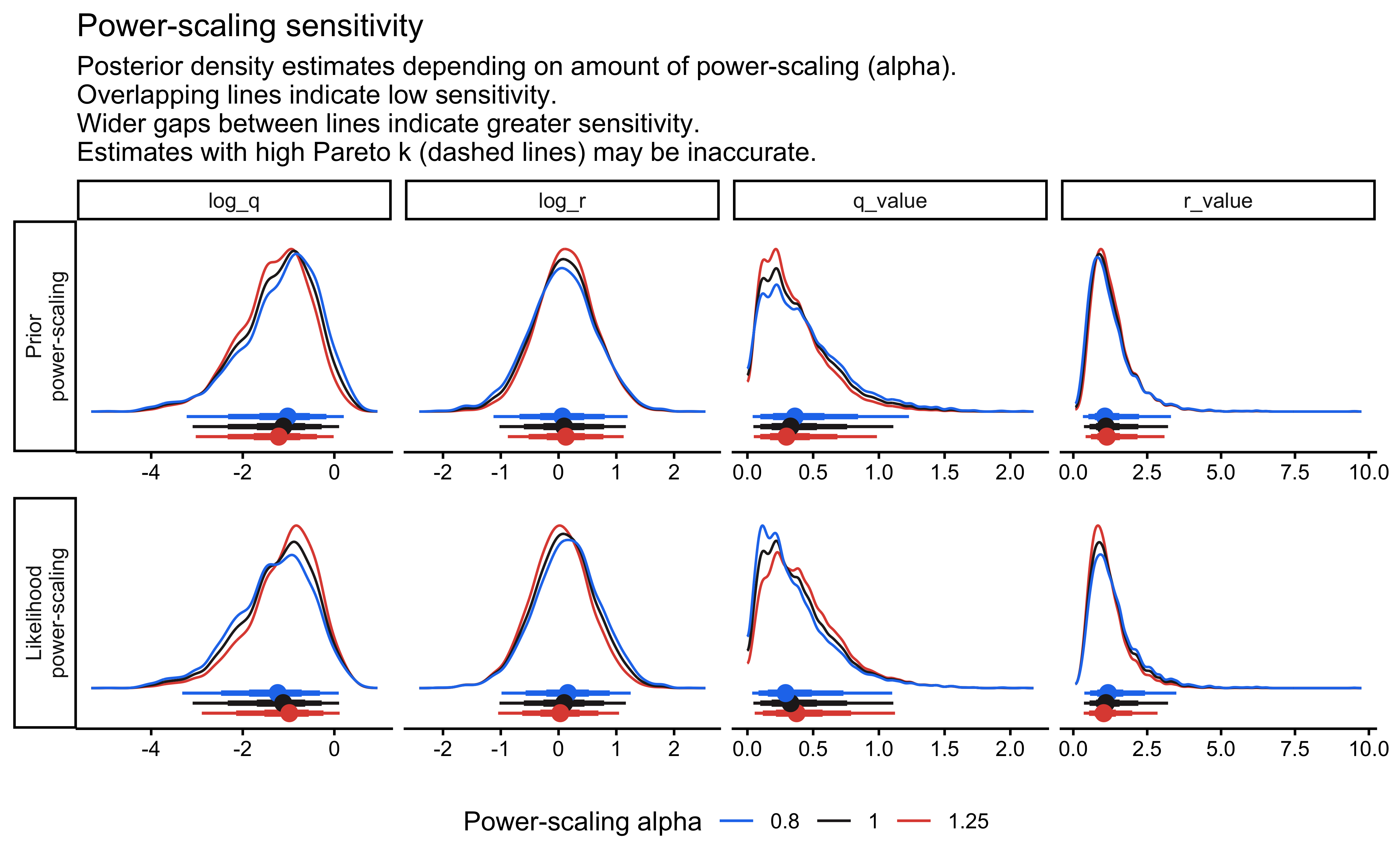

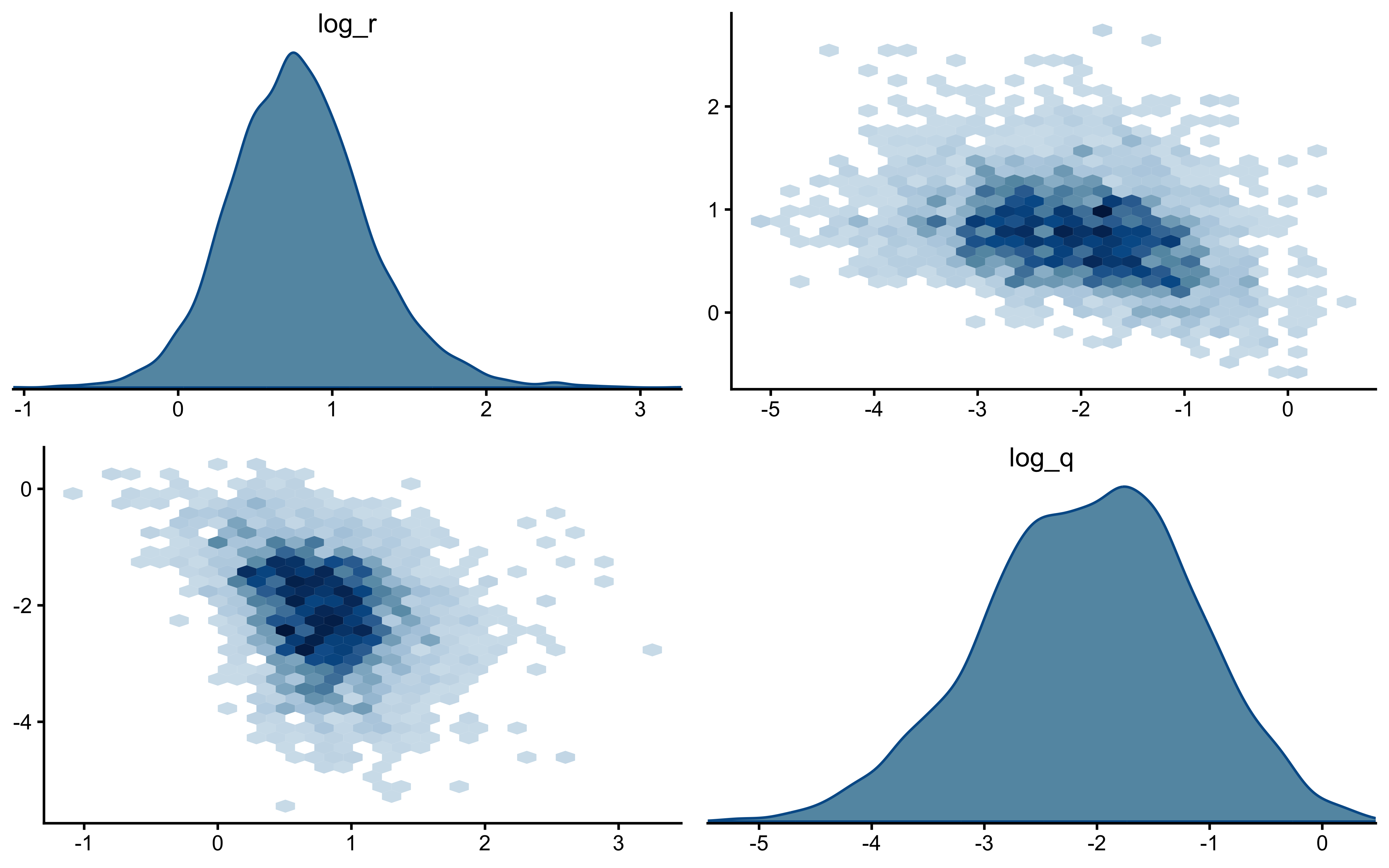

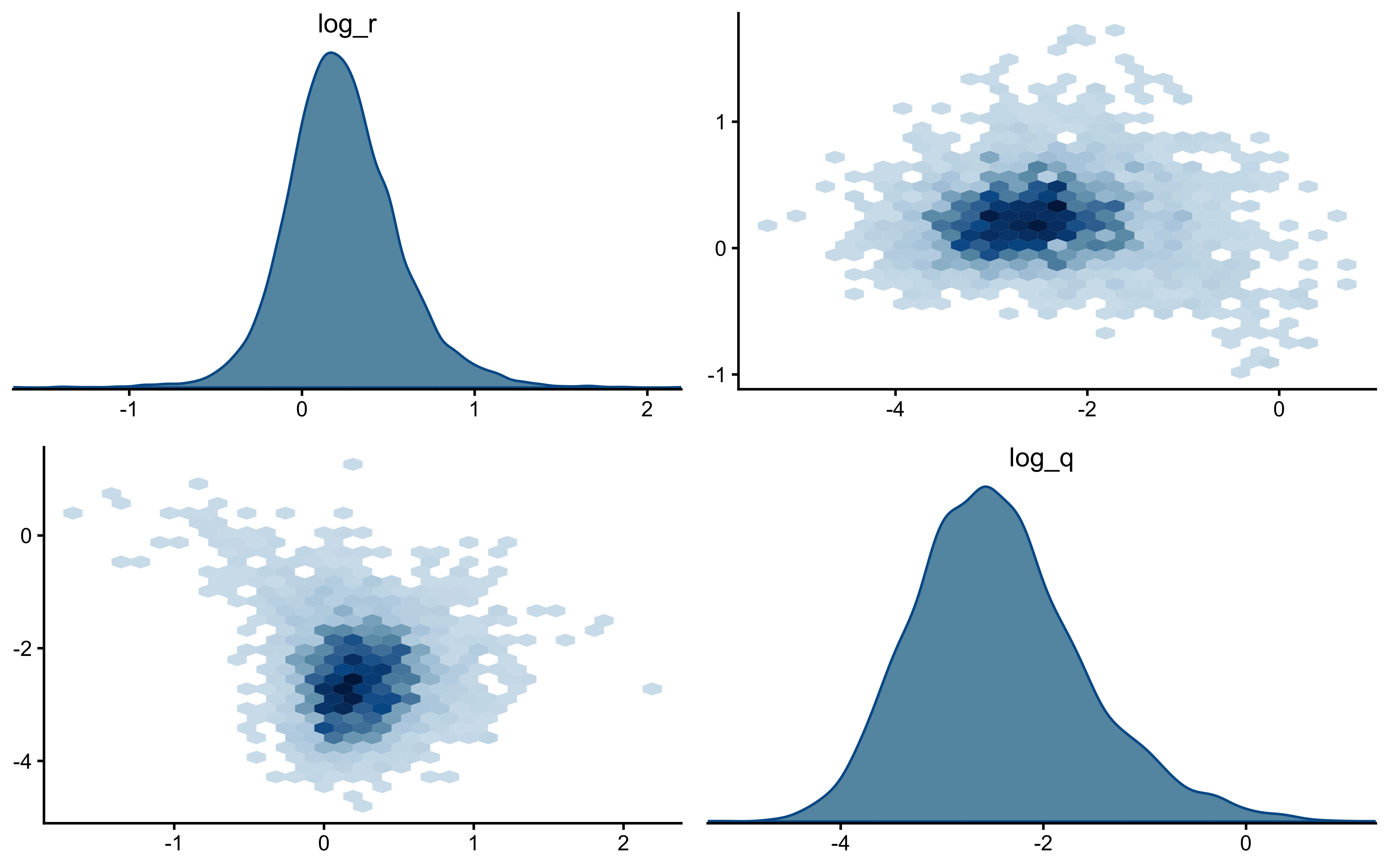

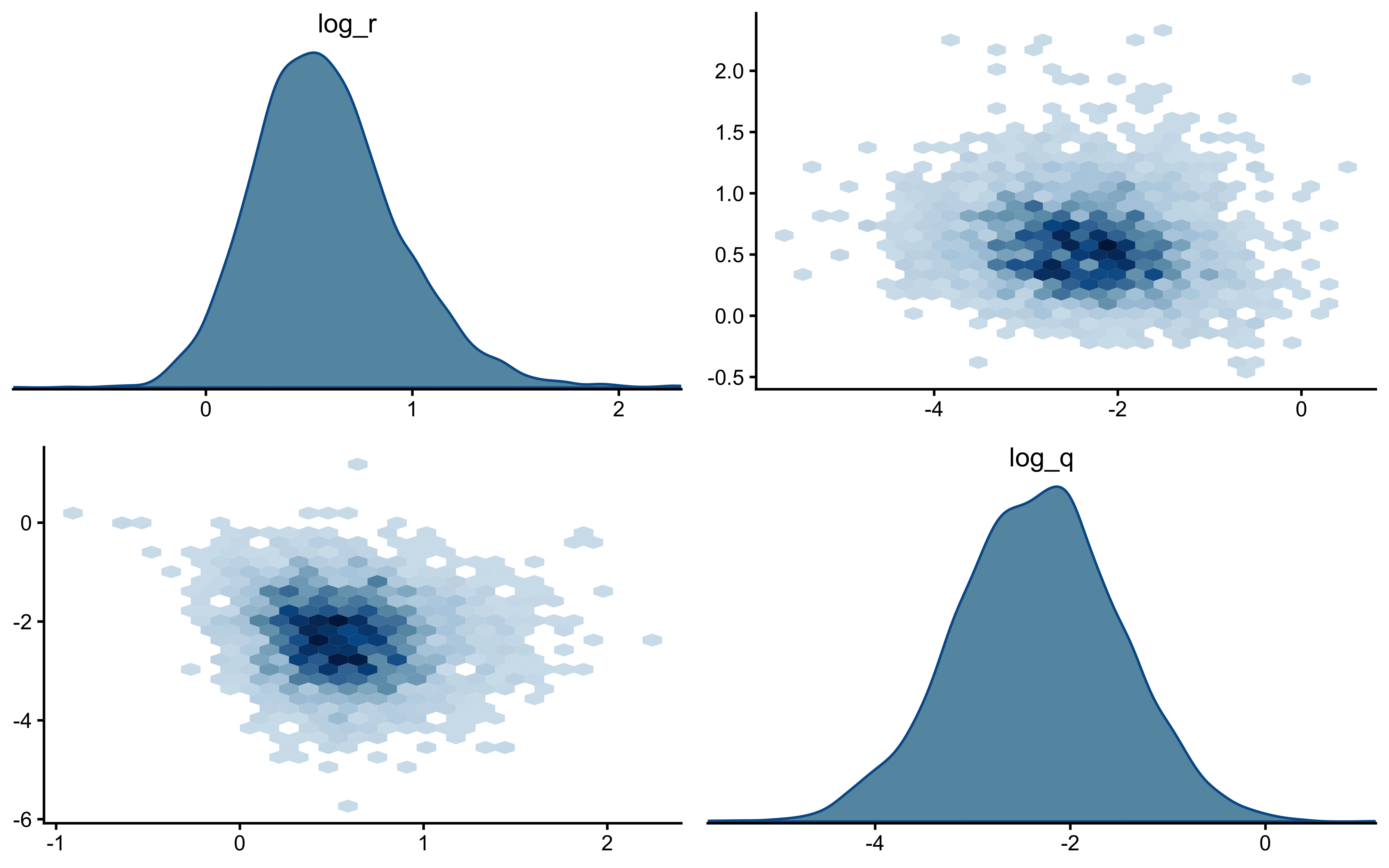

The two parameters update very differently. On `log_r`, the posterior is clearly tighter than the prior and the true value sits well inside the posterior mass — modest but real information gain, with no sign of confident error. On the `r` scale the prior is so heavy-tailed that the contraction is visually compressed into the bulk near zero; the posterior is mostly a sharper version of the prior's left mode, which is the right behaviour given a single subject's worth of trials.

`log_q` is the more interesting panel. The posterior has shifted noticeably *to the right* of the prior, with its mode near $-1$ while the true value sits around $-2.3$ in the posterior's left tail. The data were informative — the posterior is not just the prior — but they pulled $q$ toward larger values than truly generated the sequence. This is the kind of mild, prior-dominated bias one expects when a single trajectory under-determines the process-noise scale: many $(r, q)$ combinations explain the choices about equally well, and the posterior settles in the high-likelihood ridge rather than at the truth. The pairs plot below is the direct way to assess that likely ridge; SBC across many simulated agents is what will tell us whether the offset is systematic.

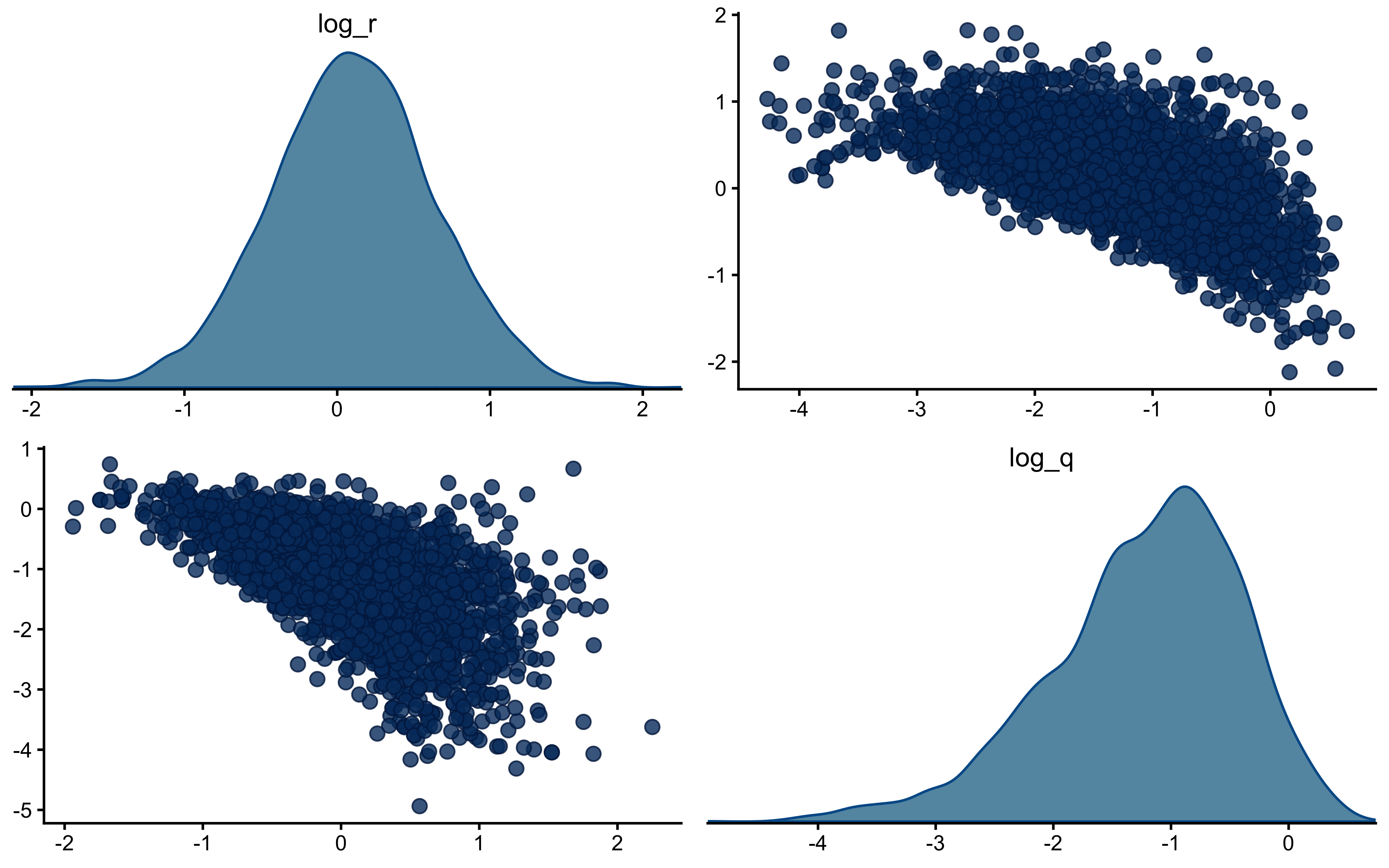

```{r ch13_pairs}

if (!is.null(fit_proto_single)) {

bayesplot::mcmc_pairs(

fit_proto_single$draws(c("log_r", "log_q")),

diag_fun = "dens",

off_diag_fun = "scatter",

np = nuts_params(fit_proto_single)

)

}

```

The off-diagonal scatter shows the ridge plainly: `log_r` and `log_q` are clearly negatively correlated, with a fan that opens toward small `log_q` (low process noise paired with high observation noise). Along that ridge, decreasing $q$ and increasing $r$ leave the predicted choice probabilities largely unchanged — a smaller process step combined with noisier readout produces the same trial-by-trial behaviour as a larger process step with cleaner readout. That is exactly why the marginal posterior on `log_q` can sit above the true value while still fitting the choices well: the truth lies somewhere on this ridge, and a single subject's data are not enough to pin down where. What should we do? We could try hierarchical pooling across subjects, or - likely to work - change the experimental setup more dynamic categories, and more trials could help.

### Randomised LOO-PIT Calibration

For continuous outcomes the preferred calibration check is LOO-PIT. For binary outcomes the equivalent is the *randomised* LOO-PIT (Säilynoja et al. 2022), which avoids the degenerate two-bin distribution that plain LOO-PIT produces on Bernoulli data. Chapter 13 implements this for the GCM; we apply the same construction here.

```{r ch13_loo_pit_randomized}

if (!is.null(fit_proto_single)) {

# PSIS-LOO weights from log_lik

log_lik_mat <- fit_proto_single$draws("log_lik", format = "matrix")

# FIX: Add save_psis = TRUE so the weight matrix is retained

loo_obj <- loo::loo(log_lik_mat, cores = 4, save_psis = TRUE)

log_w <- loo::weights.importance_sampling(loo_obj$psis_object,

normalize = TRUE,

log = TRUE)

# Posterior draws of choice probability

p_draws <- fit_proto_single$draws("p", format = "matrix")

# Per-observation LOO predictive probability of cat 1

loo_p_cat1 <- vapply(seq_len(ncol(p_draws)), function(i) {

sum(exp(log_w[, i]) * p_draws[, i])

}, numeric(1))

y_obs <- agent_to_fit$sim_response

# Randomised PIT for binary y:

# if y == 1: U ~ Uniform(1 - p, 1)

# if y == 0: U ~ Uniform(0, 1 - p)

set.seed(2026)

rloo_pit <- vapply(seq_along(y_obs), function(i) {

p_i <- loo_p_cat1[i]

if (y_obs[i] == 1) runif(1, 1 - p_i, 1) else runif(1, 0, 1 - p_i)

}, numeric(1))

p_pit <- ggplot(tibble(rloo_pit = rloo_pit), aes(x = rloo_pit)) +

geom_histogram(aes(y = after_stat(density)), bins = 20,

fill = "#56B4E9", color = "white") +

geom_hline(yintercept = 1, linetype = "dashed", color = "red") +

labs(

title = "Randomised LOO-PIT: Kalman Prototype Model",

subtitle = "If the model is calibrated, the histogram should look uniform on [0, 1]",

x = "Randomised PIT value", y = "Density"

) +

coord_cartesian(ylim = c(0, 2.5))

print(p_pit)

}

```

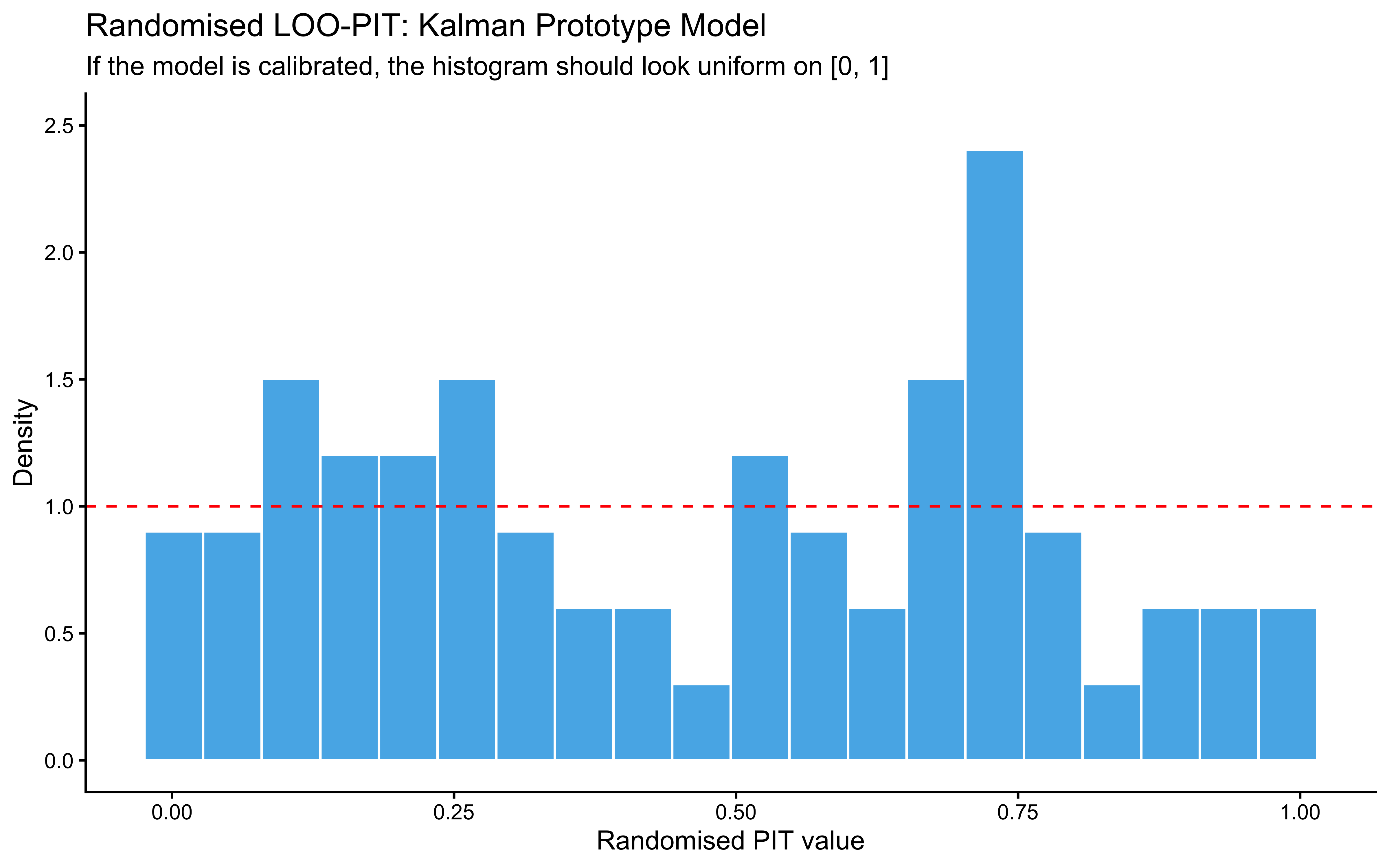

A roughly uniform histogram is the calibration target. The histogram here is bumpy but not pathological: bars hover around the uniform reference (red dashed line at density 1), with no systematic U-shape (which would flag overconfidence), no central hump (underconfidence), and no monotone skew (a directional bias the model has not captured). The two visible spikes — one near $0.1$–$0.25$ and a taller one near $0.7$ — are the kind of small-sample noise expected from 64 binary trials; with only a few dozen randomised PIT values, individual bins fluctuate considerably even under perfect calibration. The mild excess on the lower-middle and upper-middle and the slight under-density in the tails ($>0.85$) are worth keeping in mind, but with this much data they do not constitute evidence of miscalibration. The check kinda passes; the meaningful calibration question is whether this pattern persists across many simulated agents, which is what SBC will answer.

### LOO with Pareto-k as a Diagnostic, Not a Predictive Score

```{r ch13_loo_single}

if (!is.null(fit_proto_single)) {

loo_proto <- loo::loo(log_lik_mat, cores = 4)

print(loo_proto)

plot(loo_proto, label_points = TRUE,

main = "PSIS-LOO Pareto-k: Kalman Prototype Model")

}

```

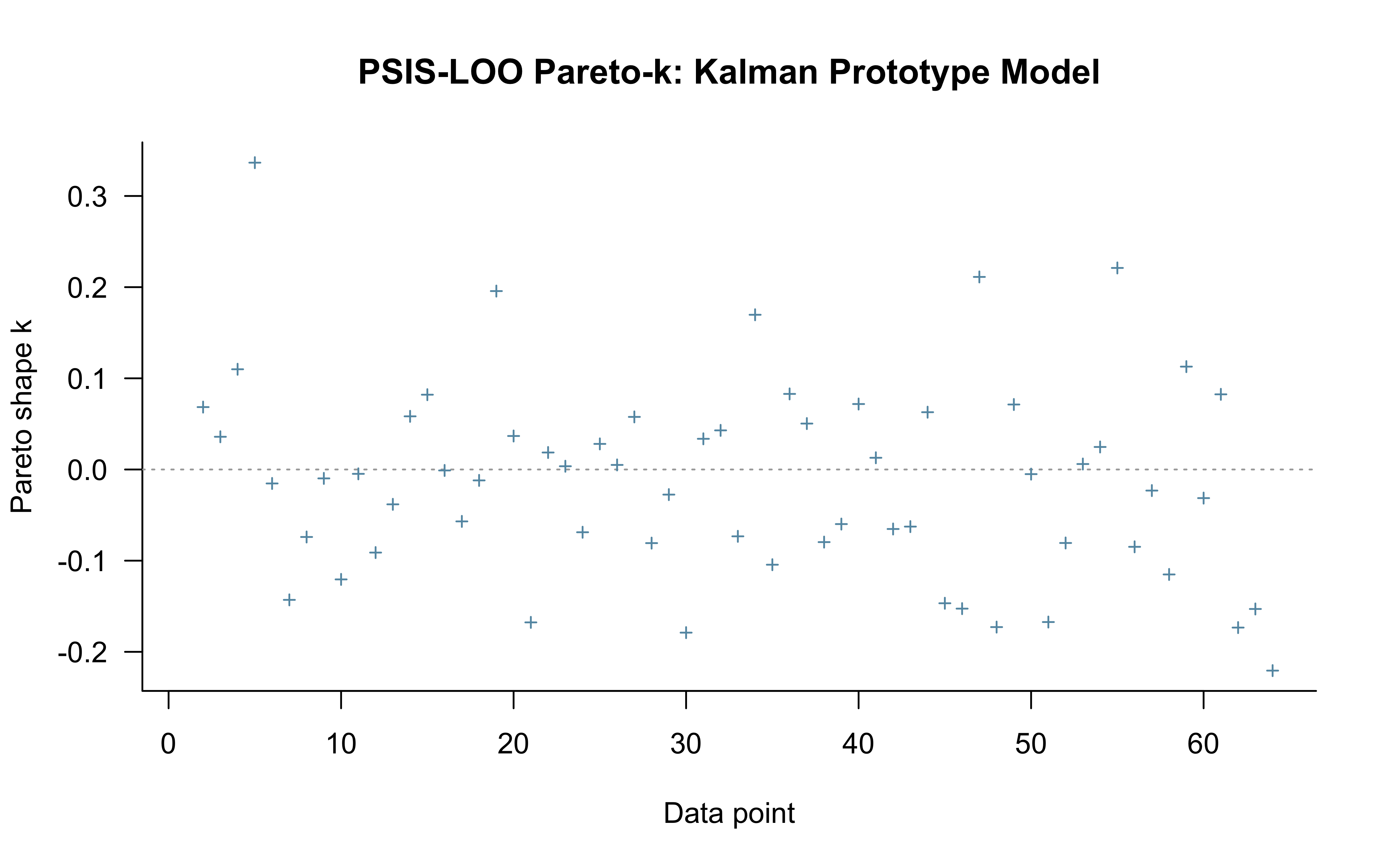

> **⚠️ Reframing PSIS-LOO for sequential models.** The Kalman filter is path-dependent: trial $t$'s choice probability depends on the entire filter state from trials $1, \ldots, t-1$. Standard PSIS-LOO is *not* a valid measure of out-of-sample predictive accuracy here — leaving out trial $t$ implicitly conditions on a counterfactual filter state that the model never actually saw. We compute it anyway, for two reasons: (1) the **Pareto-$\hat{k}$ values are still informative as a localisation diagnostic** — high $\hat{k}$ at specific trials flags observations the model cannot easily reconstruct from the rest, which can reveal where the structural assumptions are stressed; (2) compatibility with downstream tooling. The actual predictive evaluation is done by LFO-CV in the next subsection. The numeric ELPD reported by `loo()` should not be taken as a generalisation estimate.

**What we see here.** The Pareto-$\hat{k}$ scatter is essentially flat: 63 of 64 points sit between roughly $-0.2$ and $+0.35$, with $p_\text{loo} = 1.1$ (close to the parameter count, as expected for a well-behaved fit) and a minimum ESS of $\sim$5000 across observations. Read as a localisation diagnostic, this is the good case — no individual trial is a structural outlier that the rest of the data cannot reconstruct. The single "very bad" entry counted in the diagnostic table (1.6%) is the first trial, which has no preceding history and so is not a meaningful PSIS-LOO target; the plot above clips it. The headline $\text{elpd}_\text{loo} = -38.8$ is reported for completeness but, per the reframing above, should not be interpreted as out-of-sample predictive accuracy — that is what LFO-CV in the next subsection actually measures.

### Leave-Future-Out Cross-Validation: Inheriting and Generalising the GCM Implementation

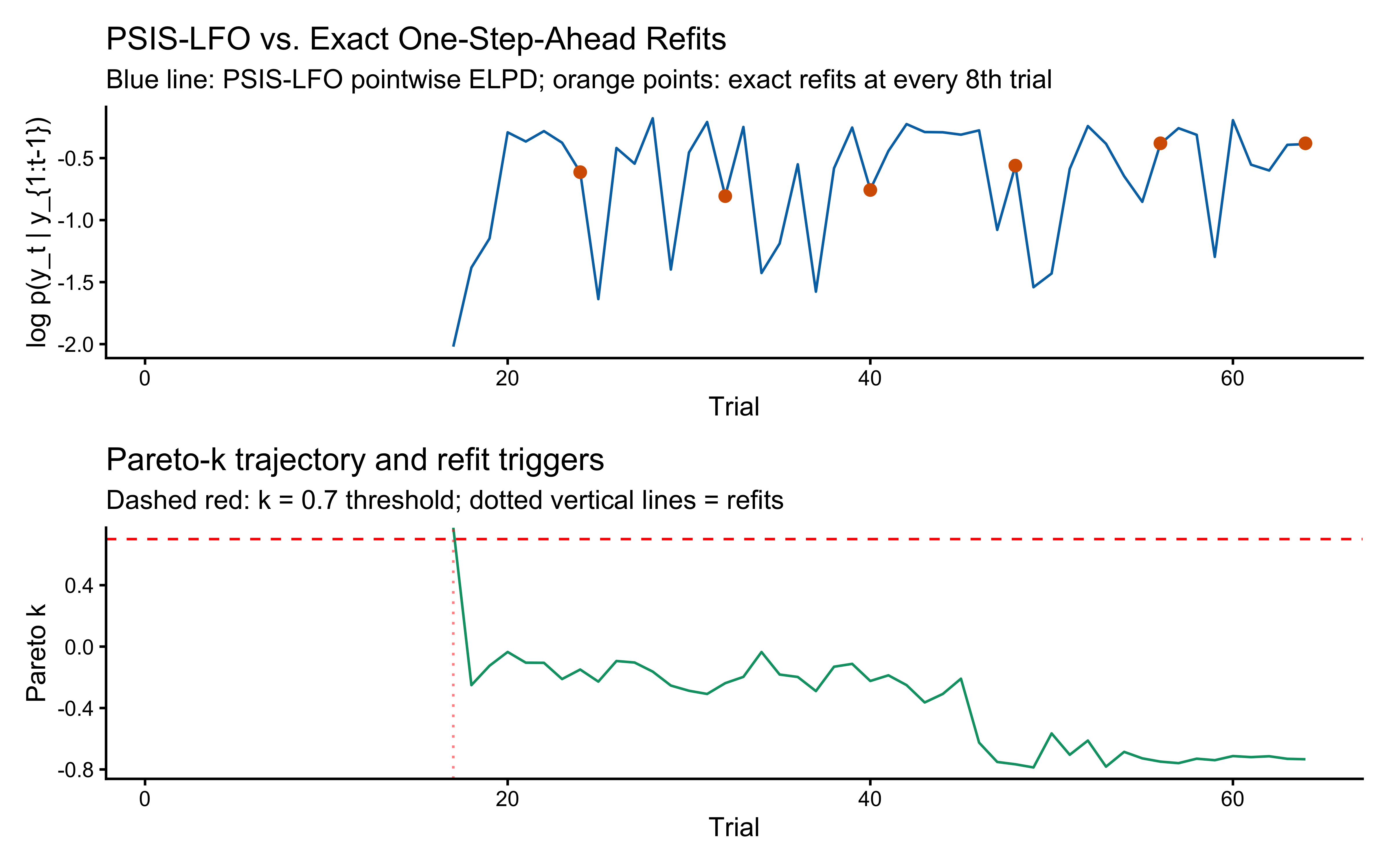

For sequential models, LOO is invalid and LFO-CV is the correct alternative (Bürkner, Gabry, & Vehtari 2020). Chapter 13 implements PSIS-LFO for the GCM as `psis_lfo_gcm()`. The Kalman prototype model has the same path-dependence structure but a much cheaper per-trial cost, so LFO-CV is even more tractable here. Below is the same algorithm specialised to the prototype model and saved as `psis_lfo_kalman()`. The two functions share an interface so later chapters with empirical analyses can call either through a common front-end.

The algorithm (Bürkner et al. 2020 Algorithm 1):

1. Choose a minimum training window $L$ (we use $L = 16$, two blocks of the Kruschke task).

2. Fit the model once on $y_{1:L}$. This is the *reference fit*.

3. For each $t = L+1, \ldots, T$:

a. Use the reference fit's posterior draws $\theta^{(s)}$ to compute the importance ratio for re-weighting $\theta$ from $p(\theta \mid y_{1:t-1})$ relative to the reference.

b. Smooth the ratios with PSIS, check Pareto $\hat{k}$.

c. If $\hat{k} \leq 0.7$, accept the ratios and use them to evaluate $\log p(y_t \mid y_{1:t-1})$.

d. If $\hat{k} > 0.7$, **refit** the model on $y_{1:t-1}$, update the reference, and continue.

4. Return pointwise ELPD, the refit history, and the $\hat{k}$ trajectory.

```{r ch13_psis_lfo_kalman}

# psis_lfo_kalman(): Leave-future-out CV for the Kalman prototype model.

# Mirrors psis_lfo_gcm() from Chapter 12. Returns pointwise ELPD across the

# evaluation window, the indices at which a refit was triggered, and the

# Pareto-k trajectory.

psis_lfo_kalman <- function(stan_data,

stan_model,

L = 16, # min training window

k_thresh = 0.7, # PSIS-k refit threshold

iter_warmup = 800,

iter_sample = 1000,

chains = 2,

seed = 1) {

T_total <- stan_data$ntrials

# Helper: build a stan_data list restricted to trials 1:t

truncate_data <- function(d, t) {

out <- d

out$ntrials <- t

out$cat_one <- d$cat_one[1:t]

out$y <- d$y[1:t]

out$obs <- d$obs[1:t, , drop = FALSE]

out

}

# Helper: fit on y_{1:t}

refit <- function(t) {

d_t <- truncate_data(stan_data, t)

pf <- tryCatch(

stan_model$pathfinder(data = d_t, num_paths = 4, refresh = 0,

seed = seed + t),

error = function(e) NULL

)

init_val <- if (!is.null(pf)) pf else 0.5

stan_model$sample(

data = d_t,

init = init_val,

seed = seed + t,

chains = chains,

parallel_chains = chains,

iter_warmup = iter_warmup,

iter_sampling = iter_sample,

refresh = 0,

adapt_delta = 0.9

)

}

# Helper: given a fitted model, evaluate log_lik at trial t

# using cmdstanr's $generate_quantities() so we don't re-MCMC.

# Helper: given a fitted model, evaluate log_lik at trial t

eval_log_lik_at <- function(fit_ref, t) {

# Build the "extended" data that includes trial t in the likelihood

d_ext <- truncate_data(stan_data, t)

# FIX: Call generate_quantities on stan_model, not fit_ref

gq <- stan_model$generate_quantities(

fitted_params = fit_ref,

data = d_ext,

seed = seed + t,

parallel_chains = chains

)

# log_lik[t] for each posterior draw

ll_mat <- gq$draws("log_lik", format = "matrix")

ll_mat[, t]

}

# ── Initial fit on the minimum window ─────────────────────────────────────

cat("Initial LFO fit on trials 1 to", L, "...\n")

fit_ref <- refit(L)

ref_window <- L

# Storage

pointwise_elpd <- rep(NA_real_, T_total)

k_hat_traj <- rep(NA_real_, T_total)

refit_at <- integer(0)

# ── Sequential evaluation ─────────────────────────────────────────────────

log_ratios_accum <- numeric(0) # log p(y_{ref+1:t-1} | theta) accumulation

for (t in (L + 1):T_total) {

# Build importance ratios for theta_ref vs theta_{1:t-1}

if (length(log_ratios_accum) == 0) {

log_w <- rep(0, posterior::ndraws(fit_ref$draws()))

} else {

log_w <- log_ratios_accum # raw log ratios; psis() handles normalization

}

# PSIS smoothing

psis_obj <- tryCatch(

loo::psis(log_ratios = matrix(log_w, ncol = 1), r_eff = 1),

error = function(e) NULL

)

k_hat <- if (!is.null(psis_obj)) loo::pareto_k_values(psis_obj) else Inf

k_hat_traj[t] <- k_hat

if (!is.finite(k_hat) || k_hat > k_thresh) {

# Refit on y_{1:t-1}

cat(" trial", t, ": k_hat =", round(k_hat, 2), "→ refitting\n")

fit_ref <- refit(t - 1)

ref_window <- t - 1

refit_at <- c(refit_at, t)

log_ratios_accum <- numeric(0)

log_w <- rep(0, posterior::ndraws(fit_ref$draws()))

psis_obj <- loo::psis(log_ratios = matrix(log_w, ncol = 1), r_eff = 1)

}

# Evaluate log p(y_t | y_{1:t-1}, theta) for each draw

ll_t <- eval_log_lik_at(fit_ref, t)

# Importance-weighted estimate of log p(y_t | y_{1:t-1})

log_w_norm <- as.vector(weights(psis_obj, normalize = TRUE, log = TRUE))

pointwise_elpd[t] <- matrixStats::logSumExp(log_w_norm + ll_t)

# Accumulate log p(y_t | theta) for the next iteration's importance ratio

if (length(log_ratios_accum) == 0) {

log_ratios_accum <- ll_t

} else {

log_ratios_accum <- log_ratios_accum + ll_t

}

}

list(

pointwise_elpd = pointwise_elpd,

refit_at = refit_at,

k_hat_traj = k_hat_traj,

L = L,

elpd_lfo = sum(pointwise_elpd, na.rm = TRUE),

n_refits = length(refit_at)

)

}

```

> **Note on the importance-ratio accumulation.** The trick that makes PSIS-LFO efficient is that the importance ratio for $p(\theta \mid y_{1:t-1})$ vs the reference $p(\theta \mid y_{1:L})$ is a *running product*: for each new trial $t$ we just multiply the existing per-draw weight by $p(y_{t-1} \mid \theta)$. We never need to refit so long as the smoothed Pareto-$\hat{k}$ stays below the threshold. When it crosses 0.7, we refit and reset the running product. The accumulation in `log_ratios_accum` above implements this — every time we evaluate `log_lik[t]`, we add it to the cumulative log-ratio for the next iteration.

We now run this on the same single-agent fit, and validate it against *exact* one-step-ahead refits at every 8th trial. If PSIS-LFO is doing its job, the two ELPD curves should agree closely.

```{r ch13_lfo_run}

lfo_cache_file <- here("simdata", "ch13_lfo_kalman_results.rds")

if (regenerate_simulations || !file.exists(lfo_cache_file)) {

cat("Running PSIS-LFO for the prototype model...\n")

lfo_psis <- psis_lfo_kalman(

stan_data = proto_data_single,

stan_model = mod_prototype_single,

L = 16,

k_thresh = 0.7

)

# ── Exact one-step-ahead refits at every 8th trial ────────────────────────

exact_t <- seq(24, total_trials, by = 8)

exact_elpd <- numeric(length(exact_t))

for (k in seq_along(exact_t)) {

t <- exact_t[k]

cat(" exact refit at t =", t, "\n")

d_t <- proto_data_single

d_t$ntrials <- t - 1

d_t$cat_one <- proto_data_single$cat_one[1:(t - 1)]

d_t$y <- proto_data_single$y[1:(t - 1)]

d_t$obs <- proto_data_single$obs[1:(t - 1), , drop = FALSE]

pf <- tryCatch(

mod_prototype_single$pathfinder(data = d_t, num_paths = 4, refresh = 0),

error = function(e) NULL

)

init_val <- if (!is.null(pf)) pf else 0.5

fit_t <- mod_prototype_single$sample(

data = d_t, init = init_val, chains = 2, parallel_chains = 2,

iter_warmup = 800, iter_sampling = 1000, refresh = 0, adapt_delta = 0.9

)

# Evaluate log_lik at trial t

d_ext <- proto_data_single

d_ext$ntrials <- t

d_ext$cat_one <- proto_data_single$cat_one[1:t]

d_ext$y <- proto_data_single$y[1:t]

d_ext$obs <- proto_data_single$obs[1:t, , drop = FALSE]

# ── THE FIX: Called on mod_prototype_single instead of fit_t ──

gq <- mod_prototype_single$generate_quantities(fitted_params = fit_t, data = d_ext)

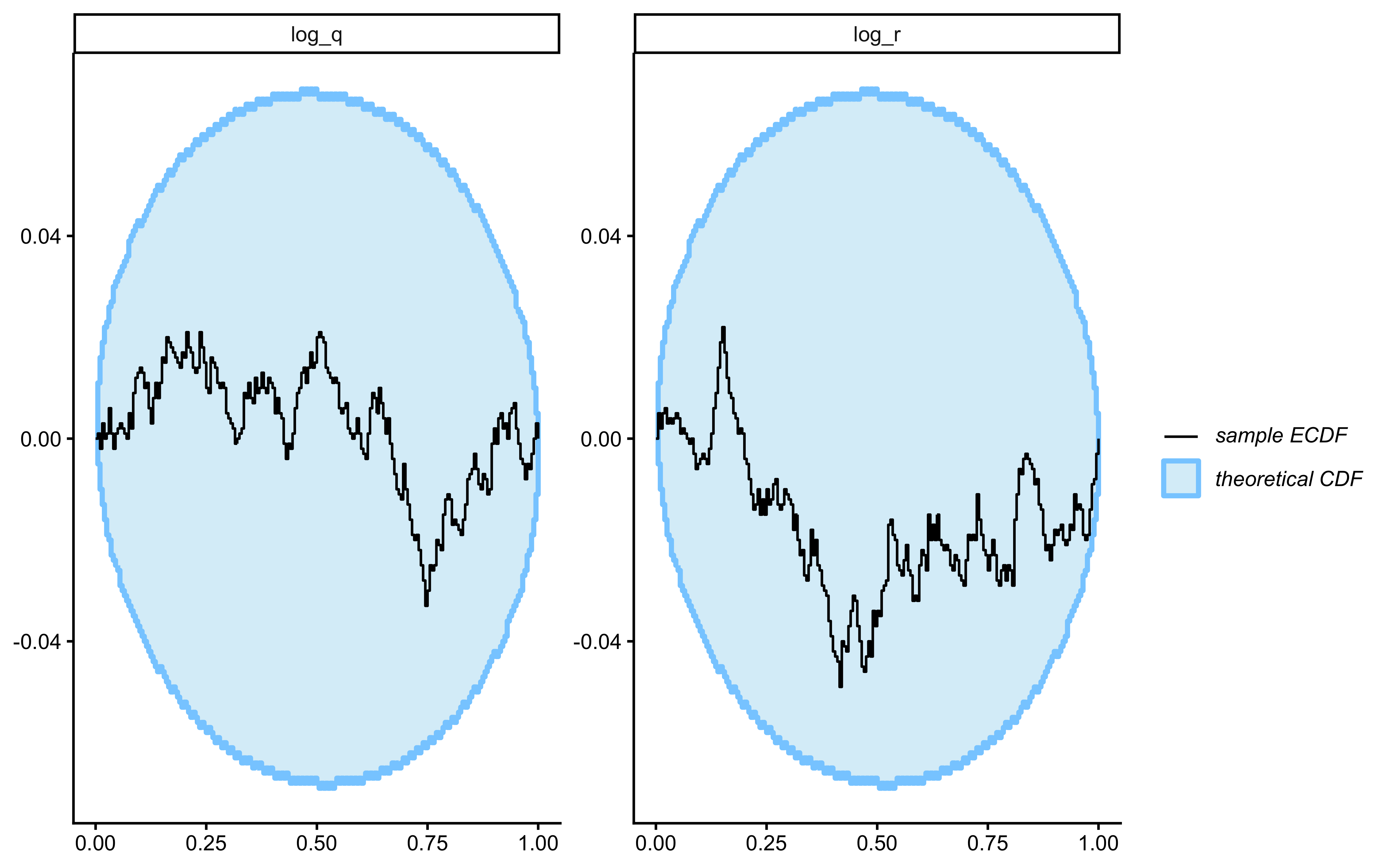

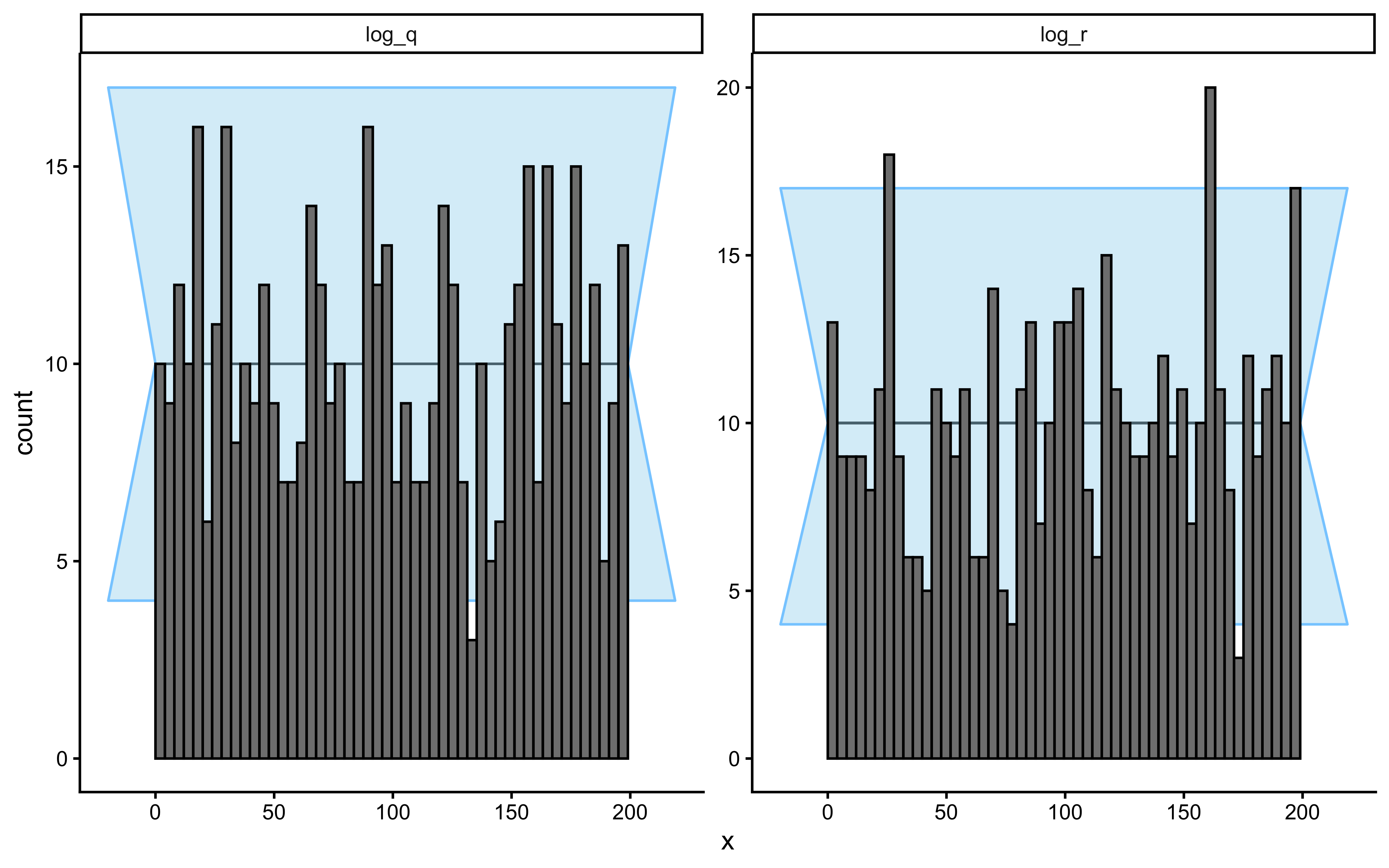

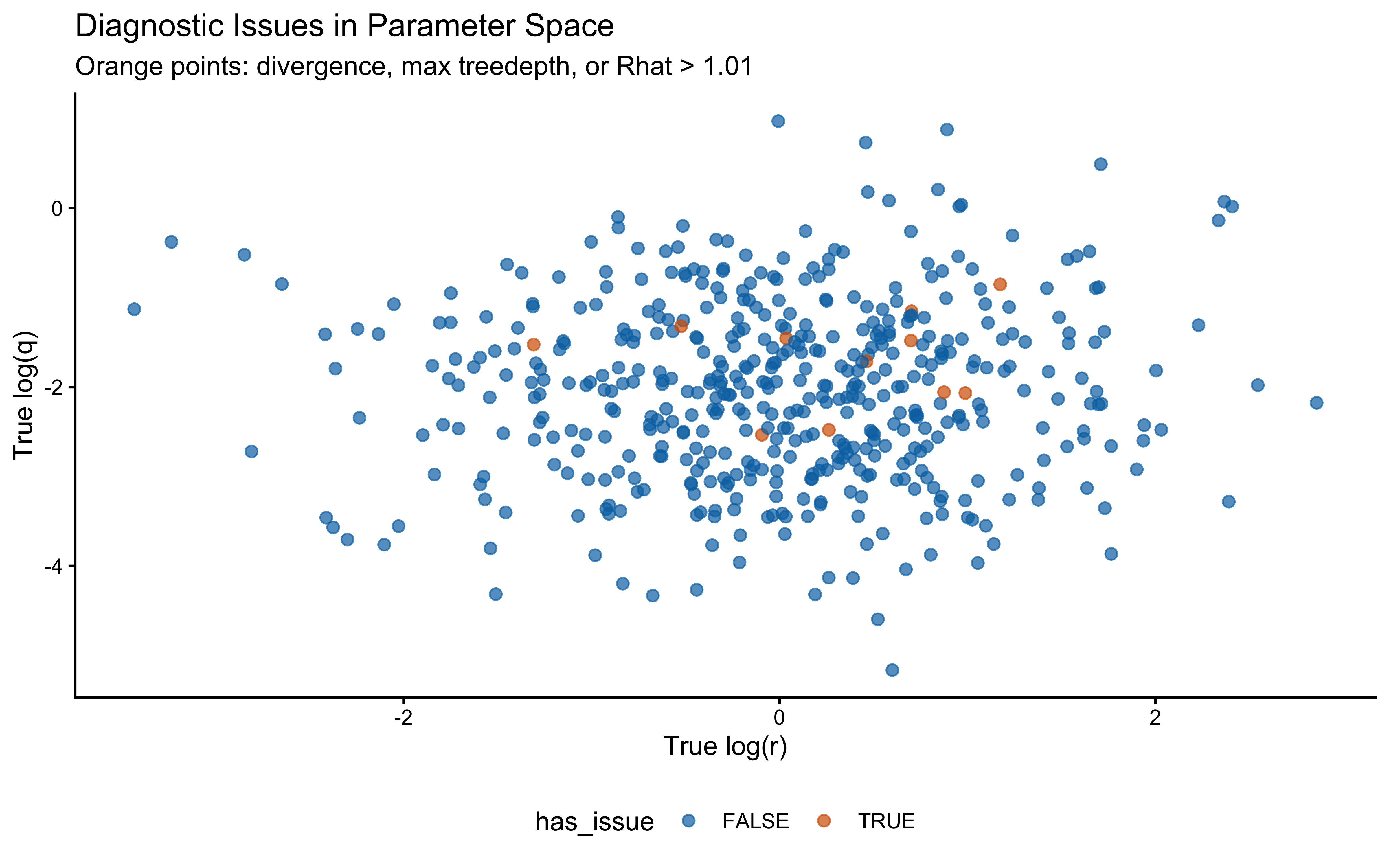

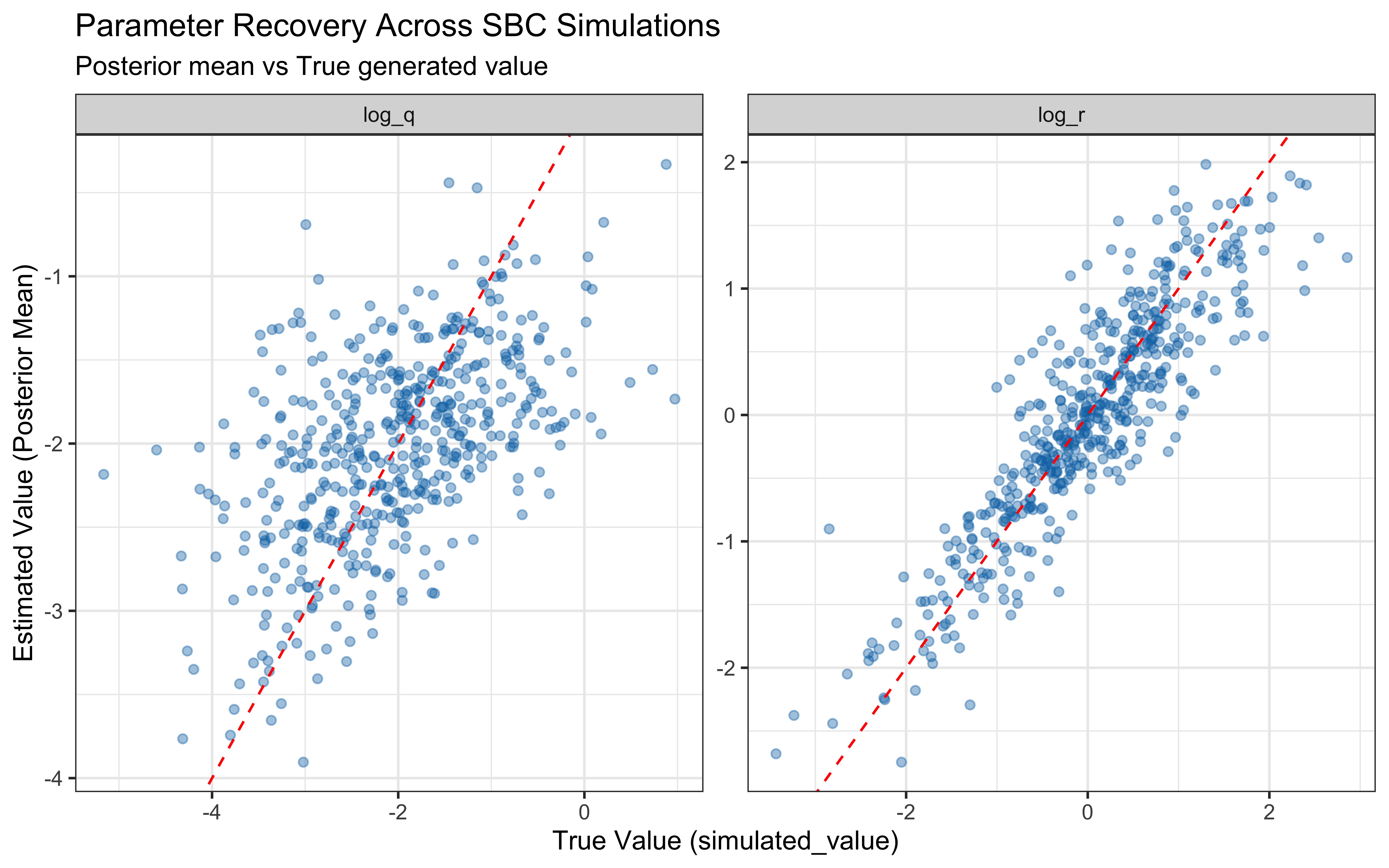

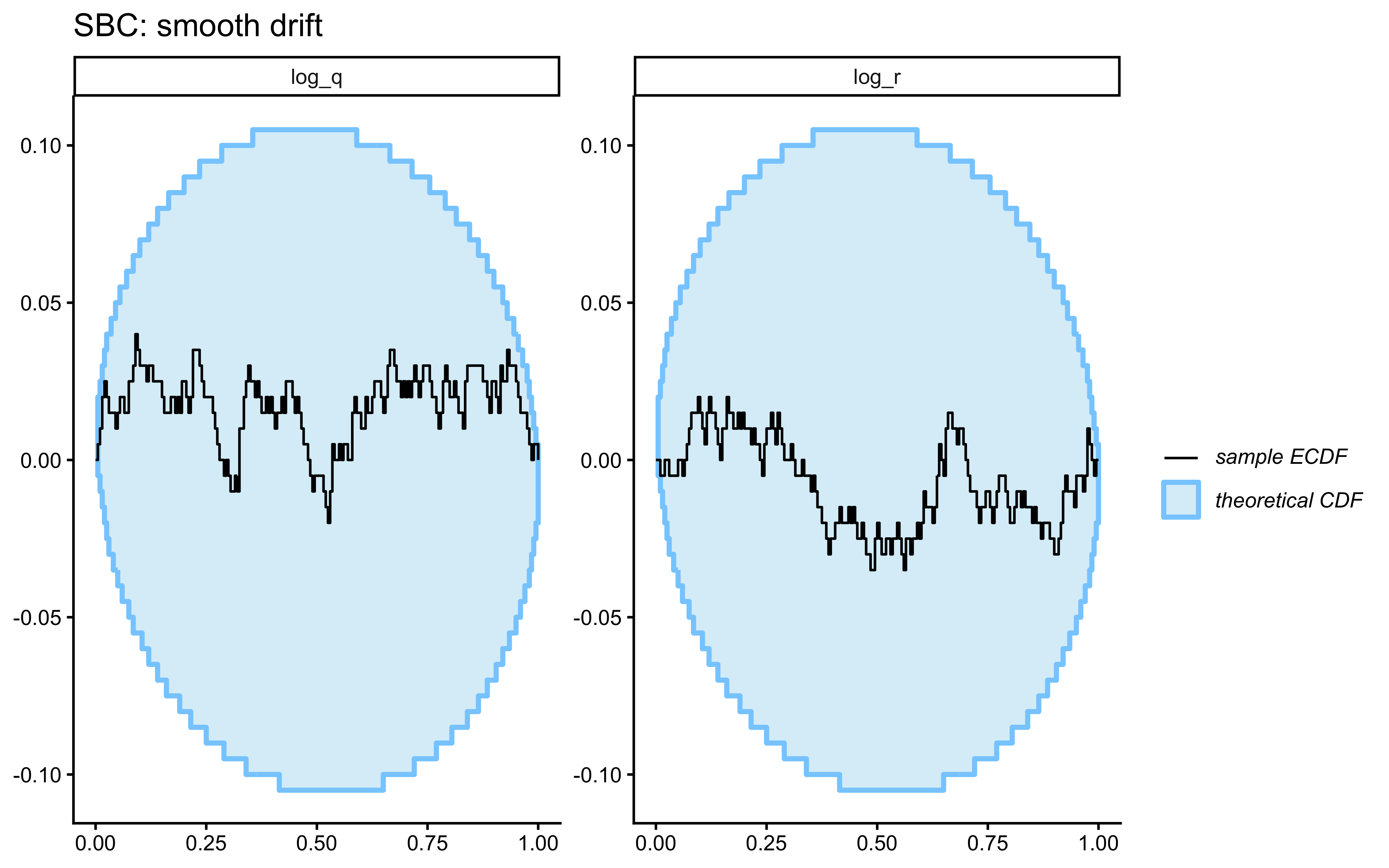

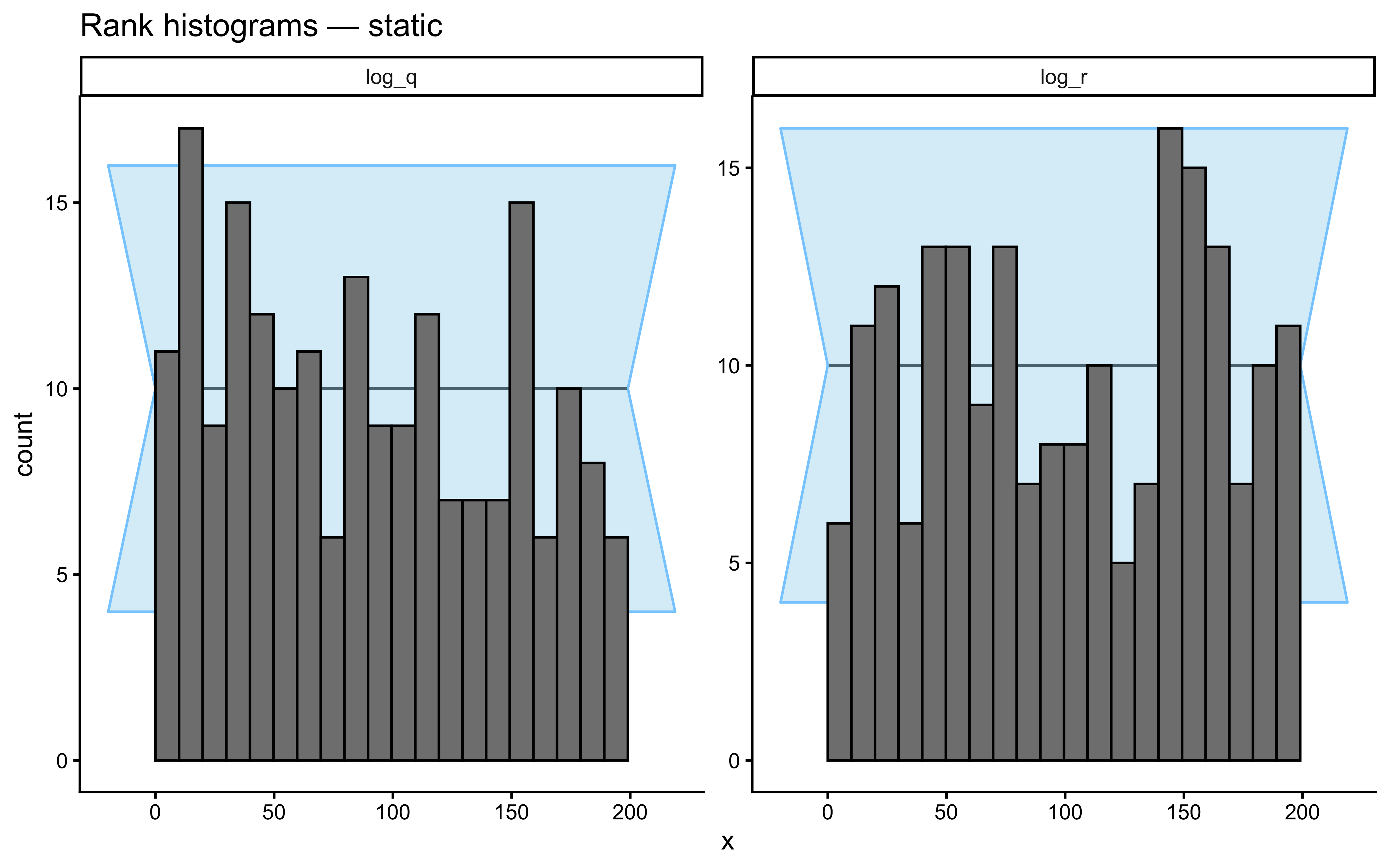

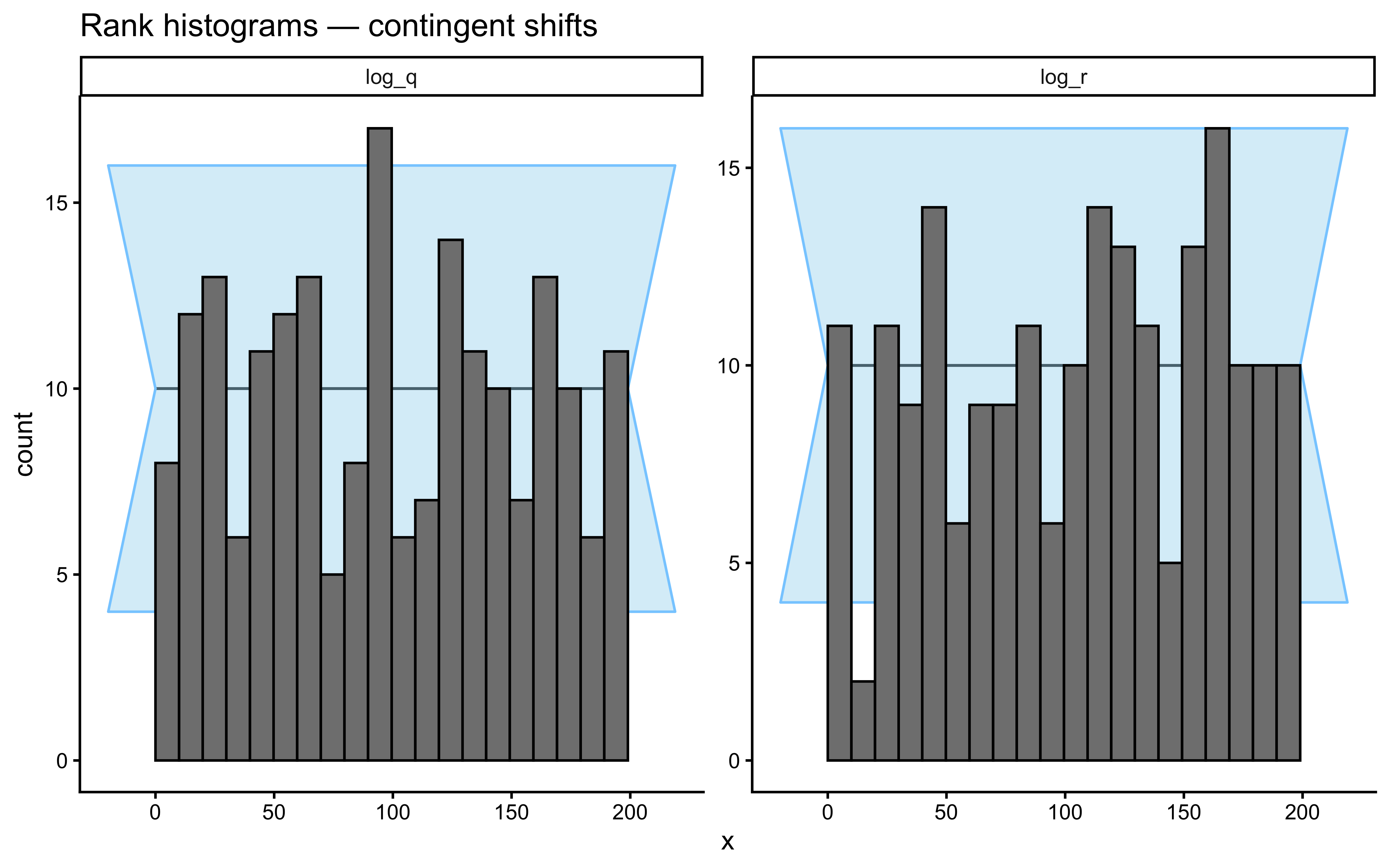

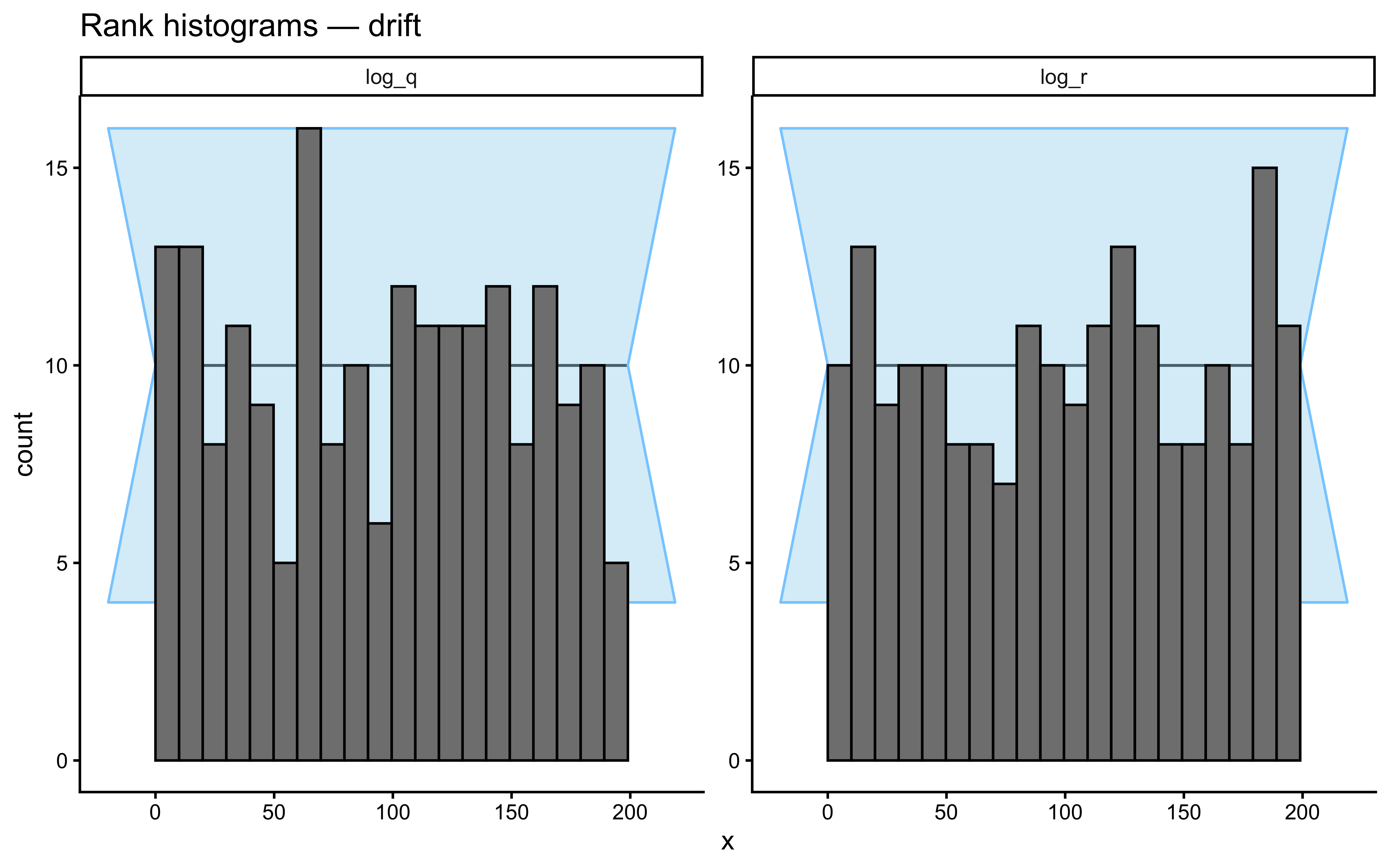

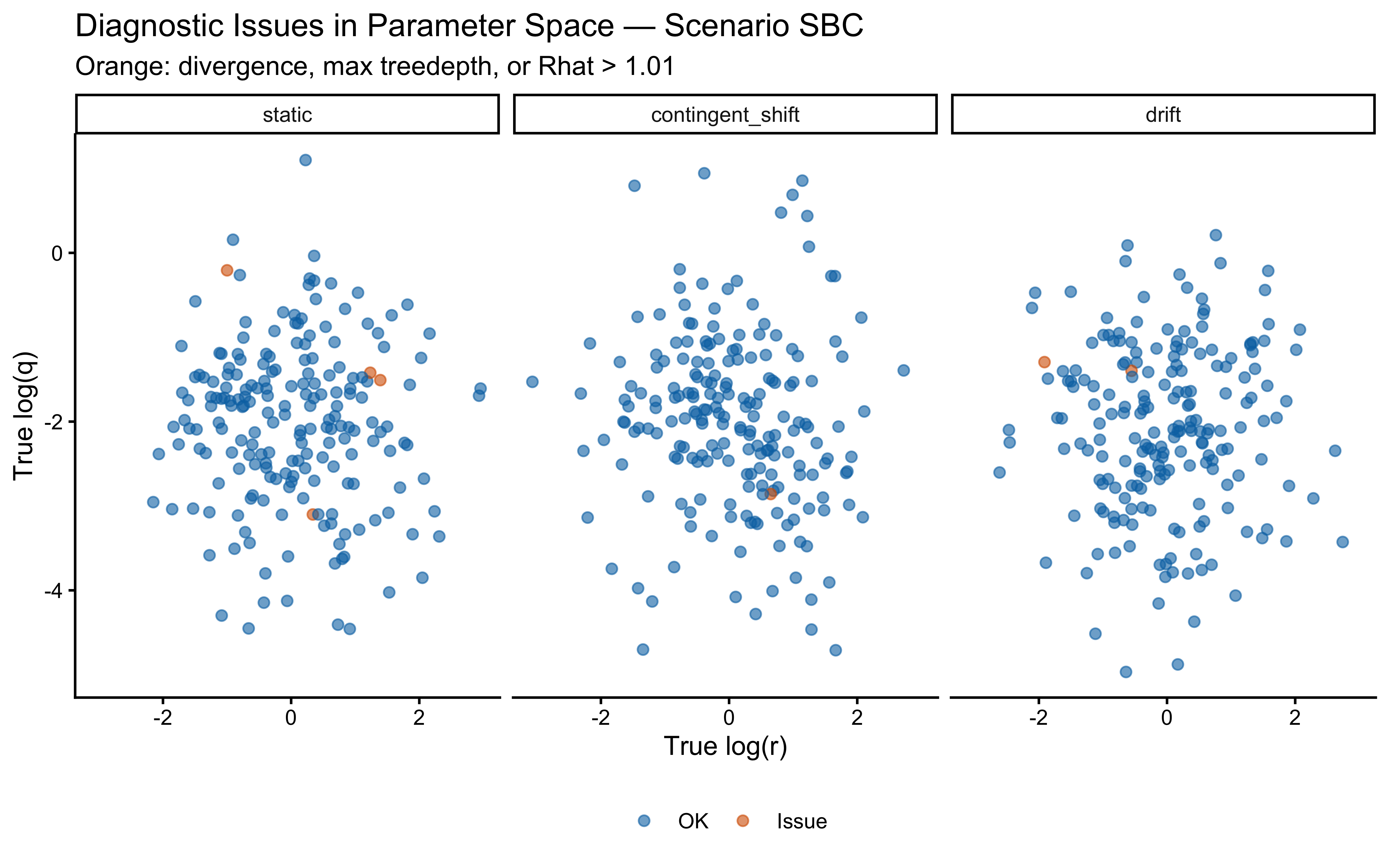

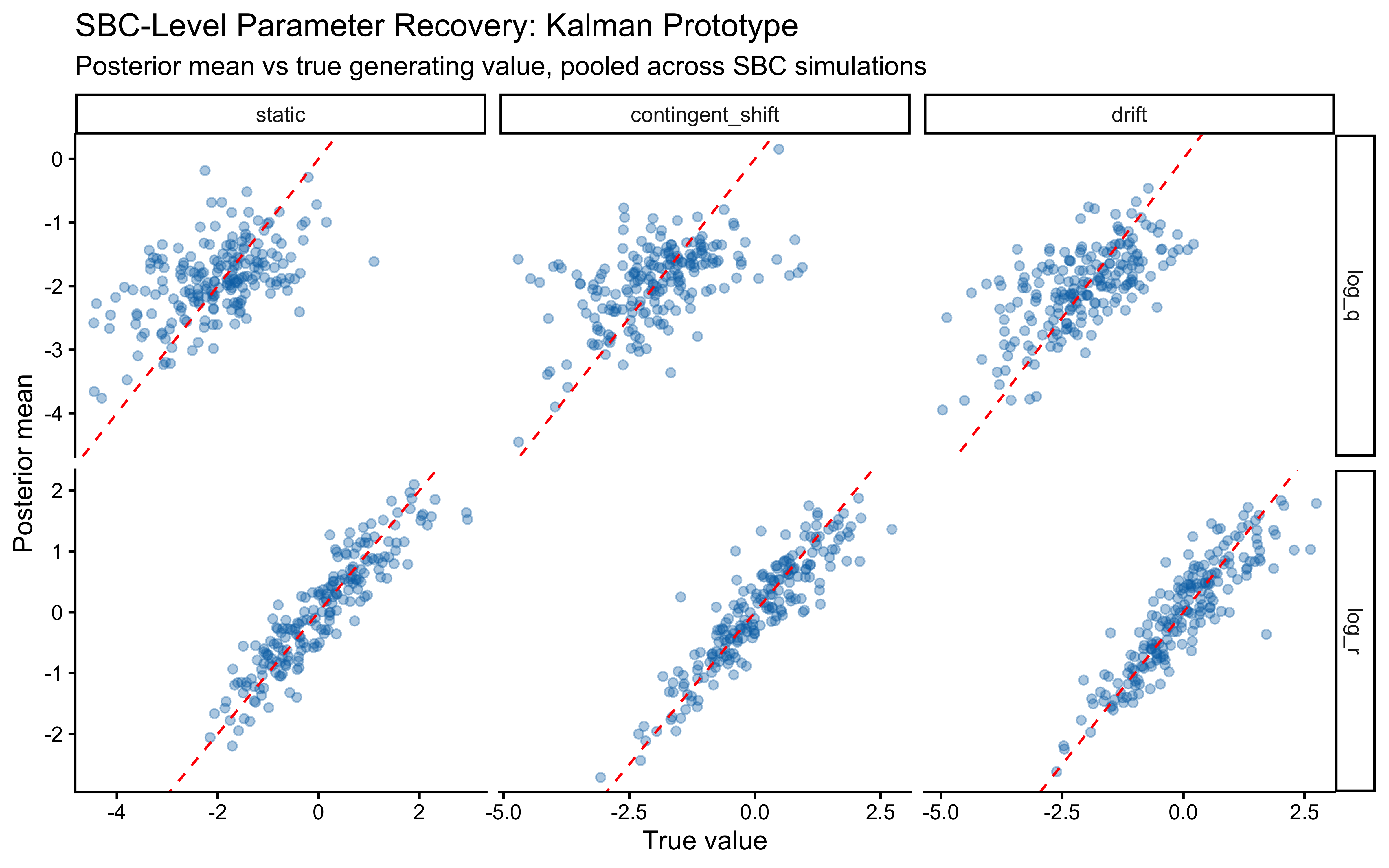

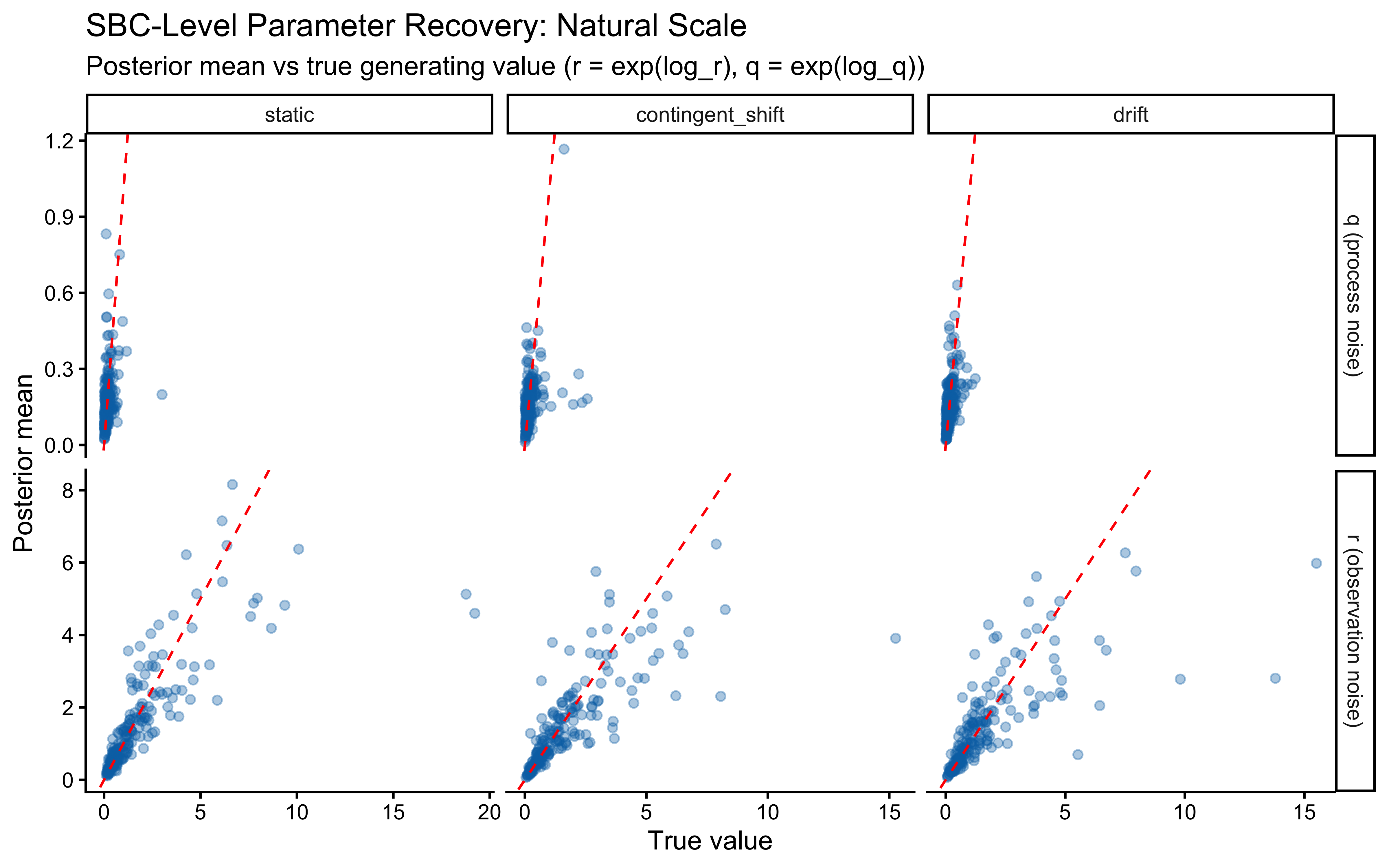

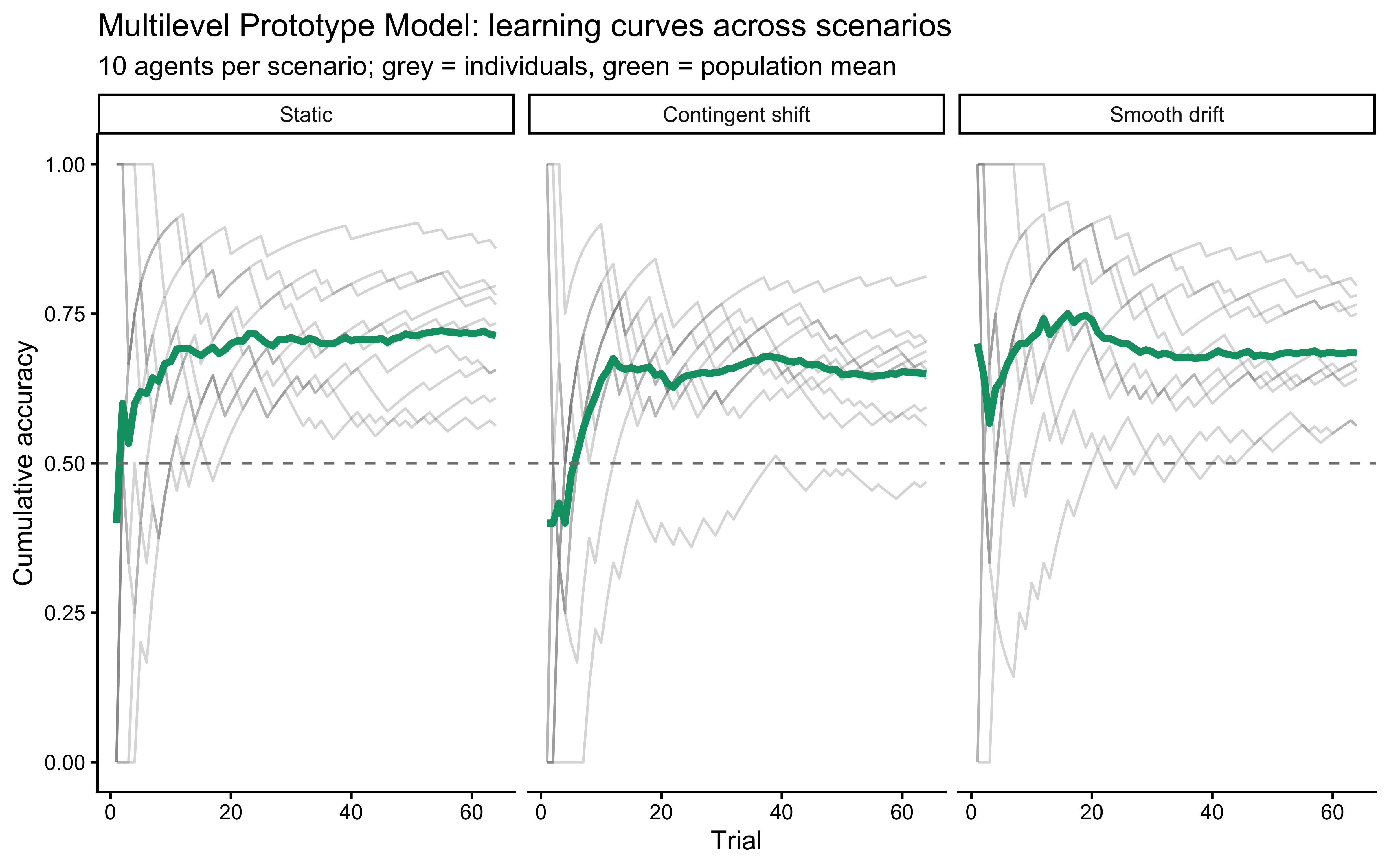

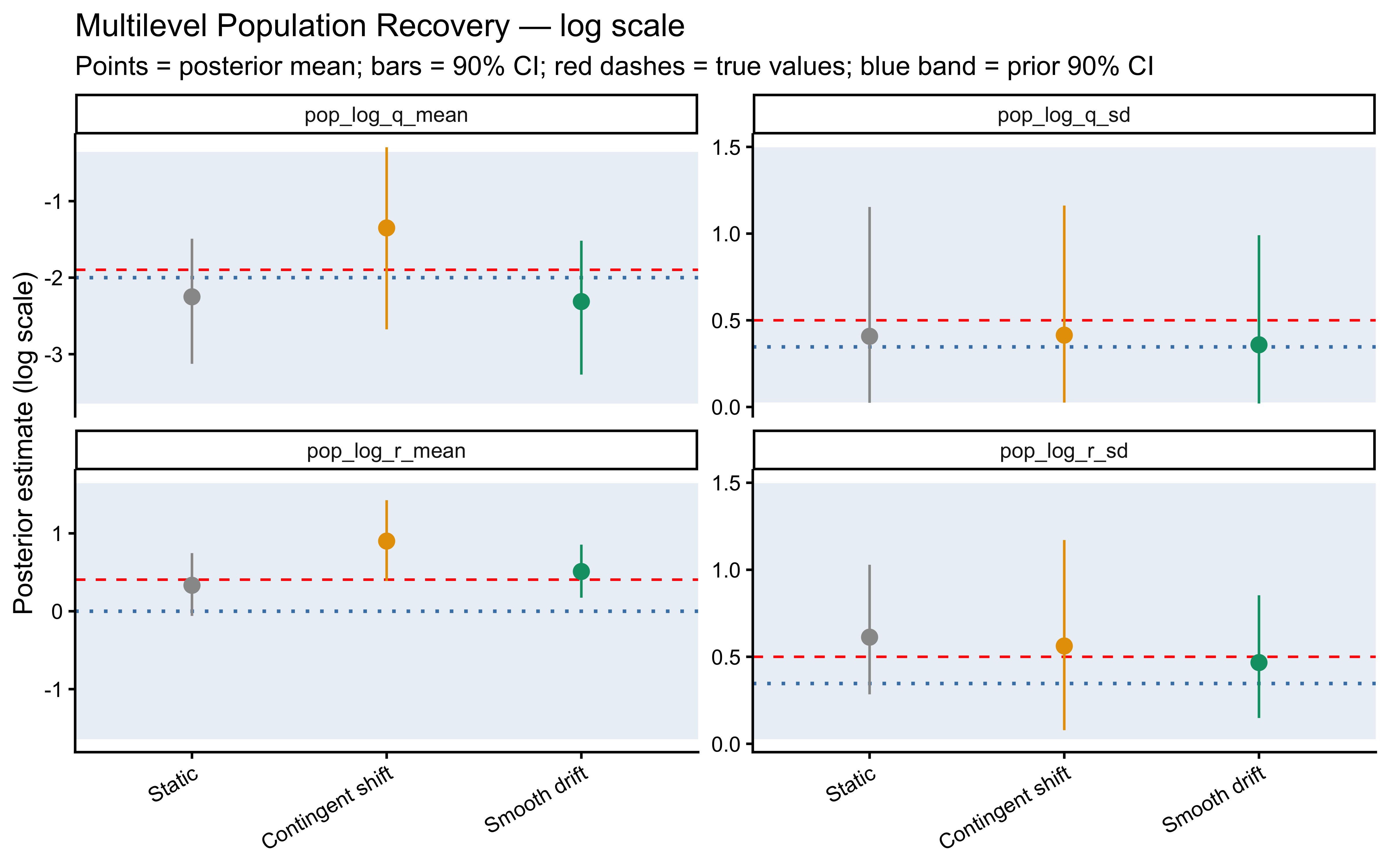

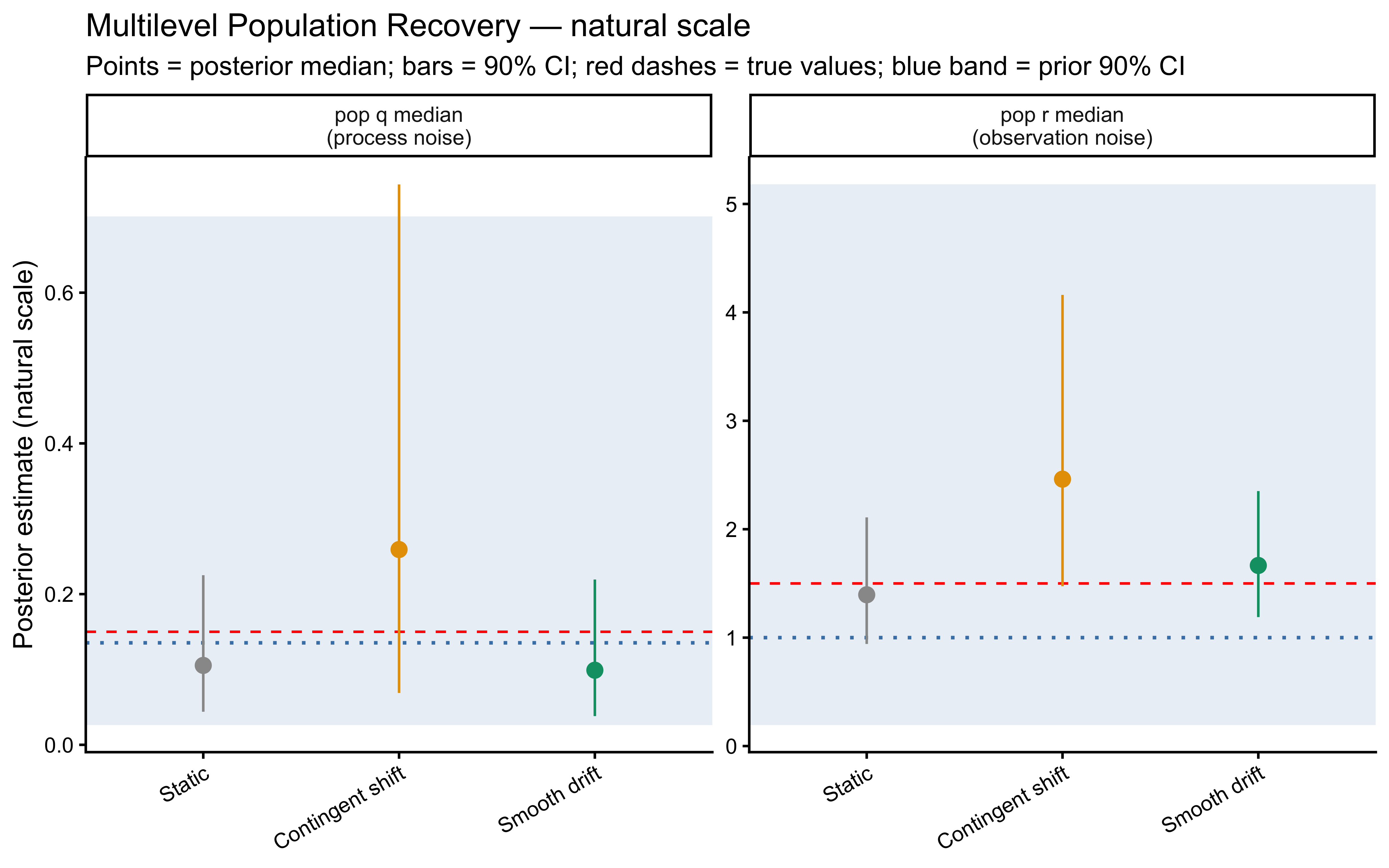

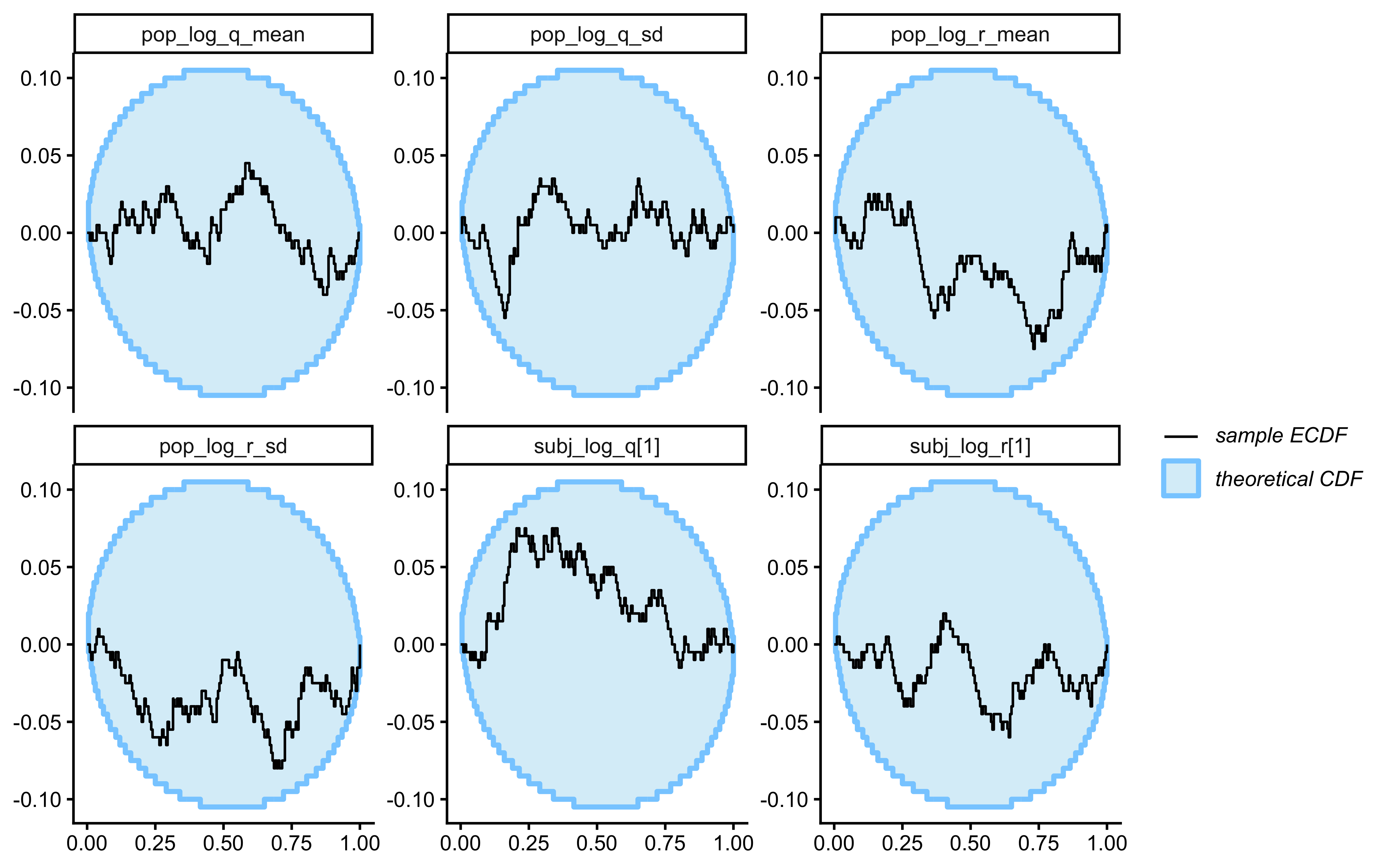

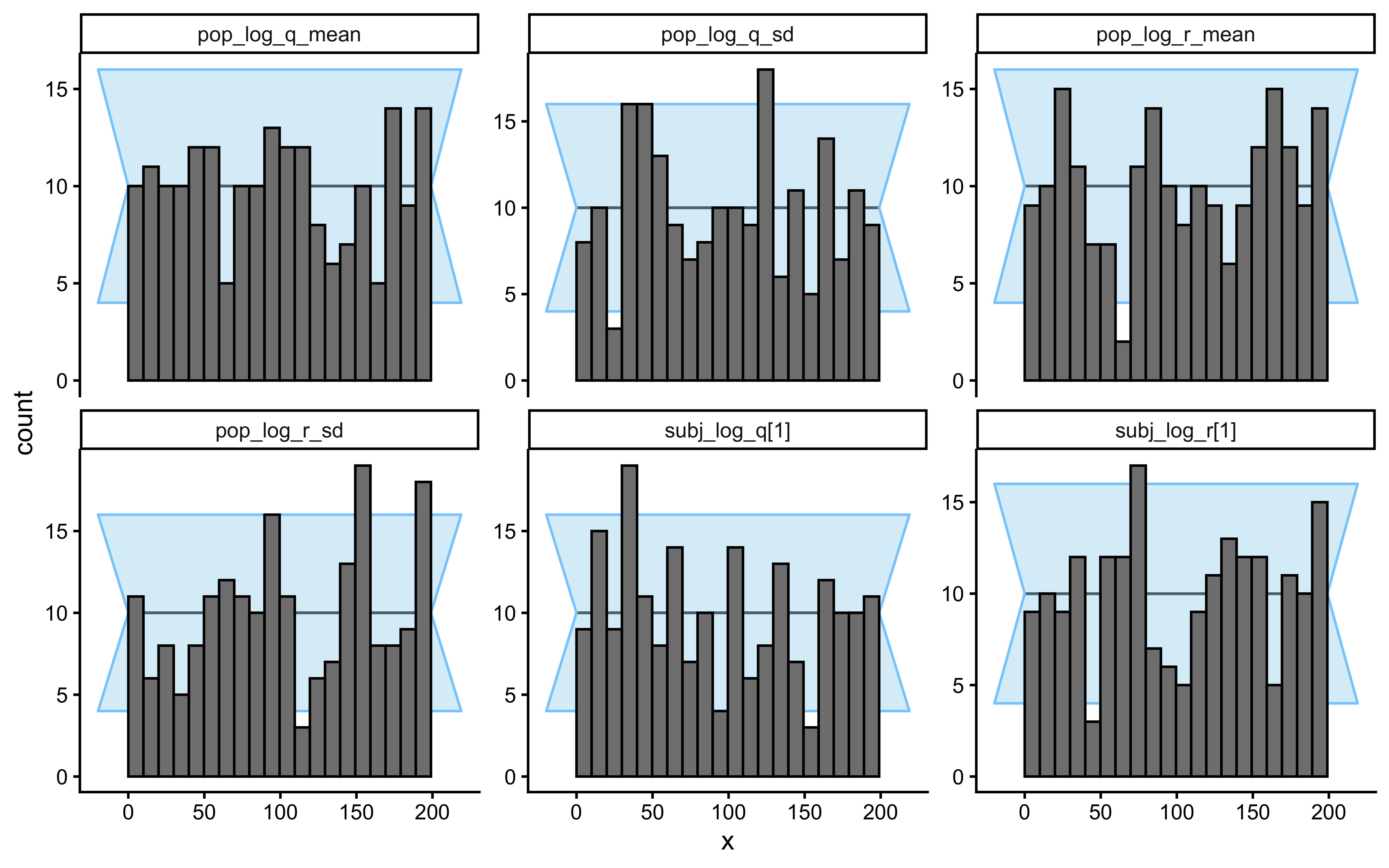

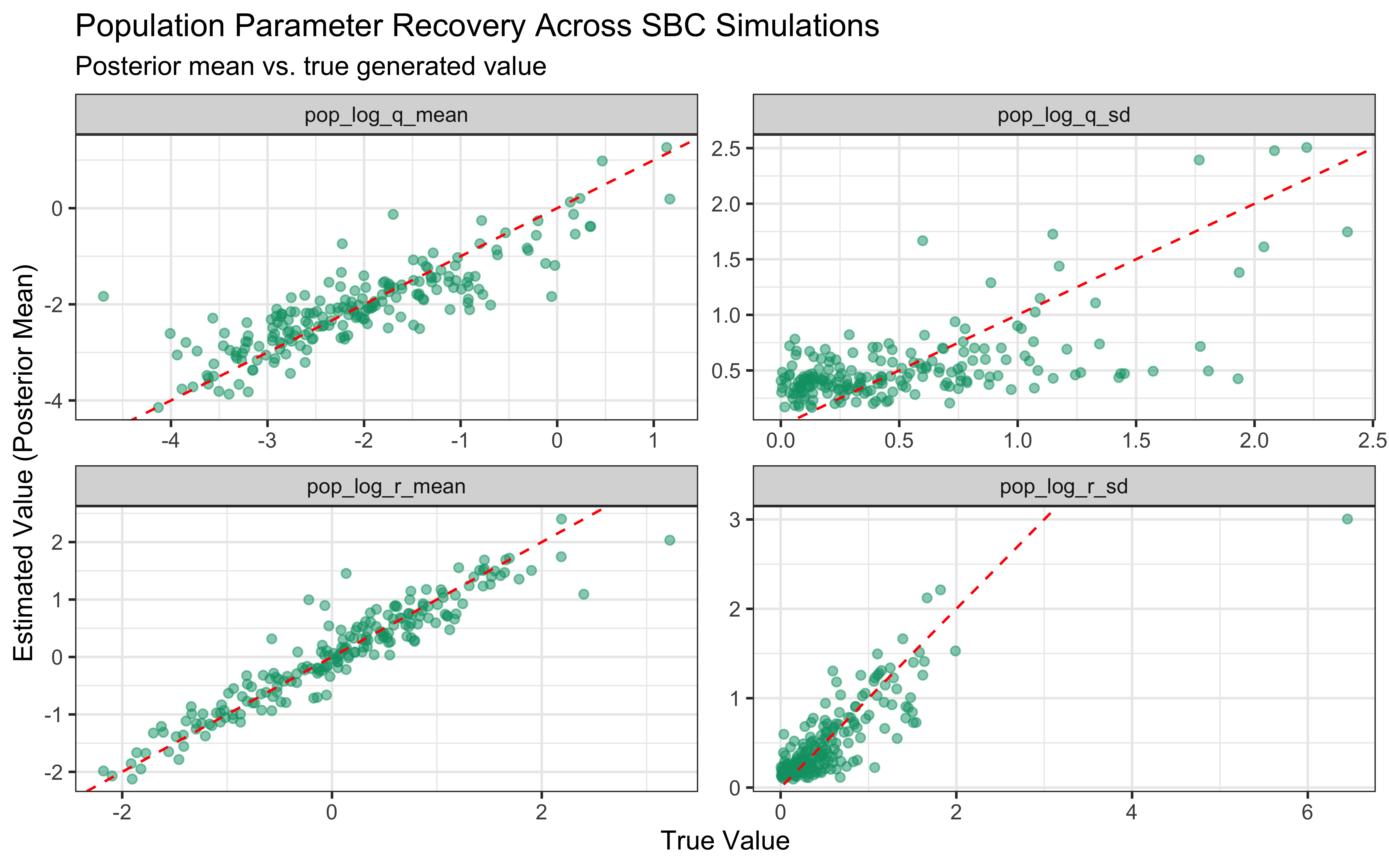

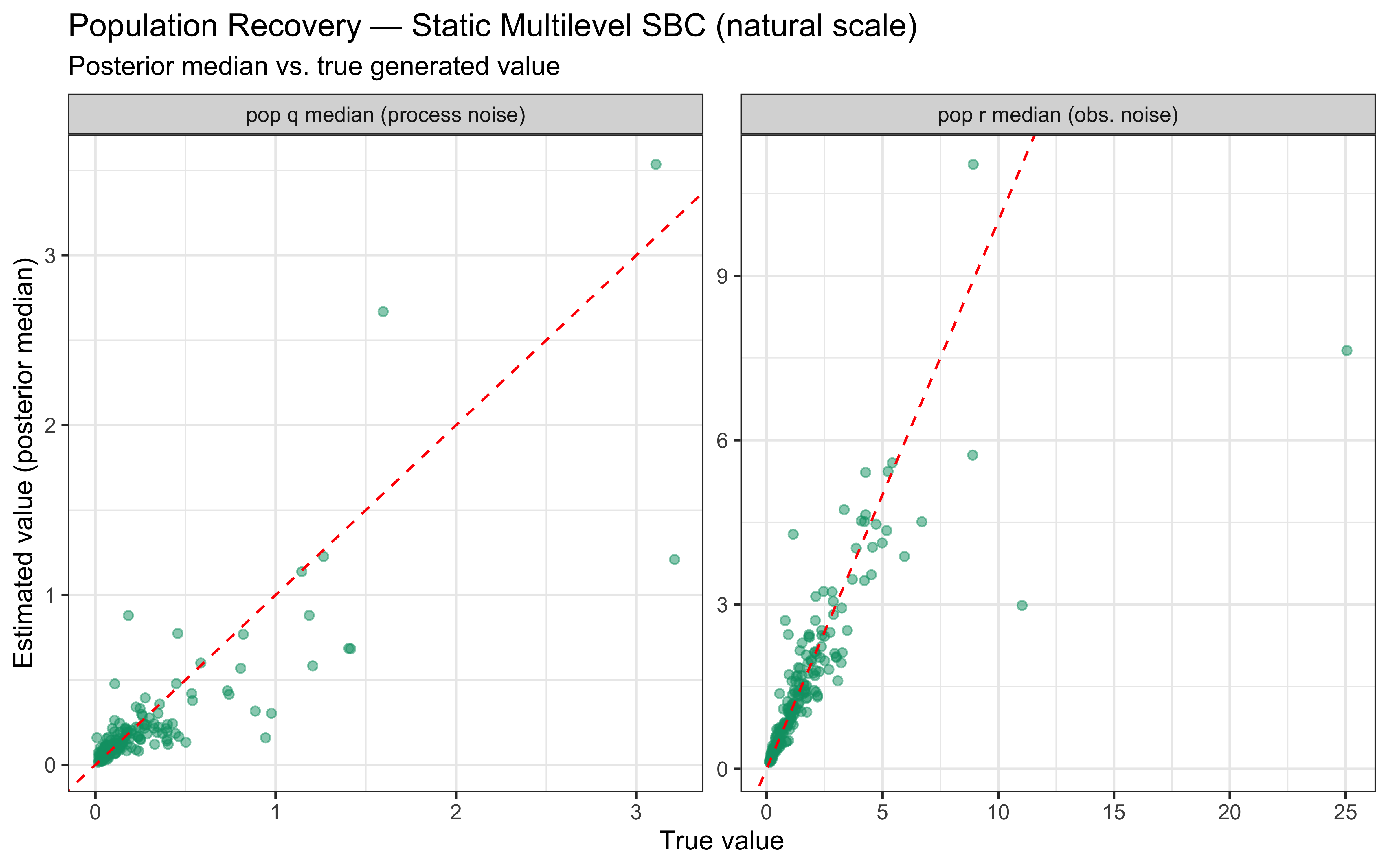

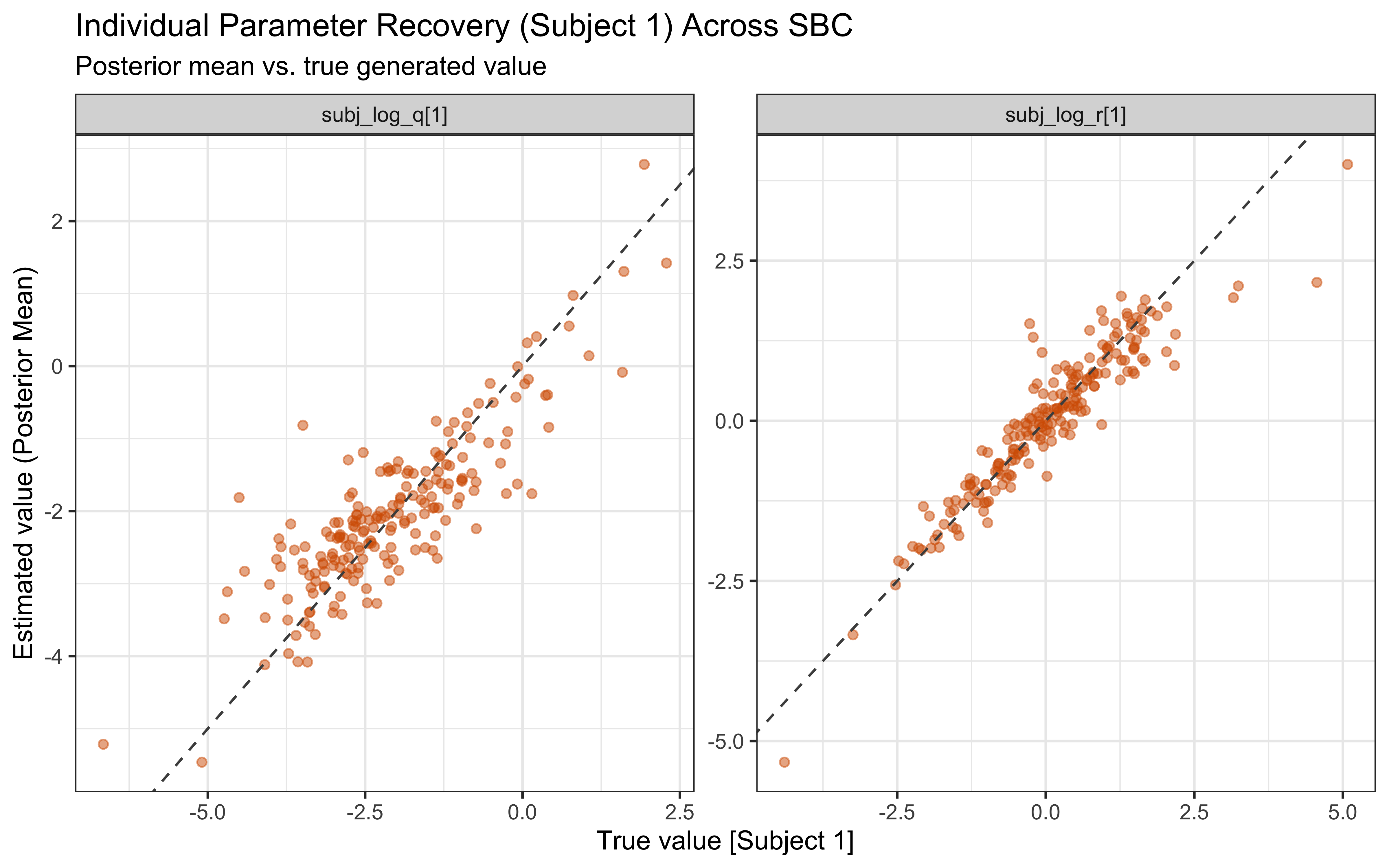

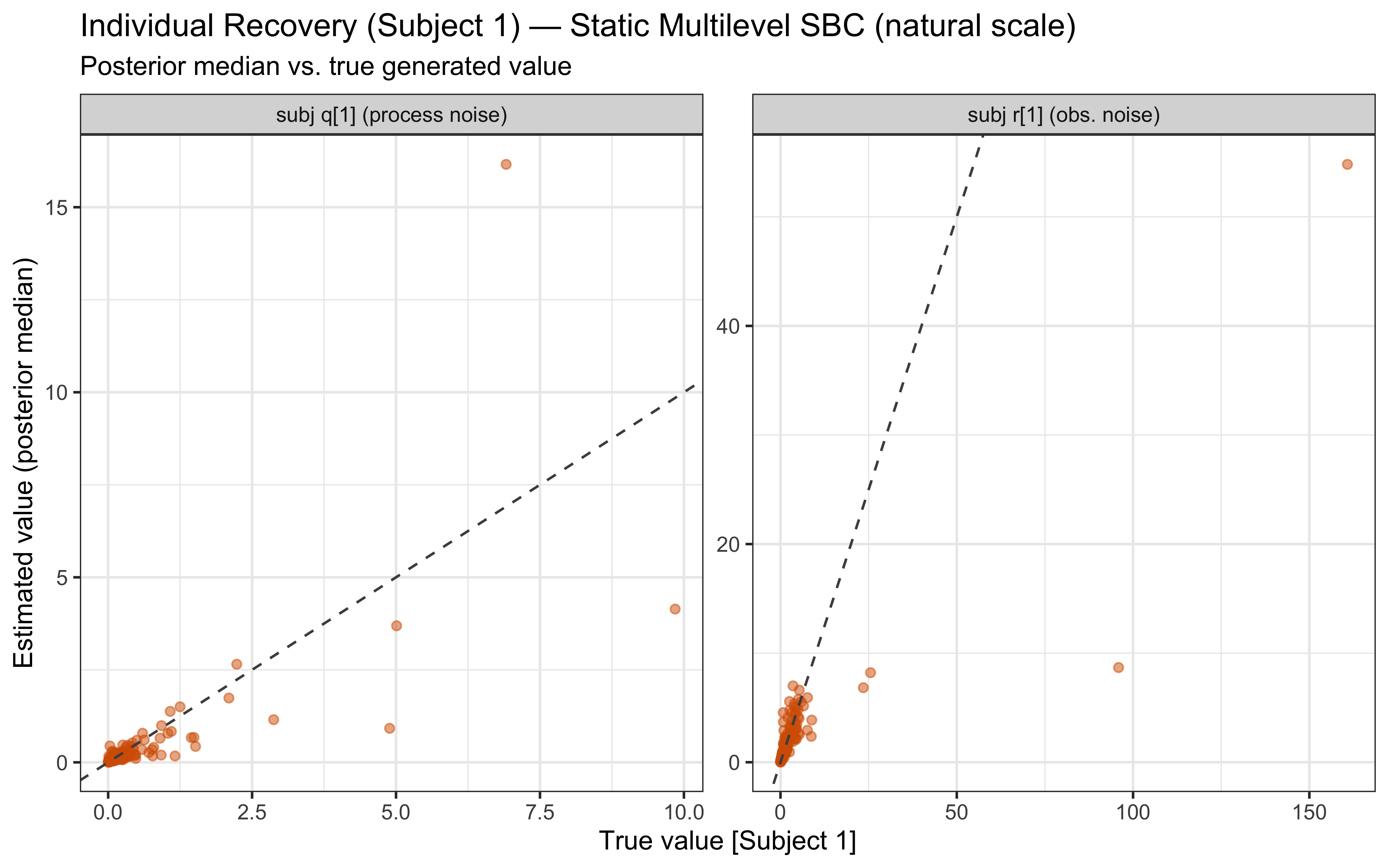

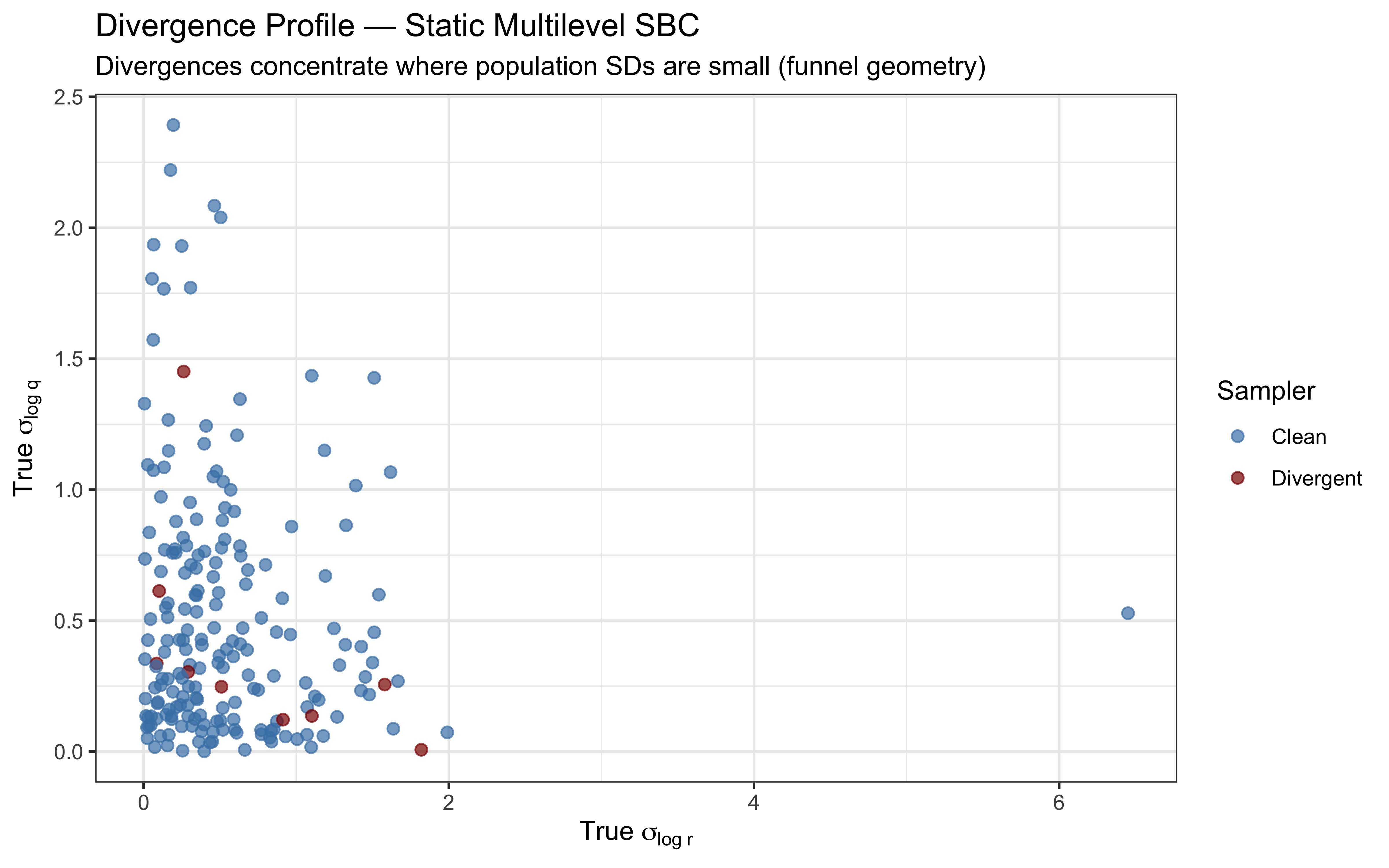

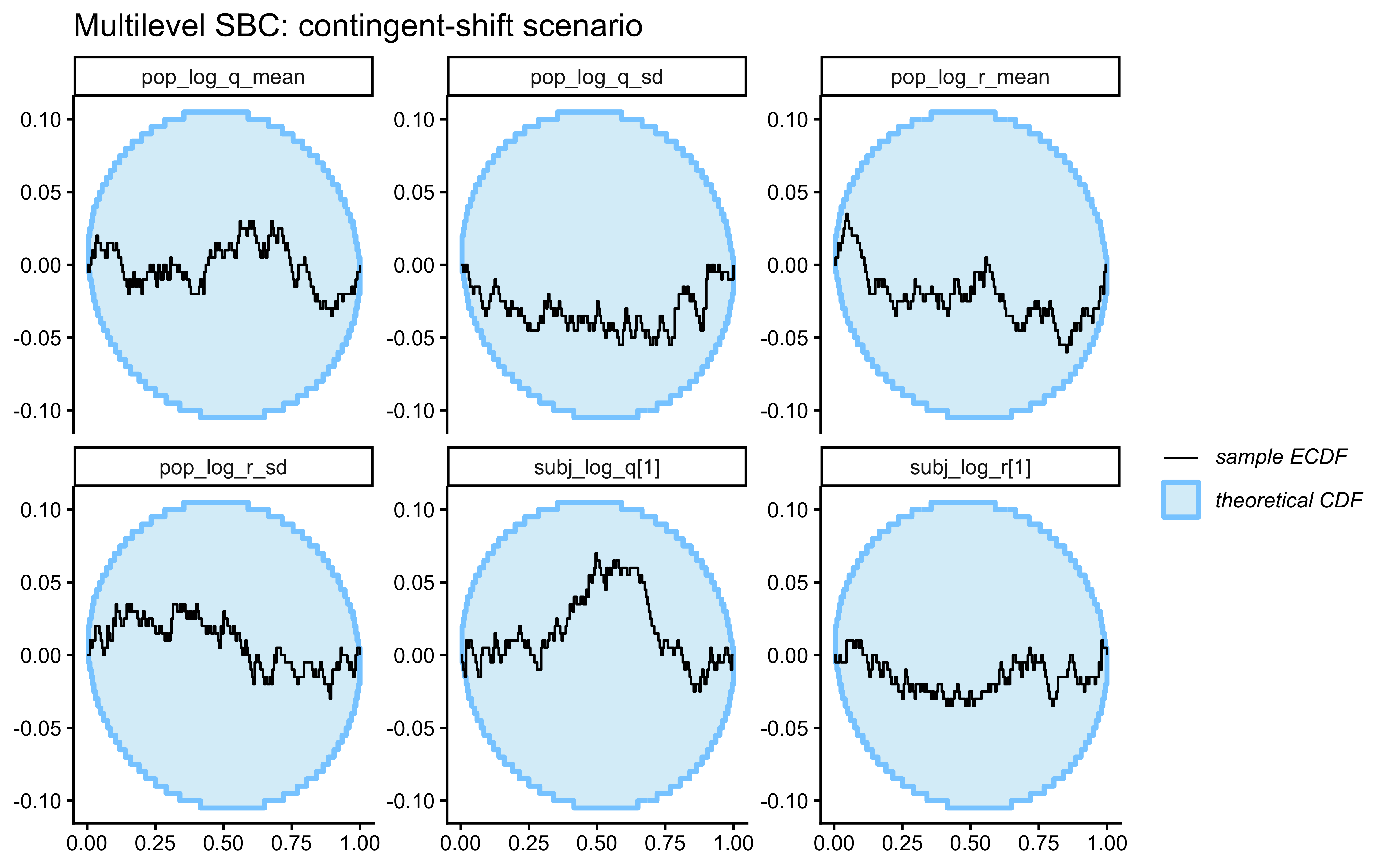

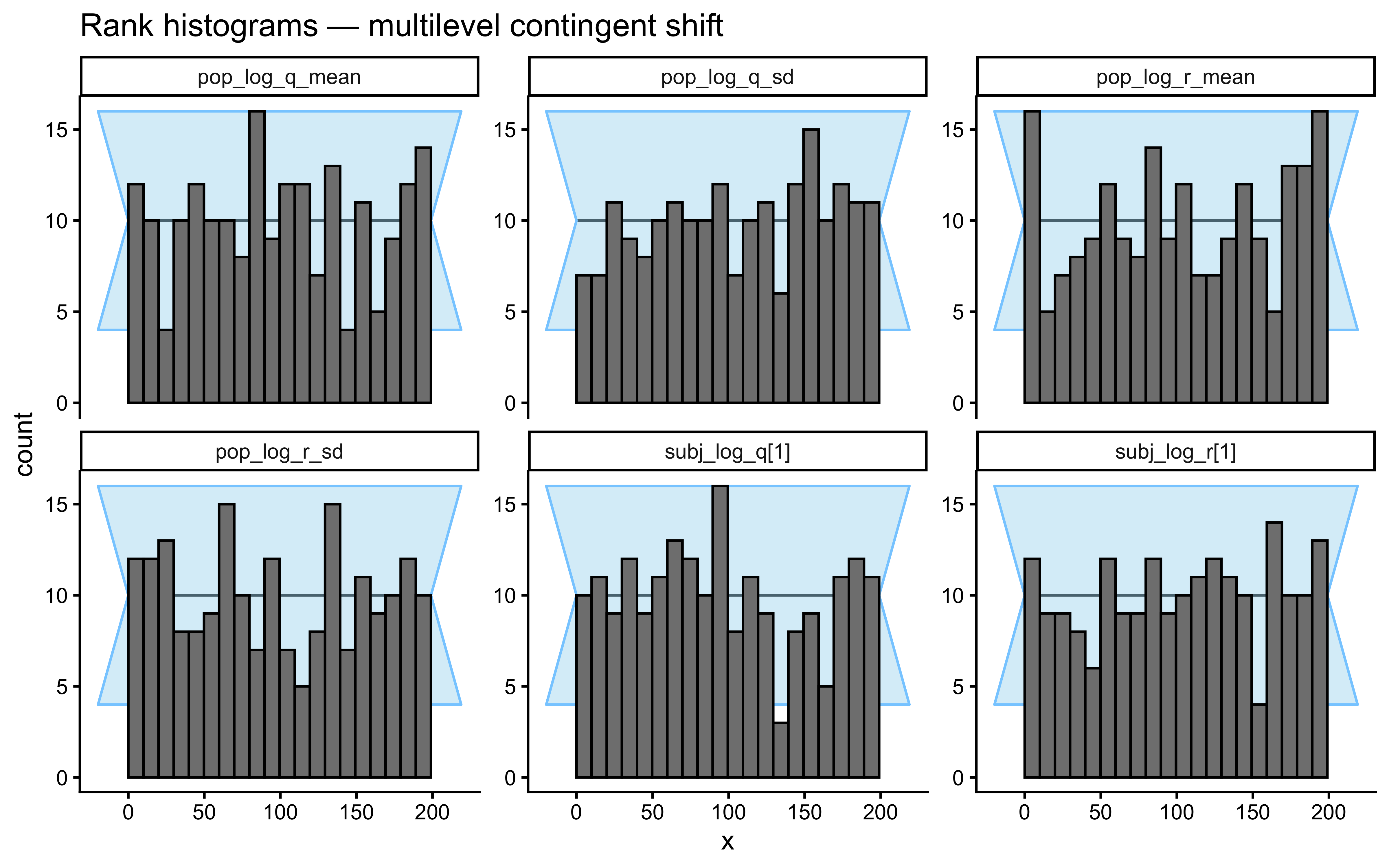

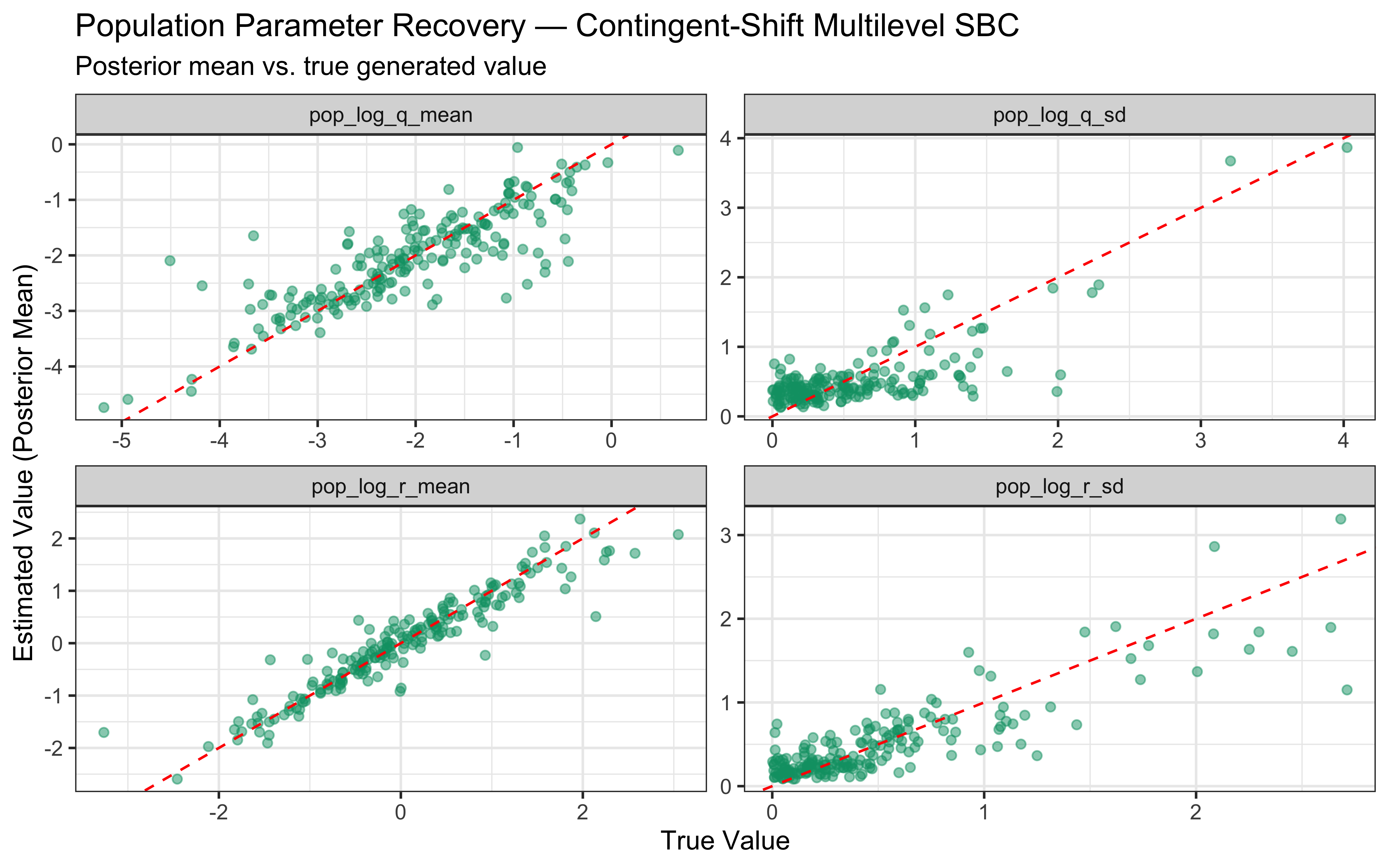

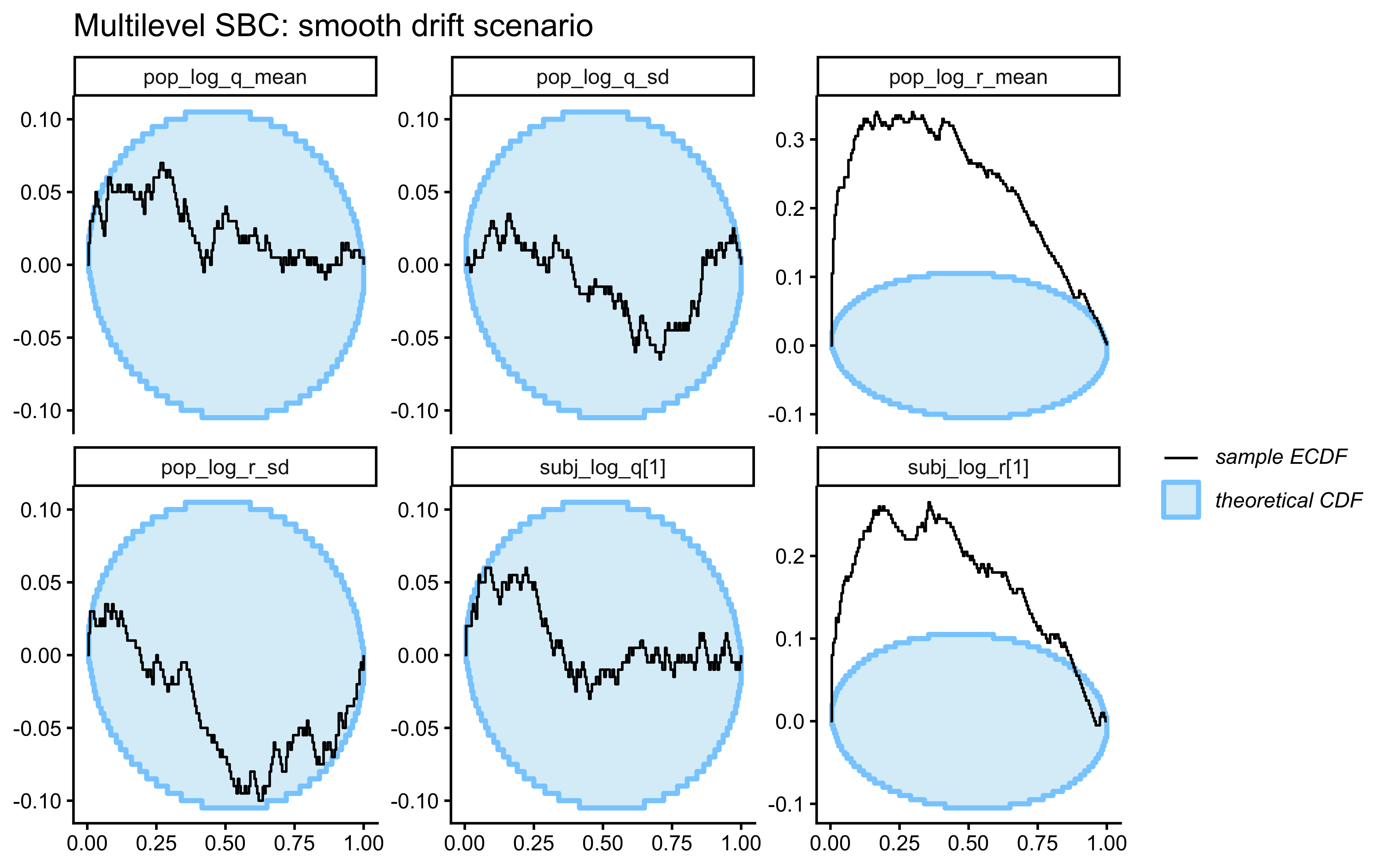

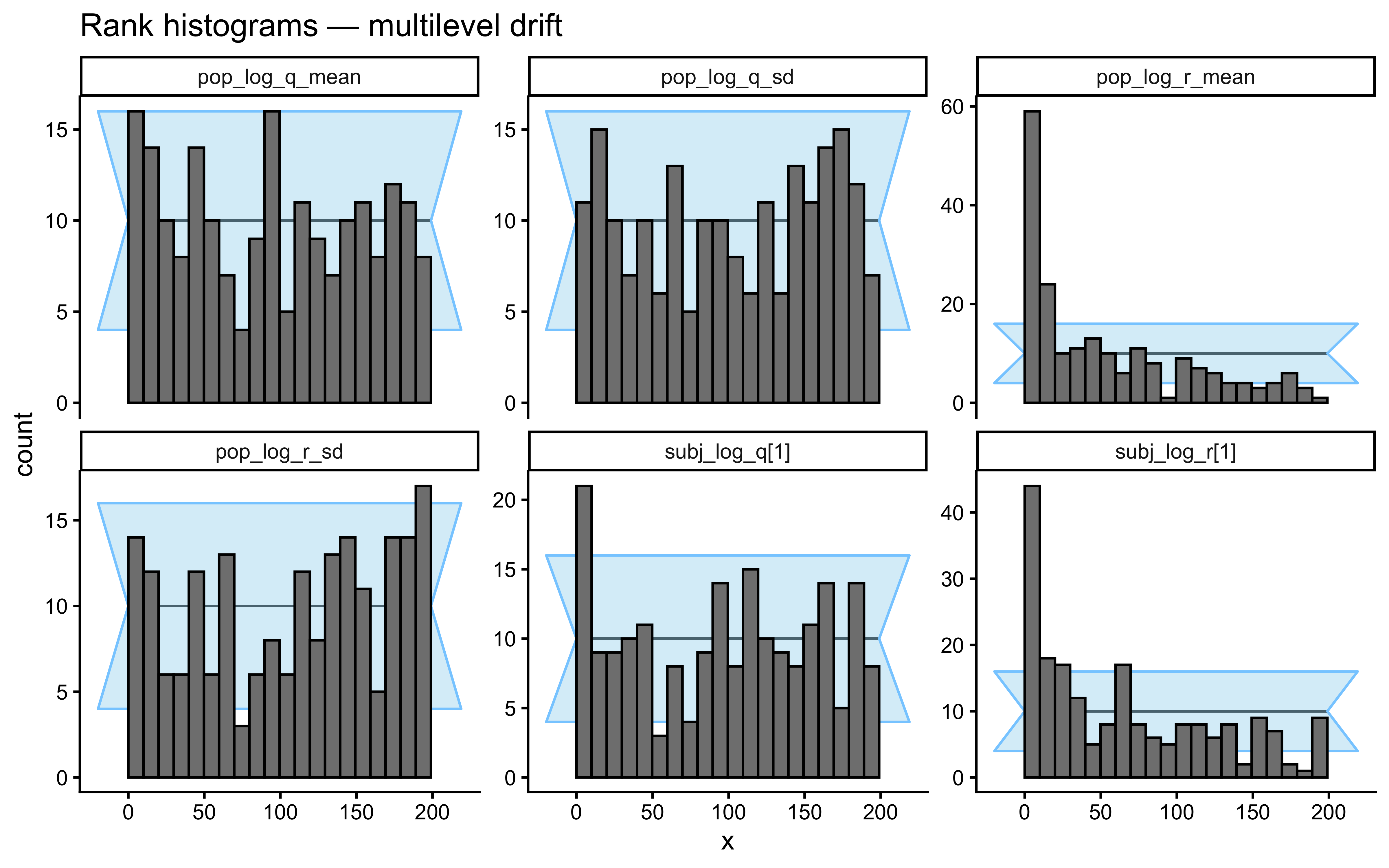

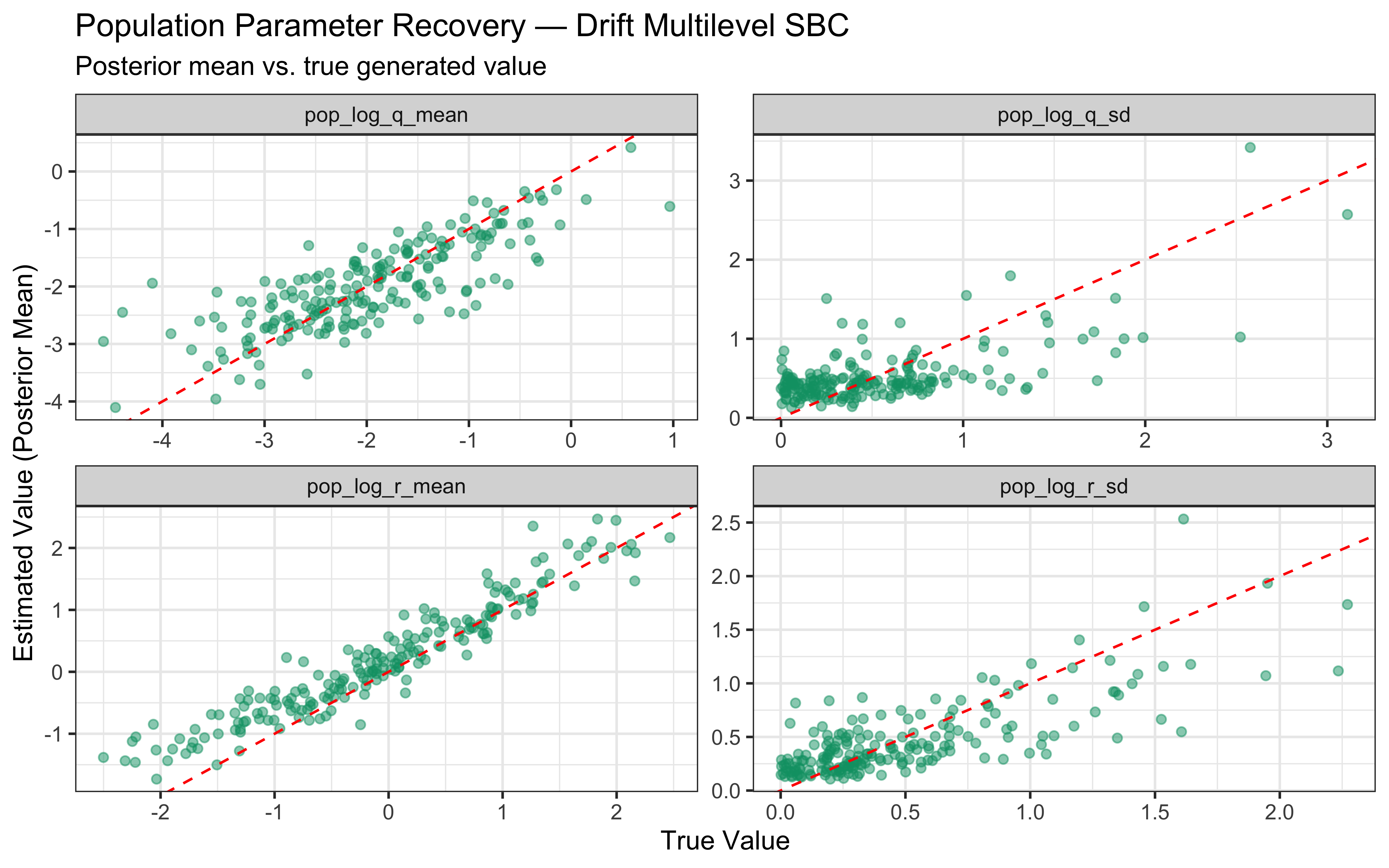

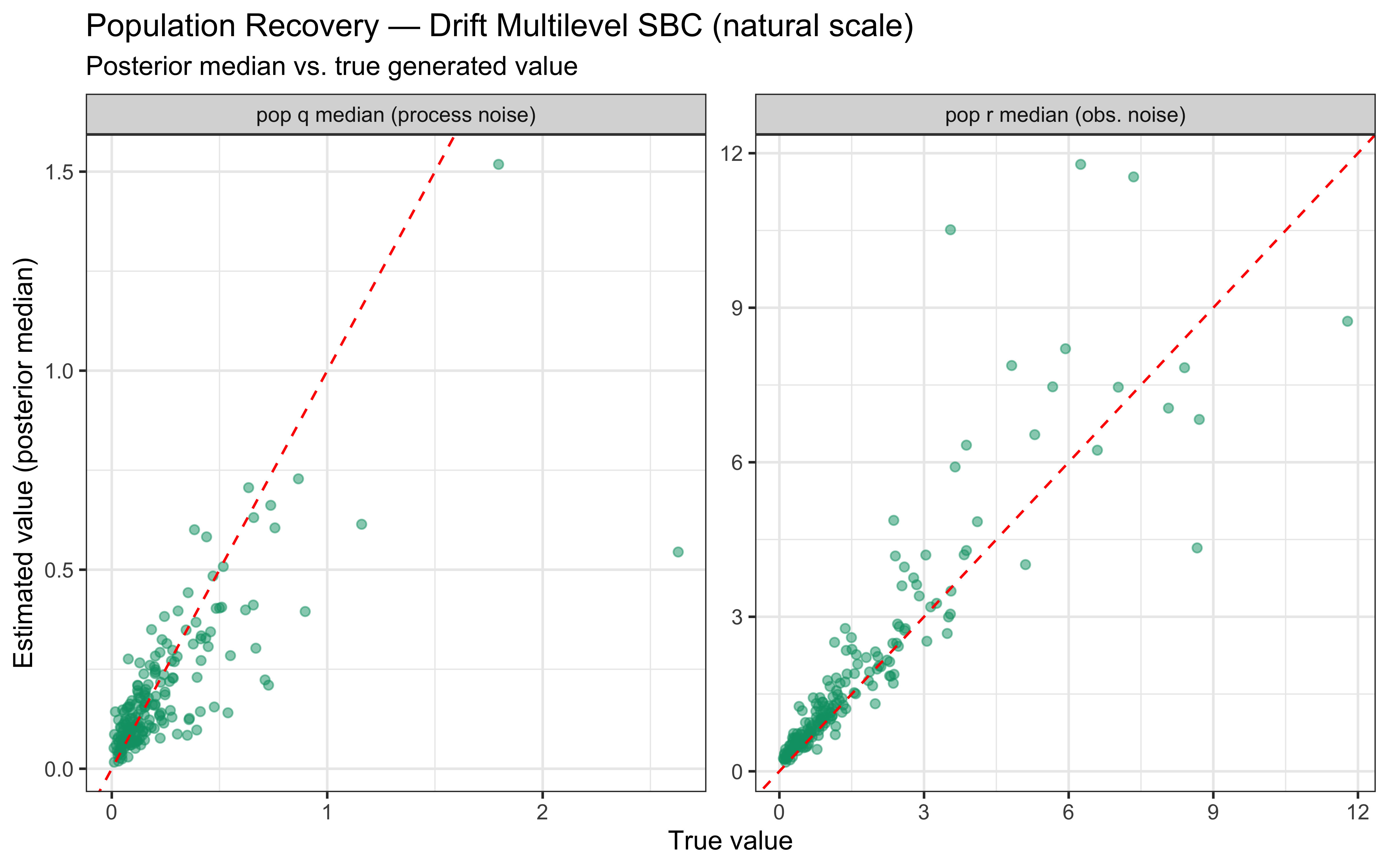

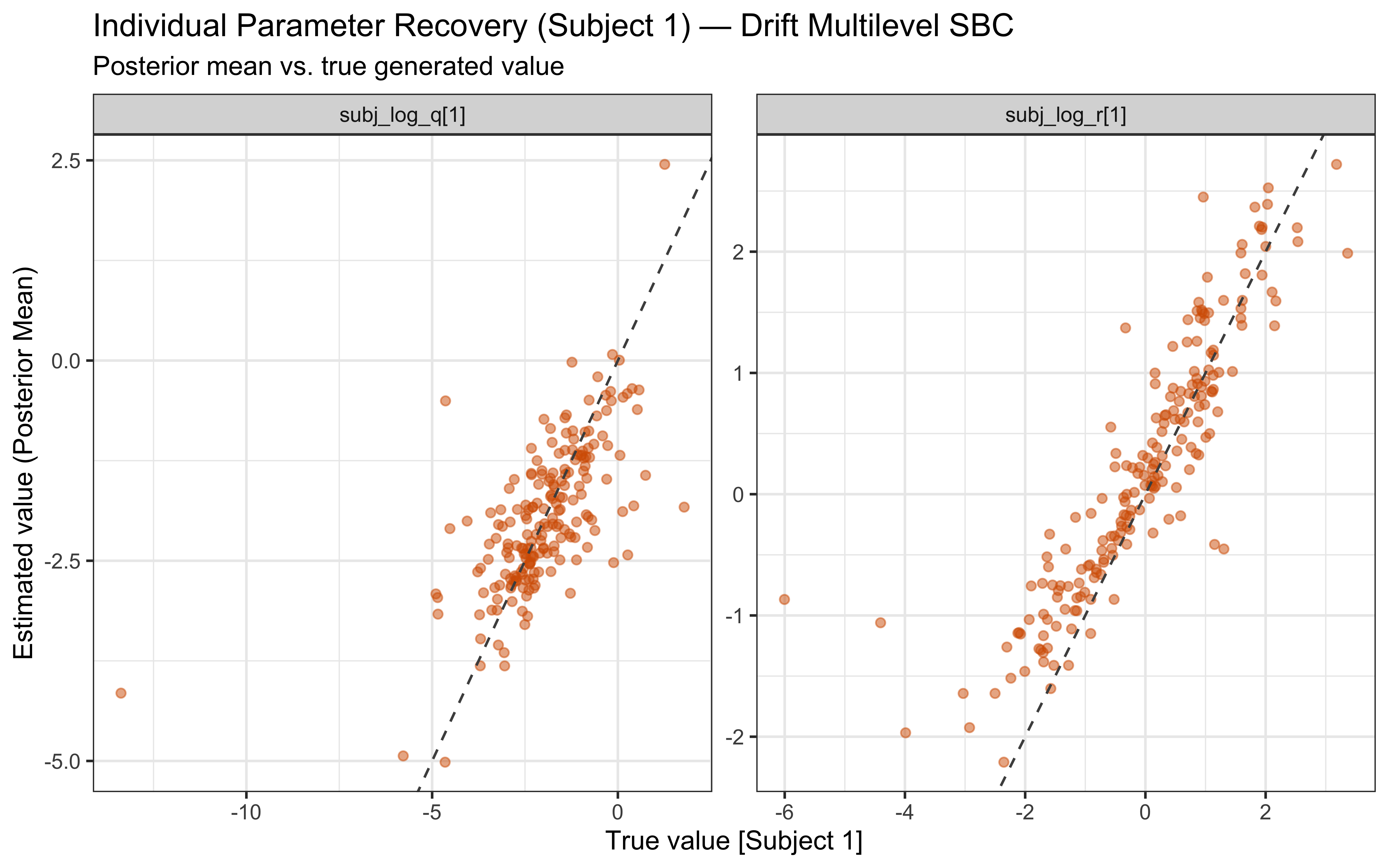

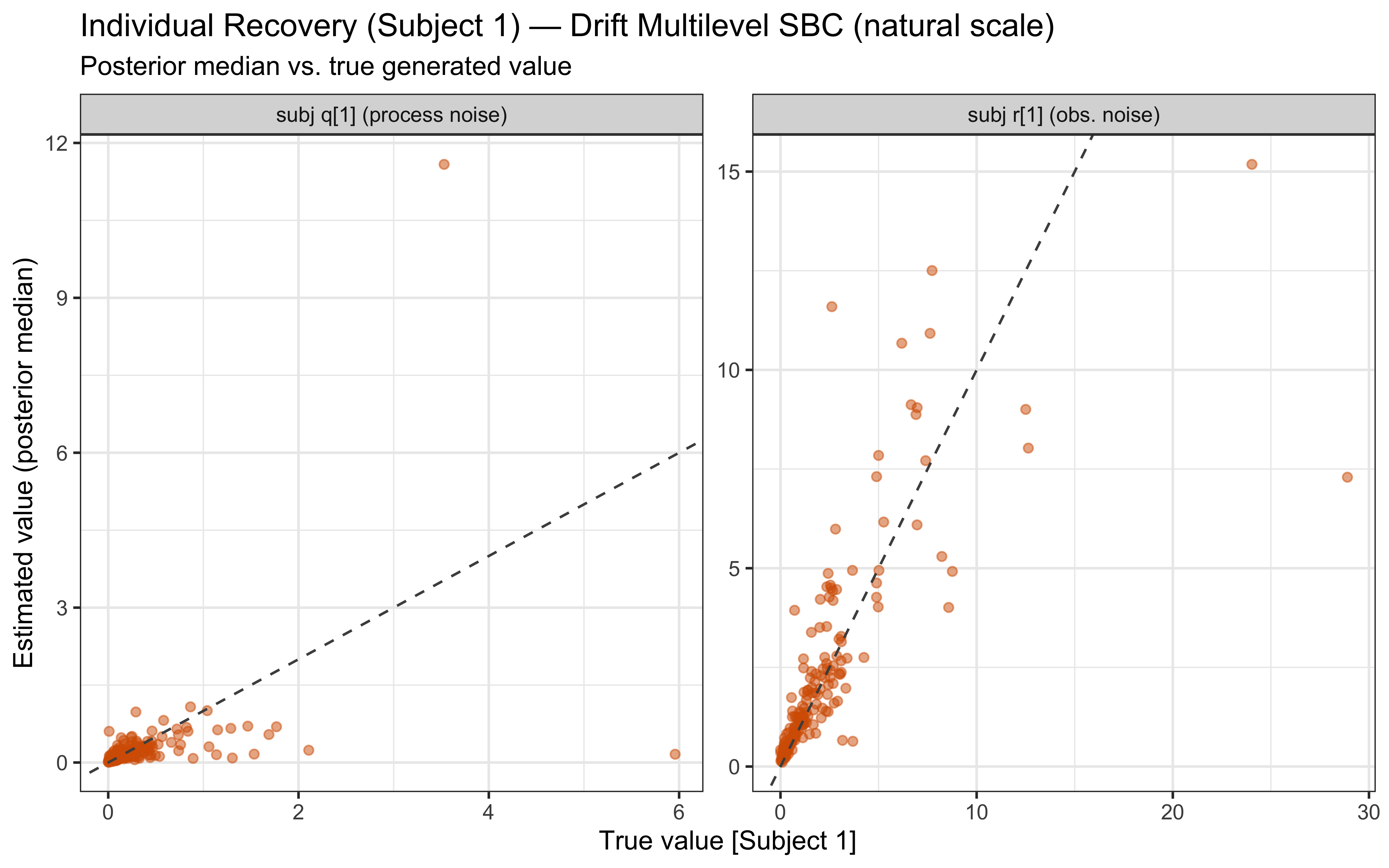

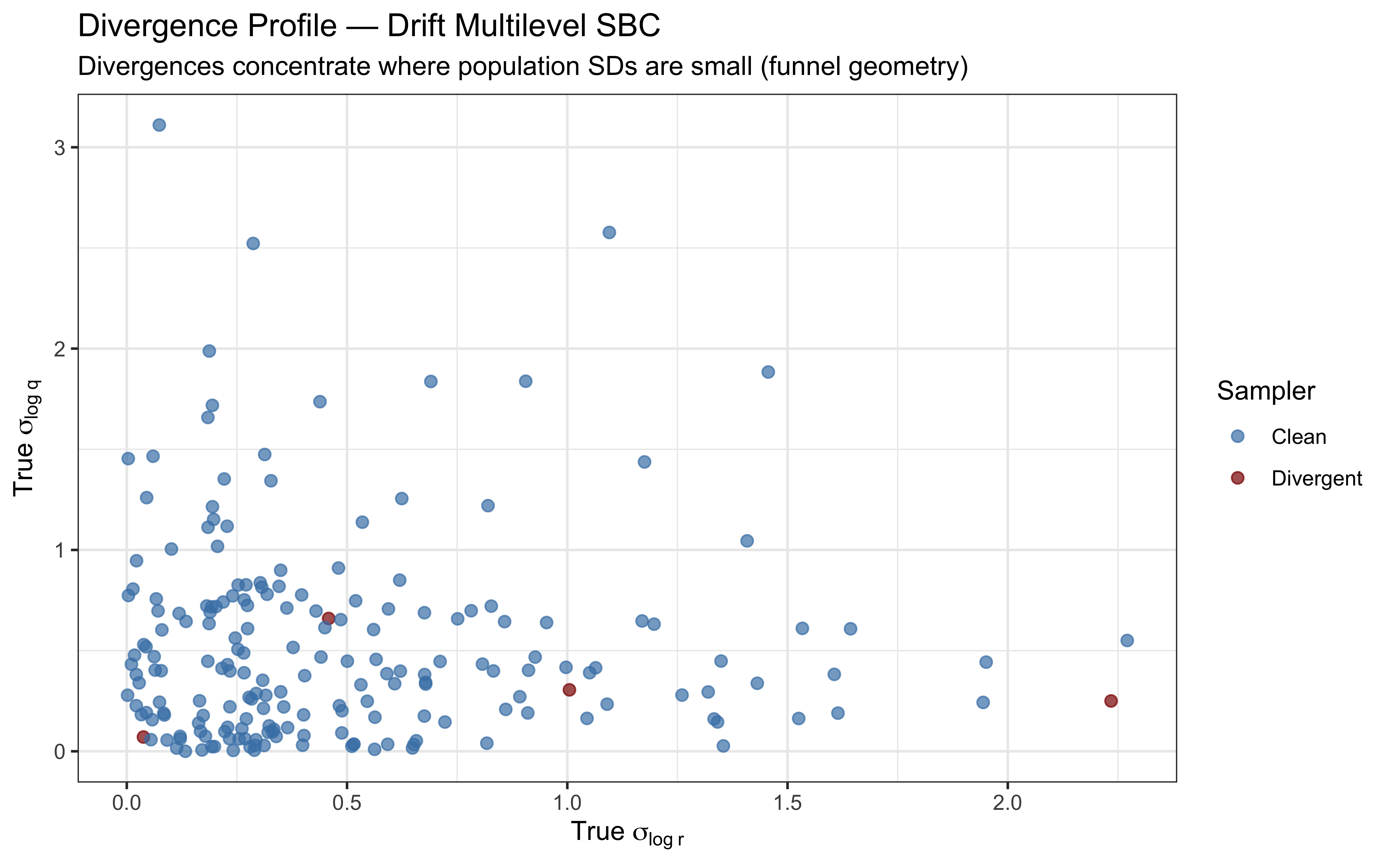

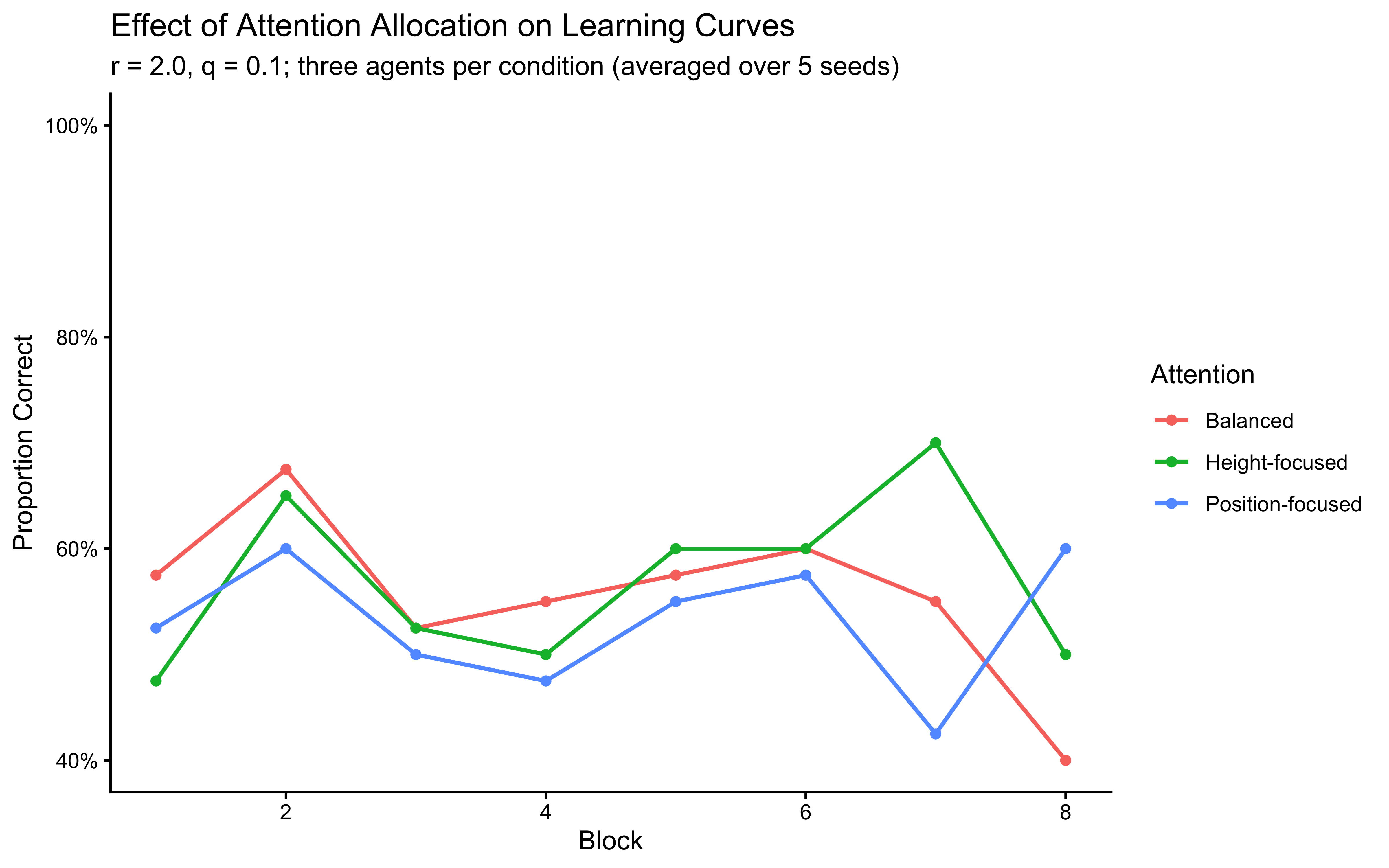

ll_mat <- gq$draws("log_lik", format = "matrix")