---

title: "Rule-Based Categorization Models (v2 — BayesFlow workflow)"

format:

html:

code-fold: true

code-tools: true

---

# Categorization models: Rules {#sec-rules}

::: {.callout-important title="What changed in v2"}

This is a revision of Chapter 15 in which the Neural Posterior Estimation

pipeline has been re-implemented on top of the **BayesFlow 2.x amortized

Bayesian workflow** instead of `sbi` + `reticulate`.

Concretely:

* The neural architecture is now a `TimeSeriesTransformer` summary network

(the trial sequence is ordered and path-dependent — it is structured data,

not a flat covariate vector) followed by a `FlowMatching` inference network.

* Routing is handled by an explicit `bf.Adapter()` chain, and training uses

`workflow.fit_offline` with a held-out validation set.

* In-silico diagnostics are produced by `workflow.compute_default_diagnostics`

and `workflow.plot_default_diagnostics`; hand-rolled SBC / coverage code

has been removed.

* The prior on the working-memory bound has been narrowed from

`Uniform(log 20, log 300)` to `Uniform(log 1, log 100)`, addressing the

cognitive-plausibility concern raised in the v1 placeholder box.

* Heavy compute (simulation + training) is no longer executed inline via

`reticulate`. It is delegated to a standalone Python script,

`scripts/ucloud_npe/amortized_particle_filter.py`, which is intended to be

run on uCloud (or any GPU/CPU node with JAX). The chapter loads the

resulting artifacts (`metrics.csv`, posterior samples, `history.json`) for

presentation.

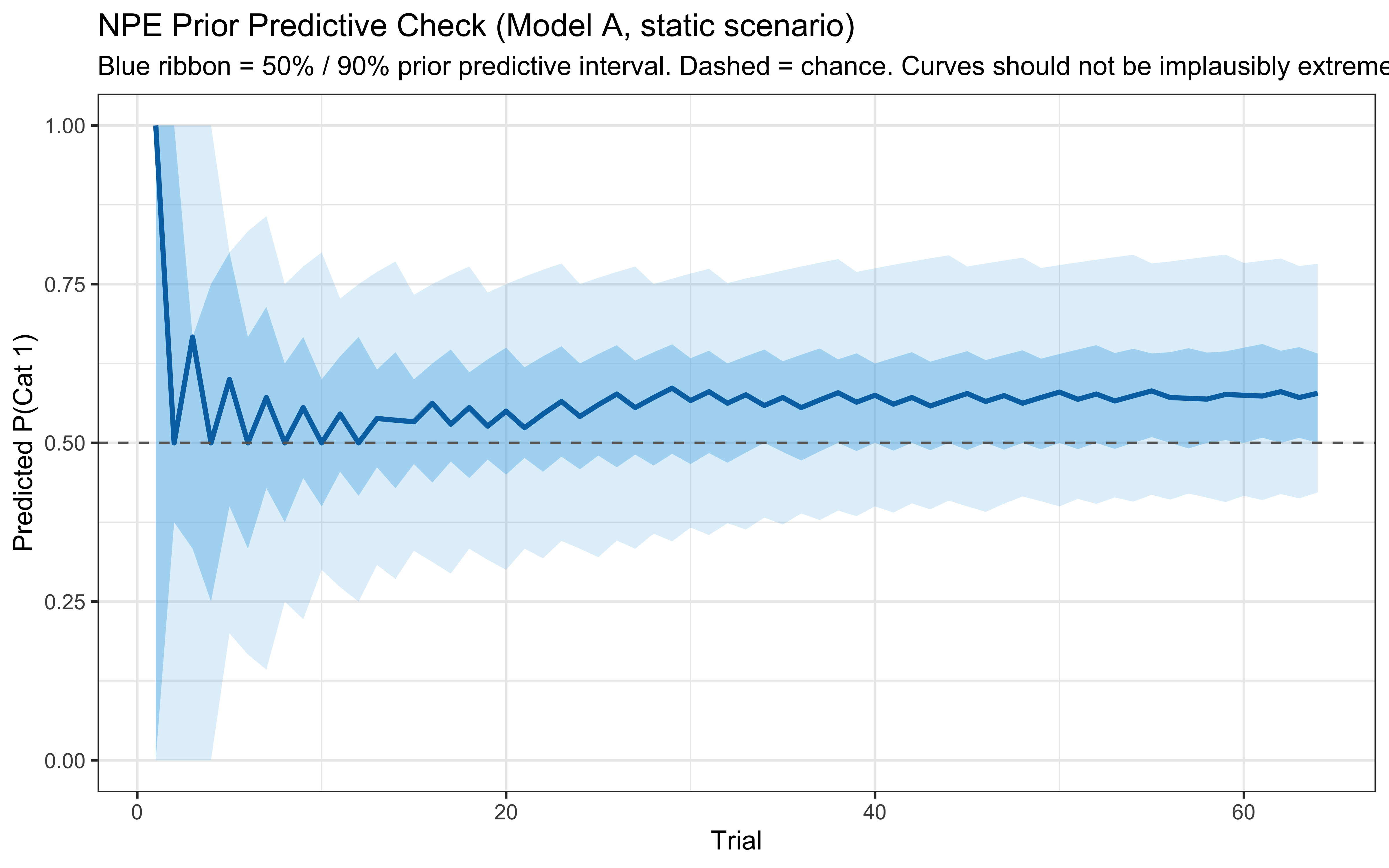

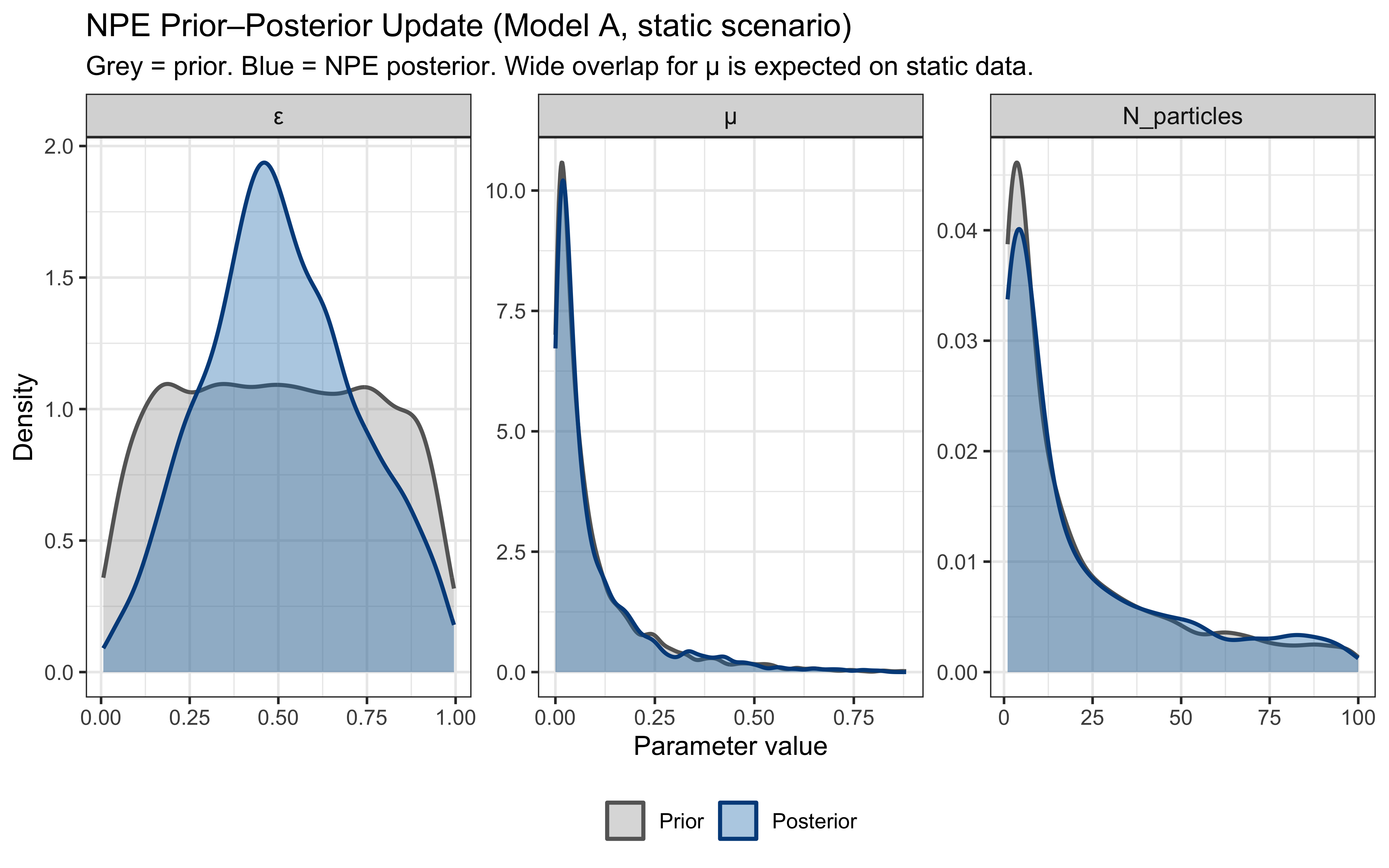

All diagnostic plots are generated as inline ggplot2 panels reading CSV files exported by the standalone Python script, consistent with the visual conventions of the rest of the book.

:::

```{r ch15_setup, include=FALSE}

knitr::opts_chunk$set(

echo = TRUE,

message = FALSE,

warning = FALSE,

fig.width = 8,

fig.height = 5,

fig.align = "center",

out.width = "80%",

dpi = 300

)

# Set to TRUE to rerun simulations and Stan fits.

# FALSE loads pre-computed results from 'simdata/'.

regenerate_simulations <- FALSE

# Set to TRUE to run the long-running validation chunks

# (rule-set sensitivity, real SBC for the Stan proxy, NPE pipeline,

# coverage validation, NPE-vs-Stan comparison).

run_intensive_checks <- FALSE

pacman::p_load(

tidyverse, # data manipulation and visualization

patchwork, # combining plots

cmdstanr, # Stan interface

posterior, # tidy posterior arrays

tidybayes, # tidy extraction of draws

bayesplot, # MCMC diagnostic plots

loo, # LOO-CV and PSIS

SBC, # Simulation-Based Calibration Checks

priorsense, # prior sensitivity

future, # parallel processing

furrr, # parallel functional programming

matrixStats, # logSumExp for PSIS calculations

reticulate, # Python interop for sbi / BayesFlow

slider, # rolling window summaries

ggrepel, # non-overlapping text labels

here

)

theme_set(theme_classic())

# ── NPE artifacts produced off-host ──────────────────────────────────────────

# v2: training and simulation run in scripts/ucloud_npe/amortized_particle_filter.py.

# The script writes per-scenario artifacts under

# simdata/ch15_v2_bayesflow/<scenario>/

# where <scenario> is one of: static, contingent_shift, drift.

# The chapter's "main" NPE chunks read from the static run; the scenario

# subsections read from contingent_shift / drift.

python_available <- FALSE

npe_artifacts_root <- here("simdata", "ch15_v2_bayesflow")

NPE_SCENARIOS <- c("static", "contingent_shift", "drift")

npe_scenario_dir <- function(s) file.path(npe_artifacts_root, s)

npe_scenario_ready <- function(s) {

d <- npe_scenario_dir(s)

dir.exists(d) && file.exists(file.path(d, "metrics.csv"))

}

# Default scenario for the main NPE block (Step 1–4 in the chapter):

npe_artifacts_dir <- npe_scenario_dir("static")

npe_artifacts_available <- npe_scenario_ready("static")

# ── Prior hyperparameter for logit(error_prob) ────────────────────────────────

# Normal(0, 1.5) is approximately uniform on the probability scale.

# Prior median ε ≈ 0.50; 95% mass on (0.05, 0.95).

# This is deliberately wider than the Normal(-2, 1) used in earlier drafts,

# which concentrated mass below ε = 0.27 and cut off the posterior for

# near-chance subjects. priorsense::powerscale_sensitivity() should be

# checked; if ψ > 0.1 for any quantity, the prior matters and this choice

# must be defended.

LOGIT_ERROR_PRIOR_MEAN <- 0.0

LOGIT_ERROR_PRIOR_SD <- 1.5

# ── Prior hyperparameter for logit(mutation_prob) ─────────────────────────────

# logit(μ) ~ Normal(-3, 1.5): prior median μ ≈ 0.047, 95% mass on (0.003, 0.37).

# This reflects the cognitive prior that most learners mutate rarely on any

# given trial — μ = 0 (static filter) is the default baseline. The SD is wide

# enough to accommodate highly adaptive learners (μ ≈ 0.2–0.3) while keeping

# most prior mass below 0.15. On static data we expect the posterior to be

# largely prior-like; on non-stationary data it should sharpen.

LOGIT_MU_PRIOR_MEAN <- -3.0

LOGIT_MU_PRIOR_SD <- 1.5

# ── Development-mode switch ───────────────────────────────────────────────────

# Set DEV_MODE <- TRUE when iterating on the chapter on a laptop (M1 or similar).

# This halves particle count and training simulations, cutting wall time by ~50–60%.

# Set FALSE for the final publication run so all structural choices match the text.

DEV_MODE <- FALSE

# ── Structural commitments for the particle filter (Model A) ─────────────────

# These are NOT inferred from data — they are cognitive choices the modeller

# makes. Sensitivity to each is exercised in dedicated subsections.

# Publication value: 100. DEV_MODE value: 50 (results are nearly identical on

# the Kruschke task; the filter converges reliably at 50 particles).

N_PARTICLES_FIXED <- if (DEV_MODE) 50L else 100L

MAX_RULE_DIMS <- 2 # default for the Kruschke task ONLY. Used as a

# function-argument default throughout this chapter;

# downstream chapters MUST pass max_dims explicitly

# rather than relying on this constant. Chapter 16

# (Alien Game) defines its own default of 3 because

# Sessions 2–3 contain rules over 3+ features.

RULE_OPERATORS <- c(">", "<")

RULE_LOGIC <- c("AND", "OR")

RESAMPLE_THRESHOLD <- 0.5 # resample when ESS < N_particles * this factor

# ── v2: BayesFlow prior bound on N_particles ─────────────────────────────────

# Replaces the v1 Uniform(log 20, log 300) prior, which was an order of

# magnitude above the working-memory literature. v2 uses Uniform(log 1, log 100):

# wide enough to span the relevant cognitive range and the regime where the

# filter degenerates, but anchored at scales the working-memory chunks

# literature treats as plausible.

N_PARTICLES_MIN_BF <- 1L

N_PARTICLES_MAX_BF <- 100L

for (d in c("stan", "simdata", "simmodels")) {

if (!dir.exists(d)) dir.create(d)

}

# ── Shared diagnostic helper (canonical version lives in Ch 11) ──────────────

# Re-defined here for standalone use. Same signature as Ch 11 / Ch 12.

diagnostic_summary_table <- function(fit, params = NULL) {

diag <- fit$diagnostic_summary(quiet = TRUE)

draws_summary <- fit$summary(variables = params)

n_div <- sum(diag$num_divergent)

max_rhat <- round(max(draws_summary$rhat, na.rm = TRUE), 2)

min_bulk <- round(min(draws_summary$ess_bulk, na.rm = TRUE), 0)

min_tail <- round(min(draws_summary$ess_tail, na.rm = TRUE), 0)

min_ebfmi <- round(min(diag$ebfmi), 3)

mcse_col <- intersect(names(draws_summary), c("mcse_mean", "mcse", "mcse_cp"))[1]

if (!is.na(mcse_col)) {

max_mcse <- round(max(draws_summary[[mcse_col]] / draws_summary$sd, na.rm = TRUE), 4)

} else {

max_mcse <- round(max(1 / sqrt(draws_summary$ess_bulk), na.rm = TRUE), 4)

}

out <- tibble::tibble(

metric = c(

"Divergences (zero tolerance)",

"Max rank-normalised R-hat",

"Min bulk ESS",

"Min tail ESS",

"Min E-BFMI",

"Max MCSE / posterior SD"

),

value = c(n_div, max_rhat, min_bulk, min_tail, min_ebfmi, max_mcse),

threshold = c("== 0", "< 1.01", "> 400", "> 400", "> 0.2", "< 0.05"),

pass = c(

n_div == 0,

max_rhat < 1.01,

min_bulk > 400,

min_tail > 400,

min_ebfmi > 0.2,

max_mcse < 0.05

)

)

if (!all(out$pass)) {

warning(

"MCMC diagnostic battery: at least one threshold breached. ",

"Inspect divergences and reparameterize before interpreting the posterior.",

call. = FALSE

)

}

out

}

# ── Kruschke (1993) stimulus set ─────────────────────────────────────────────

# Identical to Ch. 13 and Ch. 14; redefined here for standalone use.

stimulus_info <- tibble(

stimulus = c(5, 3, 7, 1, 8, 2, 6, 4),

height = c(1, 1, 2, 2, 3, 3, 4, 4),

position = c(2, 3, 1, 4, 1, 4, 2, 3),

category_true = c(0, 0, 1, 0, 1, 0, 1, 1)

)

n_blocks <- 8

n_stim_per_block <- nrow(stimulus_info)

total_trials <- n_blocks * n_stim_per_block

# Feature ranges for threshold sampling (min and max of each feature)

feature_range <- list(

height = range(stimulus_info$height),

position = range(stimulus_info$position)

)

# ── Per-subject schedule helper ───────────────────────────────────────────────

make_subject_schedule <- function(stimulus_info, n_blocks, seed) {

set.seed(seed)

n_stim <- nrow(stimulus_info)

sequence <- unlist(lapply(seq_len(n_blocks), function(b) {

sample(stimulus_info$stimulus, n_stim, replace = FALSE)

}))

tibble(

trial_within_subject = seq_along(sequence),

block = rep(seq_len(n_blocks), each = n_stim),

stimulus_id = sequence

) |>

left_join(stimulus_info, by = c("stimulus_id" = "stimulus")) |>

rename(category_feedback = category_true) |>

dplyr::select(trial_within_subject, block, stimulus_id,

height, position, category_feedback)

}

plan(multisession, workers = max(1, availableCores() - 1))

```

> **📍 Where we are in the Bayesian modeling workflow:**

> Chapters 13 and 14 implemented similarity-based accounts of categorization:

> the GCM stores individual exemplars and decides by summed similarity, while

> the Kalman filter prototype model tracks running category averages. Both

> update continuously and live as well-formed Stan likelihoods. This chapter

> takes a third route: **rule-based categorization**, where learners maintain

> and update discrete hypotheses about logical criteria for category

> membership. We implement this using a Bayesian particle filter — a sequential

> Monte Carlo method that maintains a weighted distribution over candidate

> rules.

>

> **Two distinct models will appear in this chapter, and the distinction is

> load-bearing**:

>

> * **Model A (the cognitive theory)** is the particle filter itself. Its

> parameters are $\varepsilon$ (error rate), $N_{\text{particles}}$ (a

> working-memory bound), `max_dims`, the rule grammar, and the resampling

> threshold. Its likelihood has no closed form because the rule space is

> discrete and combinatorial, the resampling step is non-differentiable, and

> the particle trajectories cannot be marginalised analytically. **Stan

> cannot fit Model A.** It can only be fitted via simulator-based inference,

> for which we use Neural Posterior Estimation (NPE) via the BayesFlow 2.x

> framework (Radev et al., 2023).

> * **Model B (a tractable Stan proxy)** is a static, conditionally

> exchangeable mixture over a small fixed set of hand-picked candidate

> rules. Its likelihood is closed-form Bernoulli marginalised with

> `log_sum_exp`. It admits the full HMC validation pipeline. **Validation of

> Model B is not validation of Model A.** A reader who skips this distinction

> will end up using LOO scores from Model B as if they reported on the

> particle filter's predictive accuracy. They do not.

>

> The pedagogical move in this chapter is to fit *both* models, using HMC for

> Model B and Neural Posterior Estimation (NPE) for Model A, and to read the

> gap between their posteriors as the chapter's main empirical finding: the

> magnitude of the structural bias you accept when you replace an intractable

> cognitive theory with a tractable proxy.

## Theoretical Foundations

The previous chapters built two families of similarity-based models: exemplar models that compute similarity to stored instances, and prototype models that compute similarity to a running mean. Both families answer the question "how much does this stimulus resemble what I already know about each category?" Rule-based models ask a different question: "does this stimulus satisfy the defining conditions for category membership?" Think of "a square has four equal sides and four right angles," or the diagnostic shortcut "if it has feathers, it is a bird."

### Core Assumptions of Rule-Based Models

1. **Explicit decision criteria**: Categories are represented as logical conditions on feature values (e.g., "height > 2.5 AND position < 3.0"), not as collections of stored traces or running statistics.

2. **Hypothesis search and weighting**: Learning involves maintaining, testing, and revising candidate rules trial by trial, rather than accumulating evidence incrementally in a fixed representation.

3. **Structurally encoded attention**: A rule makes irrelevant dimensions invisible by construction — a threshold on height simply does not reference position. This differs from the *parametric* attention of GCM ($w_d$ weights) and the prototype model ($w_k$ weights), where all dimensions enter the computation but can be up- or down-weighted.

4. **Sharp, axis-aligned boundaries**: A conjunctive threshold rule partitions the feature space into a rectangle; generalisation drops to zero as soon as the boundary is crossed, unlike the graded gradients of distance-based models.

5. **Verbalizability**: Rules are often directly articulable, which connects this framework to verbal protocol data and dual-process theories of categorization.

### Rule-Based Categorization in the Six-Step Pipeline

Chapters 13 and 14 used a six-stage framework to locate the GCM and the Kalman-filter prototype in the space of possible categorization models. The rule-based particle filter occupies a distinct position at stages 2, 3, and 6:

| Stage | GCM (Ch. 13) | Kalman prototype (Ch. 14) | Rule particle filter (Ch. 15) |

|---|---|---|---|

| **1. Input representation** | Raw feature vector | Raw feature vector | Raw feature vector |

| **2. Attention** | Learned continuous weights $w_d$; any $w_d = 0$ drops a dimension | Optional learned weights $w_k$ (§Selective Attention); base model weights equally | Structural — irrelevant dimensions absent from the rule by definition |

| **3. Intermediate representation** | Per-category similarity sums | Per-category Gaussian running mean + covariance | Weighted distribution over discrete rule hypotheses $\{r_1, \ldots, r_N\}$ |

| **4. Evidential mechanism** | Luce-ratio over similarities | Ratio of Gaussian likelihoods | Weighted vote over rule predictions, softened by $\varepsilon$ |

| **5. Decision rule** | Luce choice (probabilistic similarity matching) | Luce choice (probabilistic density matching) | Luce choice (probability $\varepsilon$ of error, $1-\varepsilon$ of following the rule) |

| **6. Learning** | Exemplar accumulation (decay at rate $\lambda$) | Kalman update (process noise $q$) | Sequential Monte Carlo: weight update → resample → mutate at rate $\mu$ |

The sharpest contrasts are at stages 2, 3, and 6. At stage 2, the GCM and prototype models achieve selective attention *parametrically* — by learning weights that can suppress any dimension to zero — whereas the rule model achieves it *structurally*, because the rule itself never mentions the irrelevant dimensions. At stage 3, the model maintains a hypothesis distribution over discrete logical objects rather than a similarity sum or a running mean. At stage 6, the stochastic resample-and-mutate step breaks differentiability, which is why the likelihood is intractable and NPE is required to fit Model A.

::: {.callout-note title="Why can't we have a single clean Stan model here?"}

In Chapters 13 and 14, each stage of the pipeline mapped onto a differentiable Stan operation: gradient-based sampling could reach every parameter. In Chapter 15, stage 3 (the discrete hypothesis distribution) and stage 6 (the stochastic resample step) break differentiability. The particle filter is a valid Bayesian cognitive model, but it is not a Stan model. This is why the chapter introduces two models: Model A is the cognitive theory, fitted via NPE; Model B is a tractable differentiable proxy, fitted via HMC.

:::

::: {.callout-note}

### Historical Context: From Hypothesis Testing to Bayesian Rule Inference

Rule-based accounts of categorization have the longest intellectual pedigree of the three architectures in this trilogy — and the most turbulent history. They were the dominant framework first, were largely displaced by similarity-based models, and then returned in a transformed Bayesian form that revealed why they had been hard to formalise all along.

#### The Hypothesis-Testing Paradigm

The modern study of concept learning began with @bruner1956study, *A Study of Thinking*. Bruner and colleagues gave participants geometrically varying cards and asked them to identify which feature values defined a target concept. Subjects did not respond randomly: they tested explicit hypotheses, updating their current conjunctive rule in response to feedback. The book introduced a taxonomy of strategies — *conservative focusing*, *focus gambling*, *successive scanning* — that remained the vocabulary of the field for two decades.

@levine1975cognitive extended this programme with a rigorous protocol for inferring which hypothesis a subject was currently testing on each trial, using sequences of blank trials (no feedback) interleaved with informative ones. His work demonstrated that human learners are genuinely maintaining discrete hypothesis states, not merely adjusting continuous weights. The models of this era assumed noiseless rule application: once the correct rule was identified, responses were deterministic. Stochastic behaviour was treated as error rather than as signal.

#### The Crisis: Similarity Defeats Rules on Ill-Defined Categories

The hypothesis-testing models were built for *well-defined* categories — structures where a deterministic rule perfectly separates the classes. The prototype and exemplar literature of the 1970s was constructed with ill-defined categories deliberately, and on those materials, rule models failed badly. A strict conjunctive rule ("height > X AND position > Y") produces sharp, axis-aligned decision boundaries; human data from continuous feature spaces showed graded, curved generalisation gradients inconsistent with any finite rule set. For two decades after Rosch, rule-based theories were widely regarded as inapplicable to natural categories.

The rehabilitation came from two directions. First, @nosofsky1994rule showed that a model combining an exemplar process with an error-correction rule selector — **RULEX** — could account for a wide range of phenomena that neither pure exemplar nor pure rule models handled well, including the speed of one-shot rule learning and the presence of exception items that exemplar-only models struggled to weight correctly. Second, @ashby1998neurobiological proposed **COVIS** (COmpetition between Verbal and Implicit Systems), a dual-process model in which an explicit rule system (implemented in prefrontal cortex) competes with an implicit associative system (implemented in the basal ganglia) for control of behaviour. COVIS made contact with neuroimaging and patient data in a way that purely algorithmic models could not, and substantially renewed empirical interest in the rule hypothesis.

#### The Bayesian Turn: Concepts as Probabilistic Programs

The decisive reframing came from @tenenbaum1999bayesian and the subsequent Bayesian models of cognition programme [@tenenbaum2011grow]. Tenenbaum showed that a learner who places a prior over a hypothesis space of rules and updates by Bayes' theorem naturally reproduces the distinctive features of human concept learning: the *size principle* (smaller consistent hypotheses are preferred), rapid generalisation from one or a few positive examples, and coherent overhypothesis formation across domains.

@goodman2008rational formalised this as **probabilistic language of thought**: concepts are programs in a stochastic generative grammar, and cognition is inference over programs. The framework unifies rule-based and similarity-based accounts — a prototype or exemplar model is a special case of a distributional program; a conjunctive rule is a logical program — and makes the hypothesis space itself an object of inference. The price is computational: exact Bayesian inference over an open-ended program space is intractable, which is why simulation-based methods are required.

#### The Particle Filter and Computational Intractability

Particle filters [@gordon1993novel] provide an approximate Bayesian inference algorithm for state-space models where the posterior over discrete latent states (here: the current rule hypothesis) cannot be computed analytically. At each step a population of hypotheses ("particles") is propagated forward, weighted by likelihood, and resampled proportionally to weight. The *rejuvenation* step — mutating low-weight particles by sampling from the prior — prevents degeneracy and introduces a mutation rate parameter $\mu$ that controls how readily the learner abandons the current hypothesis.

The appeal for cognitive modelling is direct: the particle filter implements hypothesis testing in exactly the sequential, trial-by-trial manner that @levine1975cognitive documented empirically, while tolerating noise and ill-defined categories. The mutation parameter $\mu$ plays an analogous role to the process noise $q$ of the Kalman prototype model — it controls how non-stationary the learner's hypothesis space is — but the mechanism is categorically different. $Q$ in the Kalman filter enters a *continuous* Gaussian prediction step; $\mu$ in the particle filter drives *discrete* resampling and mutation. This difference means that identifiability patterns, scenario-match requirements, and the relationship between $\mu$ and environmental stationarity all differ from what Chapter 14 established for $q$.

The intractability of exact inference is the reason this chapter departs from the Stan-only pipeline of Chapters 13 and 14. There is no closed-form likelihood for the particle filter's resampling trajectory, and no sum-and-marginalise trick that reduces it to one. The chapter therefore works with two separate models: a cognitive theory fitted via Neural Posterior Estimation (NPE), and a tractable Stan proxy. Understanding why those two models agree in some regimes and diverge in others is the chapter's central empirical finding.

:::

### Representing and Evaluating Rules

How can we represent a rule computationally? For our 2D stimuli (height, position), a simple rule might involve one or both dimensions and a threshold.

Example Rule: "If Height > 3.5, then predict Category 1, otherwise Category 0."

We can represent this rule in R as a list:

```{r ch15_rule_representation}

# Example: a simple 1D rule

rule_example_1d <- list(

dimensions = 1,

thresholds = 3.5,

operations = ">",

prediction_if_true = 1

)

# Example: a 2D rule (Height > 2 AND Position < 3 => Cat 0)

rule_example_2d <- list(

dimensions = c(1, 2),

thresholds = c(2.0, 3.0),

operations = c(">", "<"),

logic = "AND",

prediction_if_true = 0

)

# Example: a nested rule — (Height > 2.5) AND ((Height > 3.5) OR (Position < 2.0))

# Cognitive reading: "tall-enough AND (very tall OR low on screen)"

# This is a RULEX-style conjunctive rule with a disjunctive exception clause.

# The 'nested_logic' field marks this as a three-condition rule; the first

# condition is in clause A and clauses B and C are combined by the inner connective.

# The outer connective links clause A with (B OR C).

rule_example_nested <- list(

dimensions = c(1, 1, 2), # features for each of the 3 conditions

thresholds = c(2.5, 3.5, 2.0),

operations = c(">", ">", "<"),

outer_logic = "AND", # connects clause A with (B inner_logic C)

inner_logic = "OR", # connects clauses B and C

prediction_if_true = 1

)

```

Now, a function to evaluate a given rule against a stimulus:

```{r ch15_evaluate_rule}

# Evaluate a rule for a given stimulus.

# Returns the category prediction (0 or 1).

evaluate_rule <- function(rule, stimulus) {

eval_cond <- function(dim_idx, thresh, op) {

switch(op,

">" = stimulus[dim_idx] > thresh,

"<" = stimulus[dim_idx] < thresh,

stop("Invalid operation in rule"))

}

if (length(rule$dimensions) == 1) {

condition_met <- eval_cond(rule$dimensions[1], rule$thresholds[1], rule$operations[1])

} else if (length(rule$dimensions) == 2) {

c1 <- eval_cond(rule$dimensions[1], rule$thresholds[1], rule$operations[1])

c2 <- eval_cond(rule$dimensions[2], rule$thresholds[2], rule$operations[2])

condition_met <- if (rule$logic == "AND") c1 & c2

else if (rule$logic == "OR") c1 | c2

else stop("Invalid logic in 2D rule")

} else if (length(rule$dimensions) == 3 && !is.null(rule$outer_logic)) {

# Nested rule: A outer_logic (B inner_logic C)

# rule$outer_logic connects condition 1 with the (2 inner_logic 3) clause

cA <- eval_cond(rule$dimensions[1], rule$thresholds[1], rule$operations[1])

cB <- eval_cond(rule$dimensions[2], rule$thresholds[2], rule$operations[2])

cC <- eval_cond(rule$dimensions[3], rule$thresholds[3], rule$operations[3])

inner <- if (rule$inner_logic == "OR") cB | cC

else if (rule$inner_logic == "AND") cB & cC

else stop("Invalid inner_logic in nested rule")

condition_met <- if (rule$outer_logic == "AND") cA & inner

else if (rule$outer_logic == "OR") cA | inner

else stop("Invalid outer_logic in nested rule")

} else {

stop("Rule dimension count not supported (only 1, 2, or 3-nested)")

}

ifelse(condition_met, rule$prediction_if_true, 1L - rule$prediction_if_true)

}

# --- Test ---

test_stimulus <- c(2.5, 3.8)

cat("Rule 1 Prediction:", evaluate_rule(rule_example_1d, test_stimulus),

"(Expected: 0 — Height 2.5 is not > 3.5)\n")

cat("Rule 2 Prediction:", evaluate_rule(rule_example_2d, test_stimulus),

"(Expected: 1 — Height > 2 TRUE, Position < 3 FALSE; AND => FALSE => predicts 1)\n")

# Nested rule test: Height > 2.5 TRUE; inner: Height > 3.5 FALSE OR Position < 2.0 FALSE => FALSE

# outer AND: TRUE AND FALSE => FALSE => predicts 0 (complementary of prediction_if_true = 1)

cat("Nested Rule Prediction:", evaluate_rule(rule_example_nested, test_stimulus),

"(Expected: 0 — outer A=TRUE but inner B=FALSE OR C=FALSE => FALSE)\n")

```

---

## Rule Grammar: Formal Specification

Let's build a formal grammar for rule specification.

A rule $r$ in our grammar is a tuple

$$r = (D, T, O, L, c) \in \mathcal{R}$$

where:

* $D \in \mathcal{P}(\{1, 2\}) \setminus \emptyset$ with $|D| \leq \text{max\_dims}$ is the set of feature dimensions used by the rule. With $\text{max\_dims} = 2$, $|D| \in \{1, 2\}$.

* $T \in \mathbb{R}^{|D|}$ is the threshold vector, with the $k$-th element drawn uniformly from the observed range of feature $D_k$ (in our task: height $\in [1, 4]$ and position $\in [1, 4]$).

* $O \in \{>, <\}^{|D|}$ is the operator vector.

* $L \in \{\text{AND}, \text{OR}\}$ is the logical connective; only used when $|D| = 2$.

* $c \in \{0, 1\}$ is the prediction emitted when all conditions are satisfied. The complementary prediction is emitted otherwise.

**Extended grammar: nested three-condition rules.** We also support a second rule form that captures RULEX-style exception clauses (Nosofsky et al. 1994):

$$r_{\text{nested}} = (d_A, t_A, o_A, d_B, t_B, o_B, d_C, t_C, o_C, L_{\text{outer}}, L_{\text{inner}}, c)$$

evaluated as $\text{condition}_A\ L_{\text{outer}}\ (\text{condition}_B\ L_{\text{inner}}\ \text{condition}_C)$. The inner clause $(B\ L_{\text{inner}}\ C)$ acts as a disjunctive exception when $L_{\text{outer}} = \text{AND}$ and $L_{\text{inner}} = \text{OR}$: "the rule applies if A holds, but only unless the exception (B OR C) saves a stimulus from the incorrect prediction." Cognitive reading of `rule_example_nested`: "predict Cat 1 if Height > 2.5 AND (Height > 3.5 OR Position < 2.0)" — a tall-enough stimulus qualifies, *and* especially if it is very tall or at a low screen position. With only two features in the Kruschke task, the feature index $d_A$ may coincide with $d_B$ or $d_C$ without loss of generality; the thresholds differ, so the conditions are not redundant.

The number of distinct rules in this grammar is finite (although large). For $\text{max\_dims} = 2$ flat rules on a 2-feature task: $|\mathcal{R}| = 2 \cdot |T_{\text{grid}}| \cdot 2 \cdot 2 + \binom{2}{2} \cdot |T_{\text{grid}}|^2 \cdot 4 \cdot 2 \cdot 2$, where $|T_{\text{grid}}|$ is the threshold discretisation. Nested three-condition rules add $2^3 \cdot |T_{\text{grid}}|^3 \cdot 4 \cdot 2$ additional equivalence classes. With a continuous threshold space the grammar has uncountably many rules in both forms.

**Commitments encoded in this grammar:**

* No negation operator. A rule cannot say "NOT (Height > 2)." Negation can be partially recovered by flipping $c$ and choosing the complementary inequality, but compound negations cannot be expressed.

* No equality operator. Strict inequalities only.

* Maximum two conditions per flat rule, or three conditions in a nested rule. A reader who believes humans use deeper rule trees will need to extend the grammar further.

* Continuous thresholds. This defeats analytical marginalisation in Stan (see §"Why Model A's Likelihood is Intractable for HMC").

* Nested rules use a fixed two-level tree: one outer condition and one inner clause of two conditions. Deeper trees (e.g., A AND (B AND (C OR D))) are not implemented.

* Counting rules are inexpressible. A rule like "at least 2 of $\{f_1, f_2, f_3\}$ are present" cannot be represented as any single conjunct or disjunct in this grammar. The particle filter approximates such rules via an *ensemble* of 2D conjunction particles that collectively cover the relevant stimulus set, but no individual particle equals the ground-truth rule. This becomes relevant in the Alien Game (Chapter 16, Session 2 Nutritious).

We will test sensitivity to `max_dims` in the dedicated subsection, and the operator/logic-set commitments are flagged in §"Limitations."

### What Changes When Features Are Binary and 5-Dimensional

Chapter 16 applies the same particle filter to the Alien Game (5 binary features per stimulus) instead of the 2 continuous Kruschke features. The cognitive content of the model — particles, weights, ESS resampling, mutation, weighted vote — is unchanged. Four concrete substitutions are needed:

1. **`n_features`: $2 \to 5$.** The simulator reads `obs` as a $T \times n_{\text{features}}$ matrix; nothing in the trial loop assumes a particular width as long as `feature_range` is built from `seq_len(n_features)` rather than hard-coded as `[, 1]` and `[, 2]`.

2. **`feature_range`: per-feature continuous extent $\to$ `c(0, 1)` per feature.** The threshold sampler `runif(1, lo, hi)` still works, but operationally the only informative threshold on a unit interval is the midpoint.

3. **Threshold representation: continuous $\to$ fixed at 0.5.** Because features are binary, every condition reduces to "feature $d$ equals 0" (operator `<` 0.5) or "feature $d$ equals 1" (operator `>` 0.5). Chapter 16 hard-codes `thresholds[k] = 0.5` for clarity rather than sampling continuously over a degenerate interval.

4. **`max_dims`: $2 \to 3$.** Several alien rules require three feature checks (e.g., "Spotty AND (Tall OR Tail)"). The nested form $A\ L_{\text{outer}}\ (B\ L_{\text{inner}}\ C)$ introduced here is **retained** in Chapter 16, because the alien-game grammar `A AND (B OR C)` is exactly this nested shape. The 2-feature Kruschke task can express interesting nested rules over the same two dimensions (different thresholds); the 5-feature alien task expresses interesting nested rules over three different dimensions. The grammar object is the same in both cases — only `n_features`, `feature_range`, threshold sampling, and `max_dims` change.

The four NPE validations introduced later in this chapter (prior predictive, marginal SBC, expected coverage, posterior predictive) all transfer directly: the only changes are in the data tensor shape — $T \times (1 + n_{\text{features}} + 1)$ rather than $T \times 4$ — and in the simulator's `feature_range` argument. The trained network architecture (embedding MLP + neural spline flow) does not need redesign; it is a function from a flat input vector of any fixed length to a posterior over $\theta$.

> **Forward note for Chapter 16.** Chapter 16 inherits this grammar with the four substitutions above, retains the nested `A L_outer (B L_inner C)` form so the alien grammar's `A AND (B OR C)` is directly representable, and raises `max_dims` to 3. Chapter 16 Phase 0 Q5 names this relaxation explicitly and gives its cognitive interpretation.

---

## The Challenge: Searching the Space of Rules

Evaluating a given rule is easy. The hard part of rule-based learning is finding the right rule. The number of possible rules (combinations of dimensions, thresholds, operations, logic) is vast. How can a learner efficiently search this space?

Unlike exemplar and prototype models, where the representation is straightforward, rule-based models need mechanisms for:

1. Generating candidate rules

2. Testing rules against observed data

3. Switching between rules when necessary

4. Maintaining uncertainty about which rule is correct

---

## The Bayesian Particle Filter: Tracking Rule Hypotheses (Model A)

The Bayesian particle filter provides an elegant solution to these challenges. Originally developed for tracking physical objects, particle filters have been adapted to model how humans track and update hypotheses. In our context, each "particle" represents a possible categorization rule, and the filter maintains and updates a distribution over these rules.

**This is Model A — the cognitive theory.** Everything in this section implements the cognitive model whose intractable likelihood will eventually require NPE to fit.

The core idea:

1. **Particles = Rules**: Each particle is a specific candidate rule.

2. **Weights = Belief**: Each particle has a weight $w_i$ representing the probability that this specific rule is the "true" rule. Initially, all weights are equal ($1/N$).

3. **Update Weights**: When a new stimulus and its correct category are observed, we update the weights. Rules that correctly predict the category get their weights increased; rules that predict incorrectly get their weights decreased. The amount of change depends on how likely the observation was under that rule.

4. **Resample**: Over time, many particles accumulate near-zero weight ("particle degeneracy"). To focus computational effort, we periodically resample when the Effective Sample Size drops below a threshold: draw $N$ new particles with probability proportional to their weights. High-weight particles are likely to be selected multiple times; low-weight particles are likely to be dropped. After resampling, weights are reset to $1/N$.

5. **Mutate**: Resampling *selects* from the current hypothesis set — it cannot invent rules that were never in the initial set. A mutation step gives each particle an independent probability $\mu$ of being replaced by a freshly sampled rule from the grammar. This injects hypothesis turnover, prevents the hypothesis space from collapsing to a few survivors, and allows the filter to track categories that change over time. $\mu = 0$ recovers a pure selection filter; $\mu > 0$ is the analogue of process noise $q$ in the Kalman prototype and the forgetting rate $\lambda$ in the decay GCM.

The trial-level loop therefore runs: **predict → receive feedback → update weights → resample if ESS low → mutate**. Mutation comes last because it operates on the post-resample particle set: rules that survived selection are diversified before the next trial begins, ensuring fresh hypotheses enter with uniform weight and are evaluated fairly on the next observation.

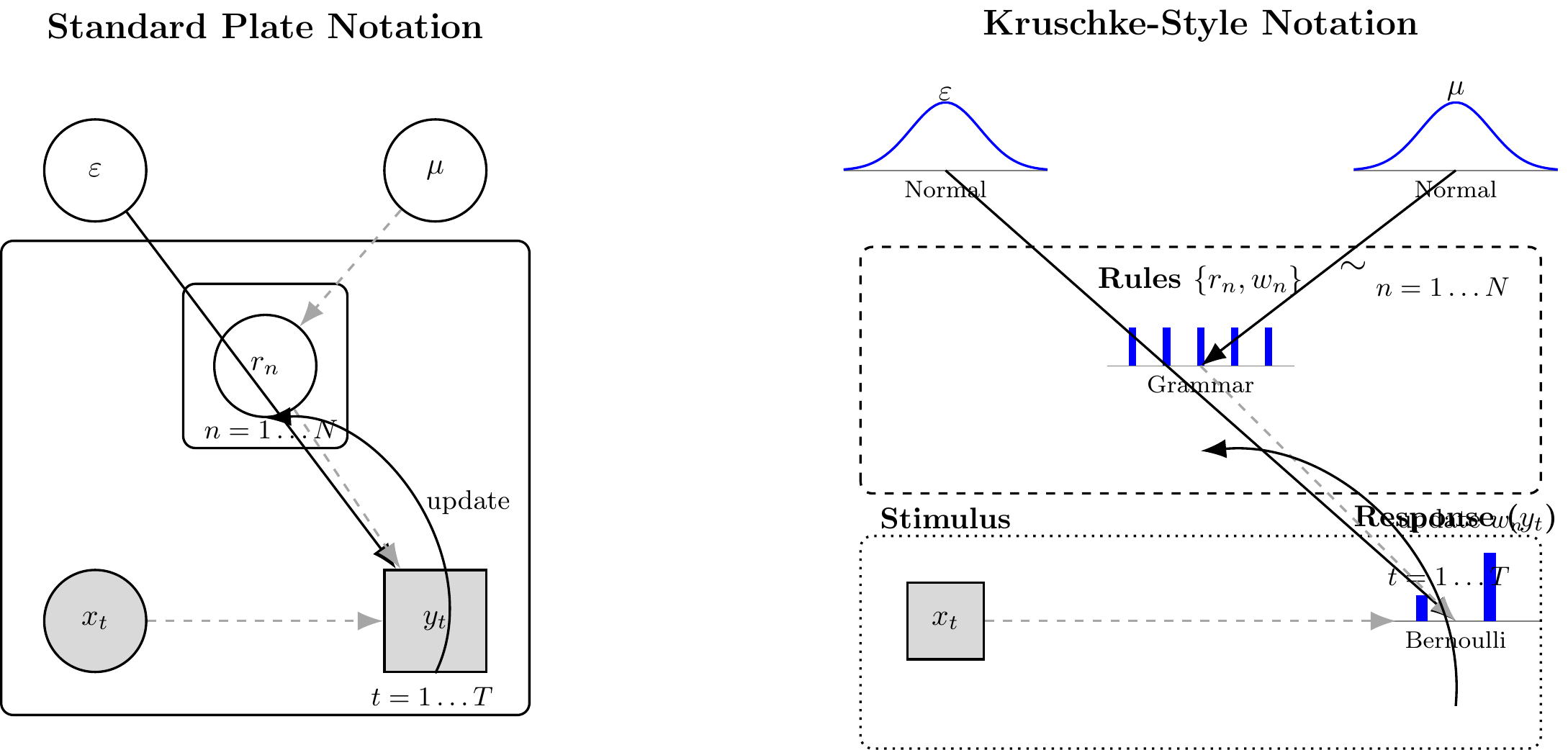

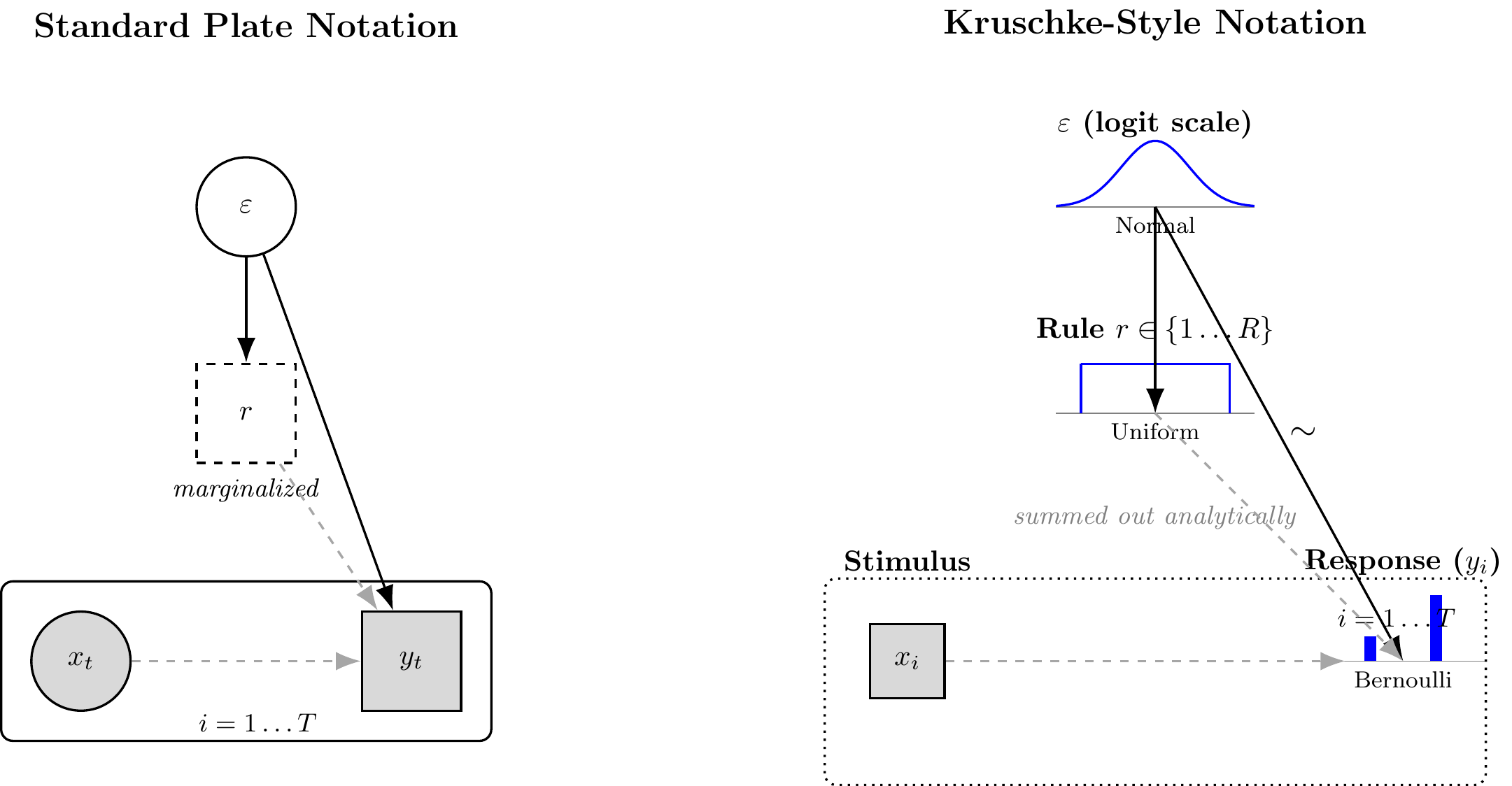

```{tikz ch15_model_a_dag, echo=FALSE, fig.cap="Generative structure of Model A (Bayesian Particle Filter). Left: standard plate notation. Right: Kruschke-style notation. Filled nodes are observed; open nodes are latent. The particle set $\\{r_n\\}$ evolves across trials via resampling and mutation, so the sequential dependency is captured by the trial plate with a self-loop on the particle weights. The two continuous parameters inferred by NPE are $\\varepsilon$ (error probability) and $\\mu$ (mutation probability); $N$ and the rule grammar are fixed structural choices, not estimated.", fig.ext='png', fig.width=12, cache=TRUE}

\usetikzlibrary{positioning, shapes.geometric, arrows.meta, fit, backgrounds, calc}

\tikzset{

stochastic/.style={->, >={Latex[length=3mm]}, thick},

deterministic/.style={->, >={Latex[length=3mm]}, thick, dashed, draw=gray!70},

plate/.style={draw=black, thick, rounded corners, inner sep=10pt},

continuous/.style={circle, draw=black, thick, minimum size=1.2cm, inner sep=0pt},

discrete/.style={rectangle, draw=black, thick, minimum size=1.2cm},

observed/.style={fill=gray!30},

latent/.style={fill=white},

pics/norm_dist/.style={code={

\draw[thin, gray] (-1.2,0) -- (1.2,0);

\draw[thick, blue] plot[domain=-1.2:1.2, samples=50] (\x, {0.8*exp(-3*\x*\x)});

\node[font=\footnotesize, below] at (0,0) {Normal};

}},

pics/bern_dist/.style={code={

\draw[thin, gray] (-1,0) -- (1,0);

\draw[blue, line width=4pt] (-0.4, 0) -- (-0.4, 0.3);

\draw[blue, line width=4pt] (0.4, 0) -- (0.4, 0.8);

\node[font=\footnotesize, below] at (0,0) {Bernoulli};

}},

pics/grammar_dist/.style={code={

\draw[thin, gray] (-1.1,0) -- (1.1,0);

\foreach \x in {-0.8,-0.4,0,0.4,0.8} {

\draw[blue, line width=2.5pt] (\x, 0) -- (\x, 0.45);

}

\node[font=\footnotesize, below] at (0,0) {Grammar};

}}

}

\begin{tikzpicture}

%% LEFT: STANDARD PLATE NOTATION

\node[font=\large\bfseries] at (0, 3.5) {Standard Plate Notation};

% Hyperparameters / priors (fixed structural choices at top)

\node[continuous, latent] (eps) at (-2, 1.8) {$\varepsilon$};

\node[continuous, latent] (mu) at ( 2, 1.8) {$\mu$};

% Particle plate

\node[continuous, latent] (r_n) at (0, -0.5) {$r_n$};

\node[plate, fit=(r_n), label={[anchor=south east, font=\small\bfseries]below right:$n=1\dots N$}] (pN) {};

% Trial plate

\node[continuous, observed] (x_t) at (-2, -3.5) {$x_t$};

\node[discrete, observed] (y_t) at ( 2, -3.5) {$y_t$};

\node[plate, inner sep=14pt, fit=(x_t)(y_t)(pN), label={[anchor=south east, font=\small\bfseries]below right:$t=1\dots T$}] (pT) {};

% Arrows

\draw[stochastic] (eps) -- (y_t);

\draw[deterministic] (mu) -- (r_n);

\draw[deterministic] (r_n) -- (y_t);

\draw[deterministic] (x_t) -- (y_t);

% Self-loop indicating sequential update of particles across trials

\draw[stochastic, bend right=60] (y_t.south) to node[right, font=\small]{update} (r_n.south);

%% RIGHT: KRUSCHKE-STYLE NOTATION

\node[font=\large\bfseries] at (11, 3.5) {Kruschke-Style Notation};

% Priors

\coordinate (k_eps) at (8, 1.8); \draw (k_eps) pic {norm_dist};

\node[above=0.7cm of k_eps, font=\bfseries] {$\varepsilon$};

\coordinate (k_mu) at (14, 1.8); \draw (k_mu) pic {norm_dist};

\node[above=0.7cm of k_mu, font=\bfseries] {$\mu$};

% Particle set (grammar prior)

\coordinate (k_r) at (11, -0.5); \draw (k_r) pic {grammar_dist};

\node[above=0.7cm of k_r, font=\bfseries, align=center] {Rules $\{r_n, w_n\}$};

% Observed stimulus (fixed/given)

\node[discrete, observed, minimum size=0.9cm] (k_x) at (8, -3.5) {$x_t$};

\node[above=0.5cm of k_x, font=\bfseries] {Stimulus};

% Response

\coordinate (k_y) at (14, -3.5); \draw (k_y) pic {bern_dist};

\node[above=0.9cm of k_y, font=\bfseries] {Response ($y_t$)};

% Plates

\draw[plate, dashed] (7.0, -2.0) rectangle (15.0, 0.9) node[below left, font=\small\bfseries] {$n=1\dots N$};

\draw[plate, dotted] (7.0, -5.0) rectangle (15.0, -2.5) node[below left, font=\small\bfseries] {$t=1\dots T$};

% Arrows

\draw[stochastic] (k_eps) -- (k_y);

\draw[stochastic] (k_mu) -- (k_r) node[midway, right, font=\large]{$\sim$};

\draw[deterministic] (k_r) -- (k_y);

\draw[deterministic] (k_x) -- (13.3, -3.5);

% Sequential update arrow

\draw[stochastic, bend right=50] (14, -4.5) to node[right, font=\small]{update $w_n$} (11, -1.5);

\end{tikzpicture}

```

### Why Model A's Likelihood is Intractable for HMC

Stan's HMC sampler requires a continuous, differentiable log posterior density. Individual rule particles are discrete, symbolic structures — lists containing integers, operators, and real-valued thresholds. The *space* of all possible rules is a combinatorial set of structured objects: there is no natural notion of a gradient with respect to "which logical operator appears in condition 1" or "which dimension is used." This is exactly the class of discrete latent variables that must be integrated out analytically before any Stan code is written (the same principle we applied to mixture components in Chapter 8).

For Model A, this analytical marginalisation is **not feasible in closed form** over a combinatorial rule space crossed with continuous thresholds and a non-differentiable resampling step. The particle filter therefore lives entirely in R, where it acts as its own approximate Bayesian inference engine via sequential Monte Carlo.

**This is the standard situation for which simulator-based inference was invented.** The Lintusaari et al. ABC review describes the pattern exactly:

> "Many recent models in biology describe nature to a high degree of accuracy but are not amenable to analytical treatment. The models can, however, be simulated on computers… While it is usually relatively easy to generate data from the models for any configuration of the parameters, the real interest is often focused on the inverse problem: the identification of parameter configurations that would plausibly lead to data that are sufficiently similar to the observed data."

Model A is exactly such a model: $\theta = (\varepsilon, N_{\text{particles}}, \text{max\_dims}, \ldots)$, $V$ is the random variables driving particle initialisation and resampling, $M$ is the `rule_particle_filter` function, and $y$ is the trial-by-trial response sequence. Section "Neural Posterior Estimation for Model A" implements NPE for this simulator. Until then, we treat the particle filter purely as a forward simulator.

### Parameters: Cognitive Commitment, Algebraic Role, NPE Parameterization

| Parameter | Cognitive meaning | Algebraic role | R / NPE parameterization |

|---|---|---|---|

| **ε** (error prob) | Probability that a learner misapplies an otherwise-correct rule; captures lapses, feature noise, and decision variability | Softens the weighted particle vote: $\hat{p}_t = \sum_n w_n [(1-\varepsilon)\,\mathbf{1}_{\{r_n=1\}} + \varepsilon\,\mathbf{1}_{\{r_n=0\}}]$ | `error_prob` in `rule_particle_filter()`; inferred on the logit scale: `logit(ε) ~ Normal(0, 1.5)` |

| **μ** (mutation rate) | Per-trial probability that a learner discards the current hypothesis and draws a fresh one from the grammar; controls how readily rules are revised | Drives hypothesis diversity after resampling: each particle independently replaced by $r_n^{\text{new}} \sim p_{\mathcal{R}}$ with probability $\mu$ | `mutation_prob` in `rule_particle_filter()`; inferred on the logit scale: `logit(μ) ~ Normal(-3, 1.5)` |

| **N** (particles) | Working-memory capacity: number of rule hypotheses the learner maintains simultaneously | Controls approximation quality of the SMC filter; not a continuous parameter — a structural commitment | Fixed at `N_PARTICLES_FIXED = 100`; treated as a sensitivity variable in §"Sensitivity to Rule-Set Size" |

**Trilogy comparison.** ε is the rule-model analogue of the GCM's sensitivity $c$ (sharpness of evidence accumulation) and the prototype model's $\sigma$ (response noise). μ is the analogue of the decay GCM's forgetting rate $\lambda$ and the Kalman prototype's process noise $q$ — the parameter that allows the model to track non-stationary categories.

### Mathematical Formulation

Model A is a state-space generative model. Let $N$ denote the number of particles, $\mathcal{R}$ the rule grammar, and $\varepsilon, \mu \in (0,1)$ the error and mutation probabilities.

**Priors:**

$$\text{logit}(\varepsilon) \sim \mathcal{N}(0,\ 1.5) \qquad \text{logit}(\mu) \sim \mathcal{N}(-3,\ 1.5)$$

**Initialisation** ($t = 0$): draw $N$ rules i.i.d. from the grammar prior, $r_n^{(0)} \sim p_{\mathcal{R}}$; set weights $w_n^{(0)} = 1/N$.

**Per-trial update** (for trial $t = 1, \ldots, T$, stimulus $\mathbf{x}_t$, label $y_t \in \{0,1\}$):

1. **Predict:** $\hat{p}_t = \sum_{n=1}^{N} w_n^{(t-1)} \cdot \left[(1-\varepsilon)\,\mathbf{1}\{r_n \text{ predicts } 1\} + \varepsilon\,\mathbf{1}\{r_n \text{ predicts } 0\}\right]$

2. **Weight update:** $\tilde{w}_n^{(t)} \propto w_n^{(t-1)} \cdot \left[(1-\varepsilon)^{\mathbf{1}\{r_n(\mathbf{x}_t)=y_t\}} \cdot \varepsilon^{\mathbf{1}\{r_n(\mathbf{x}_t)\neq y_t\}}\right]$

3. **Resample** if $\text{ESS} = 1/\sum_n (\tilde{w}_n^{(t)})^2 < N/2$: draw indices with replacement proportional to $\tilde{w}_n^{(t)}$; reset weights to $1/N$.

4. **Mutate:** each particle independently replaced by $r_n^{\text{new}} \sim p_{\mathcal{R}}$ with probability $\mu$.

5. **Observe:** $y_t \mid \hat{p}_t \sim \text{Bernoulli}(\hat{p}_t)$.

The resampling step in (3) is non-differentiable, which means the marginal likelihood $p(\mathbf{y} \mid \varepsilon, \mu)$ has no closed form and HMC cannot be applied. The steps below walk through each operation in detail and supply the R helper functions that the full agent assembles.

#### Step 1: Generating Initial Rule Particles

```{r ch15_generate_rules}

# Generate a single random rule.

# This is the implementation of the formal grammar from §"Rule Grammar".

generate_random_rule <- function(n_features,

max_dims = MAX_RULE_DIMS,

feature_range = list(c(0, 5), c(0, 5)),

nested_prob = 0.25) {

# With probability nested_prob, generate a nested 3-condition rule (if

# there are >= 2 features and the grammar allows it). Otherwise generate

# a flat 1- or 2-condition rule as before.

use_nested <- (n_features >= 2) && (runif(1) < nested_prob)

sample_thresh <- function(di) runif(1, feature_range[[di]][1], feature_range[[di]][2])

if (use_nested) {

# Three conditions; dimensions may repeat (same feature, different thresholds)

dims_used <- sample(seq_len(n_features), size = 3, replace = TRUE)

thresholds <- mapply(sample_thresh, dims_used)

operations <- sample(RULE_OPERATORS, 3, replace = TRUE)

list(

dimensions = dims_used,

thresholds = thresholds,

operations = operations,

outer_logic = sample(RULE_LOGIC, 1),

inner_logic = sample(RULE_LOGIC, 1),

prediction_if_true = sample(0:1, 1)

)

} else {

num_dims <- sample(seq_len(min(max_dims, n_features)), 1)

dims_used <- sort(sample(seq_len(n_features), size = num_dims, replace = FALSE))

thresholds <- numeric(num_dims)

operations <- character(num_dims)

for (k in seq_len(num_dims)) {

di <- dims_used[k]

thresholds[k] <- sample_thresh(di)

operations[k] <- sample(RULE_OPERATORS, 1)

}

list(

dimensions = dims_used,

thresholds = thresholds,

operations = operations,

logic = if (num_dims == 2) sample(RULE_LOGIC, 1) else NA,

prediction_if_true = sample(0:1, 1)

)

}

}

# Initialize N particles with equal weights.

initialize_particles <- function(n_particles, n_features,

max_dims = MAX_RULE_DIMS,

feature_range = list(c(0, 5), c(0, 5))) {

particles <- vector("list", n_particles)

for (i in seq_len(n_particles))

particles[[i]] <- generate_random_rule(n_features, max_dims, feature_range)

list(particles = particles, weights = rep(1 / n_particles, n_particles))

}

# --- Example ---

n_particles_example <- 5

n_features_example <- 2

initial_system <- initialize_particles(n_particles_example, n_features_example)

cat(paste("Initialized", n_particles_example, "particles. Example rule 1:\n"))

print(initial_system$particles[[1]])

cat("Initial weights:", initial_system$weights, "\n")

```

#### Step 2: Updating Particle Weights

When we observe a stimulus $\vec{x}$ and its true category $y$, we update weight $w_i$ for each particle $i$. We introduce a small $\varepsilon$ (`error_prob`) to allow for occasional noise or misapplication:

$$P(y \mid \vec{x}, \text{rule}_i) = \begin{cases} 1 - \varepsilon & \text{if rule}_i \text{ predicts } y \\ \varepsilon & \text{otherwise} \end{cases}$$

The updated weight is $w_i' \propto w_i \times P(y \mid \vec{x}, \text{rule}_i)$, then renormalized.

```{r ch15_update_weights}

update_weights <- function(particles, weights, stimulus, true_category,

error_prob = 0.05) {

n <- length(particles)

new_w <- numeric(n)

for (i in seq_len(n)) {

pred <- evaluate_rule(particles[[i]], stimulus)

likelihood <- if (pred == true_category) 1 - error_prob else error_prob

new_w[i] <- weights[i] * likelihood

}

total <- sum(new_w)

if (total > 1e-9) new_w / total else rep(1 / n, n)

}

# --- Example ---

cat("\n--- Example Weight Update ---\n")

updated_w <- update_weights(

initial_system$particles, initial_system$weights,

stimulus = c(4, 3), true_category = 1, error_prob = 0.1

)

preds <- sapply(initial_system$particles, evaluate_rule, stimulus = c(4, 3))

cat("Rule predictions for stimulus [4, 3]:", preds, "\n")

cat("Weights BEFORE:", round(initial_system$weights, 3), "\n")

cat("Weights AFTER: ", round(updated_w, 3), "\n")

```

#### Step 3: Resampling Particles

If we only update weights, eventually most weights become near zero and only a few particles dominate — **particle degeneracy**. Resampling revitalizes the particle set by drawing $N$ new particles with probability proportional to weights, then resetting all weights to $1/N$.

**Why not resample when any individual weight falls below a threshold?** A single low weight is not a reliable signal: in a healthy filter, a few particles always receive less support than the rest. A per-particle threshold triggers resampling whenever one particle becomes unlikely — which can happen after every informative trial even when the overall set is perfectly adequate. The result is constant, unnecessary resampling that destroys diversity without solving any real problem. The relevant question is not "is any single weight small?" but "how much of the population is effectively pulling its weight?"

**Why not resample on every trial?** Resampling discards particles: particles that lose a draw at one step may have been the correct hypothesis a few trials later, after an unexpected observation. Resampling every trial collapses diversity prematurely and makes the filter brittle to any short streak of misleading feedback. It also breaks the sequential importance-sampling interpretation of the filter — the weights are supposed to accumulate evidence across trials before being acted on.

**The ESS-based threshold balances both concerns.** The **Effective Sample Size** $\text{ESS} = 1 / \sum_i w_i^2$ is a single number that summarises the whole weight distribution: it equals $N$ when all weights are uniform (maximum diversity) and approaches 1 when one particle has all the weight (complete degeneracy). Using the rule "resample when $\text{ESS} < N \times \tau$" (with $\tau = 0.5$ as the default threshold) means resampling fires only when the collective distribution of weights has become concentrated — not because any particular particle is small, and not on a fixed schedule. This allows weights to accumulate evidence across trials when the current hypothesis set is still informative, and intervenes only when the approximation quality has genuinely degraded.

> **A note on ESS here vs. ESS in MCMC diagnostics.** ESS appears in two distinct contexts in this book. In Chapters 4–10 it measures how many effectively independent posterior draws an MCMC chain produces, diagnosing autocorrelation. Here it measures how many particles are effectively contributing to the filter's approximation, given their weights. Both are measures of statistical efficiency under a sampling procedure — the underlying intuition (fewer effective units = less reliable estimate) is the same — but the contexts are different. The MCMC ESS is about temporal autocorrelation; the particle ESS is about weight concentration. Both names are correct and they refer to genuinely different things.

```{r ch15_resample_particles}

resample_particles <- function(particles, weights) {

n <- length(particles)

idx <- sample.int(n, size = n, replace = TRUE, prob = weights)

new_p <- particles[idx]

new_w <- rep(1 / n, n)

list(particles = new_p, weights = new_w)

}

# --- Example ---

cat("\n--- Example Resampling ---\n")

skewed_w <- c(0.01, 0.60, 0.02, 0.35, 0.02)

cat("Weights BEFORE resampling:", skewed_w, "\n")

rs <- resample_particles(initial_system$particles, skewed_w)

cat("Weights AFTER resampling: ", rs$weights, "\n")

```

#### Step 4: Mutating Particles

Resampling solves particle degeneracy but creates a subtler problem: the effective hypothesis space shrinks monotonically. Every particle that loses the resampling lottery is gone forever, and no new rules are ever introduced. A filter running long enough on any task will collapse to a handful of particles — possibly the right ones, but with no ability to recover if the task changes or if the true rule was simply unlucky at initialisation.

The mutation step is the direct fix: after resampling on each trial, each particle independently regenerates with probability $\mu$, replaced by a fresh rule drawn from the grammar. The parameter $\mu$ is the third free parameter of Model A (alongside $\varepsilon$ and the structural commitments). It plays the same conceptual role as process noise $q$ in the Kalman prototype and forgetting rate $\lambda$ in the decay GCM:

| Chapter | Model | Non-stationarity parameter | Mechanism |

|---|---|---|---|

| Ch. 13 (decay GCM) | Exemplar store | $\lambda$ (forgetting rate) | Exponentially downweight old exemplars |

| Ch. 14 (Kalman prototype) | Running mean + covariance | $q$ (process noise) | Re-inflate uncertainty each trial |

| Ch. 15 (rule filter) | Particle distribution over rules | $\mu$ (mutation rate) | Randomly regenerate a fraction of particles each trial |

Setting $\mu = 0$ recovers a pure selection filter. On a stationary task, small $\mu$ barely affects inference — the filter mostly selects and the occasional regenerated particle is usually wrong, so it is quickly downweighted. On a task where the correct rule changes, $\mu > 0$ is essential: without it, all the rules that would fit the new regime have been eliminated and the filter cannot recover.

```{r ch15_rule_mutation}

# At each trial, each particle independently has probability mutation_prob

# of being replaced by a freshly drawn rule from the grammar.

# mutation_prob = 0 is a no-op (pure selection filter).

mutate_particles <- function(particles, mutation_prob, n_features,

max_dims, feature_range) {

if (mutation_prob <= 0) return(particles)

n <- length(particles)

to_mutate <- which(runif(n) < mutation_prob)

for (i in to_mutate) {

particles[[i]] <- generate_random_rule(n_features, max_dims, feature_range)

}

particles

}

```

This is the **prior-sampling** mutation kernel: replacement rules are drawn uniformly from the grammar, with no relationship to the rule they replace. §"Targeted Mutation: Revision Rather Than Replacement" develops cognitively motivated alternatives where the learner *revises* rather than discards — keeping useful features and adjusting only what failed.

#### Step 5: Making Predictions

The particle filter makes a categorization decision for a new stimulus $\vec{x}$ via a weighted vote across all particles:

$$P(\text{Choose Cat 1} \mid \vec{x}) = \sum_{i=1}^{N} w_i \times P(\text{Cat 1} \mid \vec{x}, \text{rule}_i)$$

where $P(\text{Cat 1} \mid \vec{x}, \text{rule}_i) = 1 - \varepsilon$ if rule $i$ predicts category 1, and $\varepsilon$ otherwise.

### R Implementation of the Particle Filter Agent

```{r ch15_particle_filter_agent}

# Full particle filter agent — this is Model A.

# Returns a tibble of prob_cat1 and sim_response for each trial.

# Note: n_particles defaults to N_PARTICLES_FIXED (= 100), the structural

# structural commitment documented in §"Rule Grammar: Formal Specification".

rule_particle_filter <- function(n_particles = N_PARTICLES_FIXED,

error_prob,

mutation_prob = 0.0,

obs,

cat_one,

max_dims = MAX_RULE_DIMS,

resample_threshold_factor = RESAMPLE_THRESHOLD,

quiet = TRUE) {

n_trials <- nrow(obs)

n_features <- ncol(obs)

# feature_range is built generically across n_features so the same simulator

# handles 2D continuous (Kruschke) and 5D binary (Ch. 16 Alien Game) inputs.

fr <- lapply(seq_len(n_features), function(d) range(obs[, d]))

ps <- initialize_particles(n_particles, n_features, max_dims, fr)

particles <- ps$particles

weights <- ps$weights

response_probs <- numeric(n_trials)

for (i in seq_len(n_trials)) {

if (!quiet && i %% 20 == 0) cat("Trial", i, "\n")

stim <- as.numeric(obs[i, ])

# ── Prediction ────────────────────────────────────────────────────────────

preds <- sapply(particles, evaluate_rule, stimulus = stim)

p_cat1_rules <- ifelse(preds == 1, 1 - error_prob, error_prob)

p <- sum(weights * p_cat1_rules)

response_probs[i] <- pmax(1e-9, pmin(1 - 1e-9, p))

# ── Weight update ─────────────────────────────────────────────────────────

weights <- update_weights(particles, weights, stim, cat_one[i], error_prob)

# ── Resample if ESS is low ────────────────────────────────────────────────

ess <- 1 / sum(weights^2)

if (ess < n_particles * resample_threshold_factor) {

rs <- resample_particles(particles, weights)

particles <- rs$particles

weights <- rs$weights

}

# ── Mutation ──────────────────────────────────────────────────────────────

# After resampling, each particle independently regenerates with probability

# mutation_prob. mutation_prob = 0 is a no-op (pure selection filter).

particles <- mutate_particles(particles, mutation_prob, n_features,

max_dims, fr)

}

tibble(prob_cat1 = response_probs,

sim_response = rbinom(n_trials, 1, response_probs))

}

cat("Particle filter agent function defined.\n")

```

This implementation captures the core cognitive processes hypothesized by rule-based models: hypothesis generation, testing against evidence, belief updating, and adaptive focusing of the hypothesis set. **It is also the simulator that NPE will use in §"Neural Posterior Estimation for Model A."**

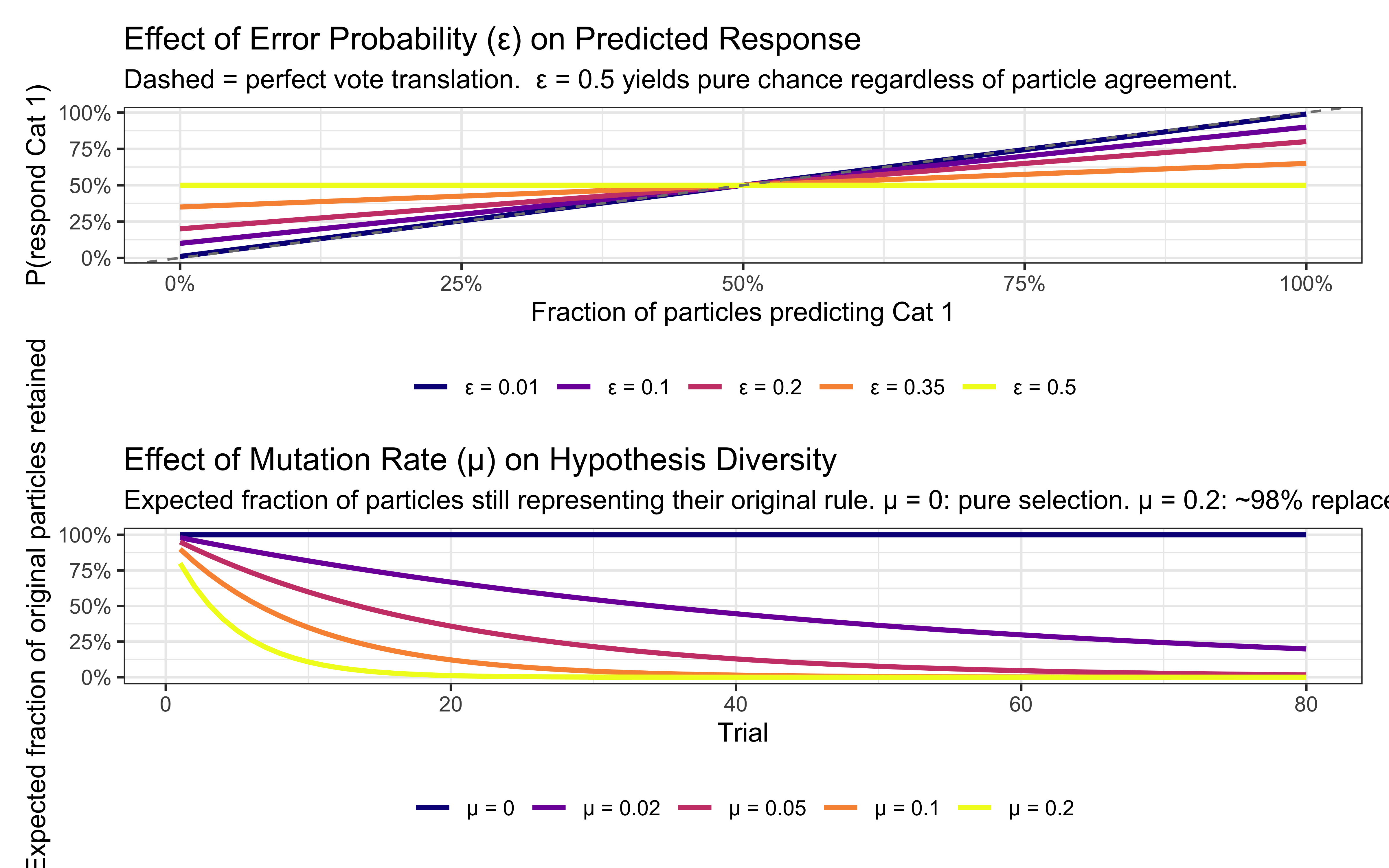

### Visualizing the Effect of ε and μ

Before running full simulations it is worth seeing what the two continuous parameters *do* mathematically — the same exercise performed for sensitivity $c$ in Chapter 11.

```{r ch15_param_effects}

# ── ε panel: how error probability softens the particle vote ─────────────────

eps_df <- expand_grid(

frac_correct = seq(0, 1, by = 0.01),

eps = c(0.01, 0.10, 0.20, 0.35, 0.50)

) |>

mutate(

p_cat1 = frac_correct * (1 - eps) + (1 - frac_correct) * eps,

eps_label = factor(eps, labels = paste0("ε = ", c(0.01, 0.10, 0.20, 0.35, 0.50)))

)

p_eps <- ggplot(eps_df, aes(x = frac_correct, y = p_cat1,

color = eps_label, group = eps_label)) +

geom_line(linewidth = 1) +

geom_abline(slope = 1, intercept = 0, linetype = "dashed", color = "grey50") +

scale_color_viridis_d(option = "plasma", name = NULL) +

scale_x_continuous(labels = scales::percent) +

scale_y_continuous(labels = scales::percent) +

labs(

title = "Effect of Error Probability (ε) on Predicted Response",

subtitle = "Dashed = perfect vote translation. ε = 0.5 yields pure chance regardless of particle agreement.",

x = "Fraction of particles predicting Cat 1",

y = "P(respond Cat 1)"

) +

theme_bw() + theme(legend.position = "bottom")

# ── μ panel: how mutation rate drives hypothesis turnover ────────────────────

mu_df <- expand_grid(

trial = 1:80,

mu = c(0, 0.02, 0.05, 0.10, 0.20)

) |>

mutate(

frac_surviving = (1 - mu)^trial,

mu_label = factor(mu, labels = paste0("μ = ", c(0, 0.02, 0.05, 0.10, 0.20)))

)

p_mu <- ggplot(mu_df, aes(x = trial, y = frac_surviving,

color = mu_label, group = mu_label)) +

geom_line(linewidth = 1) +

scale_color_viridis_d(option = "plasma", name = NULL) +

scale_y_continuous(labels = scales::percent, limits = c(0, 1)) +

labs(

title = "Effect of Mutation Rate (μ) on Hypothesis Diversity",

subtitle = "Expected fraction of particles still representing their original rule. μ = 0: pure selection. μ = 0.2: ~98% replaced by trial 20.",

x = "Trial",

y = "Expected fraction of original particles retained"

) +

theme_bw() + theme(legend.position = "bottom")

p_eps / p_mu

```

The two panels map directly onto the trilogy comparison: ε controls *response softness* (the analogue of sensitivity $c$ in the GCM and $\sigma$ in the prototype model), while μ controls *hypothesis turnover* (the analogue of forgetting rate $\lambda$ in the decay GCM and process noise $q$ in the Kalman prototype). The key difference from those continuous-state models is that μ acts on a discrete particle set — replacement is all-or-nothing rather than a smooth re-inflation of variance — which is why the fraction curve is exponential rather than linear.

::: {.callout-tip title="Generalization checklist for downstream chapters"}

The `rule_particle_filter` above is written so that the same function body runs unchanged on Chapter 16's 5-feature binary alien stimuli. A downstream chapter inherits the simulator by changing only the four items below — none of which touch the trial loop, the weight update, the resampling step, or the mutation step.

| Item | Kruschke (Ch. 15) | Alien Game (Ch. 16) |

|---|---|---|

| `n_features` | $2$ (height, position) | $5$ (binary feature vector) |

| `feature_range` | per-feature `range(obs[, d])` | `c(0, 1)` per feature |

| Threshold sampling | `runif(1, lo, hi)` | hard-coded at $0.5$ |

| `max_dims` default | $2$ | $3$ |

The grammar object (`dimensions`, `thresholds`, `operations`, `logic` / `outer_logic` / `inner_logic`, `prediction_if_true`) is identical in both chapters; the nested form $A\ L_{\text{outer}}\ (B\ L_{\text{inner}}\ C)$ is preserved so the alien-game grammar `A AND (B OR C)` is directly representable.

:::

---

## Simulating Categorization Behavior

Let's simulate how Model A performs on the Kruschke (1993) task. Each agent receives its **own independently randomized stimulus order**, consistent with the path-dependence design from Chapters 11 and 12.

```{r ch15_simulate_rule_responses}

# Wrapper: generates per-agent schedule then calls rule_particle_filter.

simulate_rule_agent <- function(agent_id, n_particles, max_dims, error_prob,

stimulus_info, n_blocks, subject_seed,

mutation_prob = 0.0) {

schedule <- make_subject_schedule(stimulus_info, n_blocks, seed = subject_seed)

obs <- as.matrix(schedule[, c("height", "position")])

cat_one <- schedule$category_feedback

result <- rule_particle_filter(

n_particles = n_particles,

error_prob = error_prob,

mutation_prob = mutation_prob,

obs = obs,

cat_one = cat_one,

max_dims = max_dims,

resample_threshold_factor = RESAMPLE_THRESHOLD,

quiet = TRUE

)

schedule |>

mutate(

agent_id = agent_id,

n_particles = n_particles,

max_dims = max_dims,

error_prob = error_prob,

mutation_prob = mutation_prob,

prob_cat1 = result$prob_cat1,

sim_response = result$sim_response,

correct = as.integer(category_feedback == sim_response)

) |>

group_by(agent_id, n_particles, max_dims, error_prob, mutation_prob) |>

mutate(performance = slider::slide_dbl(correct, mean, .before = 4, .complete = TRUE)) |>

ungroup()

}

param_df_rules <- expand_grid(

agent_id = 1:10,

n_particles = c(50, 200), # exploring sensitivity to N_particles

max_dims = c(1, 2), # exploring sensitivity to grammar depth

error_prob = c(0.05, 0.2)

) |>

mutate(subject_seed = agent_id) # unique, reproducible seed per agent

rule_sim_file <- here("simdata", "ch14_rule_simulated_responses.csv")

if (regenerate_simulations || !file.exists(rule_sim_file)) {

cat("Regenerating rule-based simulations...\n")

rule_responses <- future_pmap_dfr(

list(

agent_id = param_df_rules$agent_id,

n_particles = param_df_rules$n_particles,

max_dims = param_df_rules$max_dims,

error_prob = param_df_rules$error_prob,

subject_seed = param_df_rules$subject_seed

),

simulate_rule_agent,

stimulus_info = stimulus_info,

n_blocks = n_blocks,

.options = furrr_options(seed = TRUE)

)

write_csv(rule_responses, rule_sim_file)

cat("Simulations saved.\n")

} else {

rule_responses <- read_csv(rule_sim_file, show_col_types = FALSE)

cat("Simulations loaded.\n")

}

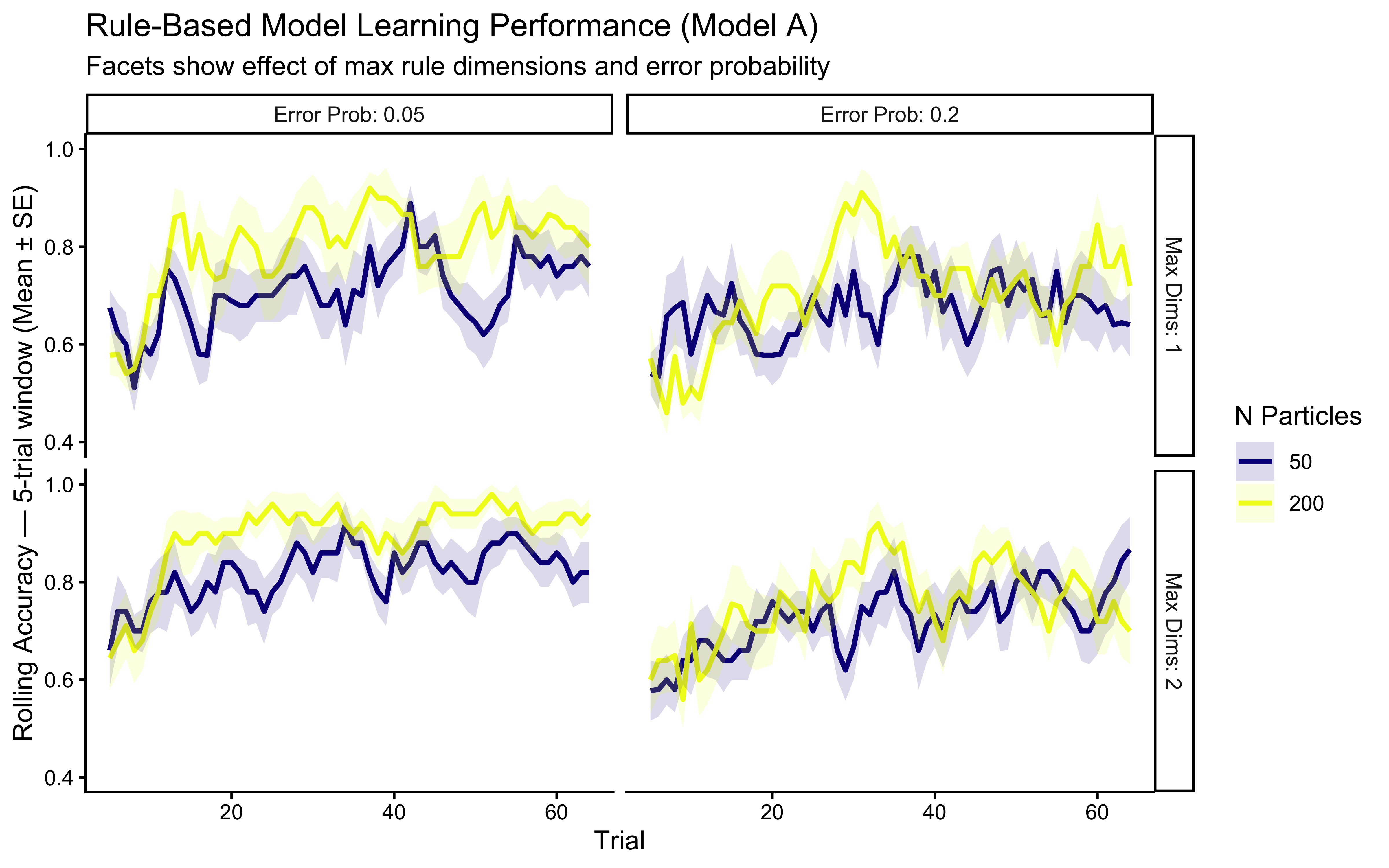

ggplot(rule_responses,

aes(x = trial_within_subject, y = performance,

color = factor(n_particles))) +

stat_summary(fun = mean, geom = "line", linewidth = 1) +

stat_summary(fun.data = mean_se, geom = "ribbon", alpha = 0.15,

aes(fill = factor(n_particles)), linetype = 0) +

facet_grid(

max_dims ~ error_prob,

labeller = labeller(

max_dims = function(x) paste("Max Dims:", x),

error_prob = function(x) paste("Error Prob:", x)

)

) +

scale_color_viridis_d(option = "plasma", name = "N Particles") +

scale_fill_viridis_d(option = "plasma", name = "N Particles") +

labs(

title = "Rule-Based Model Learning Performance (Model A)",

subtitle = "Facets show effect of max rule dimensions and error probability",

x = "Trial", y = "Rolling Accuracy — 5-trial window (Mean ± SE)"

) +

ylim(0.4, 1.0)

```

**Simulation Interpretation**: This visualization shows how Model A's performance is affected by:

* **Number of Particles**: More particles allow better exploration of the rule space, potentially leading to faster learning or higher final accuracy, though gains may diminish. **This parameter has cognitive content** (working-memory bound on hypothesis count) and is a candidate for NPE inference rather than fixing.

* **Maximum Rule Dimensions**: Allowing 2D rules (`max_dims = 2`) may be necessary if the true category boundary requires integrating information across dimensions. Setting `max_dims = 1` corresponds to a simpler grammar that cannot express conjunctive concepts.

* **Error Probability**: A higher `error_prob` makes the model more tolerant of imperfect rules, which can be helpful in noisy environments but may prevent convergence on a precise rule if one exists.

### Visualizing Rule Learning Over Time

A key feature of Model A is that the hypotheses are explicit. We can track which rules the particle filter believes are most likely at different points during learning.

```{r ch15_track_rule_learning}

track_rule_learning <- function(n_particles, error_prob, obs, cat_one,

mutation_prob = 0.0,

max_dims = MAX_RULE_DIMS,

top_k = 1,

resample_threshold_factor = RESAMPLE_THRESHOLD) {

n_trials <- nrow(obs)

n_features <- ncol(obs)

fr <- lapply(seq_len(n_features), function(d) range(obs[, d]))

ps <- initialize_particles(n_particles, n_features, max_dims, fr)

particles <- ps$particles

weights <- ps$weights

format_rule_info <- function(rule) {

list(

dimension1 = if (length(rule$dimensions) >= 1) rule$dimensions[1] else NA_integer_,

operation1 = if (length(rule$operations) >= 1) rule$operations[1] else NA_character_,

threshold1 = if (length(rule$thresholds) >= 1) rule$thresholds[1] else NA_real_,

dimension2 = if (length(rule$dimensions) >= 2) rule$dimensions[2] else NA_integer_,

operation2 = if (length(rule$operations) >= 2) rule$operations[2] else NA_character_,

threshold2 = if (length(rule$thresholds) >= 2) rule$thresholds[2] else NA_real_,

dimension3 = if (length(rule$dimensions) >= 3) rule$dimensions[3] else NA_integer_,

operation3 = if (length(rule$operations) >= 3) rule$operations[3] else NA_character_,

threshold3 = if (length(rule$thresholds) >= 3) rule$thresholds[3] else NA_real_,

logic = if (!is.null(rule$logic)) rule$logic

else if (!is.null(rule$outer_logic)) paste0(rule$outer_logic, "(", rule$inner_logic, ")")

else "N/A",

prediction = rule$prediction_if_true

)

}

history <- vector("list", n_trials)

for (i in seq_len(n_trials)) {

stim <- as.numeric(obs[i, ])

weights <- update_weights(particles, weights, stim, cat_one[i], error_prob)

top_idx <- order(weights, decreasing = TRUE)[seq_len(min(top_k, n_particles))]

history[[i]] <- map_dfr(top_idx, ~{

tibble(trial = i, rank = which(top_idx == .x),

weight = weights[.x], !!!format_rule_info(particles[[.x]]))

})

ess <- 1 / sum(weights^2)

if (ess < n_particles * resample_threshold_factor) {

rs <- resample_particles(particles, weights)

particles <- rs$particles

weights <- rs$weights

}

particles <- mutate_particles(particles, mutation_prob, n_features,

max_dims, fr)

}

bind_rows(history)

}

# Run tracking with a fixed seed-1 schedule for reproducibility

track_schedule <- make_subject_schedule(stimulus_info, n_blocks, seed = 1)

rule_tracking_data <- track_rule_learning(

n_particles = N_PARTICLES_FIXED,

error_prob = 0.1,

mutation_prob = plogis(LOGIT_MU_PRIOR_MEAN), # prior median ≈ 0.047

obs = as.matrix(track_schedule[, c("height", "position")]),

cat_one = track_schedule$category_feedback,

max_dims = MAX_RULE_DIMS,

top_k = 1

)

# Format rule text for plotting

rule_to_text <- function(rule_row) {

dim_names <- c("Height", "Position")

if (is.na(rule_row$dimension2)) {

sprintf("IF %s %s %.1f THEN Cat %d",

dim_names[rule_row$dimension1], rule_row$operation1,

rule_row$threshold1, rule_row$prediction)

} else {

sprintf("IF %s %s %.1f %s %s %s %.1f THEN Cat %d",

dim_names[rule_row$dimension1], rule_row$operation1, rule_row$threshold1,

rule_row$logic,

dim_names[rule_row$dimension2], rule_row$operation2, rule_row$threshold2,

rule_row$prediction)

}

}

rule_tracking_plot_data <- rule_tracking_data |>

filter(rank == 1) |>

rowwise() |>

mutate(rule_text = rule_to_text(cur_data())) |>

ungroup() |>

mutate(rule_block = consecutive_id(rule_text))

# Vertical lines at every hypothesis switch

rule_switches <- rule_tracking_plot_data |>

group_by(rule_block) |>

summarise(switch_trial = min(trial), .groups = "drop") |>

filter(switch_trial > 1)

# Label only the top-4 highest-weight peaks (most informative moments)

top_labels <- rule_tracking_plot_data |>

group_by(rule_block, rule_text) |>

slice_max(weight, n = 1, with_ties = FALSE) |>

ungroup() |>

slice_max(weight, n = 4) |>

mutate(rule_text = str_wrap(rule_text, width = 30))

# Run particle filter on the same schedule to get trial-by-trial responses

set.seed(1)

track_filter <- rule_particle_filter(

n_particles = N_PARTICLES_FIXED,

error_prob = 0.1,

mutation_prob = plogis(LOGIT_MU_PRIOR_MEAN),

obs = as.matrix(track_schedule[, c("height", "position")]),

cat_one = track_schedule$category_feedback,

max_dims = MAX_RULE_DIMS,

quiet = TRUE

)

track_perf <- tibble(

trial = seq_len(nrow(track_schedule)),

correct = as.integer(track_filter$sim_response == track_schedule$category_feedback)

) |>

mutate(rolling_acc = slider::slide_dbl(correct, mean, .before = 4, .complete = TRUE))

p_weights <- ggplot(rule_tracking_plot_data, aes(x = trial, y = weight)) +

geom_vline(

data = rule_switches, aes(xintercept = switch_trial - 0.5),

linetype = "dashed", color = "grey60", linewidth = 0.4

) +

geom_line(aes(group = 1), color = "#0072B2") +

geom_point(color = "#0072B2", size = 1.5) +

geom_text_repel(

data = top_labels,

aes(label = rule_text),

size = 2.8, lineheight = 0.9,

box.padding = 0.5, point.padding = 0.3,

max.overlaps = Inf, min.segment.length = 0

) +

scale_y_continuous(limits = c(0, NA), name = "Weight of Top Rule") +

scale_x_continuous(limits = c(0, total_trials + 5)) +

labs(

title = "Evolution of the Top Rule Hypothesis Over Time (Model A)",

subtitle = "Dashed lines mark hypothesis switches; labels show the four highest-weight rules.",

x = NULL

)

p_perf <- ggplot(track_perf, aes(x = trial, y = rolling_acc)) +

geom_vline(

data = rule_switches, aes(xintercept = switch_trial - 0.5),

linetype = "dashed", color = "grey60", linewidth = 0.4

) +

geom_hline(yintercept = 0.5, linetype = "dotted", color = "grey40") +

geom_line(color = "#0072B2") +

scale_x_continuous(limits = c(0, total_trials + 5)) +

scale_y_continuous(limits = c(0, 1), name = "Rolling Accuracy\n(5-trial window)") +

labs(x = "Trial")

p_weights / p_perf + plot_layout(heights = c(2, 1))

```

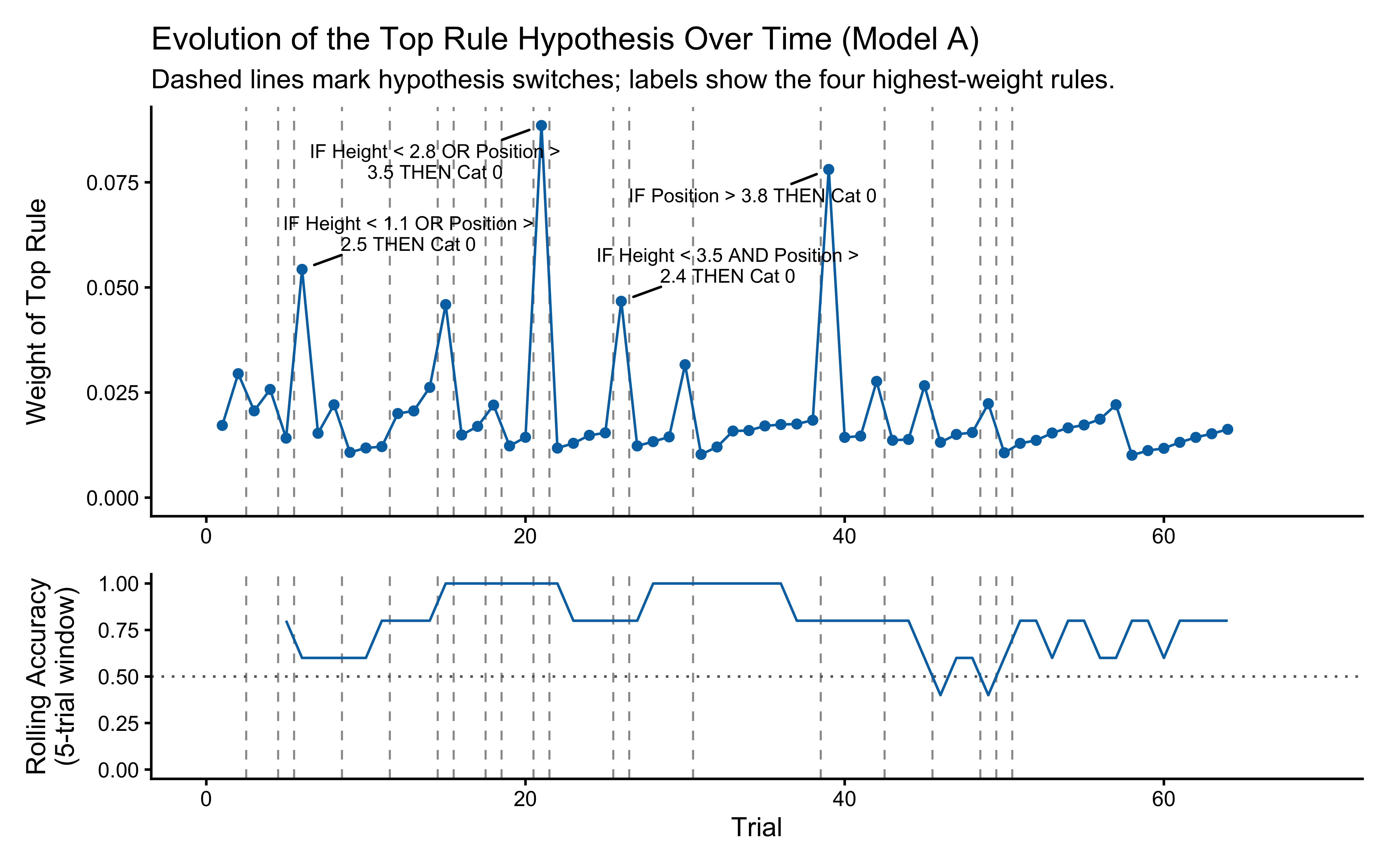

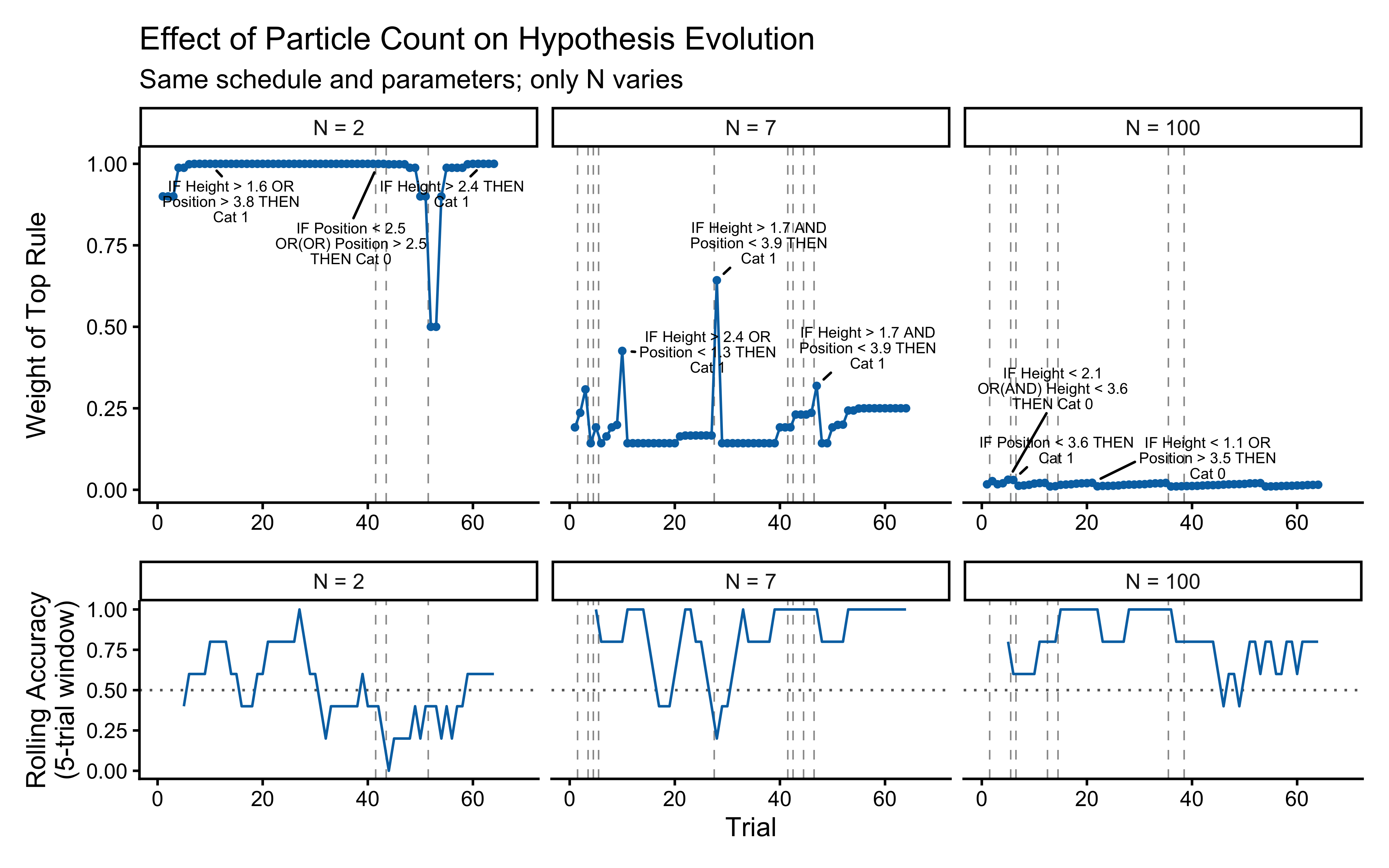

**Top panel — hypothesis weight.** The line tracks the probability assigned to whichever rule particle currently has the highest weight. Two features stand out. First, the weight never exceeds roughly 0.09: the filter never commits to a single rule, remaining diffuse across the full particle set throughout learning. Second, dashed vertical lines mark every moment the top-ranked rule changes identity — these switches are frequent, especially early on, as the filter resamples and mutates the particle population in response to prediction errors.

**Bottom panel — rolling accuracy.** The 5-trial rolling accuracy of the agent's actual responses (drawn from the full weighted ensemble) is shown on the same time axis, with a dotted reference line at chance (0.5). A key observation is that performance does *not* dip at every hypothesis switch. This is because the agent's response is determined by the collective vote of all particles, not by the top rule alone: many particles may agree on the correct prediction even while the highest-weight particle changes identity. Accuracy falls noticeably only when the ensemble as a whole is confused — not merely when one particle loses its rank. This dissociation between hypothesis instability and behavioural stability is a defining feature of particle-filter cognition.

How much of this stability is due to ensemble size? The comparison below repeats the same schedule with $N = 2$, $N = 7$, and the default $N = `r N_PARTICLES_FIXED`$ particles.

```{r ch15_particle_count_comparison}

np_compare <- c(2L, 7L, N_PARTICLES_FIXED)

# Collect tracking and performance data for each particle count

compare_tracking <- map_dfr(np_compare, function(np) {

set.seed(1)

track_rule_learning(

n_particles = np,

error_prob = 0.1,

mutation_prob = plogis(LOGIT_MU_PRIOR_MEAN),

obs = as.matrix(track_schedule[, c("height", "position")]),

cat_one = track_schedule$category_feedback,

max_dims = MAX_RULE_DIMS,

top_k = 1

) |> mutate(n_particles = np)

})

compare_plot_data <- compare_tracking |>

filter(rank == 1) |>

rowwise() |>

mutate(rule_text = rule_to_text(cur_data())) |>

ungroup() |>

group_by(n_particles) |>

mutate(rule_block = consecutive_id(rule_text)) |>

ungroup()